ℓ1-Regularized Gradient Temporal Difference Learning

The present work combines the GTD algorithms with $\ell_1$ regularization. We propose a family of $\ell_1$-regularized GTD algorithms, which employ the well-known soft-thresholding operator. We investigate convergence properties of the proposed algorithms and depict their performance with several numerical experiments.
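The soft-thresholding operator mentioned above has a standard closed form, $S_\lambda(x) = \operatorname{sign}(x)\max(|x| - \lambda, 0)$, applied elementwise. The sketch below shows that operator in NumPy. The helper `l1_proximal_step` is a hypothetical illustration of how a shrinkage step could follow any GTD-style update direction; it is an assumption for this example, not the specific algorithm family proposed in the paper.

```python
import numpy as np


def soft_threshold(x, lam):
    """Elementwise soft-thresholding: sign(x) * max(|x| - lam, 0)."""
    x = np.asarray(x, dtype=float)
    return np.sign(x) * np.maximum(np.abs(x) - lam, 0.0)


def l1_proximal_step(theta, direction, step_size, lam):
    """Hypothetical helper (not from the paper): take a step along
    `direction`, then shrink the result with threshold step_size * lam."""
    theta = np.asarray(theta, dtype=float)
    direction = np.asarray(direction, dtype=float)
    return soft_threshold(theta + step_size * direction, step_size * lam)


# Small entries are set to zero; larger ones move toward zero by lam.
theta = np.array([0.5, -0.05, 0.0, -1.2])
shrunk = soft_threshold(theta, 0.1)
```

Entries whose magnitude is below the threshold become exactly zero, which is why this operator is associated with sparse solutions under $\ell_1$ regularization.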

In this paper, we study temporal difference (TD) learning with linear value function approximation. It is well known that most TD learning algorithms are unstable with linear function approximation and off-policy learning.

In this paper, we propose regularized GTD (R-GTD), a new variant of GTD2 that introduces a regularized convex-concave saddle-point formulation with a unique solution, without imposing the nonsingularity assumption on the FIM.

In this paper, we introduce a new method called TD with Regularized Corrections (TDRC), which attempts to balance ease of use, soundness, and performance. It behaves as well as TD when TD performs well, but is sound in cases where TD diverges.

In this paper, we propose a regularized optimization objective by reformulating the mean square projected Bellman error (MSPBE) minimization.
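For reference, the unregularized GTD2 update that these variants build on can be sketched as follows. This is a minimal sketch of the commonly stated GTD2 rule for a single on-policy transition with linear features. The function name `gtd2_step`, its parameter names, and the toy transition are assumptions made for illustration, and none of the regularized variants described above is implemented here.

```python
import numpy as np


def gtd2_step(theta, w, phi, phi_next, reward, gamma, alpha, beta):
    """One GTD2 update for a single transition (illustrative sketch).

    theta: primary weights of the linear value estimate phi @ theta
    w:     auxiliary weights estimating the expected TD error per feature
    alpha, beta: step sizes for theta and w
    """
    # TD error under the current value estimate.
    delta = reward + gamma * (phi_next @ theta) - (phi @ theta)
    # Primary weights move along (phi - gamma * phi_next), scaled by phi @ w.
    theta_new = theta + alpha * (phi - gamma * phi_next) * (phi @ w)
    # Auxiliary weights track the TD error by a least-mean-squares step.
    w_new = w + beta * (delta - phi @ w) * phi
    return theta_new, w_new


# Toy transition with one-hot features (assumed values, for illustration).
phi = np.array([1.0, 0.0])
phi_next = np.array([0.0, 1.0])
theta = np.zeros(2)
w = np.zeros(2)

# Apply the same transition twice to see both weight vectors move.
theta1, w1 = gtd2_step(theta, w, phi, phi_next, 1.0, 0.9, 0.1, 0.5)
theta2, w2 = gtd2_step(theta1, w1, phi, phi_next, 1.0, 0.9, 0.1, 0.5)
```

On the first step only `w` changes, because `theta` is updated through `phi @ w`, which starts at zero. From the second step on, both weight vectors move.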

View a PDF of the paper titled Regularized Gradient Temporal Difference Learning, by Hyunjun Na and Donghwan Lee.

It is well known that most TD learning algorithms are unstable with linear function approximation and off-policy learning. The recent development of gradient TD (GTD) algorithms has addressed this problem successfully. However, the success of GTD algorithms requires a set of well-chosen features, which are not always available.

This formulation naturally yields a regularized GTD algorithm, referred to as R-GTD, which guarantees convergence to a unique solution even when the FIM is singular.

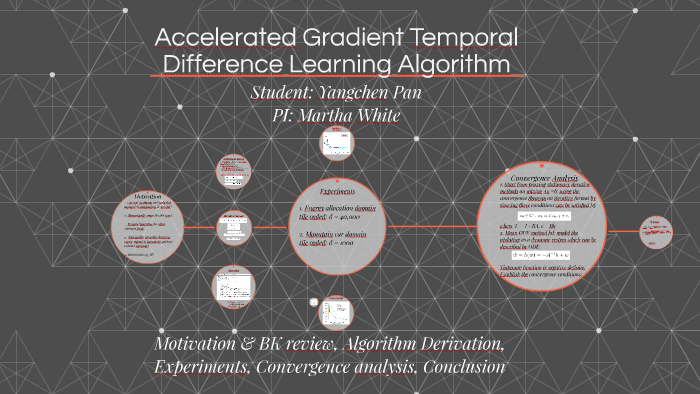


