BATCH: Machine Learning Inference Serving on Serverless Platforms

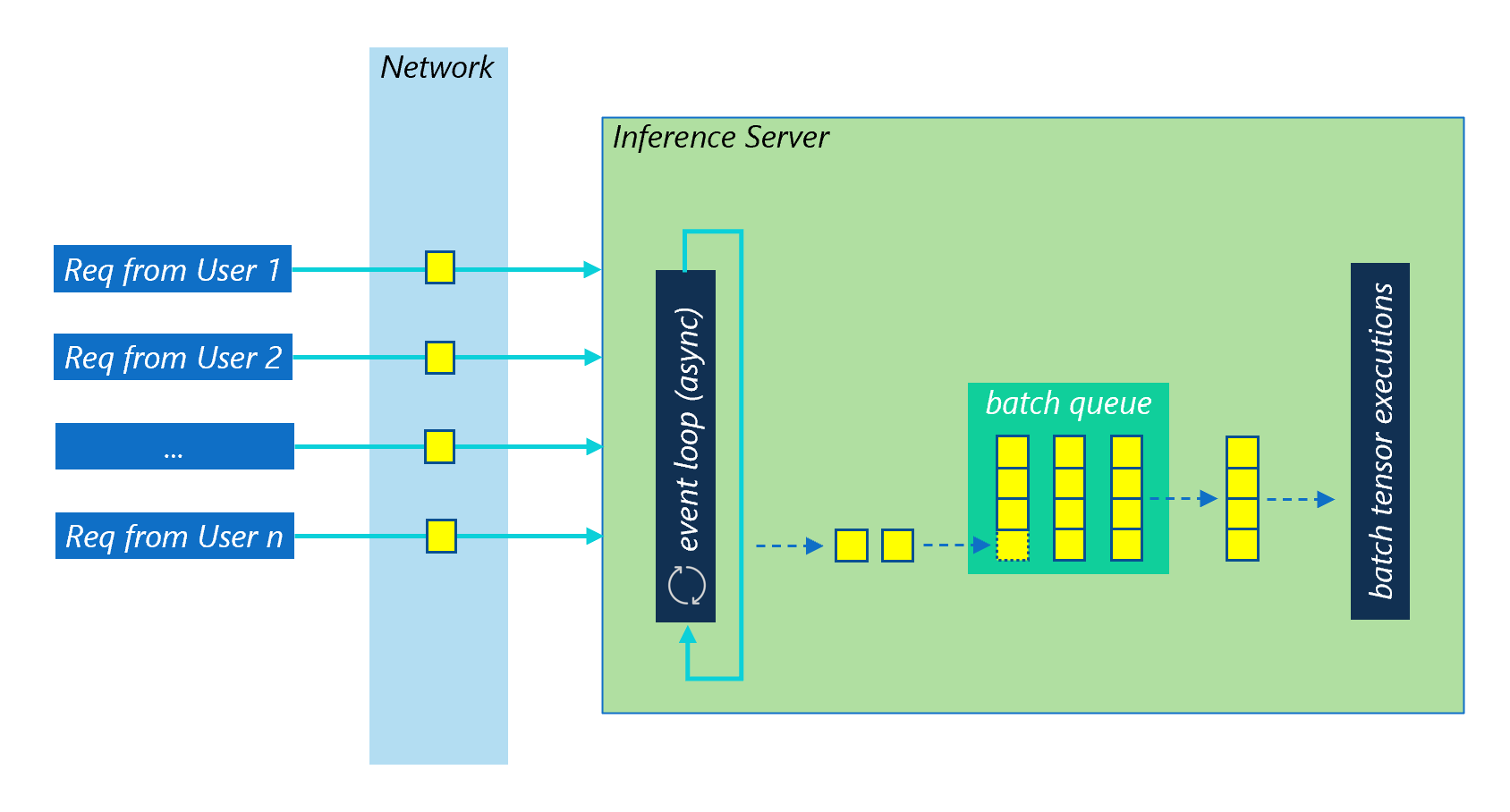

Our experiments show that without batching, machine learning serving cannot reap the benefits of serverless computing. In this paper, we present BATCH, a framework for supporting efficient machine learning serving on serverless platforms. Serverless computing is a new pay-per-use cloud service paradigm that automates resource scaling for stateless functions and can potentially facilitate bursty m.
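To make the pay-per-use argument concrete, here is a minimal cost sketch. It is not the paper's cost model: it assumes a linear service time (a fixed per-invocation overhead plus a per-item cost), and the function name `serverless_cost` and all prices and timings are hypothetical values chosen for illustration.

```python
def serverless_cost(num_requests, batch_size, base_ms, per_item_ms,
                    memory_gb, price_per_gb_s, price_per_invocation):
    """Estimate the bill for serving num_requests grouped into batches.

    Assumption (not from the paper): one invocation that handles n items
    runs for base_ms + per_item_ms * n milliseconds and is billed per
    GB-second plus a flat per-invocation fee.
    """
    full_batches, remainder = divmod(num_requests, batch_size)

    def duration_s(n):
        return (base_ms + per_item_ms * n) / 1000.0

    # Total billed time: all full batches plus one partial batch, if any.
    total_s = full_batches * duration_s(batch_size)
    invocations = full_batches
    if remainder:
        total_s += duration_s(remainder)
        invocations += 1

    compute_cost = total_s * memory_gb * price_per_gb_s
    return compute_cost + invocations * price_per_invocation


# Hypothetical numbers: 1000 requests, 100 ms overhead, 10 ms per item.
unbatched = serverless_cost(1000, 1, 100, 10, 1.0, 1e-5, 2e-7)
batched = serverless_cost(1000, 10, 100, 10, 1.0, 1e-5, 2e-7)
print(f"unbatched: {unbatched:.5f}  batched: {batched:.5f}")
```

Under these assumed numbers the fixed per-invocation overhead is paid 1000 times without batching and only 100 times with a batch size of 10, which is where the saving comes from. The trade-off is that requests wait longer before being served.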

On Nov 1, 2020, Ahsan Ali and others published BATCH: Machine Learning Inference Serving on Serverless Platforms with Adaptive Batching. BATCH uses an optimizer to provide inference tail latency guarantees and cost optimization and to enable adaptive batching support.
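The batching idea can be sketched as a buffer that releases a batch when it is full or when the oldest request has waited too long. This is an illustrative sketch, not BATCH's implementation: the class name `BatchBuffer` and its parameters `max_batch_size` and `timeout_s` are hypothetical, and the optimizer that would tune them is not shown. Timestamps are passed in explicitly so the behaviour is easy to follow.

```python
from dataclasses import dataclass, field
from typing import Any, List, Optional


@dataclass
class BatchBuffer:
    """Collects requests and releases them as a batch (hypothetical sketch)."""
    max_batch_size: int   # release as soon as this many requests are queued
    timeout_s: float      # or once the oldest request has waited this long
    _items: List[Any] = field(default_factory=list)
    _first_arrival: Optional[float] = None

    def add(self, item: Any, now: float) -> Optional[List[Any]]:
        """Queue a request at time `now`; return a batch if the buffer is full."""
        if not self._items:
            self._first_arrival = now
        self._items.append(item)
        if len(self._items) >= self.max_batch_size:
            return self._flush()
        return None

    def poll(self, now: float) -> Optional[List[Any]]:
        """Return the pending batch if the oldest request has timed out."""
        if self._items and now - self._first_arrival >= self.timeout_s:
            return self._flush()
        return None

    def _flush(self) -> List[Any]:
        batch, self._items = self._items, []
        self._first_arrival = None
        return batch


buf = BatchBuffer(max_batch_size=3, timeout_s=0.05)
buf.add("a", now=0.00)
buf.add("b", now=0.01)
full = buf.add("c", now=0.02)      # size limit reached, batch released
buf.add("d", now=0.03)
timed_out = buf.poll(now=0.09)     # "d" has waited 0.06 s, batch released
```

An adaptive scheme would adjust `max_batch_size` and `timeout_s` over time, for example lowering the timeout when a tail latency target is at risk. How BATCH's optimizer picks these values is not described in the text above, so that part is left out.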

“To the best of our knowledge this is the first analytical model that can capture accurately the shape of the latency distribution in the presence of bursty arrivals and deterministic service times.” A separate paper proposes Siren, an asynchronous distributed machine learning framework based on the emerging serverless architecture, with which stateless functions can be executed in the cloud without the complexity of building and maintaining virtual machine infrastructures.
