Part 2 Code Gen AI Transformers With PyTorch Master Input Embedding Positional Encoding

GitHub Hesamalian Transformers 2D Positional Embedding PyTorch

🎓 Master transformer basics with PyTorch! 🎓 Learn how to implement input embedding and positional encoding in transformers using PyTorch, even if you have no prior ...

To incorporate the positional encoding with the input tensor, we simply add the positional encoding to the input tensor element-wise, so the two must have matching (or broadcastable) shapes. This results in the final representation, which contains both the ...
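The addition step described above can be sketched in PyTorch as follows. This is a minimal illustration, not the code from any of the referenced repositories: the class name `InputEmbedding`, the parameters `vocab_size`, `d_model`, and `max_len`, and the choice of a fixed sinusoidal table are all assumptions made for the example, and `d_model` is assumed to be even.

```python
import math

import torch
import torch.nn as nn


class InputEmbedding(nn.Module):
    """Token embedding plus additive positional encoding (illustrative sketch)."""

    def __init__(self, vocab_size: int, d_model: int, max_len: int = 512):
        super().__init__()
        # Learned lookup table: one d_model-sized vector per token id.
        self.token_embedding = nn.Embedding(vocab_size, d_model)

        # Fixed sinusoidal table of shape (max_len, d_model); assumes even d_model.
        position = torch.arange(max_len, dtype=torch.float32).unsqueeze(1)
        div_term = torch.exp(
            torch.arange(0, d_model, 2, dtype=torch.float32)
            * (-math.log(10000.0) / d_model)
        )
        pe = torch.zeros(max_len, d_model)
        pe[:, 0::2] = torch.sin(position * div_term)  # even dimensions
        pe[:, 1::2] = torch.cos(position * div_term)  # odd dimensions
        # A buffer moves with the module (e.g. to GPU) but is not trained.
        self.register_buffer("pe", pe)

    def forward(self, token_ids: torch.Tensor) -> torch.Tensor:
        # token_ids: integer tensor of shape (batch, seq_len)
        seq_len = token_ids.size(1)
        x = self.token_embedding(token_ids)  # (batch, seq_len, d_model)
        # The (seq_len, d_model) slice broadcasts over the batch dimension.
        return x + self.pe[:seq_len]


torch.manual_seed(0)
layer = InputEmbedding(vocab_size=100, d_model=16)
token_ids = torch.randint(0, 100, (2, 10))
out = layer(token_ids)
print(out.shape)  # torch.Size([2, 10, 16])
```

The output keeps the shape of the embedded input, since the positional table is only added to it. Some implementations also scale the token embeddings before the addition; that step is left out here to match the plain addition described in the text.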

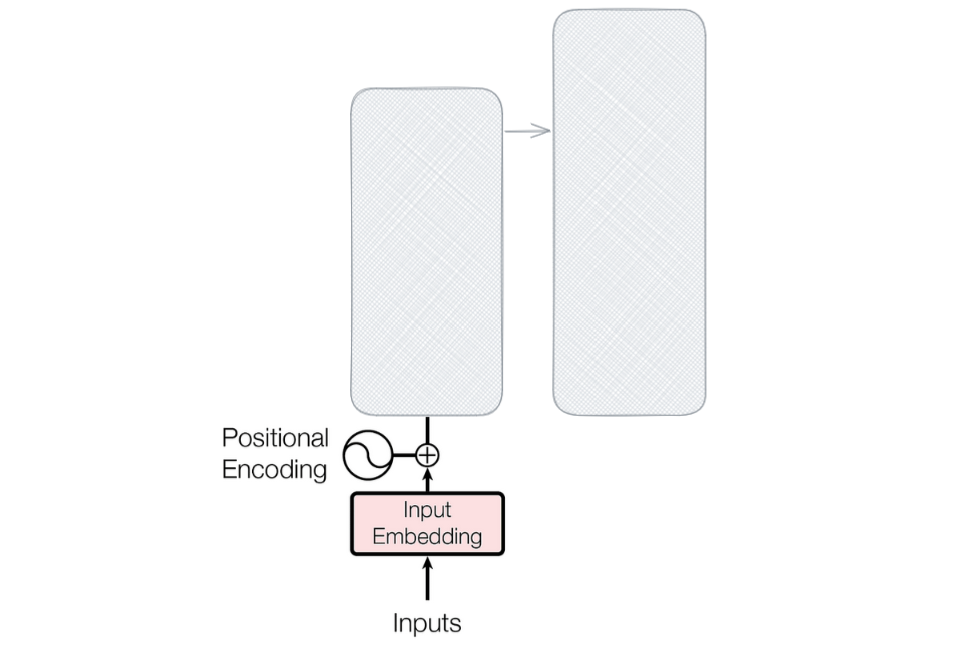

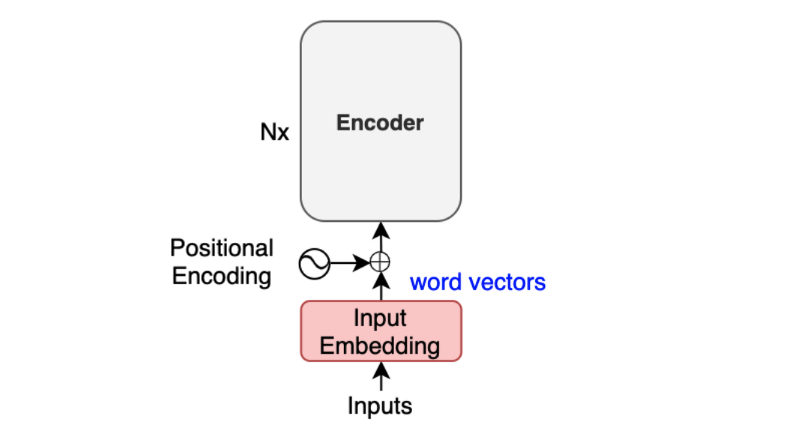

Transformer Positional Encoding Sequence Models DeepLearning.AI

These will guide you through the steps required to use the code contained within the modelling package to train a language model and then use it to perform semantic search and sentiment classification tasks.

In this blog, we have explored the fundamental concepts of transformer embeddings in PyTorch, including word embeddings and positional encoding.

We’ve come a long way in this article, starting from how raw text is tokenized, to how those tokens are mapped into vectors through embeddings, and finally how transformers preserve word order through positional encoding.

The dominant approach for preserving information about the order of tokens is to represent this to the model as an additional input associated with each token. These inputs are called positional encodings, and they can either be learned or fixed a priori.
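As a small worked example of an encoding that is fixed a priori, the sketch below computes the sinusoidal scheme from the original Transformer paper for a single position using only the standard library. The function name `sinusoidal_encoding` and its parameters are hypothetical names chosen for this illustration; a learned encoding would instead store one trainable vector per position, for example in a `torch.nn.Embedding` table.

```python
import math


def sinusoidal_encoding(position, d_model):
    """Return the fixed sinusoidal encoding vector for one position."""
    vector = []
    for i in range(d_model):
        # Dimensions 2k and 2k+1 share the frequency 1 / 10000^(2k / d_model).
        angle = position / (10000 ** ((i // 2) * 2 / d_model))
        # Even dimensions use sine, odd dimensions use cosine.
        vector.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return vector


pe0 = sinusoidal_encoding(0, 4)
print(pe0)  # [0.0, 1.0, 0.0, 1.0]
```

Because the values depend only on the position and the dimension index, nothing here is trained; the same table can be computed once and reused for every input sequence.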

In this comprehensive guide, we’ll walk through implementing every component of a transformer model using PyTorch, giving you a deep understanding of how these powerful models work under the hood. Build a transformer from scratch with a step-by-step guide and implementation in PyTorch.

This blog is about positional encodings, also known as positional embeddings, of the transformer neural network architecture.

This tutorial provides a step-by-step guide to implementing a basic transformer model using PyTorch. We'll cover the essential components, including self-attention, multi-head attention, positional encoding, and the encoder-decoder structure.

Positional Encoding In Transformers AI Tutorial Next Electronics

Master Positional Encoding Part II By Jonathan Kernes Towards Data

Transformer S Positional Encoding Naoki Shibuya
