Part 2: Code Gen AI Transformers with PyTorch: Master Input Embedding

🎓 Master transformer basics with PyTorch! 🎓 Learn how to implement input embedding and positional encoding in transformers using PyTorch, even if you have no prior knowledge. Today, I'll guide you through the essential building blocks of the transformer, focusing on input embedding and positional encoding. We'll explore both the underlying theory and its practical implementation.
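
A minimal PyTorch sketch of these two building blocks, assuming the conventions of the original Transformer paper: token embeddings are scaled by sqrt(d_model), and fixed sinusoidal encodings PE(pos, 2i) = sin(pos / 10000^(2i/d_model)) and PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model)) are added to them. The class names `InputEmbedding` and `PositionalEncoding` and the hyperparameters (`vocab_size`, `d_model`, `max_len`, dropout 0.1) are illustrative choices, not taken from the original tutorial, and `d_model` is assumed to be even.

```python
import math

import torch
import torch.nn as nn


class InputEmbedding(nn.Module):
    """Maps token ids to dense vectors, scaled by sqrt(d_model)."""

    def __init__(self, vocab_size: int, d_model: int) -> None:
        super().__init__()
        self.d_model = d_model
        self.embedding = nn.Embedding(vocab_size, d_model)

    def forward(self, token_ids: torch.Tensor) -> torch.Tensor:
        # token_ids: (batch, seq_len) -> (batch, seq_len, d_model)
        return self.embedding(token_ids) * math.sqrt(self.d_model)


class PositionalEncoding(nn.Module):
    """Adds fixed sinusoidal position information (assumes an even d_model)."""

    def __init__(self, d_model: int, max_len: int = 512, dropout: float = 0.1) -> None:
        super().__init__()
        self.dropout = nn.Dropout(dropout)
        position = torch.arange(max_len).unsqueeze(1)  # (max_len, 1)
        # 1 / 10000^(2i / d_model) for each even dimension index 2i
        div_term = torch.exp(torch.arange(0, d_model, 2) * (-math.log(10000.0) / d_model))
        pe = torch.zeros(max_len, d_model)
        pe[:, 0::2] = torch.sin(position * div_term)  # even dimensions
        pe[:, 1::2] = torch.cos(position * div_term)  # odd dimensions
        # A buffer is saved with the model and moved across devices, but never trained
        self.register_buffer("pe", pe.unsqueeze(0))  # (1, max_len, d_model)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        # x: (batch, seq_len, d_model); add the first seq_len position vectors
        x = x + self.pe[:, : x.size(1)]
        return self.dropout(x)


torch.manual_seed(0)
embed = InputEmbedding(vocab_size=1000, d_model=64)
pos = PositionalEncoding(d_model=64, max_len=128)
tokens = torch.randint(0, 1000, (2, 10))  # a batch of 2 sequences, 10 tokens each
out = pos(embed(tokens))
print(out.shape)  # torch.Size([2, 10, 64])
```

Registering `pe` as a buffer rather than a parameter keeps the encoding fixed during training while still letting it follow the model to a GPU via `.to(device)`.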

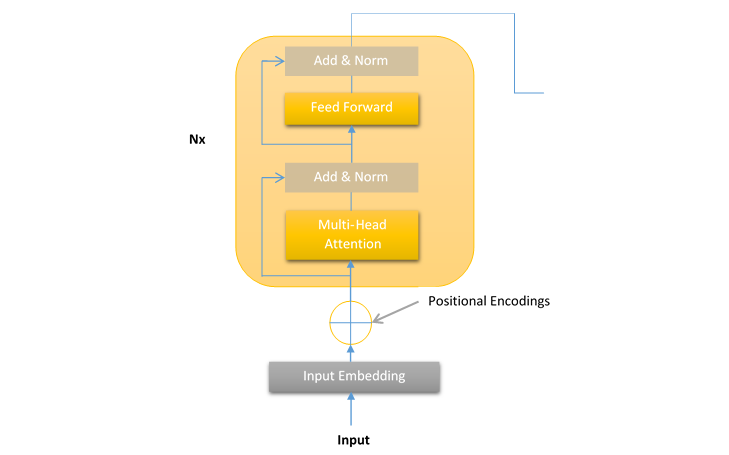

Architecture of Transformers in Generative AI

Original: Sasha Rush. The Transformer has been on a lot of people's minds over the last five years. This post presents an annotated version of the paper in the form of a line-by-line implementation. It reorders and deletes some sections from the original paper and adds comments throughout.

🎯 Effortlessly learn transformer basics! 🎯 In this video, you'll discover how to input text into transformers using input embedding and positional encoding, even if you have no prior knowledge.

Build a GPT-style, transformer-based language model using pure PyTorch, step by step and from first principles. This project breaks down the inner workings of modern LLMs and guides you through creating your own generative model.

In this blog, I'll walk you through everything I built, step by step, in PyTorch, to create an encoder-decoder (seq2seq) transformer model that translates sentences from English to Hindi.
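
To make the GPT-style idea concrete, here is a small decoder-only sketch. It assumes PyTorch's built-in `nn.TransformerEncoderLayer` blocks combined with a causal mask, so each position can attend only to itself and earlier tokens; this is a swapped-in shortcut, not the from-first-principles code the project describes. The class name `TinyGPT`, the learned positional embeddings, and all hyperparameters are hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class TinyGPT(nn.Module):
    """Hypothetical decoder-only (GPT-style) language model sketch."""

    def __init__(self, vocab_size: int, d_model: int = 64, n_heads: int = 4,
                 n_layers: int = 2, max_len: int = 128) -> None:
        super().__init__()
        self.tok_emb = nn.Embedding(vocab_size, d_model)
        self.pos_emb = nn.Embedding(max_len, d_model)  # learned positions (assumption)
        layer = nn.TransformerEncoderLayer(
            d_model, n_heads, dim_feedforward=4 * d_model, batch_first=True
        )
        # Plain self-attention blocks; causality comes from the mask in forward()
        self.blocks = nn.TransformerEncoder(layer, num_layers=n_layers)
        self.lm_head = nn.Linear(d_model, vocab_size)

    def forward(self, idx: torch.Tensor) -> torch.Tensor:
        # idx: (batch, seq_len) token ids -> logits: (batch, seq_len, vocab_size)
        seq_len = idx.size(1)
        positions = torch.arange(seq_len, device=idx.device)
        x = self.tok_emb(idx) + self.pos_emb(positions)
        # True above the diagonal means "may not attend": token t sees only 0..t
        causal_mask = torch.triu(
            torch.ones(seq_len, seq_len, dtype=torch.bool, device=idx.device),
            diagonal=1,
        )
        x = self.blocks(x, mask=causal_mask)
        return self.lm_head(x)


torch.manual_seed(0)
model = TinyGPT(vocab_size=100)
idx = torch.randint(0, 100, (2, 16))  # a random batch of token ids
logits = model(idx)
# Next-token objective: positions 0..T-2 predict tokens 1..T-1
loss = F.cross_entropy(logits[:, :-1].reshape(-1, 100), idx[:, 1:].reshape(-1))
loss.backward()
```

The training signal is next-token cross-entropy: the logits at position t are scored against the token at position t + 1, which is only meaningful because the mask hides every later token.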

Transformers

In this guide, we'll demystify the process of implementing transformers using PyTorch, taking you on a journey from theoretical foundations to practical implementation. In this post, I'm going through the architecture of the transformer model, and we are going to code it from scratch. The complete source code shown in this article can be found here:

Build a transformer from scratch with a step-by-step guide and implementation in PyTorch. As the original paper, "Attention Is All You Need" (Vaswani et al., 2017), puts it: "To the best of our knowledge, however, the Transformer is the first transduction model relying entirely on self-attention to compute representations of its input and output without using sequence-aligned RNNs or convolution."
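
The self-attention mechanism that quote refers to is scaled dot-product attention, softmax(Q K^T / sqrt(d_k)) V, where the queries, keys, and values are all projections of the same input sequence. Below is a single-head sketch; the names `scaled_dot_product_attention` and `SelfAttention` are illustrative, and a full Transformer layer would add multiple heads, an output projection, residual connections, and layer normalization.

```python
import math

import torch
import torch.nn as nn


def scaled_dot_product_attention(q, k, v, mask=None):
    """softmax(Q K^T / sqrt(d_k)) V.

    q, k, v: (..., seq_len, d_k); mask: bool, True where attention is NOT allowed.
    Returns the attended values and the attention weights.
    """
    d_k = q.size(-1)
    scores = q @ k.transpose(-2, -1) / math.sqrt(d_k)  # (..., seq_q, seq_k)
    if mask is not None:
        scores = scores.masked_fill(mask, float("-inf"))
    weights = torch.softmax(scores, dim=-1)  # each row sums to 1
    return weights @ v, weights


class SelfAttention(nn.Module):
    """Single-head self-attention: Q, K and V are projections of the same x."""

    def __init__(self, d_model: int) -> None:
        super().__init__()
        self.q_proj = nn.Linear(d_model, d_model)
        self.k_proj = nn.Linear(d_model, d_model)
        self.v_proj = nn.Linear(d_model, d_model)

    def forward(self, x, mask=None):
        return scaled_dot_product_attention(
            self.q_proj(x), self.k_proj(x), self.v_proj(x), mask
        )


torch.manual_seed(0)
x = torch.randn(2, 5, 16)  # (batch, seq_len, d_model)
attn = SelfAttention(d_model=16)
out, weights = attn(x)
print(out.shape, weights.shape)  # torch.Size([2, 5, 16]) torch.Size([2, 5, 5])
```

Dividing by sqrt(d_k) keeps the dot products from growing with the vector size, which would otherwise push the softmax into regions with very small gradients. PyTorch 2.0 and later also ship `torch.nn.functional.scaled_dot_product_attention`, a fused implementation of the same computation.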
