Parallelism Management

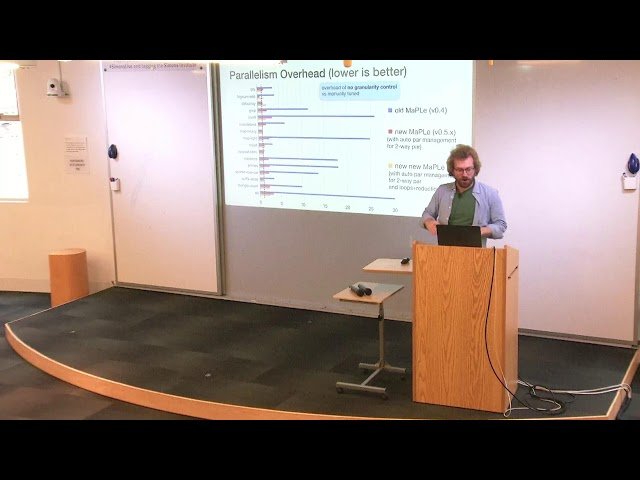

In this paper, we propose to manage the cost and benefit of parallelism automatically by a combination of static (language-based) and dynamic (run-time system) techniques.

Motivated by these challenges and the numerous advantages of high-level languages, we believe that it has become essential to manage parallelism automatically so as to minimize its cost and maximize its benefit. Parallelism control refers to the management of the number of concurrent thread blocks executing on hardware resources, such as the many cores on graphics processing units (GPUs), to maximize performance and resource utilization. This paper proposes techniques for such automatic management of parallelism by combining static (compilation) and run-time techniques. Parallelism is effective for CPU-bound operations, where the workload can be divided and run across multiple cores. We'll dive deeper into how it works and where it can improve performance.

We illustrate the task parallelism pattern using Monte Carlo simulation on multicore processors, an example that shows how task granularity is defined and how tasks interact. From simple high school physics (“resistance in series versus resistance in parallel”) all the way through to multicore CPUs and cloud computing, employing parallelism to go faster is a natural thought process; electrical engineers do this all the time. Parallelism is about doing lots of things at once (task execution). In this article, we'll break down the differences, explore how they work, and walk through real-world applications with examples and code.
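To make task granularity concrete, here is a minimal Python sketch of a Monte Carlo estimate of pi split into independent tasks. It is an illustration under stated assumptions, not the method of any paper quoted here: the names `split_work`, `count_hits`, and `estimate_pi`, the `task_size` parameter, and the per-task seeding scheme are all hypothetical. A small `task_size` creates many cheap tasks (more scheduling overhead), while a large one creates few tasks (less overhead, but possibly idle cores).

```python
import random
from concurrent.futures import ProcessPoolExecutor


def split_work(total_samples, task_size):
    """Split total_samples into task sizes of at most task_size each."""
    if total_samples <= 0 or task_size <= 0:
        raise ValueError("total_samples and task_size must be positive")
    full, rest = divmod(total_samples, task_size)
    return [task_size] * full + ([rest] if rest else [])


def count_hits(task):
    """One task: count random points in the unit square that land
    inside the quarter circle of radius 1."""
    seed, n = task
    rng = random.Random(seed)  # private generator, so tasks share no state
    hits = 0
    for _ in range(n):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:
            hits += 1
    return hits


def estimate_pi(total_samples, task_size, workers=1, base_seed=0):
    """Estimate pi from independent tasks (hypothetical helper).

    task_size is the granularity knob. Each task gets its own seed,
    so the result does not depend on the number of workers.
    """
    sizes = split_work(total_samples, task_size)
    tasks = [(base_seed + i, n) for i, n in enumerate(sizes)]
    if workers <= 1:
        # Sequential fallback: same tasks, no process pool overhead.
        hits = sum(map(count_hits, tasks))
    else:
        with ProcessPoolExecutor(max_workers=workers) as pool:
            hits = sum(pool.map(count_hits, tasks))
    return 4.0 * hits / total_samples
```

The only interaction between tasks is the final sum of hit counts, which is why the work divides cleanly across cores. When running with `workers` greater than 1 from a script, call `estimate_pi` under an `if __name__ == "__main__":` guard so that worker processes can import the module safely.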

