Parallel Computing Platforms Shared Memory Architectures Course Hero

In shared address space architectures, any processor can directly reference any memory location, and communication occurs implicitly as a result of loads and stores. The model is convenient: it offers location transparency, a programming model similar to time sharing on uniprocessors, and a wide range of scale, from a few to hundreds of processors. Such machines are popularly known as shared memory machines. This material comes from a lecture on parallel computing platforms and shared memory multiprocessors by Professor Zhu Zhichun.
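To make "communication occurs implicitly as a result of loads and stores" concrete, here is a minimal sketch using Python threads. The names `shared`, `ready`, `producer`, and `consumer` are illustrative assumptions, not part of the lecture. The data itself moves only through an ordinary write and an ordinary read of a shared location; the `Event` is there purely for ordering.

```python
import threading

# A location in the shared address space that every thread can reference.
shared = {"value": None}
# Used only to order the two threads; the data travels through `shared`.
ready = threading.Event()

def producer():
    shared["value"] = 42   # an ordinary store to shared memory
    ready.set()            # signal that the store has completed

def consumer(result):
    ready.wait()                     # block until the store has happened
    result.append(shared["value"])   # an ordinary load from shared memory

result = []
threads = [
    threading.Thread(target=consumer, args=(result,)),
    threading.Thread(target=producer),
]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(result)
```

No message is ever sent explicitly: the consumer simply reads the location the producer wrote, which is the contrast with message-passing models.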

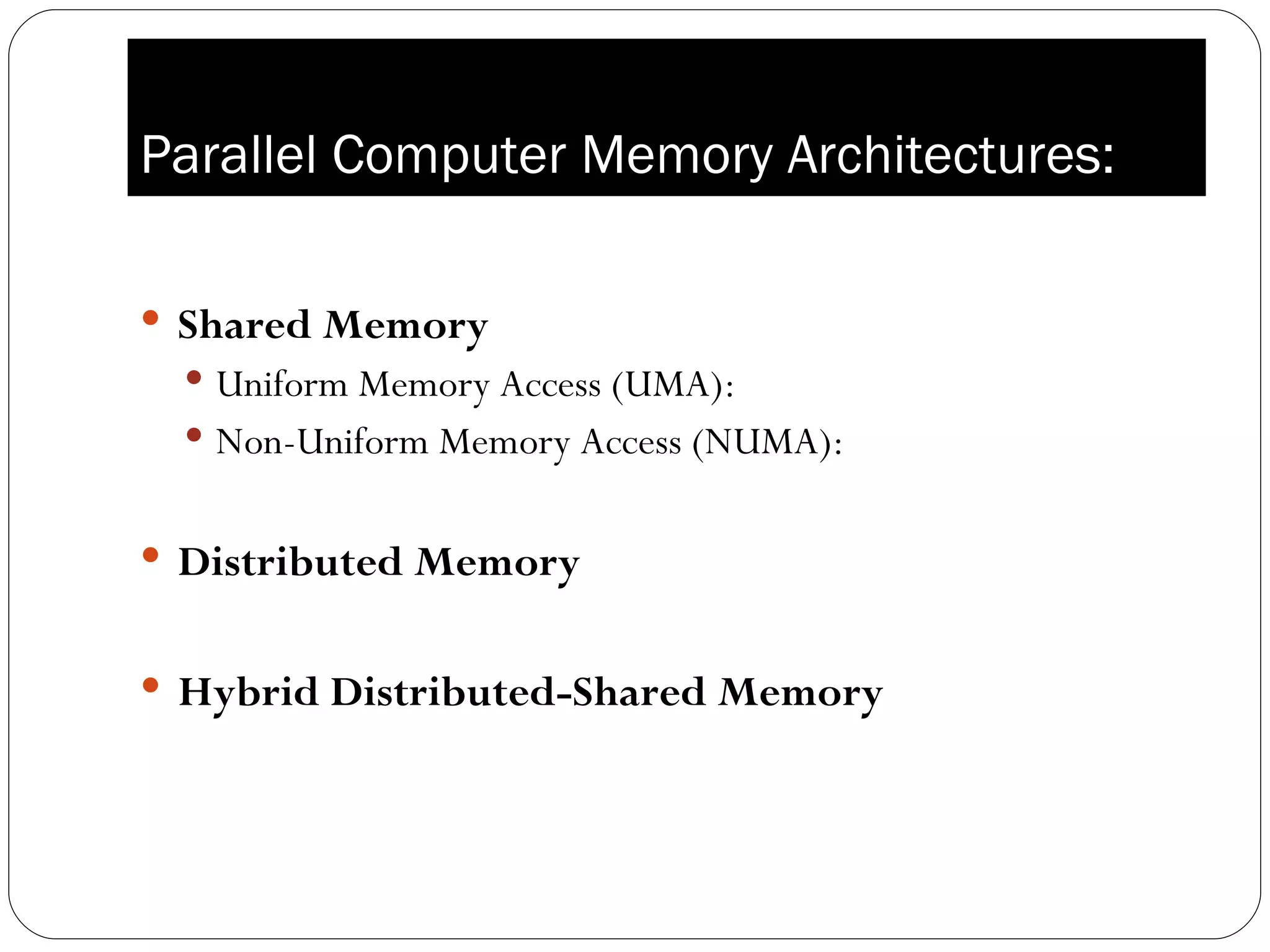

Data parallelism: many problems in scientific computing involve processing large quantities of data stored on a computer. If this manipulation can be performed in parallel, i.e., by multiple processors working on different parts of the data, we speak of data parallelism.

Physical organization of parallel platforms: the ideal parallel machine is called the parallel random access machine, or PRAM. A PRAM consists of p processors and a global memory of unbounded size that is uniformly accessible to all processors. The processors share a common clock but may execute different instructions in each cycle.

Overview of shared memory architecture: shared memory is a parallel computing architecture that allows multiple processors or threads to access a common memory space.

For students and professionals who are just learning about parallel computing, here is a short primer that covers its benefits and drawbacks and describes where it is used. In very simple terms, parallel computing is the use of several tools, in this case processors, to solve a problem.
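Data parallelism as defined above can be sketched as follows: the data is split into chunks, each worker processes its own chunk, and the partial results are combined. The helper names `split` and `parallel_sum_of_squares`, and the choice of four workers, are assumptions made for illustration. In standard CPython the global interpreter lock limits the real speedup for pure-Python arithmetic, so this shows the decomposition rather than the performance.

```python
from concurrent.futures import ThreadPoolExecutor

def split(data, parts):
    """Split data into `parts` contiguous chunks of nearly equal size."""
    size, extra = divmod(len(data), parts)
    chunks, start = [], 0
    for i in range(parts):
        # The first `extra` chunks each take one additional element.
        end = start + size + (1 if i < extra else 0)
        chunks.append(data[start:end])
        start = end
    return chunks

def sum_of_squares(chunk):
    """The same operation applied by every worker to its own part of the data."""
    return sum(x * x for x in chunk)

def parallel_sum_of_squares(data, workers=4):
    chunks = split(data, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(sum_of_squares, chunks))
    # Combine the per-chunk results into the final answer.
    return sum(partials)

data = list(range(1000))
total = parallel_sum_of_squares(data)
print(total == sum(x * x for x in data))
```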

Threads model: this programming model is a type of shared memory programming. In the threads model of parallel programming, a single "heavy weight" process can have multiple "light weight", concurrent execution paths.

Beyond Unix processes, there is the lightweight process, or thread, model: all memory is global and can be accessed by all the threads. The runtime stack is local to each thread, but it can be shared. The POSIX thread API (Pthreads) has a low-level, system programming flavor to it. In the directive model, concurrency is specified in terms of high-level compiler directives.

Scope of parallelism: conventional architectures coarsely comprise a processor, a memory system, and the datapath. Each of these components presents significant performance bottlenecks.

This shared memory can be centralized or distributed among the processors. The processors operate on a synchronized cycle of reading memory, writing memory, and computing.
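The threads model described above, with globally visible memory and per-thread local state, can be illustrated with a short sketch. It uses Python's `threading` module rather than Pthreads, and the names `counter`, `lock`, and `worker` are hypothetical. Each thread accumulates into a local variable and then publishes its result to the shared global under a lock.

```python
import threading

counter = 0               # global memory: visible to every thread
lock = threading.Lock()   # protects updates to the shared counter

def worker(n_increments):
    global counter
    local_count = 0       # local variable: private to this thread's call
    for _ in range(n_increments):
        local_count += 1
    # Publish the private result to shared memory, one thread at a time.
    with lock:
        counter += local_count

threads = [threading.Thread(target=worker, args=(1000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()
print(counter)
```

Accumulating locally and taking the lock once per thread keeps contention on the shared location low, which is a common design choice in shared memory programs.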

