Papers Explained 01 Transformer Most Competitive Neural Sequence

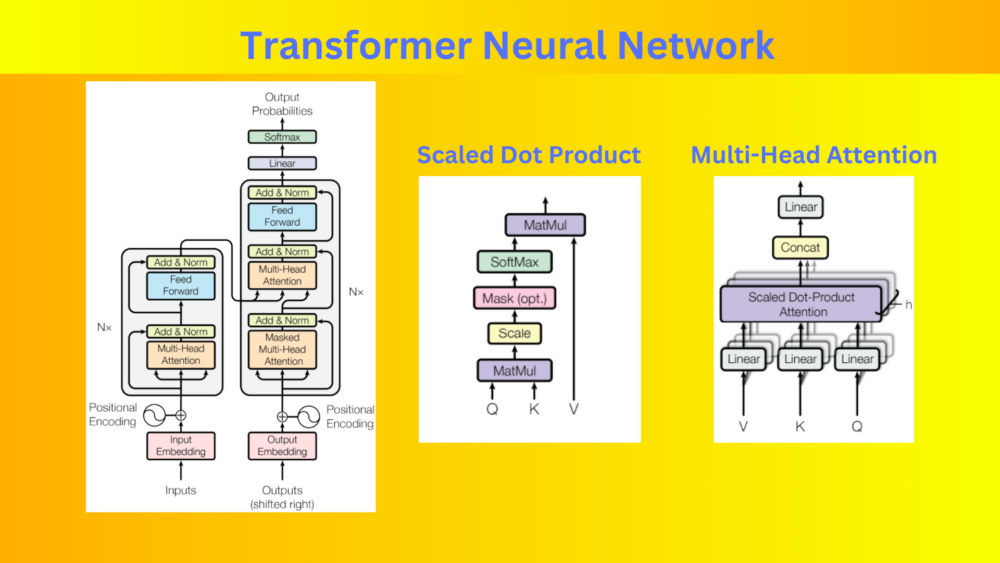

Most competitive neural sequence transduction models have an encoder-decoder structure (Vaswani et al., 2017). Here, the encoder maps an input sequence of symbol representations to a sequence of continuous representations. The encoder is composed of a stack of N = 6 identical layers, each with two sub-layers: a multi-head self-attention mechanism and a simple, position-wise fully connected feed-forward network.
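The encoder stack described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the paper's reference implementation: the toy sizes (`d_model = 16`, `d_ff = 64`, four heads), the random initialisation, and all function and parameter names are chosen here for illustration, while the residual connection followed by layer normalisation around each sub-layer follows the description in Vaswani et al. (2017). Embeddings, positional encodings, dropout, and the learnable gain and bias of layer normalisation are left out to keep the sketch short.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the max for numerical stability before exponentiating.
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)

def layer_norm(x, eps=1e-6):
    # Simplified layer normalisation (no learnable gain or bias).
    mean = x.mean(axis=-1, keepdims=True)
    std = x.std(axis=-1, keepdims=True)
    return (x - mean) / (std + eps)

def multi_head_self_attention(x, p, num_heads):
    seq_len, d_model = x.shape
    d_head = d_model // num_heads

    def split(t):
        # (seq_len, d_model) -> (num_heads, seq_len, d_head)
        return t.reshape(seq_len, num_heads, d_head).transpose(1, 0, 2)

    q, k, v = split(x @ p["wq"]), split(x @ p["wk"]), split(x @ p["wv"])
    # Scaled dot-product attention, computed independently per head.
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)
    heads = softmax(scores, axis=-1) @ v
    # Concatenate the heads and apply the output projection.
    concat = heads.transpose(1, 0, 2).reshape(seq_len, d_model)
    return concat @ p["wo"]

def feed_forward(x, p):
    # Position-wise network: the same two linear maps at every position,
    # with a ReLU in between.
    return np.maximum(0.0, x @ p["w1"] + p["b1"]) @ p["w2"] + p["b2"]

def encoder_layer(x, p, num_heads):
    # Sub-layer 1: multi-head self-attention, then residual + layer norm.
    x = layer_norm(x + multi_head_self_attention(x, p, num_heads))
    # Sub-layer 2: feed-forward network, then residual + layer norm.
    return layer_norm(x + feed_forward(x, p))

def init_layer(rng, d_model, d_ff, scale=0.02):
    # Hypothetical initialisation, chosen only so the sketch runs.
    def w(*shape):
        return rng.normal(0.0, scale, shape)
    return {
        "wq": w(d_model, d_model), "wk": w(d_model, d_model),
        "wv": w(d_model, d_model), "wo": w(d_model, d_model),
        "w1": w(d_model, d_ff), "b1": np.zeros(d_ff),
        "w2": w(d_ff, d_model), "b2": np.zeros(d_model),
    }

def encoder(x, layers, num_heads):
    # Apply the stack of identical layers in sequence.
    for p in layers:
        x = encoder_layer(x, p, num_heads)
    return x

# Toy usage: N = 6 layers as in the paper; the other sizes are illustrative.
rng = np.random.default_rng(0)
d_model, d_ff, num_heads, n_layers = 16, 64, 4, 6
layers = [init_layer(rng, d_model, d_ff) for _ in range(n_layers)]
x = rng.normal(size=(5, d_model))  # 5 positions, already embedded
out = encoder(x, layers, num_heads)
print(out.shape)
```

Each layer maps a `(seq_len, d_model)` array to an array of the same shape, which is what allows the six layers to be stacked without any reshaping between them.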

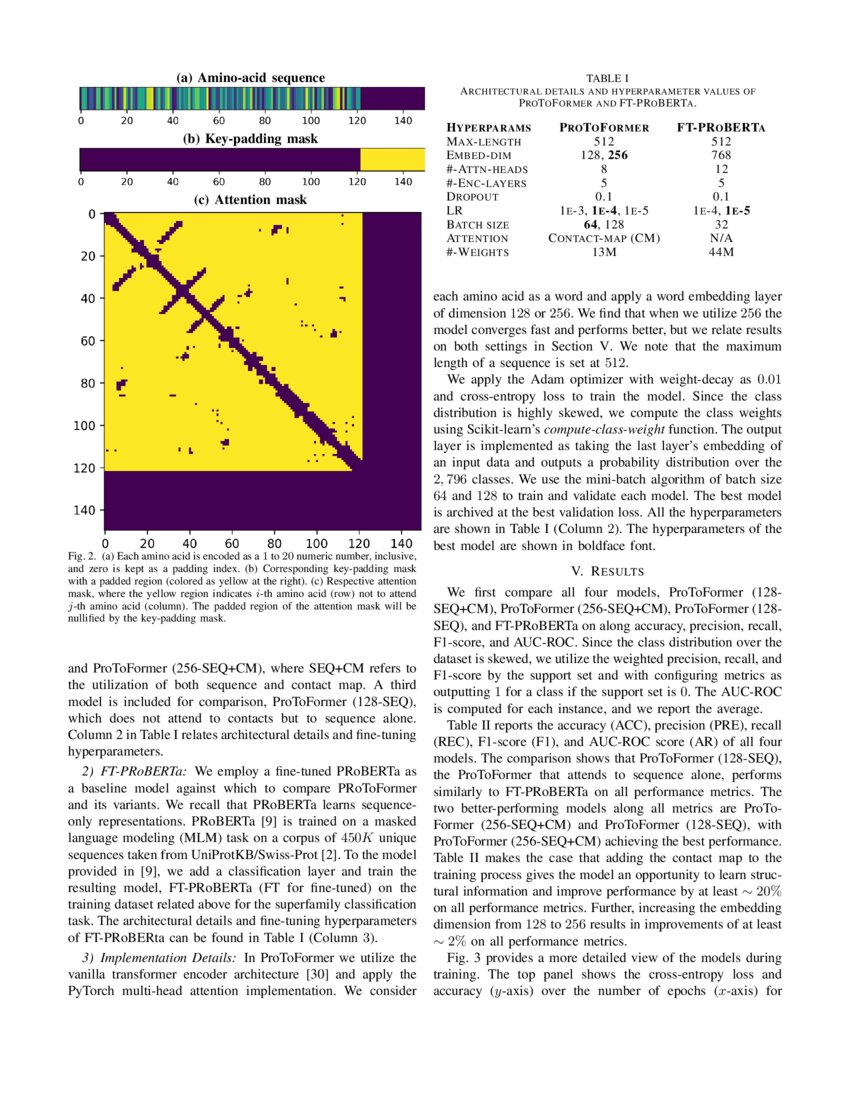

"Attention Is All You Need" [1] is a 2017 research paper in machine learning authored by eight scientists working at Google. The paper introduced a new deep learning architecture known as the transformer, based on the attention mechanism proposed in 2014 by Bahdanau et al. [2] The transformer approach it describes has become the main architecture of a wide variety of artificial intelligence systems.

In this article, we will explore how transformers work and how they have replaced RNNs as the go-to model for NLP tasks.

Hybrid models that combine transformer architectures with other neural network architectures have gained attention in recent research. These hybrid models aim to leverage the strengths of different architectures to overcome specific limitations or enhance performance in several NLP tasks.

In this survey, we provide a comprehensive review of various X-formers. We first briefly introduce the vanilla transformer and then propose a new taxonomy of X-formers. Next, we introduce the various X-formers from three perspectives: architectural modification, pre-training, and applications.

In this note we aim for a mathematically precise, intuitive, and clean description of the transformer architecture. We will not discuss training, as this is rather standard.

In this comprehensive guide, we will dissect the transformer model to its core, thoroughly exploring every key component, from its attention mechanism to its encoder-decoder structure.

The paper notes at the beginning of Section 3, on model architecture, that "most competitive neural sequence transduction models have an encoder-decoder structure."

Among these, we focus on two essential questions in this work: first, the approximation rate of the transformer on sequence modeling; second, the comparative advantages and disadvantages of the transformer relative to recurrent neural networks (RNNs) on different temporal structures.
