P1 High Performance CNN Accelerators Based on Hardware

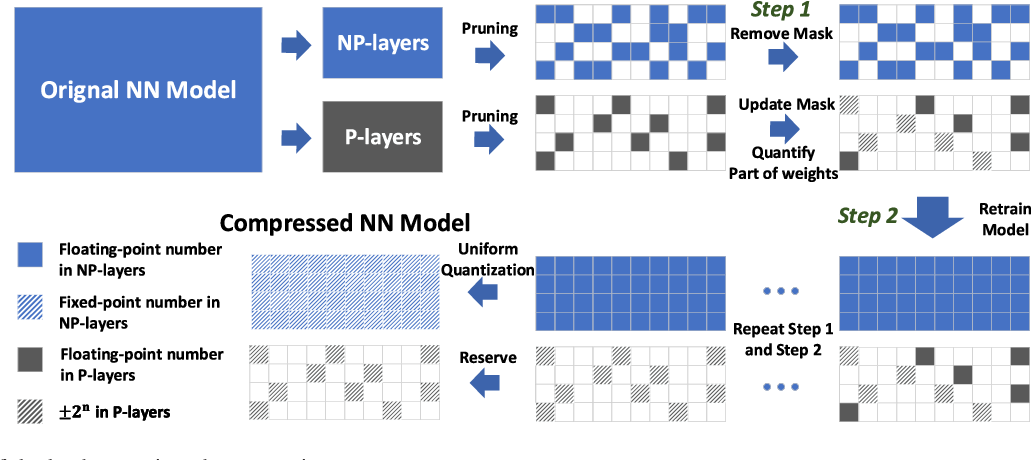

The paper "High Performance CNN Accelerators Based on Hardware and Algorithm Co-Optimization" builds on its proposed compression strategy and hardware architecture: a hardware-algorithm co-optimization (HACO) approach is proposed for implementing an NP-P hybrid compressed CNN model on FPGAs.

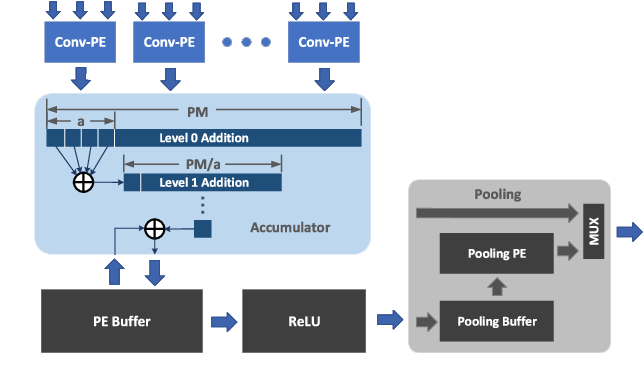

Figure 1, from High Performance CNN Accelerators Based on Hardware and Algorithm Co-Optimization.

Under the NP-P hybrid model, the performance of each module is analyzed. In this section, computation time and hardware utilization are quantitatively evaluated for the optimization algorithm proposed in Section VI.

A related review covers strategies applied in hardware-based CNN inference engines for image classification. It considers three acceleration strategies: (1) arithmetic logic unit (ALU) based, (2) data-flow based, and (3) sparsity based.

Field-programmable gate arrays (FPGAs) have emerged as a leading solution for CNN acceleration, offering reconfigurability, parallelism, and energy efficiency. One review provides a comprehensive survey of FPGA-based hardware accelerators specifically designed for CNNs. Another systematically reviews the latest research advances in FPGA-based CNN hardware accelerators, focusing on hardware-friendly algorithmic optimizations and efficient hardware architecture designs.
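Data-flow-based strategies mostly aim to cut memory traffic by reusing data that is already on chip. The sketch below is only a back-of-the-envelope illustration of that idea, not a model of any accelerator named here: it compares a worst case, where every multiply-accumulate (MAC) fetches its weight and activation from off-chip memory, with an idealized case where each value is fetched once. The function names and the layer dimensions are hypothetical.

```python
def conv_macs(out_h, out_w, k, c_in, c_out):
    """Multiply-accumulate operations in one convolution layer."""
    return out_h * out_w * k * k * c_in * c_out


def offchip_reads_no_reuse(out_h, out_w, k, c_in, c_out):
    """Worst case: every MAC reads one weight and one activation off chip."""
    return 2 * conv_macs(out_h, out_w, k, c_in, c_out)


def offchip_reads_full_reuse(in_h, in_w, k, c_in, c_out):
    """Idealized case: each weight and each input activation is read once."""
    weights = k * k * c_in * c_out
    activations = in_h * in_w * c_in
    return weights + activations


# Hypothetical layer: 3x3 kernel, 64 -> 64 channels, 56x56 input and output.
worst = offchip_reads_no_reuse(56, 56, 3, 64, 64)
ideal = offchip_reads_full_reuse(56, 56, 3, 64, 64)
print(f"no reuse:   {worst} reads")
print(f"full reuse: {ideal} reads")
print(f"ratio:      {worst / ideal:.1f}x")
```

Real designs fall between the two bounds, since on-chip buffers are finite and output writes also cost bandwidth; the gap between the bounds is what a dataflow tries to close.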

In the last decade, several frameworks have been proposed to optimize the overall performance of CNNs on hardware platforms. One paper presents a survey of the generated hardware architectures.

Pruning a convolutional neural network (CNN) model can reduce its size with little loss of accuracy; however, the pruned model runs slower when implemented on a parallel architecture. To address this, the paper introduces a hardware-centric CNN compression method.

The dataflow architecture of the accelerator is an adaptation of the BSM (broadcast, stay, migration) dataflow introduced by Jihyuck Jo et al., which is energy efficient because it reduces the number of redundant accesses to off-chip memory.
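The excerpt does not spell out why a pruned model suffers on parallel hardware. One commonly cited reason is load imbalance: unstructured pruning leaves a different number of nonzero weights in each filter, so processing elements (PEs) working in lockstep wait for the busiest one. The following is a minimal sketch under assumptions that are not from the source: one PE per filter, zero weights skipped for free, a hypothetical 16-filter layer with synthetic Gaussian weights, and global magnitude pruning as a stand-in for whatever pruning method is actually used.

```python
import random


def magnitude_prune(filters, sparsity):
    """Zero the smallest-magnitude weights across all filters (unstructured)."""
    flat = sorted(abs(w) for f in filters for w in f)
    cutoff = flat[int(len(flat) * sparsity)]
    return [[w if abs(w) >= cutoff else 0.0 for w in f] for f in filters]


def lockstep_cycles(filters):
    """Cycles when each PE owns one filter and all PEs wait for the slowest."""
    return max(sum(1 for w in f if w != 0.0) for f in filters)


rng = random.Random(0)
# Hypothetical layer: 16 filters of 3*3*32 = 288 weights each. Each filter
# gets its own weight scale, so pruning thins some filters more than others.
filters = [[rng.gauss(0.0, 0.2 + 0.1 * i) for _ in range(288)]
           for i in range(16)]
pruned = magnitude_prune(filters, sparsity=0.75)

total = sum(len(f) for f in filters)
nonzero = sum(1 for f in pruned for w in f if w != 0.0)
compression = total / nonzero
speedup = len(filters[0]) / lockstep_cycles(pruned)

print(f"compression ratio: {compression:.2f}x")
print(f"lockstep speedup:  {speedup:.2f}x")
```

The speedup stays below the compression ratio because the densest filter sets the pace. This sketch also ignores the cost of decoding sparse indices, which would reduce the speedup further and is one way a pruned layer can end up slower than its dense counterpart.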

