Overfitting With Classification Trees

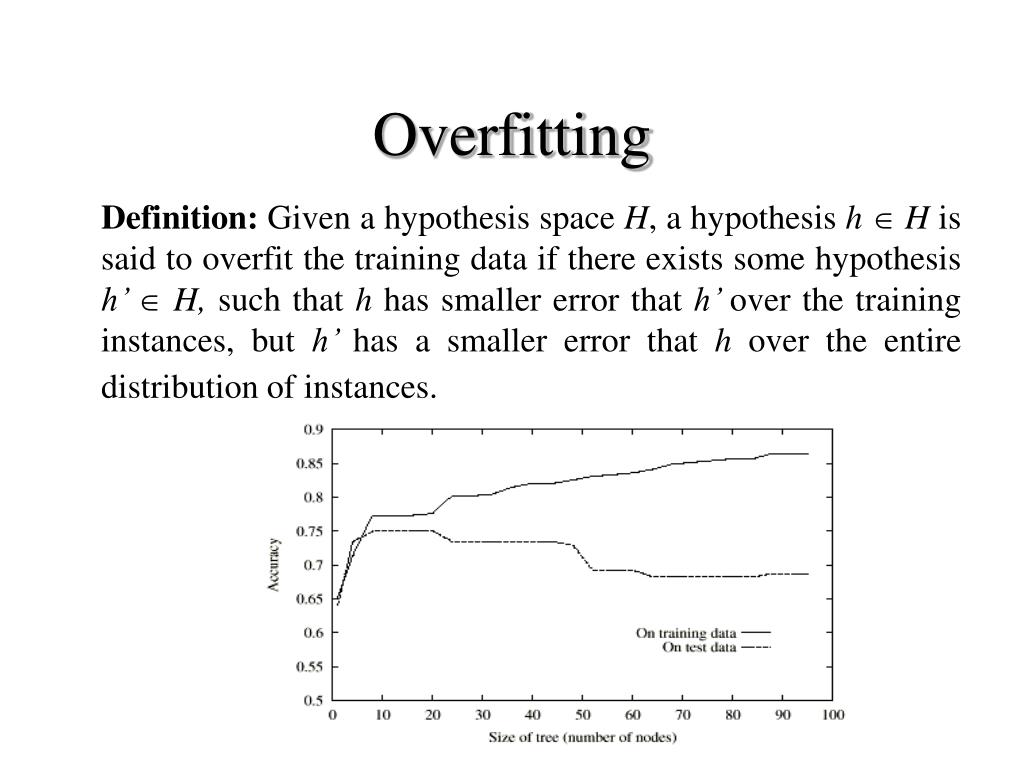

Decision tree models can learn very detailed decision rules, but this often causes them to fit the training data too closely. As a result, their accuracy drops significantly when they are evaluated on new, unseen samples. What it means for a decision tree to overfit its training data can be explained visually, and pruning techniques can be used to fix it.

Overfitting is a common problem that needs to be handled while training a decision tree model. It occurs when the model fits too closely to the training data. Three common issues can lead trained decision tree models to behave poorly: underfitting, overfitting, and irrelevant features. This article covers the causes and consequences of overfitting in decision trees and the strategies for reducing it.

Decision tree learners can create over-complex trees that do not generalize well from the training data. This is called overfitting: the tree becomes too complex and memorizes the training data instead of learning general patterns, which leads to poor performance on new, unseen data. Mechanisms such as pruning, setting the minimum number of samples required at a leaf node, or setting the maximum depth of the tree are necessary to avoid this problem.

Unlike random forests, gradient boosted trees can overfit. Therefore, as with neural networks, you can apply regularization and early stopping using a validation dataset.

What you can do now: identify when a decision tree is overfitting, and prevent overfitting with early stopping. The early stopping rules are to limit tree depth, to not consider splits that do not reduce classification error, and to not split intermediate nodes that contain only a few points.
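The three early stopping rules above can be illustrated with a small sketch. This is not code from any of the sources quoted here: it is a minimal, pure-Python classification tree on a single numeric feature, and the names `build`, `max_depth`, `min_node_size`, and `require_gain`, along with the toy dataset and its four deliberately flipped labels, are assumptions made for illustration.

```python
from collections import Counter


def majority(labels):
    # Most common label; ties resolve to the smaller label for determinism.
    counts = Counter(labels)
    return min(counts, key=lambda c: (-counts[c], c))


def errors(labels):
    # Number of points a majority-vote leaf would misclassify.
    return len(labels) - max(Counter(labels).values())


def build(points, depth=0, max_depth=None, min_node_size=2, require_gain=False):
    """Grow a tree from (x, y) pairs: one numeric feature x, class label y."""
    labels = [y for _, y in points]
    leaf = {"predict": majority(labels)}
    node_err = errors(labels)
    if node_err == 0:                                   # node is already pure
        return leaf
    if max_depth is not None and depth >= max_depth:    # rule 1: limit depth
        return leaf
    if len(points) < min_node_size:                     # rule 3: too few points
        return leaf
    pts = sorted(points)
    best = None                                         # (error, threshold, index)
    for i in range(1, len(pts)):
        if pts[i - 1][0] == pts[i][0]:
            continue                                    # cannot split equal values
        err = errors([y for _, y in pts[:i]]) + errors([y for _, y in pts[i:]])
        if best is None or err < best[0]:
            best = (err, (pts[i - 1][0] + pts[i][0]) / 2, i)
    if best is None:
        return leaf
    if require_gain and best[0] >= node_err:            # rule 2: no error reduction
        return leaf
    _, threshold, i = best
    options = dict(max_depth=max_depth, min_node_size=min_node_size,
                   require_gain=require_gain)
    return {"threshold": threshold,
            "left": build(pts[:i], depth + 1, **options),
            "right": build(pts[i:], depth + 1, **options)}


def predict(tree, x):
    while "threshold" in tree:
        tree = tree["left"] if x <= tree["threshold"] else tree["right"]
    return tree["predict"]


def accuracy(tree, points):
    return sum(predict(tree, x) == y for x, y in points) / len(points)


def true_label(x):
    return int(x >= 75)


# Toy data (hypothetical): four training labels are flipped to act as noise.
NOISY = {15, 45, 105, 135}
train = [(x, 1 - true_label(x) if x in NOISY else true_label(x))
         for x in range(0, 150, 3)]
test = [(x, true_label(x)) for x in range(1, 150, 3)]

deep = build(train)                       # no early stopping
shallow = build(train, max_depth=1)       # rule 1
gated = build(train, require_gain=True)   # rule 2
coarse = build(train, min_node_size=10)   # rule 3

for name, tree in [("deep", deep), ("shallow", shallow),
                   ("gated", gated), ("coarse", coarse)]:
    print(name, accuracy(tree, train), accuracy(tree, test))
```

On this toy data the unrestricted tree reaches a training accuracy of 1.0 by isolating each flipped label in its own leaf, and its test accuracy falls to 0.92. The depth-limited tree shows the reverse pattern: 0.92 on the training set and 1.0 on the test set. That gap between training and test accuracy is the usual sign of overfitting. Real datasets are rarely this clean, so the stopping parameters are normally tuned against a validation set rather than fixed in advance.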
