Optimizing LLM Training on GPUs

The Cost of Hardware in LLM Training: What GPUs Do

We propose Zorse, the first system to unify all these capabilities while incorporating a planner that automatically configures training strategies for a given workload. Our evaluation shows that Zorse significantly outperforms state-of-the-art systems in heterogeneous training scenarios. There are three primary approaches to larger-scale training: using multiple GPUs without offloading, using fewer GPUs with offloading, or using a single GPU with offloading (if feasible).
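The choice among the three approaches above can be sketched as a simple capacity check. This is a minimal illustration, not how Zorse or any other planner works: the `Cluster` type, the `choose_strategy` function, and the rule "prefer no offloading whenever the training state fits in aggregated GPU memory" are all assumptions made for this example, and activation memory and throughput are ignored.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    """Hypothetical description of the available hardware (sizes in GB)."""
    num_gpus: int
    gpu_mem_gb: float
    host_mem_gb: float  # CPU DRAM (plus any disk tier) usable for offloading


def choose_strategy(model_state_gb: float, cluster: Cluster) -> str:
    """Pick a training approach, preferring configurations without offloading."""
    total_gpu_mem = cluster.num_gpus * cluster.gpu_mem_gb

    # The whole training state fits in GPU memory: no offloading needed.
    if model_state_gb <= total_gpu_mem:
        if cluster.num_gpus > 1:
            return "multiple GPUs without offloading"
        return "single GPU without offloading"

    # Otherwise the overflow has to fit in host memory (or a disk tier).
    if model_state_gb <= total_gpu_mem + cluster.host_mem_gb:
        if cluster.num_gpus == 1:
            return "single GPU with offloading"
        return "fewer GPUs with offloading"

    return "does not fit"


if __name__ == "__main__":
    print(choose_strategy(126, Cluster(num_gpus=4, gpu_mem_gb=80, host_mem_gb=512)))
    print(choose_strategy(126, Cluster(num_gpus=1, gpu_mem_gb=80, host_mem_gb=512)))
```

A real planner would also weigh interconnect bandwidth and the slowdown that offloading introduces; this sketch only answers whether a configuration can hold the training state at all.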

CPU-GPU I/O-Aware LLM Inference Reduces Latency in GPUs by Optimizing

Abstract: Training LLMs larger than the aggregated memory of multiple GPUs is increasingly necessary because LLM sizes are growing faster than GPU memory. To this end, state-of-the-art systems propose multi-tier host memory or disk offloading techniques.

Xinnor has published new benchmarking research addressing one of the most pressing infrastructure challenges in AI today: GPU memory is critically insufficient for training large language models at scale, and simply adding DRAM is not an economically viable answer. Training modern transformer-based models demands 18 bytes or more per parameter once optimizer states, gradients, and mixed...

A step-by-step guide for machine learning engineers on how to reduce training times for LLMs using effective strategies and tools. Explore the techniques we used to improve the training performance on MI300X and MI325X in our MLPerf Training 5.0 submission.
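To make the "18 bytes or more per parameter" figure concrete, the arithmetic can be sketched as follows. The function names and the 80 GB GPU size are assumptions chosen for illustration; the estimate treats 18 bytes as a lower bound and ignores activation memory, so real requirements are higher.

```python
import math

BYTES_PER_PARAM = 18  # lower bound quoted in the text; real runs may need more


def training_state_gb(num_params: float, bytes_per_param: float = BYTES_PER_PARAM) -> float:
    """Memory for weights, gradients and optimizer state, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def min_gpus(num_params: float, gpu_mem_gb: float,
             bytes_per_param: float = BYTES_PER_PARAM) -> int:
    """Fewest GPUs whose combined memory holds the training state (no offloading)."""
    return math.ceil(training_state_gb(num_params, bytes_per_param) / gpu_mem_gb)


if __name__ == "__main__":
    for name, params in [("7B", 7e9), ("70B", 70e9)]:
        gb = training_state_gb(params)
        print(f"{name}: {gb:.0f} GB of training state, "
              f"at least {min_gpus(params, gpu_mem_gb=80)} x 80 GB GPUs")
```

Under these assumptions a 7-billion-parameter model already needs about 126 GB of training state, more than a single 80 GB device holds, which is why offloading or multi-GPU sharding becomes necessary.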

Optimizing LLM Training: Memory Management and Multi-GPU Techniques

This comprehensive guide covers essential multi-GPU training techniques, including data parallelism, model parallelism, and key optimizations like ZeRO and FlashAttention. This guide compiles insights from over 4,000 scaling experiments using up to 512 GPUs, focusing on optimizing throughput, GPU utilization, and training efficiency. In this guide, we’ll break down the key factors that influence GPU selection, memory allocation, and the complex relationship between them, empowering you to make smarter decisions when scaling LLMs for efficient, high-quality training.
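As a rough illustration of why ZeRO matters for memory allocation, the sketch below estimates per-GPU memory for model state at each ZeRO stage. It assumes the accounting commonly used for mixed-precision Adam (2 bytes of parameters, 2 bytes of gradients, and 12 bytes of optimizer state per parameter); those byte counts and the function name `zero_per_gpu_gb` are assumptions for this example and are not taken from the guides summarized here. Activations and communication buffers are ignored.

```python
def zero_per_gpu_gb(num_params: float, num_gpus: int, stage: int,
                    param_bytes: float = 2, grad_bytes: float = 2,
                    optim_bytes: float = 12) -> float:
    """Approximate model-state memory per GPU (GB) under data parallelism with ZeRO.

    stage 0: plain data parallelism, everything replicated on every GPU
    stage 1: optimizer state partitioned across GPUs
    stage 2: optimizer state and gradients partitioned
    stage 3: optimizer state, gradients and parameters partitioned
    """
    if stage not in (0, 1, 2, 3):
        raise ValueError("stage must be 0, 1, 2 or 3")

    params = param_bytes * num_params
    grads = grad_bytes * num_params
    optim = optim_bytes * num_params

    if stage >= 1:
        optim /= num_gpus
    if stage >= 2:
        grads /= num_gpus
    if stage >= 3:
        params /= num_gpus

    return (params + grads + optim) / 1e9


if __name__ == "__main__":
    for stage in range(4):
        gb = zero_per_gpu_gb(7.5e9, num_gpus=64, stage=stage)
        print(f"stage {stage}: {gb:.1f} GB per GPU")
```

The trade-off is that higher stages shrink the per-GPU footprint at the cost of extra communication, so the best stage depends on interconnect speed as well as memory.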

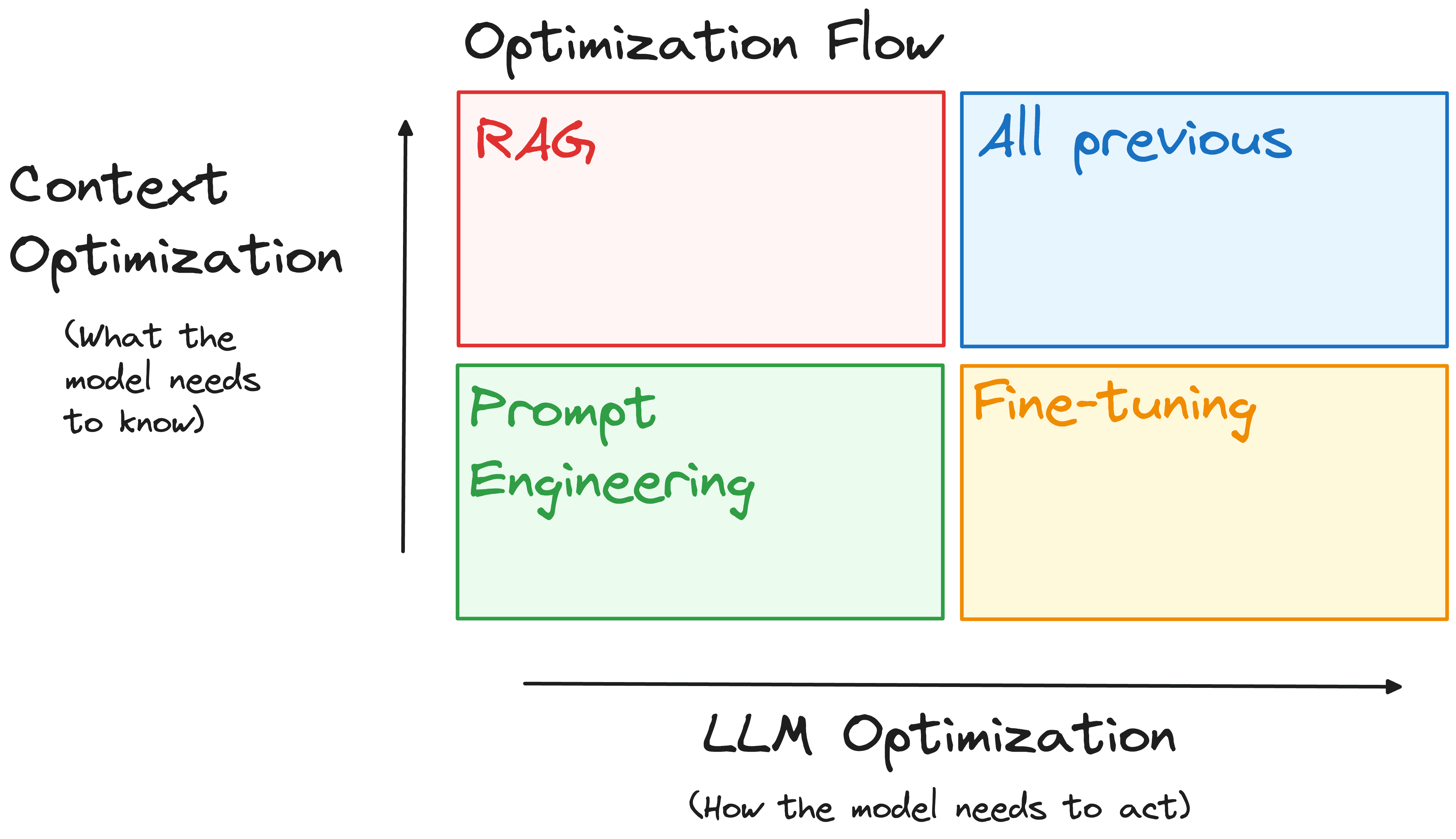

