Optimizing Large Language Models Through Fine Tuning Moschip

Fine-tuning on domain-specific data transforms large language models (LLMs) from general-purpose AI into specialized solutions, improving accuracy, reducing errors, and aligning outputs with real-world needs. It also improves the relevance and robustness of outputs across a wide range of industry applications.

This study provides valuable guidance for more efficient fine-tuning strategies and opens avenues for further research into optimizing LLM fine-tuning in resource-constrained environments. We share effective strategies for fine-tuning very large models with tens or hundreds of billions of parameters and for enabling large context lengths during fine-tuning. This paper explores the fine-tuning of large language models (LLMs) to achieve optimal efficiency and high performance in various natural language processing tasks.
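To give a rough sense of why models with tens or hundreds of billions of parameters are hard to fine-tune in resource-constrained environments, the sketch below estimates the memory needed for weights, gradients, and optimizer state. It assumes mixed-precision training with the Adam optimizer at about 16 bytes per parameter, which is a commonly used rule of thumb and not a figure from the text above. Activation memory is ignored, and the function name `full_finetune_memory_gb` is hypothetical.

```python
# Back-of-the-envelope memory estimate for full-parameter fine-tuning.
# Assumption (not from the source text): mixed-precision training with Adam.
FP16_WEIGHT_BYTES = 2      # half-precision copy of each weight
FP16_GRAD_BYTES = 2        # half-precision gradient for each weight
FP32_OPTIMIZER_BYTES = 12  # fp32 master weight (4) + Adam momentum (4) + variance (4)

BYTES_PER_PARAM = FP16_WEIGHT_BYTES + FP16_GRAD_BYTES + FP32_OPTIMIZER_BYTES  # 16


def full_finetune_memory_gb(n_params, bytes_per_param=BYTES_PER_PARAM):
    """Return the estimated model-state memory in gigabytes (10**9 bytes).

    Activations, temporary buffers, and framework overhead are excluded,
    so real usage will be higher than this estimate.
    """
    return n_params * bytes_per_param / 1e9


if __name__ == "__main__":
    for billions in (7, 70):
        n_params = billions * 1_000_000_000
        print(f"{billions}B parameters: ~{full_finetune_memory_gb(n_params):.0f} GB")
```

Under these assumptions, the model state alone for a 70-billion-parameter model is on the order of a terabyte, which is why such models are usually sharded across many devices or adapted with parameter-efficient methods.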

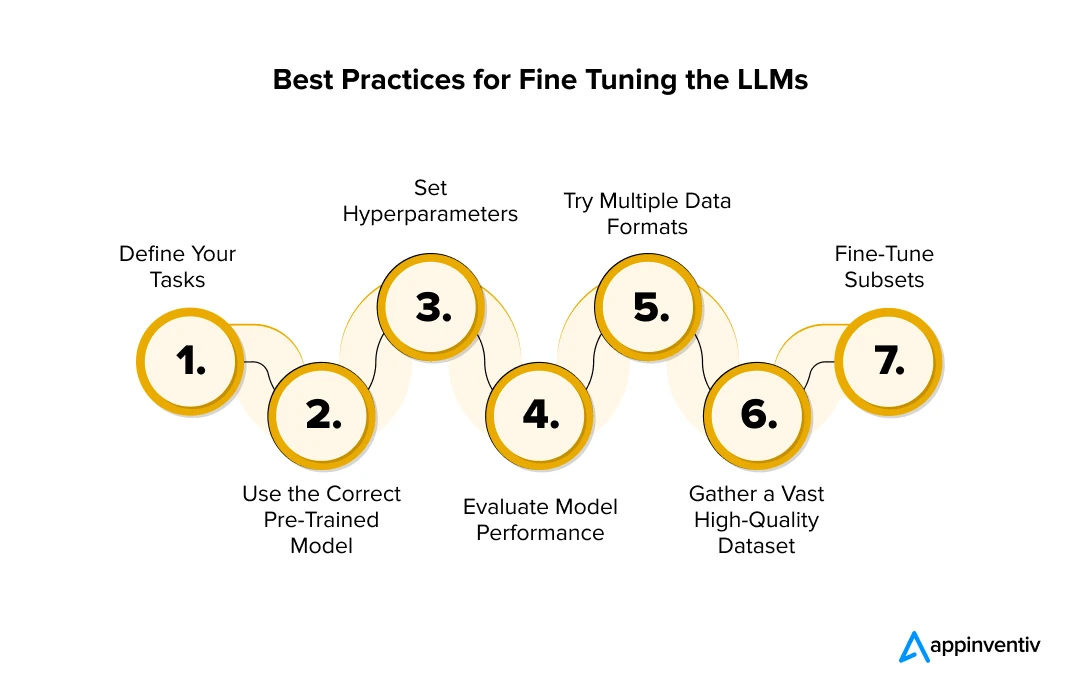

Fine-Tuning Large Language Models (LLMs) in 2024

In this review, we outline some of the major methodological approaches and techniques that can be used to fine-tune LLMs for specialized use cases and enumerate the general steps required for carrying out LLM fine-tuning. Full-parameter fine-tuning of large language models is constrained by substantial GPU memory requirements. Low-rank adaptation (LoRA) methods mitigate this challenge by training only a small number of added low-rank parameters while the pretrained weights stay frozen. However, these approaches often limit model expressiveness and yield lower performance than full-parameter fine-tuning. This study proposes a large language model optimization method based on an improved LoRA fine-tuning algorithm, aiming to improve the accuracy and computationa… Fine-tuning LLMs is essential for domain-specific adaptation. This study compares serial and parallel computation paradigms for fine-tuning using the Evol-Instruct framework.
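The trade-off described above, far fewer trainable parameters at the cost of a restricted update, can be illustrated with a minimal pure-Python sketch of standard low-rank adaptation. It is not the improved LoRA algorithm mentioned in the text, whose details are not given here. The helper names (`lora_param_counts`, `lora_forward`) and the scaling factor `alpha` are illustrative assumptions. The frozen weight `W` is left untouched and only the small matrices `A` and `B` would be trained.

```python
# Minimal sketch of standard LoRA on a single linear layer (illustrative only).
# Shapes: W is d_out x d_in (frozen), A is rank x d_in, B is d_out x rank.

def lora_param_counts(d_out, d_in, rank):
    """Return (full, lora): trainable parameters for full fine-tuning vs. LoRA."""
    full = d_out * d_in
    lora = rank * (d_in + d_out)
    return full, lora


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of numbers)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, alpha, rank):
    """Compute h = W x + (alpha / rank) * B (A x).

    W stays frozen; the low-rank product B A is the only learned update,
    which is what limits expressiveness compared with updating W directly.
    """
    base = matvec(W, x)
    update = matvec(B, matvec(A, x))
    scale = alpha / rank
    return [b + scale * u for b, u in zip(base, update)]


if __name__ == "__main__":
    full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=8)
    print(f"full: {full}, LoRA: {lora}, ratio: {lora / full:.4%}")

    W = [[1.0, 0.0], [0.0, 1.0]]   # frozen pretrained weight (identity here)
    A = [[1.0, 1.0]]               # rank-1 down-projection
    B = [[0.0], [0.0]]             # zero-initialized, so training starts from W
    print(lora_forward(W, A, B, [3.0, 4.0], alpha=2.0, rank=1))
```

For a 4096 by 4096 layer at rank 8, this gives 65,536 trainable parameters instead of 16,777,216, roughly 0.39% of the full count. Initializing `B` to zeros means the adapted layer starts out identical to the pretrained one.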
