Optimizing Hardware Reliability for AI Acceleration

Discover how cloud AI GPUs eliminate hardware failures, reduce costs, and ensure uninterrupted performance for your AI projects. The reliability of the underlying AI/ML hardware becomes of paramount importance. In this paper, we explore and evaluate the reliability of different AI/ML hardware. The first section outlines the reliability issues in a commercial systolic-array-based ML accelerator in the presence of faults.
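
To make the idea of faults in a systolic array concrete, here is a minimal sketch of how a single faulty processing element (PE) can corrupt a matrix multiply. The text does not specify a fault model or dataflow, so both are assumptions here: a weight-stationary array in which PE (k, j) holds one weight and partial sums flow down column j, and a stuck-at-1 fault on one bit of the partial sum leaving the faulty PE. The function names are hypothetical.

```python
def matmul_weight_stationary(a, w, fault=None):
    """Multiply a (M x K) by w (K x N) as a weight-stationary array would.

    PE (k, j) holds w[k][j]; partial sums flow down column j.
    fault is an optional (k, j, bit) tuple (assumed fault model): the
    partial sum leaving PE (k, j) has that bit stuck at 1.
    Inputs are assumed to be non-negative integers.
    """
    m, k_dim, n = len(a), len(w), len(w[0])
    out = [[0] * n for _ in range(m)]
    for i in range(m):
        for j in range(n):
            psum = 0
            for k in range(k_dim):
                psum += a[i][k] * w[k][j]
                if fault is not None and fault[:2] == (k, j):
                    psum |= 1 << fault[2]  # force the faulty bit to 1
            out[i][j] = psum
    return out


def corrupted_cells(golden, faulty):
    """Return the (row, col) positions where the faulty result differs."""
    return [
        (i, j)
        for i, row in enumerate(golden)
        for j, value in enumerate(row)
        if value != faulty[i][j]
    ]


if __name__ == "__main__":
    a = [[1, 2], [3, 4]]
    w = [[1, 0], [0, 1]]
    golden = matmul_weight_stationary(a, w)
    faulty = matmul_weight_stationary(a, w, fault=(0, 1, 4))
    print(corrupted_cells(golden, faulty))
```

Under these assumptions, one faulty PE affects every output that passes through its column, which is why a single hardware defect can degrade many results at once.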

This guide dives deep into optimizing models with TensorRT, blending theoretical insights with hands-on Python code to empower developers tackling these real-world AI challenges. To address the dual challenge of ensuring high-performance AI acceleration while maintaining robust runtime security, particularly in the context of large language models (LLMs), we propose a modular hardware framework specifically designed to bridge this critical gap. This paper aims to explore hardware acceleration optimization strategies for deep learning models on AI chips to improve the models' training and inference performance. Optimizing hardware usage for AI systems is crucial. Examine the techniques for optimizing data processing, model deployment, storage, and feature engineering.

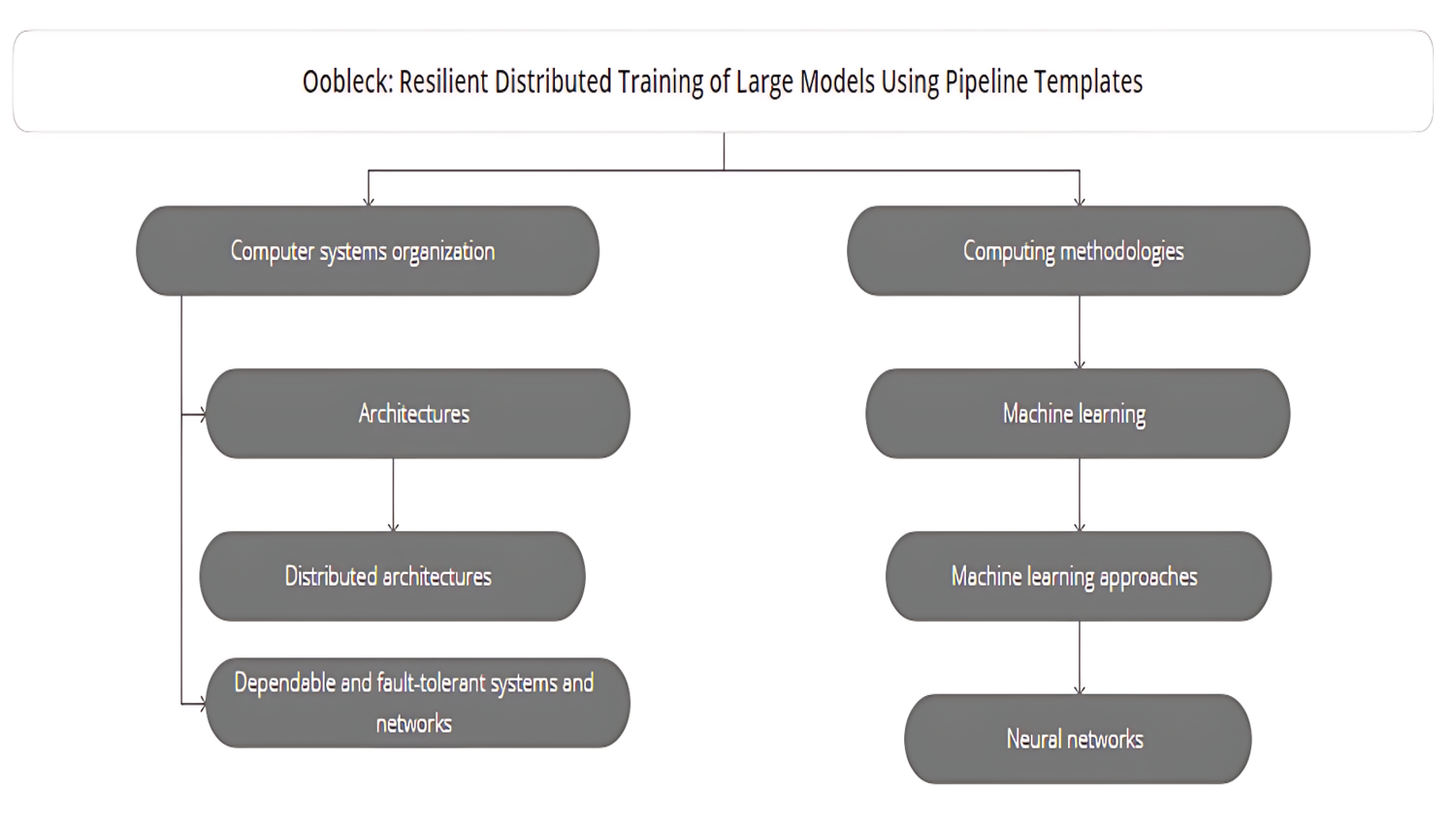

In this paper, we describe and discuss the main challenges, along with possible solutions. The paper is organized as follows. We discuss how these research efforts bridge the gap between memristive devices and energy-efficient accelerators for AI. We focus on reducing hardware failures during training through detection and diagnostics, and on quickly restarting training with healthy servers and accelerators. This involves optimizing fault categorization, device triage, node selection, cluster validation, and checkpoint restore. In this review, we aim to provide a comprehensive review of workloads for DNNs and SNNs of different topologies and of the various hardware platforms that accelerate their major operations.
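
The restart flow named above (fault categorization, device triage, node selection, checkpoint restore) can be sketched roughly as follows. This is an illustrative outline only, not the method of any cited work: the two fault categories, the fault-count threshold, the `Node` class, and every function name are hypothetical, and cluster validation is left out.

```python
from dataclasses import dataclass

# Hypothetical fault categories; a real fleet would define its own taxonomy.
TRANSIENT = "transient"
PERSISTENT = "persistent"


@dataclass
class Node:
    name: str
    healthy: bool = True
    fault_count: int = 0


def categorize(fault_count, threshold=2):
    """Treat repeated faults on one node as persistent (assumed rule)."""
    return PERSISTENT if fault_count >= threshold else TRANSIENT


def triage(node, threshold=2):
    """Record a fault; mark the node unhealthy once it looks persistent."""
    node.fault_count += 1
    if categorize(node.fault_count, threshold) == PERSISTENT:
        node.healthy = False
    return node.healthy


def select_nodes(pool, needed):
    """Pick healthy nodes, preferring those with the fewest recorded faults."""
    healthy = [n for n in pool if n.healthy]
    if len(healthy) < needed:
        raise RuntimeError("not enough healthy nodes to restart training")
    return sorted(healthy, key=lambda n: n.fault_count)[:needed]


def latest_checkpoint(step, interval):
    """Return the last step at which a checkpoint would have been saved."""
    return (step // interval) * interval


if __name__ == "__main__":
    pool = [Node("a"), Node("b"), Node("c"), Node("d")]
    triage(pool[1])  # first fault on "b": treated as transient
    triage(pool[1])  # second fault on "b": treated as persistent
    chosen = select_nodes(pool, needed=3)
    print([n.name for n in chosen], latest_checkpoint(1234, 500))
```

The design choice in this sketch is to keep a node in the pool after a single fault and exclude it only when faults repeat, so that one-off errors do not shrink the cluster while recurring ones do.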

