Optimizing Data Analytics: Integrating GitHub Copilot in Databricks

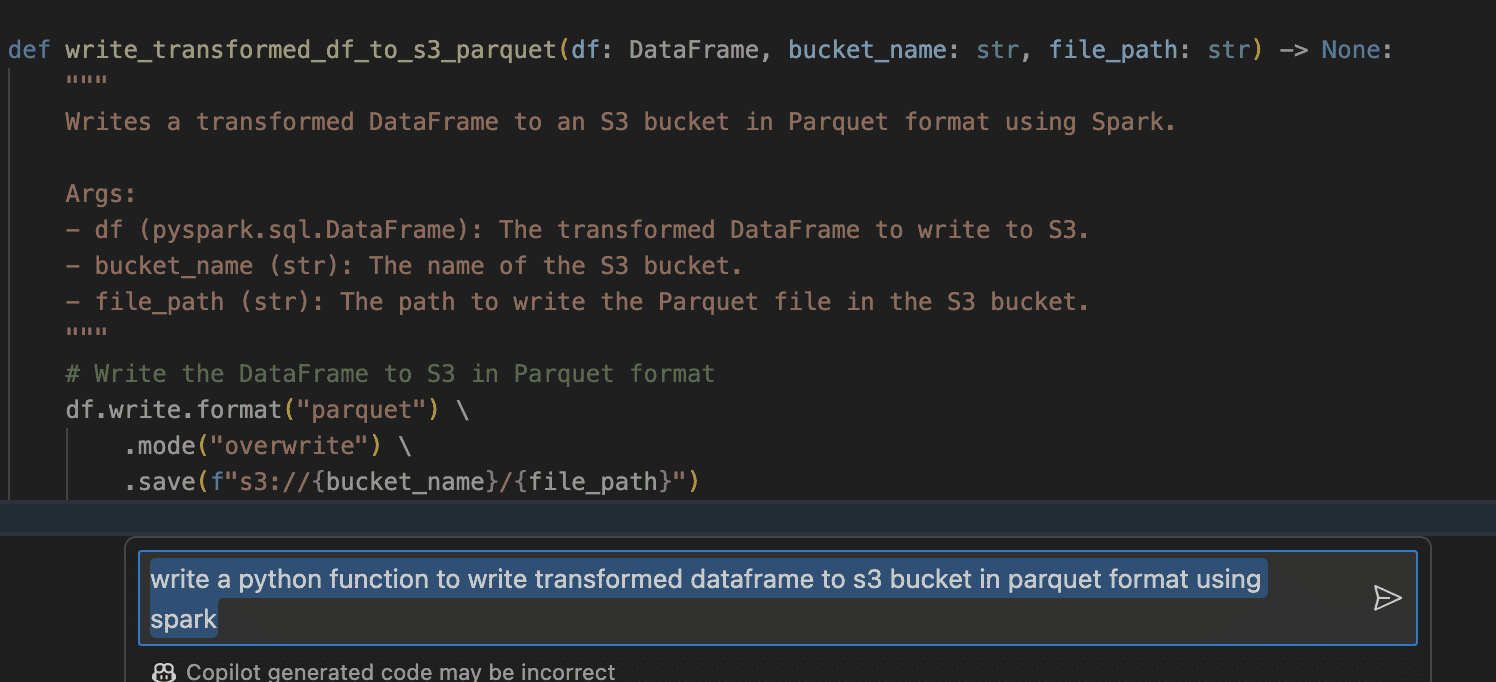

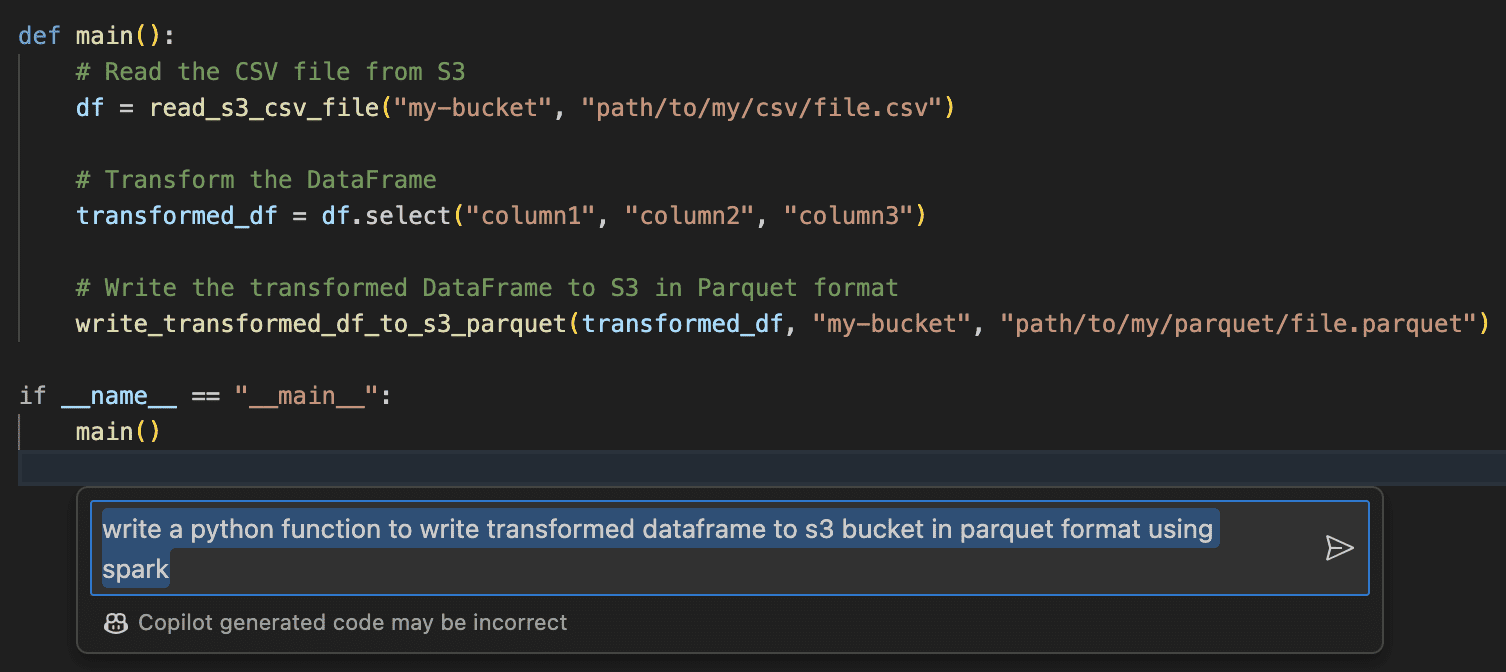

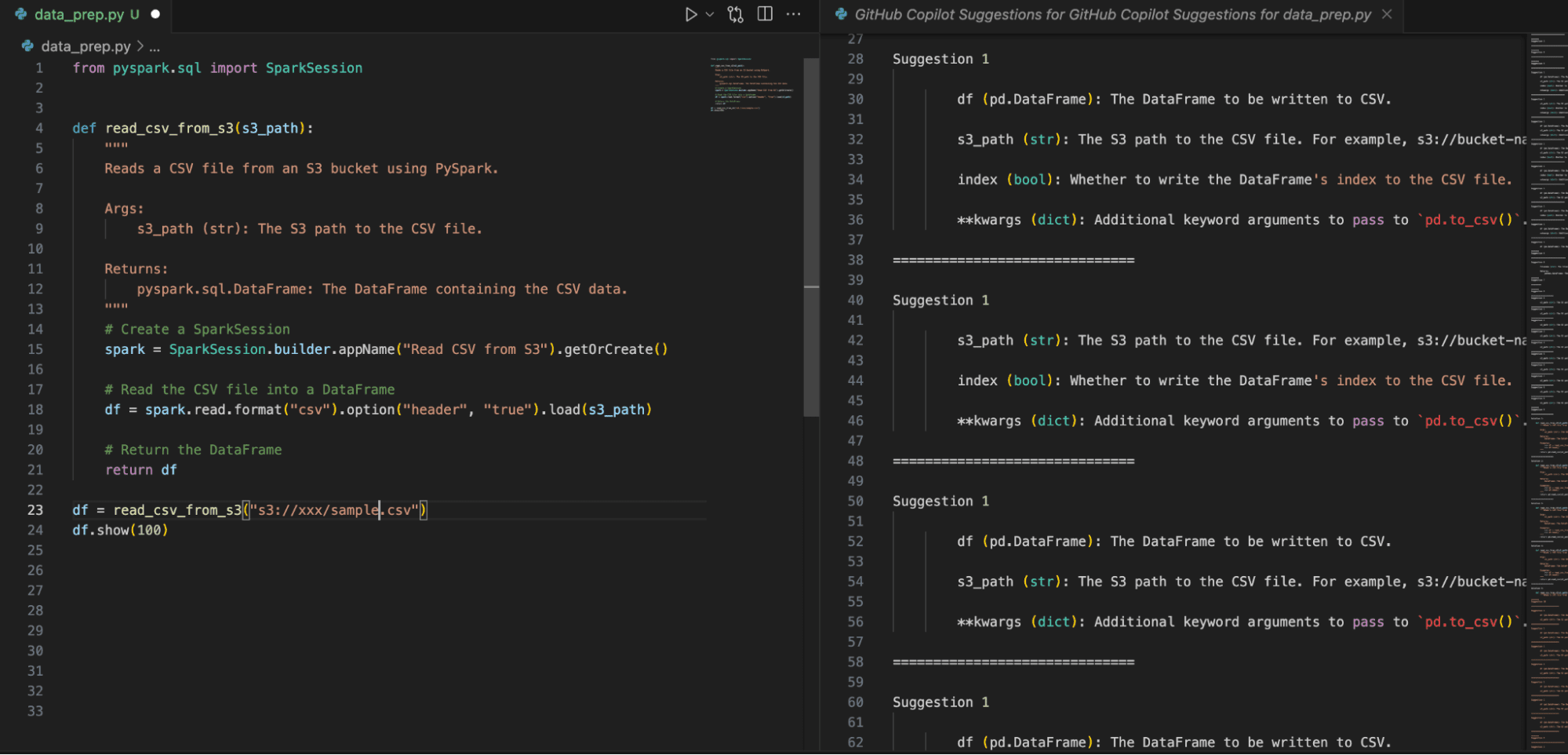

Data engineers can use GitHub Copilot to write data engineering pipelines, including their documentation, considerably faster. Below are the steps to create a simple data engineering pipeline with prompting techniques. The article provides step-by-step instructions for integrating GitHub Copilot and Databricks, as well as examples of how data engineers can use GitHub Copilot to create data engineering pipelines more efficiently.
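To make the prompting idea concrete, here is a minimal sketch of a comment-style prompt and the kind of function an assistant might produce from it. This is an illustration only, not the pipeline from the article: the function name `total_amount_per_customer`, the column names `customer` and `amount`, and the sample data are all hypothetical, and plain Python is used instead of Spark so the snippet runs without a Databricks cluster.

```python
import csv
import io
from collections import defaultdict

# Prompt-style comment a developer might write for Copilot (hypothetical):
# "Read order records from CSV text, drop rows with a missing or
#  non-numeric amount, and return the total amount per customer."


def total_amount_per_customer(csv_text: str) -> dict[str, float]:
    """Aggregate order amounts by customer, skipping invalid rows."""
    totals = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Missing fields come back as None, so normalise to empty strings.
        customer = (row.get("customer") or "").strip()
        raw_amount = (row.get("amount") or "").strip()
        if not customer or not raw_amount:
            continue  # drop rows with a missing customer or amount
        try:
            amount = float(raw_amount)
        except ValueError:
            continue  # drop rows whose amount is not numeric
        totals[customer] += amount
    return dict(totals)


# Hypothetical sample input with two invalid rows (empty and non-numeric amount).
SAMPLE = """customer,amount
alice,10.5
bob,4
alice,
carol,abc
bob,6
"""

if __name__ == "__main__":
    print(total_amount_per_customer(SAMPLE))
```

The point of the sketch is the workflow rather than the code itself: a specific prompt that names the input, the cleaning rule, and the expected output tends to be easier to review than a vague one, and the generated function should still be checked against sample data before it is trusted in a pipeline.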

Recently, Databricks released a preview version of the managed MCP server. Upon seeing this, I immediately wanted to integrate Databricks Genie with VS Code and GitHub Copilot agent mode.

Integrating GitHub Copilot with Databricks empowers data analytics and machine learning engineers to deploy solutions effectively and in a time-efficient manner.

In my last post, I showed how to build a Databricks dev setup using dev containers and asset bundles. Super powerful, but there's one catch: 💸 you still need a paid Databricks account just to…

As a data engineer working with Databricks, Spark, and Python pipelines, I've gotten used to juggling between writing production logic, documentation, test coverage, and orchestration YAMLs.

The new integrations between Genie and Copilot Studio, as well as Genie and Microsoft Foundry, solve these challenges by providing easy and secure ways to connect each platform.

How to integrate Databricks in Visual Studio Code using GitHub Copilot? Boost your data wrangling and analysis efficiency by seamlessly integrating GitHub Copilot within your Databricks environment through Visual Studio Code.

Join discussions on data engineering best practices, architectures, and optimization strategies within the Databricks community. Exchange insights and solutions with fellow data engineers.

In short, the purpose of this project is to demonstrate how Plotly's Dash can be used in tandem with the Databricks SDK. More specifically, here we choose to kick off…

