Optimizing Actions | Klu.ai

Klu.ai Documentation

Optimizing actions is crucial for harnessing the full potential of large language models (LLMs). Klu provides an easy way to fine-tune these actions based on your specific needs. This guide covers LLM optimization techniques, from basic to advanced strategies. It also covers multi-model support, native RAG functionality, prompt management, evaluation and optimization tools, pricing plans, competitive comparisons, and enterprise use cases for building, deploying, and optimizing LLM-powered applications.

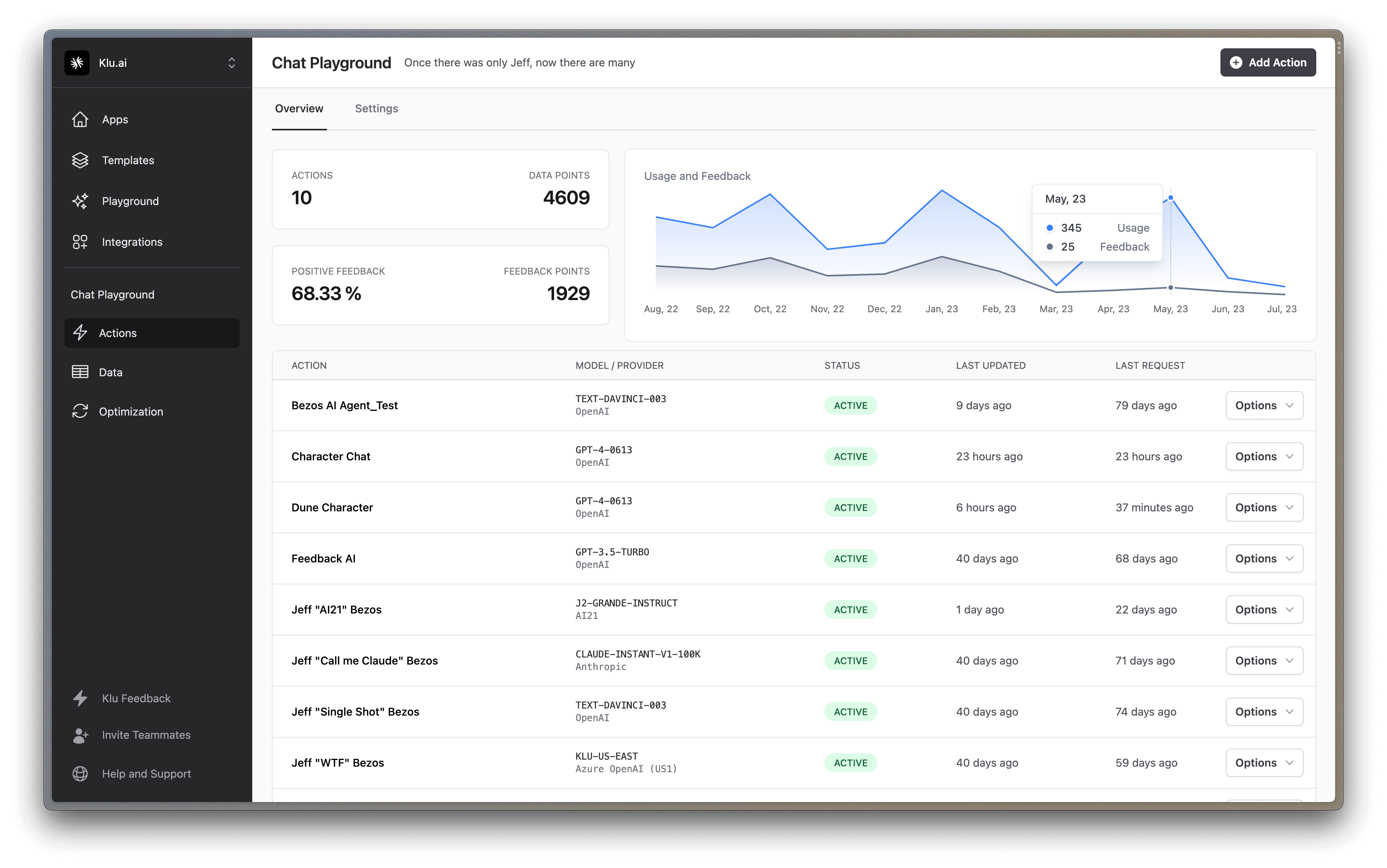

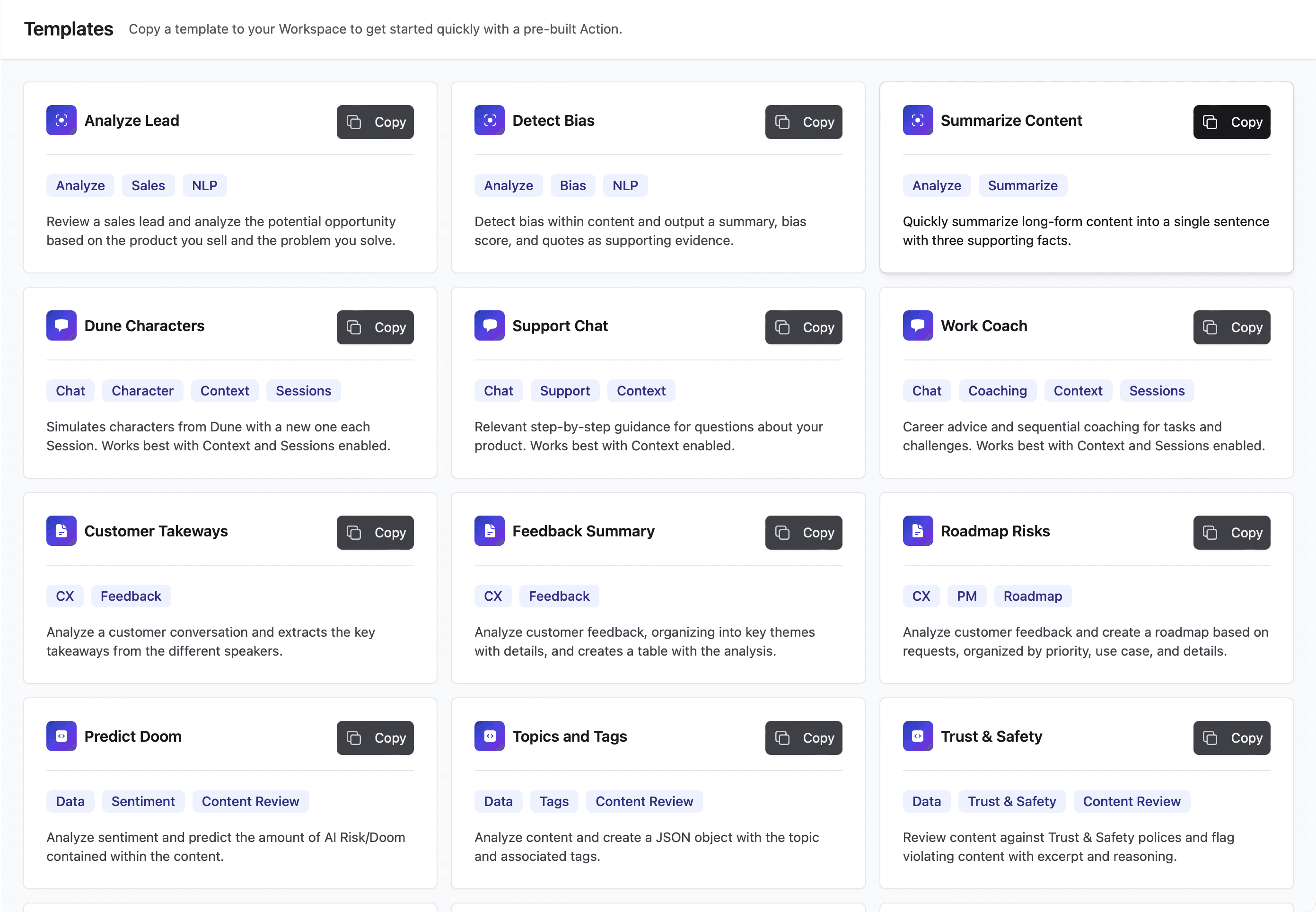

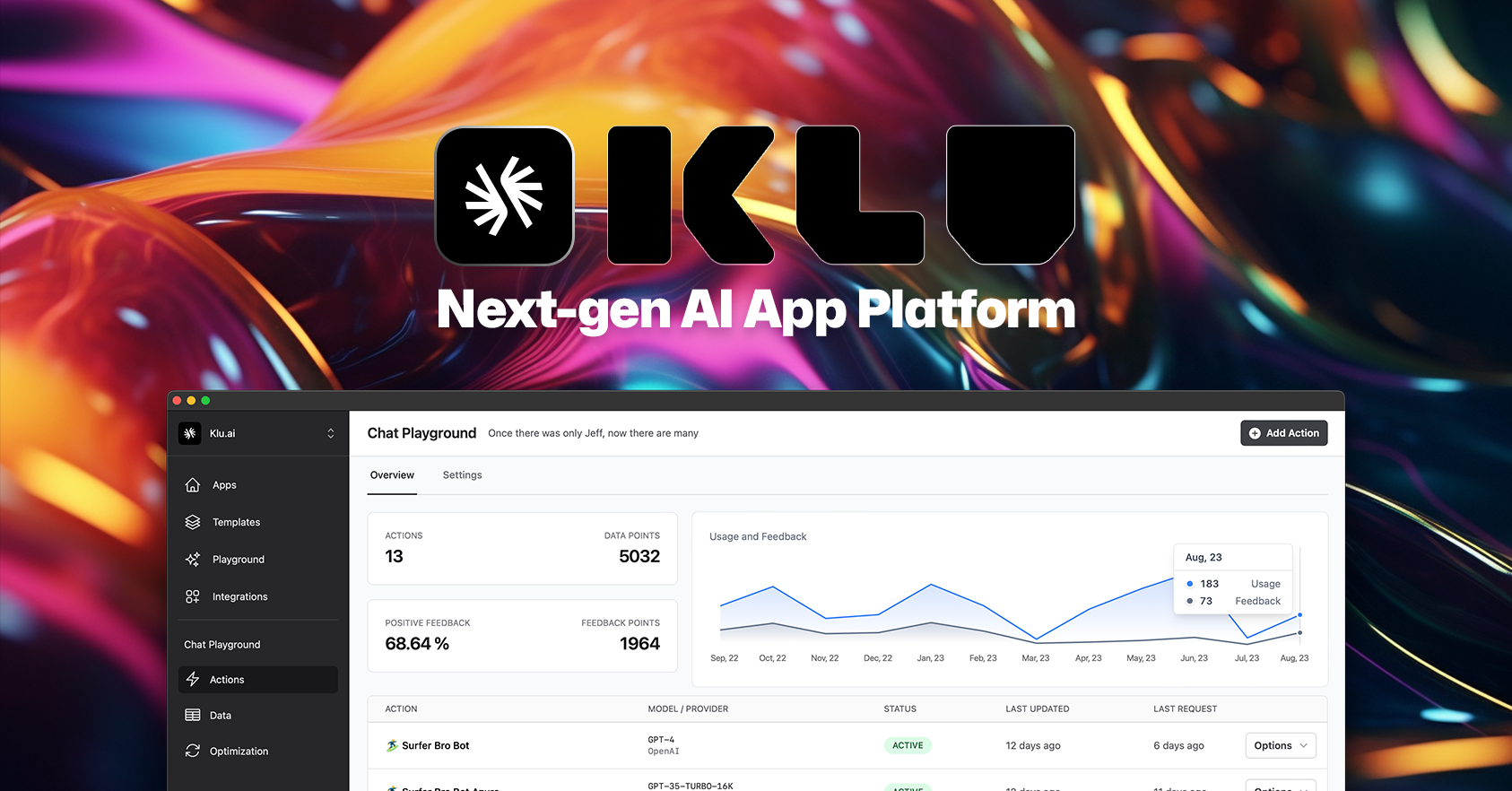

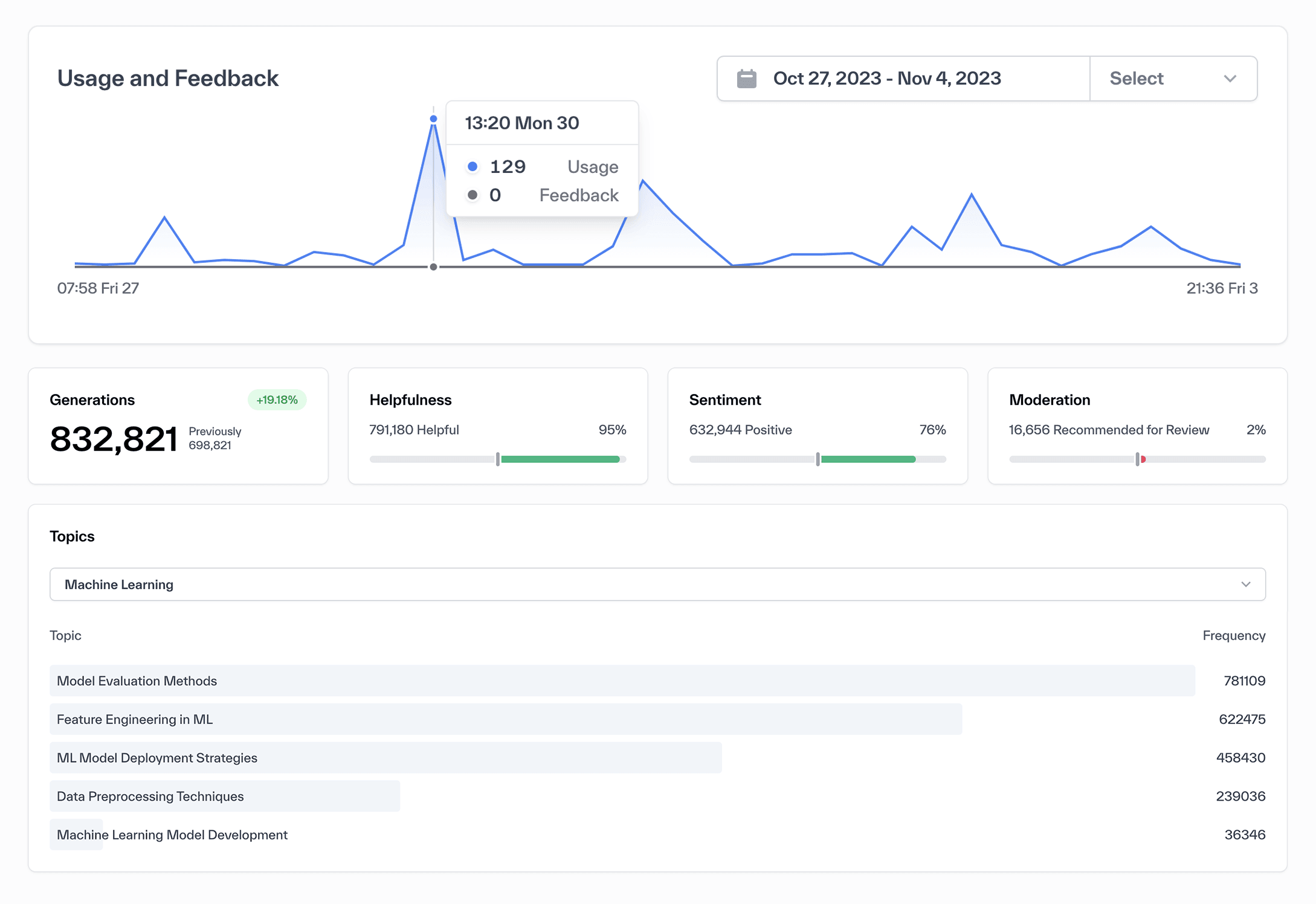

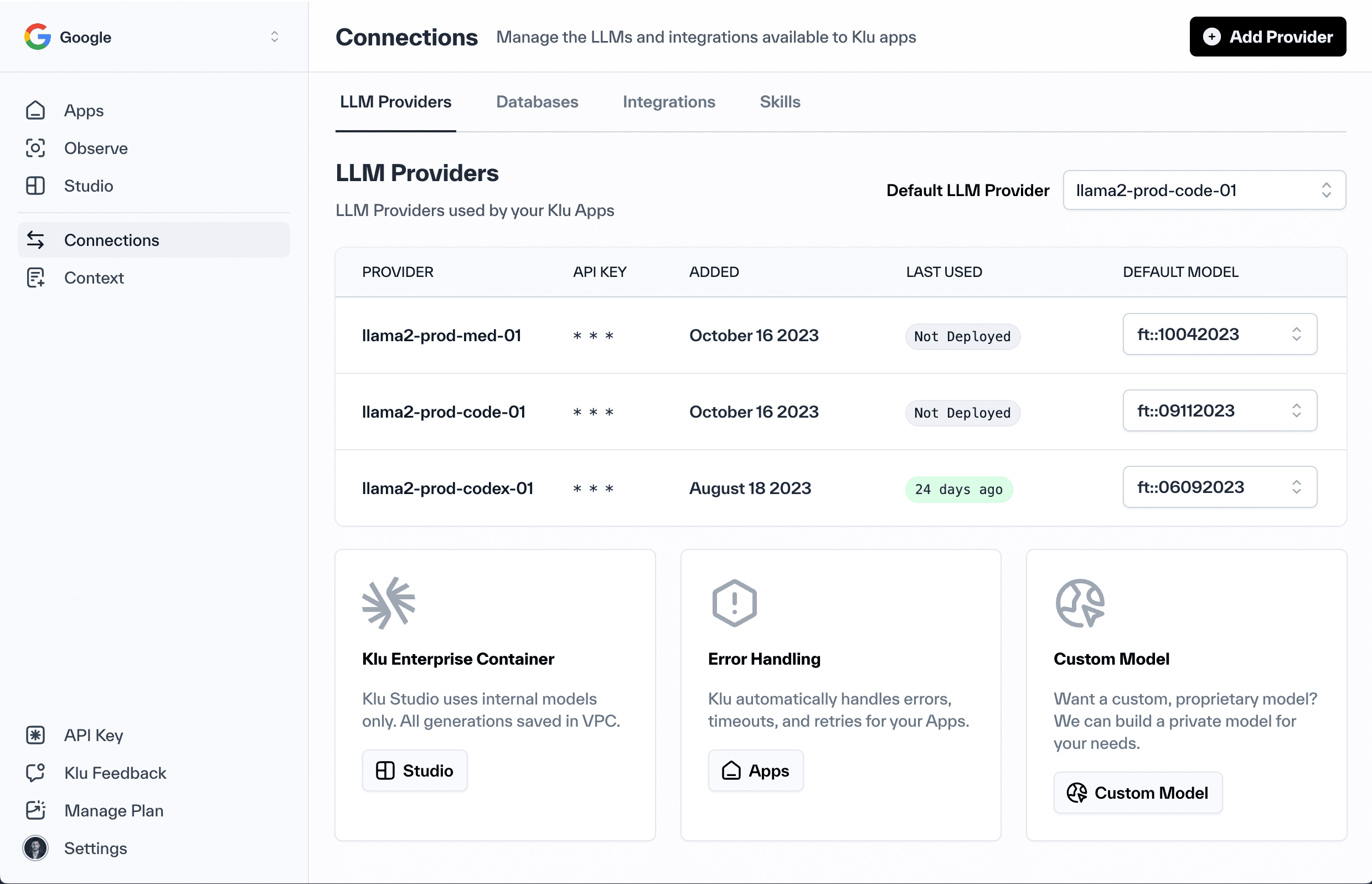

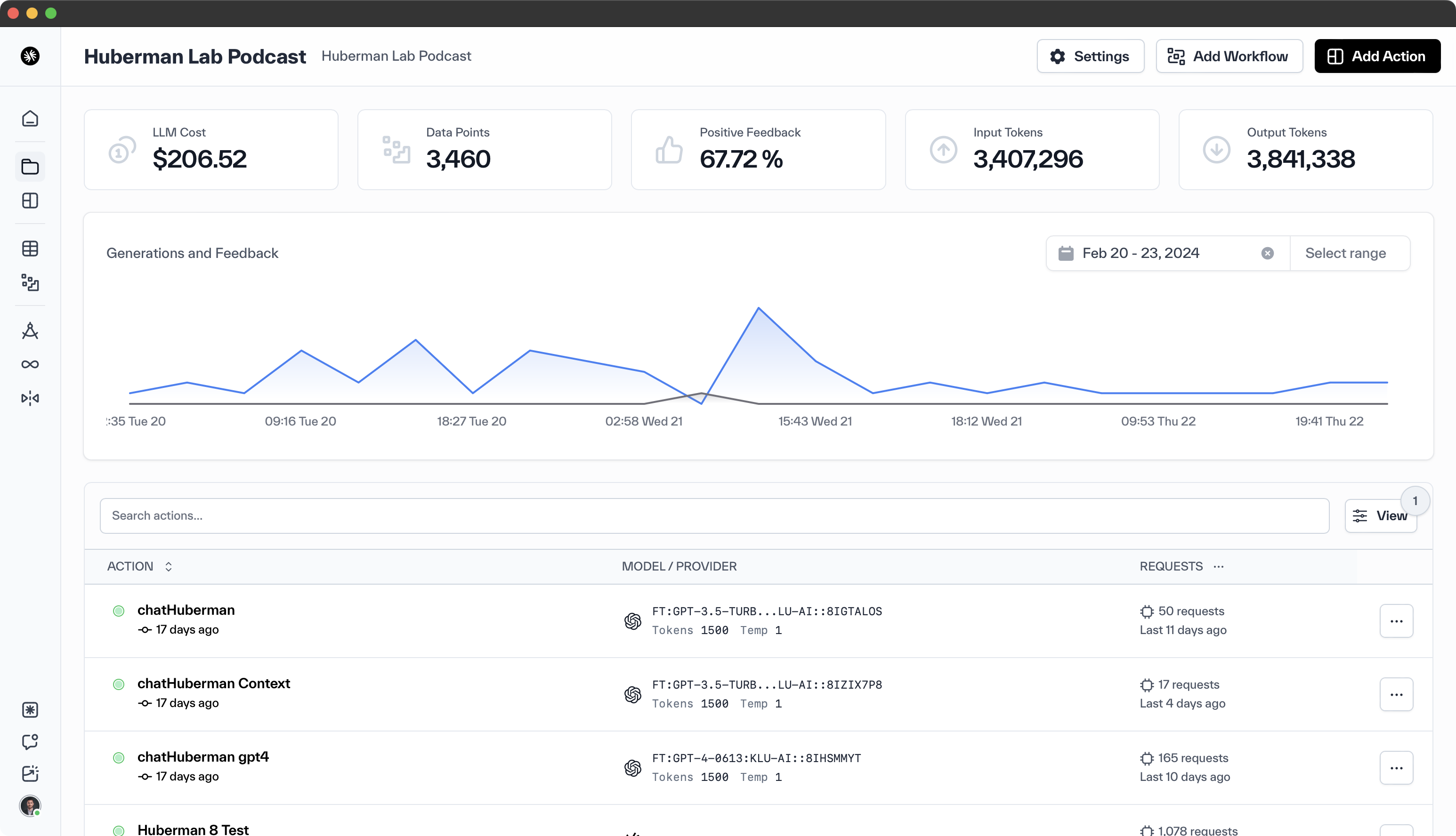

Klu.ai: Design, Deploy, and Optimize Generative AI Apps

Klu is an all-in-one LLM app platform that lets AI teams design, deploy, and optimize generative actions, prompts, chats, workflows, and skills using best-in-class LLM providers. It integrates with leading language models such as Claude and GPT-4, which allows rapid experimentation and model tuning, and it optimizes cost and performance through data gathering, user feedback, and automatic prompt engineering. The platform offers unified model access, collaborative prompt engineering, and comprehensive analytics. Its capabilities include summarization, business operations workflows, A/B testing, and optimization approaches for models like OpenAI's GPT-3.5 Turbo and GPT-4.
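As a rough illustration of A/B testing on user feedback, the sketch below compares the positive-feedback rates of two prompt variants with a two-proportion z-test. It uses plain Python and does not call any Klu API; the `VariantStats` structure, the function names, and the 1.96 threshold (roughly 95% confidence) are assumptions made for this example only.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class VariantStats:
    """Feedback tallies for one prompt variant (hypothetical structure)."""
    name: str
    positive: int  # number of thumbs-up ratings
    total: int     # number of rated generations

    @property
    def rate(self) -> float:
        return self.positive / self.total if self.total else 0.0


def two_proportion_z(a: VariantStats, b: VariantStats) -> float:
    """Z-score for the difference in positive-feedback rates (b minus a)."""
    if a.total == 0 or b.total == 0:
        return 0.0  # not enough data to compare
    pooled = (a.positive + b.positive) / (a.total + b.total)
    se = math.sqrt(pooled * (1 - pooled) * (1 / a.total + 1 / b.total))
    return (b.rate - a.rate) / se if se else 0.0


def pick_winner(a: VariantStats, b: VariantStats,
                z_threshold: float = 1.96) -> Optional[str]:
    """Return the better variant's name, or None if the gap is not significant."""
    z = two_proportion_z(a, b)
    if z > z_threshold:
        return b.name
    if z < -z_threshold:
        return a.name
    return None


if __name__ == "__main__":
    baseline = VariantStats("prompt-v1", positive=50, total=100)
    candidate = VariantStats("prompt-v2", positive=70, total=100)
    print(pick_winner(baseline, candidate))
```

Returning `None` when the difference is not significant keeps the baseline in place until enough feedback has accumulated, which avoids switching prompts on noise.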

Build generative actions for content generation, business operations workflows, content analysis, and summarization. Then use A/B testing and fine-tuning support for models like OpenAI's GPT-3.5 Turbo and GPT-4 to optimize further with real customer feedback.

Klu.ai offers a no-code platform for designing, deploying, and optimizing AI features and apps. It also provides an advanced data engine and tooling for Python, TypeScript, and React UI, making it easy to develop customized AI solutions.

What does AI inference performance optimization actually mean in this context? In this article, optimizing AI inference performance means finding the right balance between high throughput (how many tokens, images, or embeddings you serve per second) and low latency (how long a user waits for the first useful output).
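To make the two quantities concrete, here is a minimal sketch that measures latency (time to first token) and throughput (tokens per second) for a single streamed response. It is plain Python with no Klu or provider API involved; `measure_stream` and `fake_stream` are hypothetical names, and the fake stream only simulates a model with `time.sleep`. Serving throughput in the sense above would aggregate these counts across all concurrent requests.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_stream(tokens: Iterable[str]) -> Tuple[float, float, int]:
    """Consume a token stream.

    Returns (time_to_first_token_s, tokens_per_second, token_count).
    time_to_first_token_s is NaN when the stream yields nothing.
    """
    start = time.perf_counter()
    first_token_at = None
    count = 0
    for _ in tokens:
        if first_token_at is None:
            first_token_at = time.perf_counter() - start
        count += 1
    elapsed = time.perf_counter() - start
    ttft = first_token_at if first_token_at is not None else float("nan")
    throughput = count / elapsed if elapsed > 0 else 0.0
    return ttft, throughput, count


def fake_stream(n: int, first_delay: float, per_token_delay: float) -> Iterator[str]:
    """Simulated model output (assumption: stands in for a real streaming client)."""
    time.sleep(first_delay)  # queueing and prompt processing before the first token
    for i in range(n):
        if i:
            time.sleep(per_token_delay)  # decode time between tokens
        yield f"tok{i}"


if __name__ == "__main__":
    ttft, tps, n = measure_stream(fake_stream(20, first_delay=0.2, per_token_delay=0.01))
    print(f"tokens={n} time_to_first_token={ttft:.3f}s throughput={tps:.1f} tok/s")
```

Tracking both numbers separately matters because changes that raise throughput, such as larger batches, often lengthen the wait for the first token.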

