How Optimizers Work in Machine Learning, Visualized (AGI Lambda)

AGI Lambda (YouTube)

Momentum in optimizers speeds up learning by carrying forward an exponentially decaying average of past gradients, which smooths out the updates. Sharing ideas, experiments, and long-term questions about learning systems, autonomy, and the future of AI; studying artificial intelligence to understand intelligence itself.
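
As a minimal sketch of the momentum idea, the Python below compares plain gradient descent with momentum on a hypothetical ill-conditioned quadratic f(w) = 0.5 * (w0^2 + 25 * w1^2). It assumes the common "heavy-ball" form v <- beta * v + grad, w <- w - lr * v, which is how PyTorch's `torch.optim.SGD` applies momentum with its default dampening of 0. The test function, learning rate, and beta = 0.9 are illustrative choices, not values from the source.

```python
import numpy as np

def grad(w):
    # Gradient of the hypothetical test function f(w) = 0.5 * (w0**2 + 25 * w1**2)
    return np.array([w[0], 25.0 * w[1]])

def gradient_descent(w0, lr=0.03, steps=100):
    w = np.array(w0, dtype=float)
    for _ in range(steps):
        w -= lr * grad(w)  # step uses only the current gradient
    return w

def momentum_descent(w0, lr=0.03, beta=0.9, steps=100):
    w = np.array(w0, dtype=float)
    v = np.zeros_like(w)
    for _ in range(steps):
        # v accumulates past gradients, each older one down-weighted by beta
        v = beta * v + grad(w)
        w -= lr * v
    return w

print("plain GD:", gradient_descent([1.0, 1.0]))
print("momentum:", momentum_descent([1.0, 1.0]))
```

With these settings momentum ends much closer to the minimum at the origin: along the shallow w0 direction, plain descent shrinks the error by only a factor of 0.97 per step, while the momentum iteration contracts it by about sqrt(beta), roughly 0.95 per step.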

Optimizers Visualized (Kocabiyik, GitHub): Visualizations of ML

Common loss functions include log loss, hinge loss, and mean squared error (MSE). An optimizer improves the model by adjusting its parameters (weights and biases) to minimize the value of the loss function. Examples include RMSProp, Adam, and SGD (stochastic gradient descent).

Click anywhere on the function heatmap to start a minimization. You can toggle the different algorithms (SGD, momentum, RMSProp, Adam) by clicking the circles in the lower bar. The global minimum is on the left; a local minimum is on the right.

Efficient sensor deployment in wireless sensor networks (WSNs), a cornerstone of the Internet of Things (IoT), is critical for optimal operation, particularly in environments with many obstacles. This paper proposes GAMGWO, a multi-strategy grey wolf optimizer inspired by generative adversarial networks, to address the challenges of node deployment in such complex physical environments.

Optimizers help by efficiently navigating the complex landscape of weight parameters, reducing the loss function, and converging toward a minimum, ideally the global minimum: the point with the lowest possible loss.
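
The three loss functions named above fit in a few lines of NumPy. This is a sketch under common conventions that the source does not spell out: log loss takes labels in {0, 1} and predicted probabilities, hinge loss takes labels in {-1, +1} and raw (unsquashed) scores, and every loss is averaged over the examples.

```python
import numpy as np

def log_loss(y_true, p, eps=1e-12):
    # Binary cross-entropy; y_true in {0, 1}, p = predicted probability of class 1
    y_true = np.asarray(y_true, dtype=float)
    p = np.clip(np.asarray(p, dtype=float), eps, 1 - eps)  # avoid log(0)
    return -np.mean(y_true * np.log(p) + (1 - y_true) * np.log(1 - p))

def hinge_loss(y_true, score):
    # y_true in {-1, +1}; score is the raw model output (e.g. an SVM margin)
    y_true = np.asarray(y_true, dtype=float)
    score = np.asarray(score, dtype=float)
    return np.mean(np.maximum(0.0, 1.0 - y_true * score))

def mse_loss(y_true, y_pred):
    # Mean squared error for regression targets
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return np.mean((y_true - y_pred) ** 2)

print(log_loss([1, 0], [0.9, 0.2]))
print(hinge_loss([1, -1], [2.0, 0.5]))
print(mse_loss([1, 2, 3], [1, 2, 5]))
```

An optimizer such as SGD, RMSProp, or Adam would then adjust the weights and biases that produce `p`, `score`, or `y_pred` so that these averages go down.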

Exploring Optimizers in Machine Learning (Fritz AI)

Compare deep learning optimizers such as SGD, momentum, Adam, and more: learn how they evolved, their key features, and when to use each. In each section, I introduce the optimizers commonly used in a specific domain, explain how they work, and discuss domain-specific usage (including typical activation functions and hardware).

The ultimate goal of machine learning development is to maximize the usefulness of the deployed model. You can typically apply the same basic steps and principles in this section to any ML problem.

An interactive visualization of the most popular optimization algorithms used in machine learning: the goal of this project was to learn visually how the optimization algorithms differ and which one might be suited to a particular problem.
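
To make the comparison concrete, here is a minimal deterministic sketch of four update rules (SGD, momentum, RMSProp, Adam) on a hypothetical 1-D function f(x) = x^4 - 4x^2 + x, which has its global minimum on the left (near x = -1.47) and a local minimum on the right (near x = 1.35). The function, the starting point, and the hyperparameters (learning rate 0.01, beta = 0.9 for momentum, rho = 0.9 for RMSProp, beta1 = 0.9 and beta2 = 0.999 for Adam) are illustrative assumptions, not settings from the source. "SGD" here is a full-gradient step, since the sketch has no minibatch noise.

```python
import numpy as np

def f(x):
    # Hypothetical test function: global minimum near -1.47, local minimum near 1.35
    return x**4 - 4 * x**2 + x

def grad_f(x):
    return 4 * x**3 - 8 * x + 1

def run(optimizer, x0=2.0, steps=2000, lr=0.01):
    x, state = x0, {}  # state holds each optimizer's running averages
    for t in range(1, steps + 1):
        x = optimizer(x, grad_f(x), state, t, lr)
    return x

def sgd(x, g, state, t, lr):
    # Plain gradient step
    return x - lr * g

def momentum(x, g, state, t, lr, beta=0.9):
    # Heavy-ball momentum: velocity is a decaying sum of past gradients
    state["v"] = beta * state.get("v", 0.0) + g
    return x - lr * state["v"]

def rmsprop(x, g, state, t, lr, rho=0.9, eps=1e-8):
    # Scale the step by a running RMS of recent gradients
    state["s"] = rho * state.get("s", 0.0) + (1 - rho) * g * g
    return x - lr * g / (np.sqrt(state["s"]) + eps)

def adam(x, g, state, t, lr, b1=0.9, b2=0.999, eps=1e-8):
    # Momentum on the gradient plus RMSProp-style scaling, with bias correction
    state["m"] = b1 * state.get("m", 0.0) + (1 - b1) * g
    state["v"] = b2 * state.get("v", 0.0) + (1 - b2) * g * g
    m_hat = state["m"] / (1 - b1**t)
    v_hat = state["v"] / (1 - b2**t)
    return x - lr * m_hat / (np.sqrt(v_hat) + eps)

for name, opt in [("sgd", sgd), ("momentum", momentum), ("rmsprop", rmsprop), ("adam", adam)]:
    x_final = run(opt)
    print(f"{name:8s} x = {x_final:+.4f}  f(x) = {f(x_final):+.4f}")
```

Started at x = 2.0, all four rules settle into the right-hand local minimum in this sketch, even though the global minimum lies on the left. Each optimizer descends into whichever basin its starting point and step dynamics lead it to, so changing `x0`, `lr`, or the momentum coefficients can change which minimum is reached.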


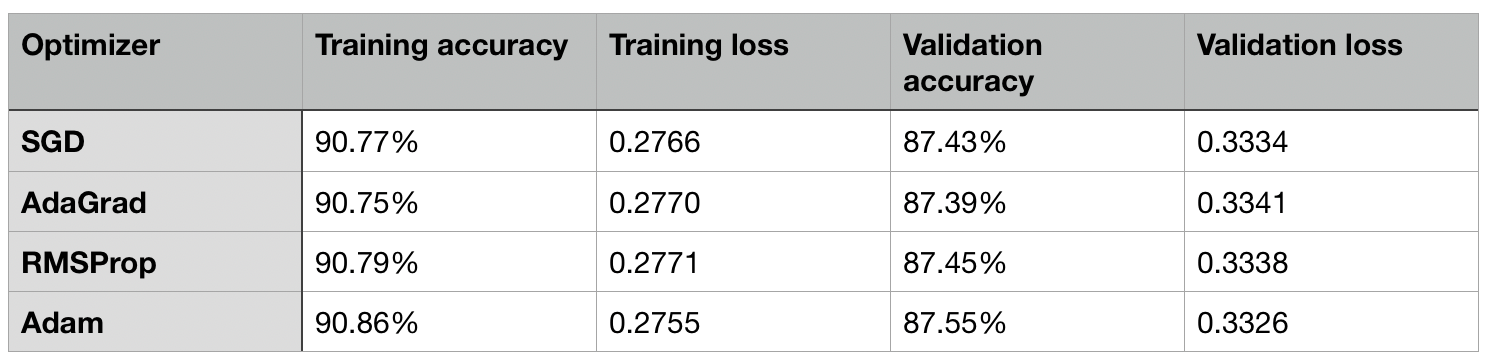

An Empirical Comparison of Optimizers for Machine Learning Models
