Optimizer Step Explained

After loss.backward() computes and stores the gradients, optimizer.step() performs the actual learning step. It is called on the optimizer object, which has already been configured with the model parameters and a chosen learning rate. The role of an optimizer is to determine how the model's weights should be updated during training. This involves calculating the direction and magnitude of each change so as to minimize the loss function.
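To make the division of labour concrete, here is a minimal sketch of one training step, assuming PyTorch is installed. The tiny linear model, the random data, and the learning rate of 0.1 are arbitrary choices for illustration. With plain `torch.optim.SGD` (no momentum or weight decay), the update is simply `weight <- weight - lr * weight.grad`, which the last lines check.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# A tiny linear model and random data, purely for illustration.
model = nn.Linear(3, 1)
inputs = torch.randn(8, 3)
targets = torch.randn(8, 1)

# The optimizer is built with the parameters it should update and a learning rate.
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
loss_fn = nn.MSELoss()

weight_before = model.weight.detach().clone()

optimizer.zero_grad()                   # clear gradients left over from a previous step
loss = loss_fn(model(inputs), targets)  # forward pass
loss.backward()                         # gradients are computed and stored in each parameter's .grad
# At this point the weights are still unchanged; only .grad has been filled in.
optimizer.step()                        # the optimizer reads .grad and updates the weights in place

weight_after = model.weight.detach().clone()
print(weight_after - weight_before)     # equals -0.1 * model.weight.grad for plain SGD
```

Note that `backward()` alone never changes a weight; it only fills `.grad`. Nothing is learned until `step()` runs.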

The optimizer.step() function is a key component in the training process of a neural network, and understanding how to use it correctly is essential for achieving good performance. We'll break down what `loss.backward()` actually does under the hood, how gradients are computed and stored, and exactly how `optimizer.step()` uses those gradients to update your model's parameters. All optimizers implement a step() method that updates the parameters. It can be used in two ways. The first, optimizer.step(), is a simplified version supported by most optimizers: it can be called once the gradients have been computed using, e.g., backward(). The second, optimizer.step(closure), is needed by algorithms such as conjugate gradient and LBFGS, which have to re-evaluate the function multiple times; the closure clears the gradients, computes the loss, and returns it.
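Besides the plain `step()` call, PyTorch optimizers accept `step(closure)`, which algorithms such as LBFGS rely on because they evaluate the loss several times within one step. The sketch below assumes PyTorch is installed; the synthetic linear target, `max_iter=20`, and the `strong_wolfe` line search are illustrative choices, not requirements.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

model = nn.Linear(3, 1)
inputs = torch.randn(16, 3)
# A noiseless linear target, so the problem is easy to fit (illustrative only).
targets = inputs @ torch.tensor([[1.0], [-2.0], [0.5]]) + 0.3
loss_fn = nn.MSELoss()

# LBFGS re-evaluates the loss internally, so it must be driven through step(closure).
optimizer = torch.optim.LBFGS(
    model.parameters(), lr=1.0, max_iter=20, line_search_fn="strong_wolfe"
)

def closure():
    # Called by the optimizer as often as it needs: clear grads, recompute loss, backprop.
    optimizer.zero_grad()
    loss = loss_fn(model(inputs), targets)
    loss.backward()
    return loss

with torch.no_grad():
    initial_loss = loss_fn(model(inputs), targets).item()

for _ in range(5):
    optimizer.step(closure)

with torch.no_grad():
    final_loss = loss_fn(model(inputs), targets).item()

print(f"loss before: {initial_loss:.4f}, after: {final_loss:.6f}")
```

The closure is what lets the optimizer recompute the loss and gradients on demand; with the simpler `step()` form, that work has already been done once by your own `backward()` call.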

This post covers the optimizer.step() method in PyTorch alongside classification loss: how step() works, why it is essential, how to use optimizers effectively, and how its mechanics can be leveraged in more advanced workflows. This is the step that actually enables your neural network to learn from data. After the gradients have been computed for all tensors in the model, calling optimizer.step() makes the optimizer iterate over every parameter (tensor) it is supposed to update and use that parameter's stored grad to update its value.
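The loop over parameters described above can be reproduced by hand. The following is a minimal sketch, assuming PyTorch is installed and using plain SGD only (no momentum, weight decay, or other options); it updates one copy of a model with `torch.optim.SGD` and an identical copy with a hand-written loop, then compares the results. The helper `compute_grads` is a hypothetical name introduced just for this example.

```python
import copy

import torch
import torch.nn as nn

torch.manual_seed(0)

model_a = nn.Linear(4, 2)
model_b = copy.deepcopy(model_a)  # identical starting weights
inputs = torch.randn(10, 4)
targets = torch.randn(10, 2)
lr = 0.05

def compute_grads(model):
    # Hypothetical helper: clear old grads, then fill .grad via backward().
    model.zero_grad()
    nn.functional.mse_loss(model(inputs), targets).backward()

# (a) The built-in optimizer.
opt = torch.optim.SGD(model_a.parameters(), lr=lr)
compute_grads(model_a)
opt.step()

# (b) A hand-written plain-SGD step: visit each parameter and apply p <- p - lr * p.grad.
compute_grads(model_b)
with torch.no_grad():           # the update itself must not be tracked by autograd
    for p in model_b.parameters():
        if p.grad is not None:  # parameters that received no gradient are left alone
            p.sub_(lr * p.grad)

for pa, pb in zip(model_a.parameters(), model_b.parameters()):
    print(torch.allclose(pa, pb))
```

Real optimizers such as Adam keep extra per-parameter state (for example running averages of gradients), but the overall shape, a loop over parameters that reads `.grad` and writes the parameter in place, is the same.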


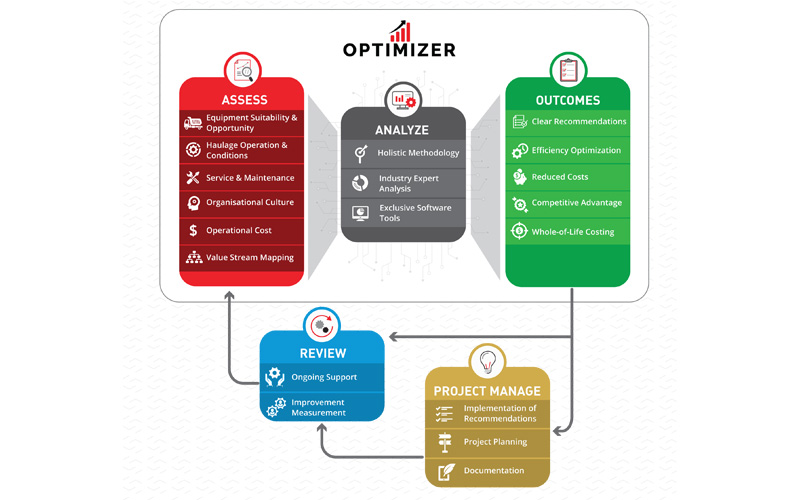

