Optimize Your TensorFlow Lite Models Session

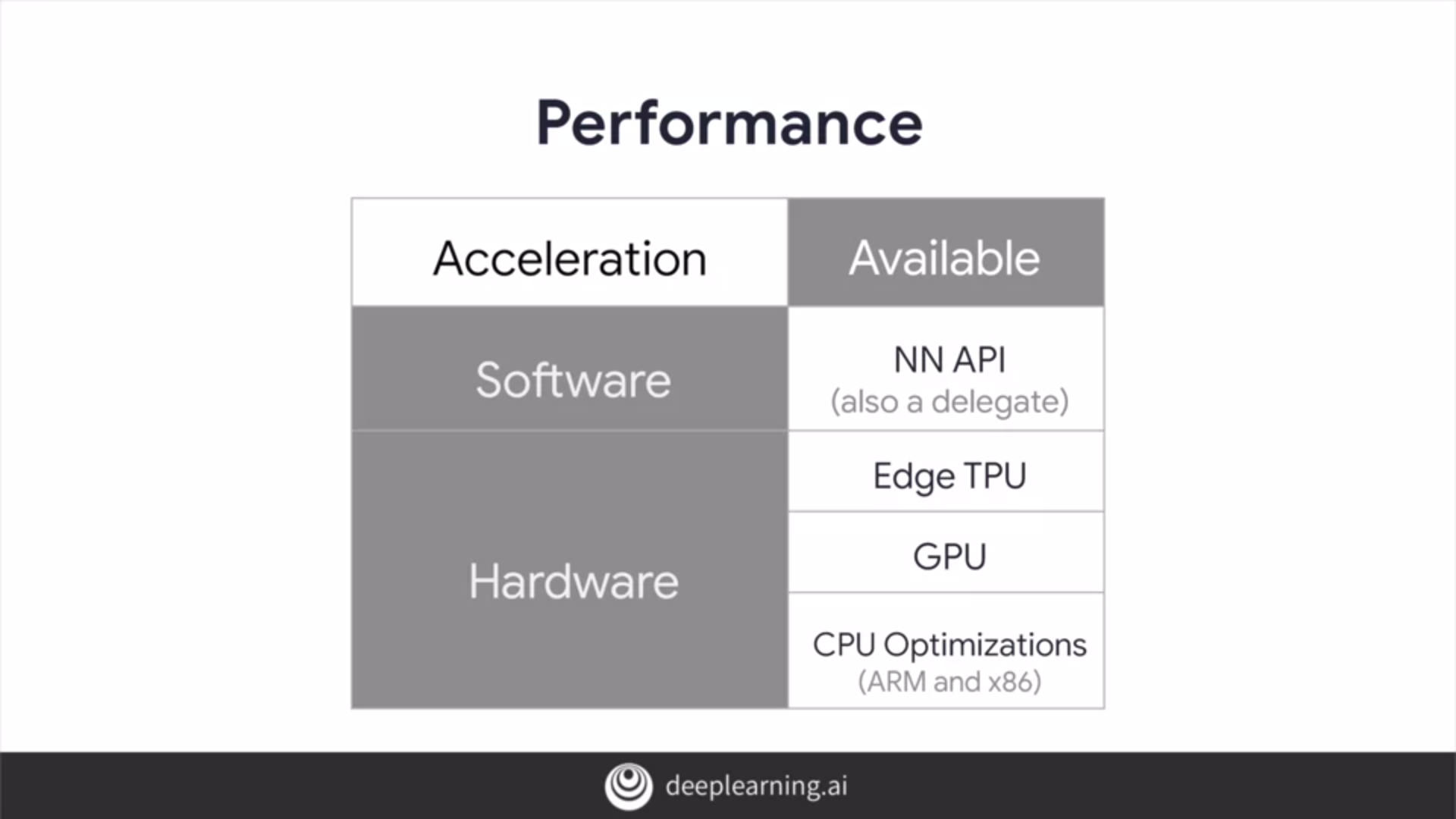

TensorFlow Lite: optimizing models for embedded applications. In this session, we cover performance and model optimization tools, techniques, and best practices that you can use to improve your model's performance and ensure that it runs well within the constraints of embedded devices.

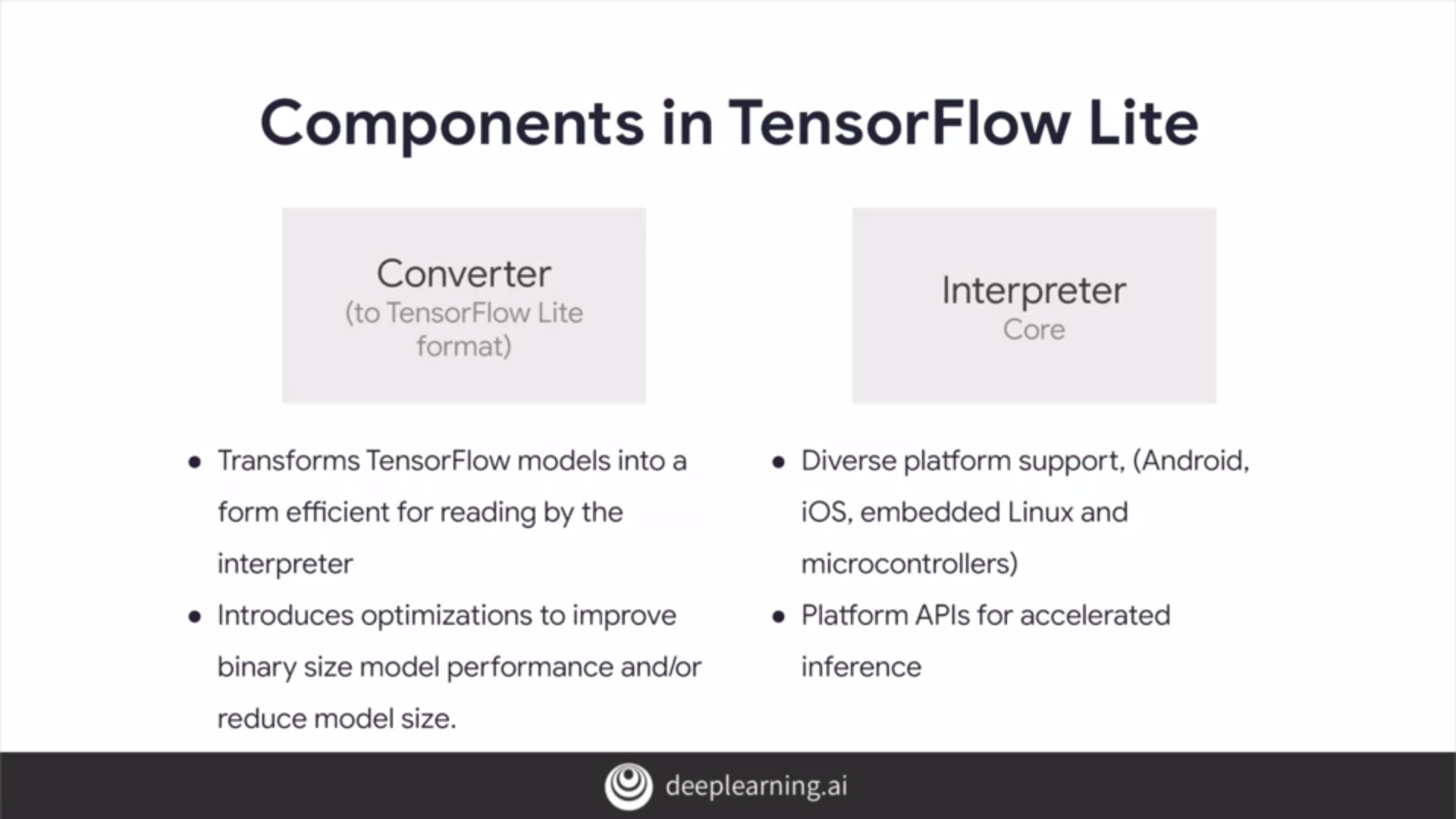

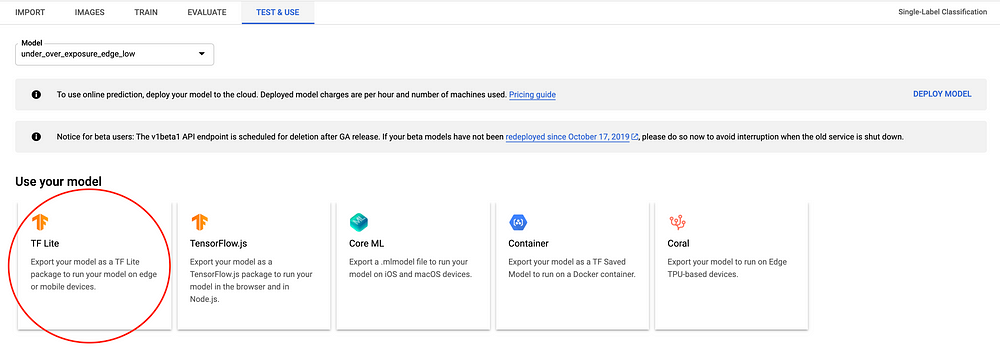

Converting To TensorFlow Lite Models

If you cannot use a pre-trained model for your application, try the TensorFlow Lite post-training quantization tools during TensorFlow Lite conversion; they can optimize a TensorFlow model that you have already trained. See the post-training quantization tutorial to learn more. It is recommended that you consider model optimization during your application development process. This document outlines some best practices for optimizing TensorFlow models for deployment to edge hardware. These optimizations make it easier to deploy TensorFlow models in real-time applications.
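As a rough illustration of post-training quantization during conversion, the sketch below converts the same model twice, once as plain float32 and once with `tf.lite.Optimize.DEFAULT` (dynamic range quantization), and compares the sizes of the resulting flatbuffers. The `TinyDense` module and the `convert` helper are hypothetical stand-ins for "your already trained model" and are not part of the original session; the weights are random, so only the size comparison is meaningful here.

```python
import numpy as np
import tensorflow as tf


class TinyDense(tf.Module):
    """Hypothetical stand-in for an already-trained model (random weights)."""

    def __init__(self):
        super().__init__()
        rng = np.random.default_rng(0)
        # 256 x 256 float32 weights: large enough for the converter to quantize.
        self.w = tf.Variable(rng.standard_normal((256, 256)).astype(np.float32))
        self.b = tf.Variable(np.zeros(256, dtype=np.float32))

    @tf.function(input_signature=[tf.TensorSpec([1, 256], tf.float32)])
    def __call__(self, x):
        return tf.nn.relu(tf.matmul(x, self.w) + self.b)


def convert(model, quantize):
    """Convert `model` to a TFLite flatbuffer, optionally with quantization."""
    fn = model.__call__.get_concrete_function()
    converter = tf.lite.TFLiteConverter.from_concrete_functions([fn], model)
    if quantize:
        # With no representative dataset, DEFAULT applies dynamic range
        # quantization: weights are stored as 8-bit integers.
        converter.optimizations = [tf.lite.Optimize.DEFAULT]
    return converter.convert()


model = TinyDense()
float_bytes = convert(model, quantize=False)
quant_bytes = convert(model, quantize=True)

print("float32 model size (bytes):  ", len(float_bytes))
print("quantized model size (bytes):", len(quant_bytes))
```

In a real project you would write the returned bytes to a `.tflite` file and then check accuracy and latency on the target device, since a smaller file does not by itself guarantee acceptable accuracy.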

2 Device Based Models With TensorFlow Lite Pallavi Ramicetty

A production-ready TensorFlow Lite pipeline converts a TensorFlow model into a mobile-optimized version. The quantized model is reported to be 75% smaller and 3x faster than the original, though the actual gains depend on the model and the target hardware. By systematically applying quantization techniques and carefully measuring the results on target hardware, you can significantly reduce the footprint and increase the speed of your TensorFlow Lite models, making sophisticated machine learning feasible even on the smallest devices.

TensorFlow Lite provides tools to optimize the size and performance of your models, often with minimal impact on accuracy. Optimized models may require slightly more complex training, conversion, or integration. This in-depth article breaks down each optimization method: when to use it, why it matters, and how it works under the hood. It is meant as a guide to TF Lite optimization, including code examples, benefits, drawbacks, and best practices.
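To make the "75% smaller" figure and the accuracy trade-off more concrete, here is a minimal NumPy sketch of 8-bit affine quantization, the general scheme in which a real value is approximated as `scale * (q - zero_point)`. The helper names `quantize_int8` and `dequantize` are hypothetical and not TensorFlow Lite APIs; the converter performs this kind of mapping internally, with more sophisticated range selection than the simple min/max used here.

```python
import numpy as np


def quantize_int8(x):
    """Map a float32 array to int8 using a single scale and zero point."""
    x_min, x_max = float(x.min()), float(x.max())
    # Widen the range to include 0.0 so that zero is exactly representable.
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)
    scale = (x_max - x_min) / 255.0 or 1.0  # fall back to 1.0 for all-zero input
    zero_point = int(round(-128 - x_min / scale))
    q = np.clip(np.round(x / scale) + zero_point, -128, 127).astype(np.int8)
    return q, scale, zero_point


def dequantize(q, scale, zero_point):
    """Recover approximate float32 values from the int8 representation."""
    return (q.astype(np.float32) - zero_point) * scale


rng = np.random.default_rng(0)
weights = rng.standard_normal((256, 256)).astype(np.float32)

q, scale, zero_point = quantize_int8(weights)
restored = dequantize(q, scale, zero_point)

size_reduction = 1.0 - q.nbytes / weights.nbytes  # int8 is 1 byte, float32 is 4
max_error = float(np.max(np.abs(weights - restored)))

print(f"size reduction: {size_reduction:.0%}")
print(f"scale: {scale:.6f}, max abs error: {max_error:.6f}")
```

Storing one byte per value instead of four is where the 75% reduction in weight storage comes from. The rounding error is bounded by roughly half of `scale`, which is why tensors with a narrow value range tend to lose little accuracy.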
