Optimization Of A Variational Sparse Gaussian Process, Animated

GitHub Prkh2607 Sparse Gaussian Process Regression

This video animates the optimization trajectory of the inducing input locations over 1000 epochs. It also shows the resulting posterior predictive distribution alongside that of an exact Gaussian process for comparison. A related line of work, focalized GP, proposes a novel loss function for training a variational sparse GP model. This loss emphasizes fitting local functional landscapes by weighting the training data.
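The comparison between a sparse and an exact GP can be made concrete with a small numerical sketch. The code below is a minimal NumPy illustration, not the repository's code. It assumes the following:

- 1-D inputs.
- A squared-exponential kernel with fixed hyperparameters.
- The predictive mean of the collapsed variational sparse GP of Titsias (2009) with a Gaussian likelihood.
- Hypothetical helper names (`rbf`, `exact_gp_mean`, `sparse_gp_mean`).

When the inducing inputs coincide with the training inputs, the sparse mean reduces to the exact one.

```python
import numpy as np


def rbf(a, b, lengthscale=1.0, variance=1.0):
    """Squared-exponential kernel matrix between 1-D input arrays a and b."""
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / lengthscale) ** 2)


def exact_gp_mean(x, y, xs, noise=0.1):
    """Posterior predictive mean of exact GP regression at test inputs xs."""
    K = rbf(x, x) + noise**2 * np.eye(len(x))
    return rbf(xs, x) @ np.linalg.solve(K, y)


def sparse_gp_mean(x, y, xs, z, noise=0.1, jitter=1e-8):
    """Predictive mean of the collapsed variational sparse GP with inducing inputs z:
    m(xs) = s^-2 K_su (K_uu + s^-2 K_uf K_fu)^-1 K_uf y, where s is the noise std."""
    Kuu = rbf(z, z) + jitter * np.eye(len(z))  # small jitter for numerical stability
    Kuf = rbf(z, x)
    A = Kuu + Kuf @ Kuf.T / noise**2
    return rbf(xs, z) @ np.linalg.solve(A, Kuf @ y) / noise**2


x = np.linspace(0.0, 5.0, 6)
y = np.sin(x)
xs = np.linspace(0.0, 5.0, 11)

# Inducing inputs placed at the training inputs: sparse mean matches the exact mean.
print(np.max(np.abs(sparse_gp_mean(x, y, xs, x) - exact_gp_mean(x, y, xs))))
# Only two inducing inputs: an approximation whose quality depends on where z sits.
print(np.max(np.abs(sparse_gp_mean(x, y, xs, np.array([1.0, 4.0])) - exact_gp_mean(x, y, xs))))
```

With fewer inducing points than training points, the gap to the exact posterior depends on the placement of `z`. That dependence is why the inducing input locations are worth optimizing.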

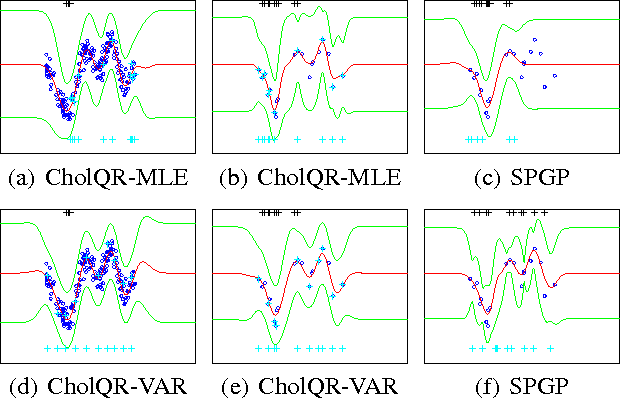

Efficient Optimization For Sparse Gaussian Process Regression

An animation of sparse Gaussian process optimization shows two things at each optimization step: the updated placement of the inducing variables, and the predictions made by the approximate posterior.

The online variational Gaussian process (OLVGP) algorithm introduces a novel approach for dynamically managing the number of inducing points, based on the concept of eigenfunction inducing features.

One repository provides the official implementation of Actually Sparse Variational Gaussian Processes. This is a sparse variational Gaussian process approximation that uses sparse linear algebra to scale low-dimensional Matérn Gaussian processes efficiently to large numbers of datapoints.

For nonparametric policy search with multiple optimal actions, two further algorithms are proposed, both based on a sparse Gaussian process prior and variational Bayesian inference.
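One common objective for optimizing inducing locations is a variational lower bound on the log marginal likelihood, with the inducing inputs treated as free parameters. The NumPy sketch below uses the following assumptions and illustrative choices:

- A Gaussian likelihood.
- A squared-exponential kernel with fixed hyperparameters.
- The collapsed bound of Titsias (2009).
- Hypothetical helper names.
- Finite-difference gradient ascent with step halving, chosen only for illustration. A practical implementation would use automatic differentiation and would also learn the hyperparameters.

Each accepted step is stored in `history`, so the trajectory of `z` could be drawn frame by frame as in the animation.

```python
import numpy as np


def rbf(a, b, lengthscale=1.0, variance=1.0):
    """Squared-exponential kernel matrix between 1-D input arrays a and b."""
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / lengthscale) ** 2)


def gaussian_logpdf(y, C):
    """log N(y | 0, C) for a dense covariance matrix C."""
    _, logdet = np.linalg.slogdet(C)
    return -0.5 * (len(y) * np.log(2 * np.pi) + logdet + y @ np.linalg.solve(C, y))


def exact_log_marginal(x, y, noise=0.1):
    """Exact GP log marginal likelihood log p(y)."""
    return gaussian_logpdf(y, rbf(x, x) + noise**2 * np.eye(len(x)))


def collapsed_elbo(x, y, z, noise=0.1, jitter=1e-6):
    """Collapsed bound: log N(y | 0, Qff + s^2 I) - tr(Kff - Qff) / (2 s^2)."""
    Kuu = rbf(z, z) + jitter * np.eye(len(z))
    Kuf = rbf(z, x)
    Qff = Kuf.T @ np.linalg.solve(Kuu, Kuf)  # Nystrom approximation of Kff
    fit = gaussian_logpdf(y, Qff + noise**2 * np.eye(len(x)))
    trace_penalty = (np.trace(rbf(x, x)) - np.trace(Qff)) / (2 * noise**2)
    return fit - trace_penalty


def optimize_inducing(x, y, z0, steps=30, lr=0.05, eps=1e-5):
    """Gradient ascent on z using central finite differences and step halving.
    Returns the final z, its bound, and the trajectory of accepted z values."""
    z = np.asarray(z0, dtype=float).copy()
    best = collapsed_elbo(x, y, z)
    history = [z.copy()]
    for _ in range(steps):
        grad = np.zeros_like(z)
        for i in range(len(z)):
            dz = np.zeros_like(z)
            dz[i] = eps
            grad[i] = (collapsed_elbo(x, y, z + dz) - collapsed_elbo(x, y, z - dz)) / (2 * eps)
        step = lr
        while step > 1e-8:  # halve the step until the bound improves
            candidate = z + step * grad
            value = collapsed_elbo(x, y, candidate)
            if value > best:  # NaN from a degenerate candidate is rejected here
                z, best = candidate, value
                history.append(z.copy())
                break
            step *= 0.5
    return z, best, history


x = np.linspace(0.0, 5.0, 20)
y = np.sin(2.0 * x)
z0 = np.array([0.5, 1.0, 1.5, 2.0])  # deliberately poor initial placement
z_opt, bound, history = optimize_inducing(x, y, z0)
print("initial bound:", collapsed_elbo(x, y, z0))
print("optimized bound:", bound, "exact log marginal:", exact_log_marginal(x, y))
```

Because only improving steps are accepted, the bound never decreases. It also stays below the exact log marginal likelihood, which it reaches (up to jitter) when the inducing inputs equal the training inputs.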

Scalable Bayesian Optimization With Sparse Gaussian Process Models (DeepAI)

Csató (2002) introduced an online sparse GP algorithm for Gaussian process regression. It uses online updates and a sparse representation to reduce the complexity of GP training.

Related work shows how to efficiently condition stochastic variational Gaussian processes on new data points via closed-form updates to the variational distribution.

Another work proposes a new class of inter-domain variational GP, constructed by projecting a GP onto a set of compactly supported B-spline basis functions.

Variational sparse GP (VSGP) scales GP models to large datasets by summarizing the posterior process with a set of inducing points. An extension to multi-view data models each view with a VSGP and augments it with an additional set of inducing points.
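Closed-form online updates are easiest to see in the conjugate case. With a Gaussian likelihood and fixed inducing inputs and hyperparameters, the optimal q(u) of the collapsed sparse GP depends on the data only through two running sums. New points can therefore be absorbed by adding to those sums. The sketch below assumes exactly that setting, and the class name `StreamingSparseGP` is hypothetical. It is neither Csató's (2002) algorithm nor necessarily the update used in the stochastic variational GP work mentioned above.

```python
import numpy as np


def rbf(a, b, lengthscale=1.0, variance=1.0):
    """Squared-exponential kernel matrix between 1-D input arrays a and b."""
    d = a[:, None] - b[None, :]
    return variance * np.exp(-0.5 * (d / lengthscale) ** 2)


class StreamingSparseGP:
    """Hypothetical helper: optimal Gaussian q(u) = N(m, S) for fixed inducing
    inputs z under a Gaussian likelihood, updated as batches of data arrive."""

    def __init__(self, z, noise=0.1, jitter=1e-8):
        self.z = np.asarray(z, dtype=float)
        self.noise2 = noise**2
        self.Kuu = rbf(self.z, self.z) + jitter * np.eye(len(self.z))
        self.P = np.zeros_like(self.Kuu)  # running sum of K_uf K_fu
        self.b = np.zeros(len(self.z))    # running sum of K_uf y

    def update(self, x_new, y_new):
        """Absorb a batch in O(m^2 * batch_size) time without revisiting old data."""
        Kuf = rbf(self.z, np.asarray(x_new, dtype=float))
        self.P += Kuf @ Kuf.T
        self.b += Kuf @ np.asarray(y_new, dtype=float)

    def q_u(self):
        """m = s^-2 Kuu A^-1 b and S = Kuu A^-1 Kuu, with A = Kuu + s^-2 P."""
        A = self.Kuu + self.P / self.noise2
        m = self.Kuu @ np.linalg.solve(A, self.b) / self.noise2
        S = self.Kuu @ np.linalg.solve(A, self.Kuu)
        return m, S


rng = np.random.default_rng(0)
x = rng.uniform(0.0, 5.0, 40)
y = np.sin(x) + 0.1 * rng.standard_normal(40)
z = np.linspace(0.0, 5.0, 6)

online = StreamingSparseGP(z)
online.update(x[:25], y[:25])  # first batch
online.update(x[25:], y[25:])  # new data arrives later

batch = StreamingSparseGP(z)
batch.update(x, y)             # all data at once

m_online, S_online = online.q_u()
m_batch, S_batch = batch.q_u()
print(np.allclose(m_online, m_batch), np.allclose(S_online, S_batch))
```

Conditioning on new points here costs O(m^2) per point plus an O(m^3) solve, independent of how much data was seen before. Non-Gaussian likelihoods or moving inducing inputs break this simple additive structure.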
