OpenAI Debuts Multimodal GPT-4o (ExtremeTech)

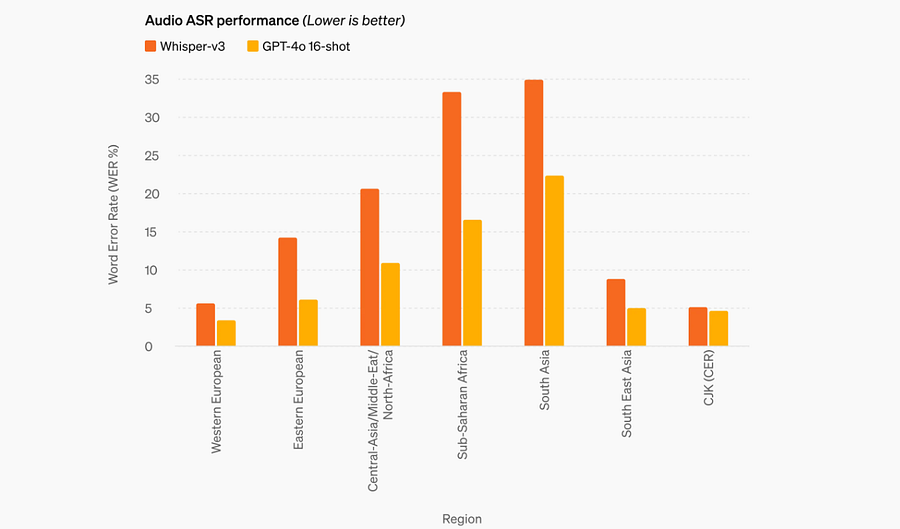

OpenAI's big announcement Monday morning is GPT-4o, a faster, multimodal successor to GPT-4, the model behind its famed ChatGPT. The new model uses existing large language model (LLM) infrastructure to respond. It matches GPT-4 Turbo performance on text in English and code, with significant improvement on text in non-English languages, while also being much faster and 50% cheaper in the API. GPT-4o is notably better at vision and audio understanding than existing models.

OpenAI has introduced GPT-4o, setting a new standard by surpassing previous model limitations. But what makes this model such a big deal? In short: multimodality. Unlike its predecessors, GPT-4o can understand images, audio, and text in the same conversation.

GPT-4o marks a significant advancement in AI technology, enhancing multimodal capabilities. OpenAI has launched several GPT models over the years, with GPT-4o being the latest. This paper provides a concise overview of these models, focusing on their key features and technological advancements.

In this hands-on course you'll use the OpenAI API to leverage the multimodal capabilities of GPT-4o and function calling to extract text from images, conform the data to JSON, and call functions to save the extracted data to a spreadsheet.

Discover OpenAI GPT-4o in 2025: multimodal AI that unites text, images, audio, and video. Learn its features, updates, pricing, and how it compares to Google Gemini.
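The image-to-JSON-to-spreadsheet workflow described for the hands-on course can be sketched roughly as follows. This is a minimal illustration, not the course's own code: the function name `save_receipt_row`, its schema fields (`vendor`, `date`, `total`), and the helpers `build_request` and `save_row` are all hypothetical. The sketch only builds the request payload in the Chat Completions message and tool format and handles a simulated tool call, so it runs without an API key or network access.

```python
import base64
import csv
import io
import json

# Hypothetical tool definition: the name and fields are assumptions for illustration.
SAVE_TOOL = {
    "type": "function",
    "function": {
        "name": "save_receipt_row",
        "description": "Save fields extracted from an image as one spreadsheet row.",
        "parameters": {
            "type": "object",
            "properties": {
                "vendor": {"type": "string"},
                "date": {"type": "string"},
                "total": {"type": "number"},
            },
            "required": ["vendor", "date", "total"],
        },
    },
}


def build_request(image_bytes: bytes, mime: str = "image/png") -> dict:
    """Build keyword arguments for a Chat Completions call that sends one image."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    data_url = f"data:{mime};base64,{encoded}"
    return {
        "model": "gpt-4o",
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "text", "text": "Extract the vendor, date, and total from this image."},
                    {"type": "image_url", "image_url": {"url": data_url}},
                ],
            }
        ],
        "tools": [SAVE_TOOL],
    }


def save_row(arguments_json: str, out) -> dict:
    """Parse a tool call's JSON arguments and append them to `out` as a CSV row."""
    row = json.loads(arguments_json)
    csv.writer(out).writerow([row["vendor"], row["date"], row["total"]])
    return row


if __name__ == "__main__":
    request = build_request(b"fake image bytes")
    # Simulated tool-call arguments; a real response would supply this JSON string.
    sheet = io.StringIO()
    save_row('{"vendor": "Acme", "date": "2024-05-13", "total": 12.5}', sheet)
    print(sheet.getvalue())
```

With the official `openai` Python package, the payload would typically be passed as `client.chat.completions.create(**build_request(...))`, and the arguments string read from the first entry of the returned message's `tool_calls`. A CSV file stands in for the spreadsheet here; writing to a real spreadsheet service would need its own client library.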

GPT-4o is OpenAI's newest multimodal model that can handle text, audio, and image inputs. This model allows for seamless and fluid interactions by integrating these different formats into a single system. On May 13, 2024, OpenAI unveiled GPT-4o, an advanced multimodal artificial intelligence model that marked a significant pivot in the landscape of human-computer interaction. GPT-4o's key innovation is its ability to handle audio and vision as natively as text. It can understand vocal tone, background noise, and multiple speakers, and respond with extremely low latency, enabling much more natural real-time voice conversations.

**OpenAI** has launched the **Deep Research API** featuring powerful models **o3-deep-research** and **o4-mini-deep-research** with native support for MCP, search, and code interpreter, enabling advanced agent capabilities including multi-agent setups. **Google** released **Gemma 3n**, a multimodal model optimized for edge devices with only 3 GB of RAM, achieving a top score of 1300 on LMSYS Arena.
