NVIDIA DeepStream Technical Deep Dive: DeepStream Inference Options

Erin Rapacki on LinkedIn: NVIDIA DeepStream Technical Deep Dive

With native integration with NVIDIA Triton™ Inference Server, you can deploy models in native frameworks such as PyTorch and TensorFlow for inference. For high-throughput inference, use NVIDIA TensorRT to achieve the best possible performance. This video walks through the Triton and TensorRT inference approaches and takes a deep dive into each inference plugin, the batching policies, and custom…

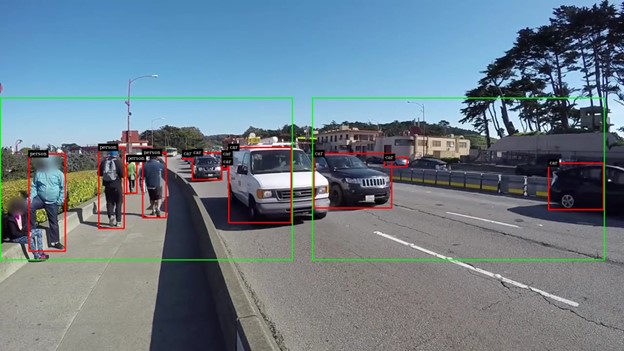

How to Output Inference Information in DeepStream App: DeepStream SDK

NVIDIA DeepStream is a GPU-accelerated streaming analytics SDK built on GStreamer. It provides hardware-optimised plugins for video decode, multi-camera batching, TensorRT inference, object tracking, and cloud messaging, assembled into a single pipeline. This page documents the configuration of DeepStream inference engines, which execute AI models on video frames and generate object metadata. DeepStream provides two inference backends: nvinfer (direct TensorRT API) and nvinferserver (Triton Inference Server backend). Inference can run in TensorRT, NVIDIA's inference accelerator runtime, or in a native framework such as TensorFlow or PyTorch through Triton Inference Server. Learn how to deploy Ultralytics YOLO26 on NVIDIA Jetson devices using TensorRT and the DeepStream SDK. Explore performance benchmarks and maximize AI capabilities.
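As a rough illustration of what configuring the nvinfer backend involves, the sketch below writes a minimal `[property]` group of the kind an nvinfer configuration file contains. The helper name `build_nvinfer_config`, the engine file name, and all values are assumptions chosen for illustration; a real model usually needs further keys (labels, output parsing, and so on).

```python
import configparser
import io


def build_nvinfer_config(engine_path, batch_size=1, num_classes=4):
    """Return the text of a minimal nvinfer-style [property] group.

    Hypothetical helper: the values below are placeholders, not a tuned setup.
    """
    cfg = configparser.ConfigParser()
    cfg.optionxform = str  # keep keys exactly as written
    cfg["property"] = {
        "gpu-id": "0",
        "model-engine-file": engine_path,  # assumed path to a TensorRT engine
        "batch-size": str(batch_size),
        "network-mode": "2",  # 0 = FP32, 1 = INT8, 2 = FP16
        "num-detected-classes": str(num_classes),
        "gie-unique-id": "1",
        "process-mode": "1",  # 1 = primary (full frame), 2 = secondary (objects)
    }
    buf = io.StringIO()
    cfg.write(buf, space_around_delimiters=False)
    return buf.getvalue()


if __name__ == "__main__":
    print(build_nvinfer_config("model_b1_gpu0_fp16.engine"))
```

Generating the file from code is only a convenience here; the same keys can be written by hand in a text file and passed to the plugin through its `config-file-path` property.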

Applying Inference over Specific Frame Regions with NVIDIA DeepStream

DeepStream is an SDK for building real-time computer vision applications on NVIDIA GPUs and Jetson devices. It's a framework for constructing GStreamer pipelines that can process video streams like a… Deepstreamtutorials contains a series of Python apps and notebooks that explore how to run inference using the NVIDIA DeepStream SDK. I use this repository to learn how to create GStreamer pipelines that include TensorRT models. Explore DeepStream reference designs, learn implementation strategies, and discover best practices for advanced video analytics and AI-powered solutions in this comprehensive developer resource. Learn the best practices of using #nvidiadeepstream SDK for inference combined with Triton & TensorRT. Watch the technical deep dive video now.
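To make the idea of a GStreamer pipeline with an inference stage concrete, here is a minimal sketch that assembles a `gst-launch-1.0` style pipeline description and switches between the two backends, nvinfer and nvinferserver. The function name `build_pipeline`, the URI, the config paths, and the resolution are illustrative assumptions; the string is only built here, not executed, and a real application may need a different decode or sink section.

```python
def build_pipeline(uri, config_path, backend="nvinfer",
                   batch_size=1, width=1280, height=720):
    """Build a gst-launch style description with one inference element.

    Hypothetical helper: element order follows the common
    decode -> nvstreammux -> inference -> OSD layout.
    """
    if backend not in ("nvinfer", "nvinferserver"):
        raise ValueError(f"unknown backend: {backend}")
    return (
        # Decode the source and feed it to the first sink pad of the muxer.
        f"uridecodebin uri={uri} ! mux.sink_0 "
        # nvstreammux batches frames from one or more sources.
        f"nvstreammux name=mux batch-size={batch_size} "
        f"width={width} height={height} ! "
        # nvinfer uses TensorRT directly; nvinferserver goes through Triton.
        f"{backend} config-file-path={config_path} ! "
        # Convert, draw the detections, and discard the output.
        "nvvideoconvert ! nvdsosd ! fakesink"
    )


if __name__ == "__main__":
    # Placeholder URI and config names, assumed for illustration only.
    print(build_pipeline("file:///tmp/sample.mp4", "pgie_config.txt"))
    print(build_pipeline("file:///tmp/sample.mp4", "triton_config.txt",
                         backend="nvinferserver"))
```

The resulting string could be passed to `gst-launch-1.0` or to `Gst.parse_launch` on a machine with DeepStream installed; only the inference element and its configuration file change between the TensorRT and Triton paths.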
