Newton's Method Optimization Notes

Newton's method was originally a root-finding method for nonlinear equations, but in combination with optimality conditions it becomes the workhorse of many optimization algorithms. It holds a significant place among these algorithms because of its efficiency in finding the roots of equations and optimizing functions. These notes look at Newton's method and its use in machine learning.
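To make the link between root finding and optimization concrete, here is a minimal one-dimensional sketch. It assumes the function is twice differentiable and that its derivatives are supplied by hand. The helper name `newton_root` and the test function f(x) = x^4 - 3x^3 + 2 are illustrative choices, not taken from the sources quoted here. The same routine that solves g(x) = 0 finds a stationary point of f when it is applied to g = f'.

```python
def newton_root(g, dg, x0, tol=1e-12, max_iter=50):
    """Solve g(x) = 0 with Newton's iteration x <- x - g(x) / dg(x).

    Hypothetical helper for illustration; dg is the derivative of g.
    """
    x = x0
    for _ in range(max_iter):
        step = g(x) / dg(x)
        x -= step
        if abs(step) < tol:  # stop once the update is negligible
            break
    return x


# Root finding: solve x^2 - 2 = 0, starting from x0 = 1.
sqrt2 = newton_root(lambda x: x * x - 2.0, lambda x: 2.0 * x, x0=1.0)

# Optimization: minimize f(x) = x^4 - 3x^3 + 2 by solving f'(x) = 0.
# Here g = f' and dg = f'', so the update is x <- x - f'(x) / f''(x).
df = lambda x: 4.0 * x**3 - 9.0 * x**2
d2f = lambda x: 12.0 * x**2 - 18.0 * x
x_min = newton_root(df, d2f, x0=3.0)

print(sqrt2, x_min)
```

The stationary point found this way is a minimum only where f'' is positive. Started from a different point, the same iteration could just as well converge to a maximum or a saddle.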

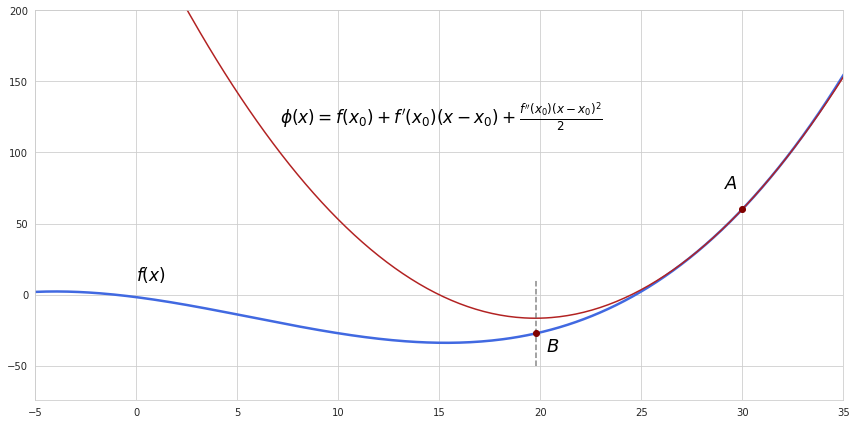

(a) Using a calculator (or a computer, if you wish), compute five iterations of Newton's method starting at each of the following points, and record your answers:

Newton's method for optimization is a powerful technique used in machine learning, engineering, and applied mathematics. The key topics are second-order derivatives, the Hessian matrix, convergence, and applications to optimization problems.

Lecture 28, Multi-Dimensional Optimization: Newton-Based Algorithms. 28.1 Lecture objectives: understand the fundamentals of Newton's method; understand the major limitations of Newton's method, which lead to both quasi-Newton methods and the Levenberg-Marquardt modification.

Newton's method in optimization: a comparison of gradient descent (green) and Newton's method (red) for minimizing a function (with small step sizes). Newton's method uses curvature information (i.e. the second derivative) to take a more direct route.
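The effect of curvature information can be seen on a small example. The sketch below assumes a convex quadratic in two variables, f(x, y) = 1.5x^2 + xy + y^2 - x - y, whose minimizer is (0.2, 0.4). The function, the starting point, and the step size of 0.1 are illustrative assumptions. On a quadratic the Hessian is constant, so a single full Newton step lands on the minimizer, while fixed-step gradient descent needs many iterations.

```python
import math


def grad(p):
    # Gradient of f(x, y) = 1.5*x**2 + x*y + y**2 - x - y
    x, y = p
    return (3.0 * x + y - 1.0, x + 2.0 * y - 1.0)


# The Hessian of this quadratic is constant.
H = ((3.0, 1.0), (1.0, 2.0))


def solve_2x2(A, g):
    # Solve A d = g for a 2x2 matrix with Cramer's rule.
    (a, b), (c, d) = A
    det = a * d - b * c
    return ((d * g[0] - b * g[1]) / det, (a * g[1] - c * g[0]) / det)


def gradient_descent(p, lr=0.1, tol=1e-8):
    iters = 0
    g = grad(p)
    while math.hypot(g[0], g[1]) > tol:
        p = (p[0] - lr * g[0], p[1] - lr * g[1])  # step along -gradient
        g = grad(p)
        iters += 1
    return p, iters


def newton(p, tol=1e-8):
    iters = 0
    g = grad(p)
    while math.hypot(g[0], g[1]) > tol:
        d = solve_2x2(H, g)  # Newton direction: solve H d = g
        p = (p[0] - d[0], p[1] - d[1])
        g = grad(p)
        iters += 1
    return p, iters


p_gd, gd_iters = gradient_descent((2.0, 2.0))
p_newton, newton_iters = newton((2.0, 2.0))
print("gradient descent:", p_gd, gd_iters)
print("Newton:", p_newton, newton_iters)
```

On non-quadratic functions Newton's method no longer finishes in one step, but near a minimum with a positive definite Hessian it typically still needs far fewer iterations than gradient descent, at the cost of forming and solving with the Hessian.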

From Example 4.7.3, we see that Newton's method does not always work. However, when it does work, the sequence of approximations approaches the root very quickly.

Newton's method is a powerful optimization algorithm that uses both the gradient and the Hessian (the matrix of second derivatives) of a function to find its local minima or maxima. Implementing it for optimization problems involves both the necessary mathematical derivations and some practical considerations.

How can Newton's method be employed when the Hessian is not always positive definite? The simplest approach is to construct a hybrid method that takes a Newton step at iterations in which the Hessian is positive definite and a gradient step at iterations in which it is not.
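The hybrid idea can be sketched as follows. This is a minimal illustration under stated assumptions, not a reference implementation: the test function f(x, y) = x^4/4 - x^2/2 + y^2/2 (minimizers at (1, 0) and (-1, 0), indefinite Hessian near x = 0), the fixed gradient step size `lr`, and all helper names are hypothetical. Positive definiteness of the symmetric 2x2 Hessian is checked with Sylvester's criterion.

```python
import math


def grad(p):
    # Gradient of f(x, y) = x**4/4 - x**2/2 + y**2/2
    x, y = p
    return (x**3 - x, y)


def hess(p):
    # Hessian of f; indefinite whenever 3*x**2 < 1
    x, _ = p
    return ((3.0 * x**2 - 1.0, 0.0), (0.0, 1.0))


def is_positive_definite(H):
    # Sylvester's criterion for a symmetric 2x2 matrix:
    # both leading principal minors must be positive.
    (a, b), (_, d) = H
    return a > 0.0 and a * d - b * b > 0.0


def solve_2x2(H, g):
    # Solve H d = g with Cramer's rule.
    (a, b), (c, d) = H
    det = a * d - b * c
    return ((d * g[0] - b * g[1]) / det, (a * g[1] - c * g[0]) / det)


def hybrid_newton(p0, lr=0.1, tol=1e-10, max_iter=500):
    p = p0
    newton_steps = gradient_steps = 0
    for _ in range(max_iter):
        g = grad(p)
        if math.hypot(g[0], g[1]) < tol:
            break
        H = hess(p)
        if is_positive_definite(H):
            d = solve_2x2(H, g)  # full Newton step
            newton_steps += 1
        else:
            d = (lr * g[0], lr * g[1])  # fall back to a gradient step
            gradient_steps += 1
        p = (p[0] - d[0], p[1] - d[1])
    return p, newton_steps, gradient_steps


# Start where the Hessian is indefinite (3 * 0.2**2 < 1).
p_min, n_newton, n_grad = hybrid_newton((0.2, 1.0))
print(p_min, n_newton, n_grad)
```

From this starting point the iteration first takes gradient steps until the Hessian becomes positive definite, then switches to Newton steps. The first Newton step can be very long when the Hessian is only barely positive definite, which is one reason practical variants add a line search or a Levenberg-Marquardt style modification.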
