Newton's Method for Optimization: A Comprehensive Guide

Newton's method for optimization is a technique used in machine learning, engineering, and applied mathematics. This article covers second-order derivatives, the Hessian matrix, convergence, and applications of the method to optimization problems. Among optimization algorithms, Newton's method holds a significant place because of its efficiency in finding the roots of equations and optimizing functions. Here we study Newton's method and its use in machine learning.

Implementing Newton's method for optimization problems requires both the underlying mathematical derivations and some practical considerations. Newton's method is originally a root-finding method for nonlinear equations, but in combination with optimality conditions it becomes the workhorse of many optimization algorithms.

How can Newton's method be employed when the Hessian is not always positive definite? The simplest approach is to construct a hybrid method that takes a Newton step at iterations in which the Hessian is positive definite and a gradient step when it is not.

(a) Using a calculator (or a computer, if you wish), compute five iterations of Newton's method starting at each of the following points, and record your answers:
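As for the hybrid strategy described above, a minimal sketch may help make it concrete. The function name `hybrid_newton`, the fixed gradient step size `step`, and the test function are all assumptions chosen for illustration; they do not come from the original text. Positive definiteness is checked here by attempting a Cholesky factorization, which is one common way to do it.

```python
import numpy as np

def hybrid_newton(grad, hess, x0, step=0.1, tol=1e-8, max_iter=100):
    """Hypothetical helper: Newton step if the Hessian is positive definite,
    otherwise a fixed-size gradient step."""
    x = np.asarray(x0, dtype=float)
    for _ in range(max_iter):
        g = grad(x)
        if np.linalg.norm(g) < tol:
            break
        H = hess(x)
        try:
            # Cholesky succeeds only if H is (numerically) positive definite.
            np.linalg.cholesky(H)
            d = np.linalg.solve(H, -g)   # Newton step
        except np.linalg.LinAlgError:
            d = -step * g                # gradient step
        x = x + d
    return x

# Illustrative test function (an assumption, not from the text):
# f(x, y) = x^4/4 - x^2/2 + y^2/2, whose Hessian is indefinite near x = 0.
def grad(x):
    return np.array([x[0] ** 3 - x[0], x[1]])

def hess(x):
    return np.array([[3.0 * x[0] ** 2 - 1.0, 0.0],
                     [0.0, 1.0]])

x_min = hybrid_newton(grad, hess, [0.2, 1.0])
print(x_min)
```

Starting from a point where the Hessian is indefinite, the sketch takes gradient steps until the Hessian becomes positive definite and then switches to Newton steps. A Newton step taken where the Hessian is only barely positive definite can be very long, which is why practical implementations usually add a line search or trust region.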

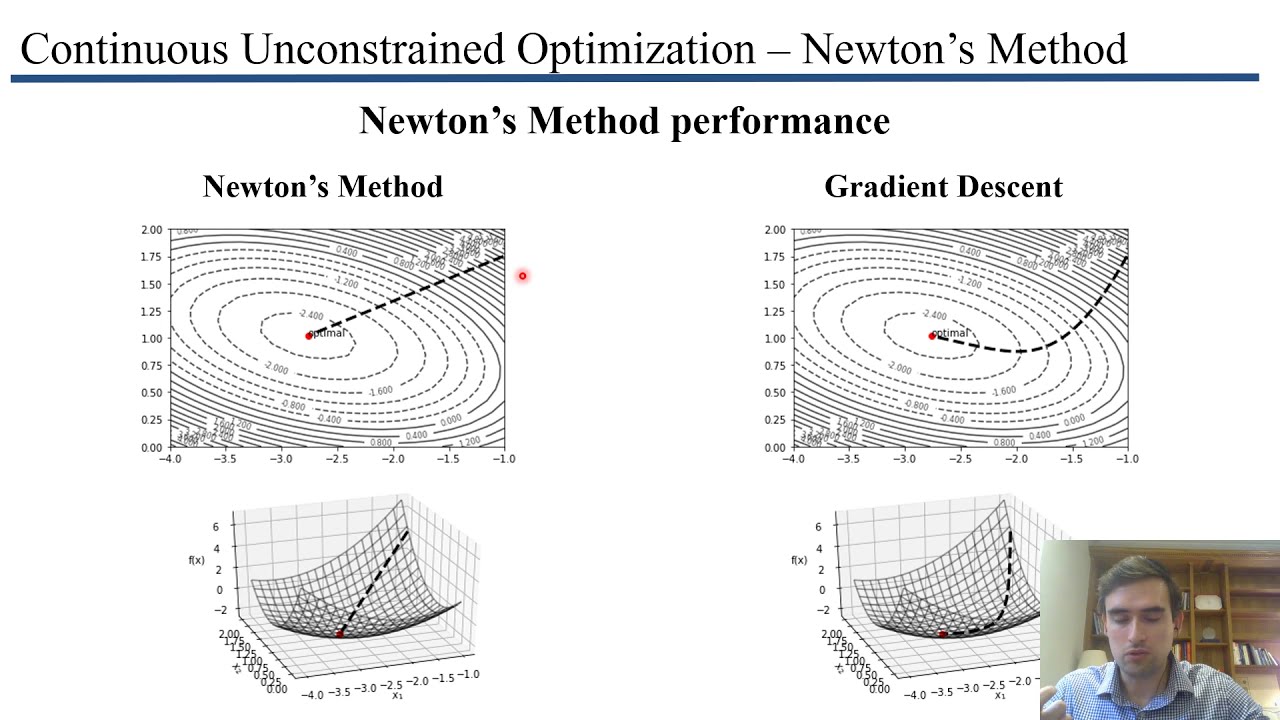

In calculus, Newton's method (also called Newton–Raphson) is an iterative method for finding the roots of a differentiable function $f$, which are solutions to the equation $f(x) = 0$. Newton's method for unconstrained optimization uses both gradient and Hessian information to find minima or maxima of twice-differentiable functions.

Important note: when we implement Newton's method numerically, we do not explicitly compute $(\nabla^2 f(x))^{-1}$. Instead, we solve the linear system $\nabla^2 f(x_k)\, d_k = -\nabla f(x_k)$ for the step $d_k$ and set $x_{k+1} = x_k + d_k$.

How is Newton's method different from the golden section search method? The golden section search method requires explicitly indicating lower and upper boundaries for the search region in which the optimal solution lies. Newton's method needs only an initial guess, but it also requires first and second derivatives of the function.
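The note about solving a linear system instead of inverting the Hessian can be sketched as follows. The function name `newton_minimize` and the convex test function $f(x, y) = e^{x+y} + x^2 + y^2$ are assumptions made for this illustration; the sketch uses a pure Newton step with no line search.

```python
import numpy as np

def newton_minimize(grad, hess, x0, tol=1e-10, max_iter=50):
    """Hypothetical helper: pure Newton iteration for unconstrained minimization."""
    x = np.asarray(x0, dtype=float)
    for _ in range(max_iter):
        g = grad(x)
        if np.linalg.norm(g) < tol:
            break
        # Solve H d = -g for the step d rather than forming the inverse of H.
        d = np.linalg.solve(hess(x), -g)
        x = x + d
    return x

# Illustrative strictly convex function: f(x, y) = exp(x + y) + x^2 + y^2
def grad(x):
    e = np.exp(x[0] + x[1])
    return np.array([e + 2.0 * x[0], e + 2.0 * x[1]])

def hess(x):
    e = np.exp(x[0] + x[1])
    return np.array([[e + 2.0, e],
                     [e, e + 2.0]])

x_star = newton_minimize(grad, hess, [1.0, 1.0])
print(x_star, np.linalg.norm(grad(x_star)))
```

Solving the system with `np.linalg.solve` is generally cheaper and numerically more stable than computing the inverse and multiplying by it.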

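To illustrate the contrast with golden section search, here is a small one-dimensional sketch. The function names `golden_section_search` and `newton_1d`, the tolerances, and the test function $f(x) = e^x - 2x$ (minimized at $x = \ln 2$) are assumptions chosen for the example. Golden section search is given a bracketing interval and uses only function values; the Newton iteration is given a single starting point and uses the first and second derivatives.

```python
import math

def golden_section_search(f, a, b, tol=1e-6):
    """Shrink the bracket [a, b] around the minimum of a unimodal f."""
    inv_phi = (math.sqrt(5) - 1) / 2  # about 0.618
    while abs(b - a) > tol:
        c = b - inv_phi * (b - a)
        d = a + inv_phi * (b - a)
        if f(c) < f(d):
            b = d   # minimum lies in [a, d]
        else:
            a = c   # minimum lies in [c, b]
    return (a + b) / 2

def newton_1d(df, d2f, x0, tol=1e-12, max_iter=50):
    """Newton iteration on f'(x) = 0, starting from a single guess x0."""
    x = x0
    for _ in range(max_iter):
        step = df(x) / d2f(x)
        x -= step
        if abs(step) < tol:
            break
    return x

f = lambda x: math.exp(x) - 2 * x
df = lambda x: math.exp(x) - 2
d2f = lambda x: math.exp(x)

x_gs = golden_section_search(f, 0.0, 2.0)   # needs lower and upper bounds
x_newton = newton_1d(df, d2f, 0.0)          # needs a starting point and derivatives
print(x_gs, x_newton, math.log(2))
```

For clarity, this sketch re-evaluates both interior points at every iteration; a more economical version would reuse one function value per iteration.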
