Neural Tangent Kernels

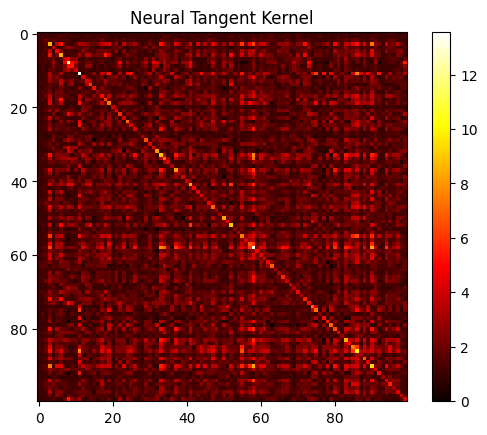

In the study of artificial neural networks (ANNs), the neural tangent kernel (NTK) is a kernel that describes the evolution of deep artificial neural networks during their training by gradient descent. This kernel is central to describing the generalization properties of ANNs. While the NTK is random at initialization and varies during training, in the infinite-width limit it converges to an explicit limiting kernel and stays constant during training.
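To make the convergence claim concrete, here is a minimal sketch that compares the empirical NTK of a randomly initialized network with its infinite-width limit. It rests on several assumptions that are not in the text above: a two-layer ReLU network without biases, f(x) = (1/sqrt(m)) * sum_j a_j * relu(w_j . x), with standard normal initialization, for which the limiting kernel has a known closed form (the arc-cosine expressions). The function names `empirical_ntk`, `limiting_ntk` and `ntk_gap` are illustrative only.

```python
import numpy as np

def empirical_ntk(X, W, a):
    """Inner products of parameter gradients for the assumed two-layer ReLU net."""
    m = W.shape[0]
    pre = X @ W.T                      # pre-activations, shape (n, m)
    act = np.maximum(pre, 0.0)         # relu(w_j . x)
    gate = (pre > 0).astype(float)     # relu'(w_j . x)
    # Gradients w.r.t. the output weights a_j contribute act . act / m.
    k_a = act @ act.T / m
    # Gradients w.r.t. the hidden weights w_j contribute
    # (x . x') * sum_j a_j^2 * gate_j(x) * gate_j(x') / m.
    k_w = (X @ X.T) * ((gate * a**2) @ gate.T) / m
    return k_a + k_w

def limiting_ntk(X):
    """Closed-form infinite-width NTK for the same architecture and init."""
    norms = np.linalg.norm(X, axis=1)
    cos = np.clip((X @ X.T) / np.outer(norms, norms), -1.0, 1.0)
    theta = np.arccos(cos)
    k_a = np.outer(norms, norms) * (np.sin(theta) + (np.pi - theta) * cos) / (2 * np.pi)
    k_w = (X @ X.T) * (np.pi - theta) / (2 * np.pi)
    return k_a + k_w

def ntk_gap(width, X, seed=0):
    """Largest entrywise gap between the empirical and limiting kernels."""
    rng = np.random.default_rng(seed)
    W = rng.standard_normal((width, X.shape[1]))
    a = rng.standard_normal(width)
    return np.max(np.abs(empirical_ntk(X, W, a) - limiting_ntk(X)))

rng = np.random.default_rng(0)
X = rng.standard_normal((5, 3))        # five toy inputs in three dimensions
gaps = {width: ntk_gap(width, X) for width in (10, 1000, 100000)}
for width, gap in gaps.items():
    print(width, gap)
```

Under these assumptions the printed gap should shrink as the width grows, which is the sense in which the random kernel at initialization approaches a deterministic limit. The sketch only looks at initialization; it does not demonstrate that the kernel stays constant during training.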

There are, however, problems that deep learning can solve provably faster than any kernel method. Essentially, the NTK regime doesn't allow for feature learning, since the kernel is fixed.

Neural Tangents allows you to construct a neural network model from common building blocks like convolutions, pooling, residual connections, nonlinearities, and more, and obtain not only the finite model but also the kernel function of the respective Gaussian process (GP).

Here, we go beyond initialisation and look at the neural tangent kernel (NTK) regime, also known as the linear, kernel or "lazy" regime for reasons that will become clear below. The NTK goes one step beyond the neural network Gaussian process (NNGP) result by examining the training dynamics of infinitely wide networks. The NTK is a kernel that describes how a neural network evolves during training, and there has been a lot of research around it in recent years.
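The fixed-kernel ("lazy") regime can be illustrated with a small sketch. Assuming gradient flow on a squared loss with a constant kernel matrix K over the training points, the network outputs obey df/dt = -K (f - y), which has the closed-form solution f_t = y + exp(-K t)(f_0 - y). The code below checks that formula against a simple Euler simulation. The matrix `K` here is a random positive-definite stand-in, not an actual NTK, and all names are hypothetical.

```python
import numpy as np

def closed_form_outputs(K, f0, y, t):
    """Exact solution of df/dt = -K (f - y) for a constant symmetric PSD matrix K."""
    evals, evecs = np.linalg.eigh(K)
    # exp(-K t) via the eigendecomposition K = V diag(evals) V^T.
    decay = (evecs * np.exp(-evals * t)) @ evecs.T
    return y + decay @ (f0 - y)

def euler_outputs(K, f0, y, t, steps=10_000):
    """Small-step gradient descent in function space, for comparison."""
    f = f0.copy()
    dt = t / steps
    for _ in range(steps):
        f = f - dt * (K @ (f - y))
    return f

rng = np.random.default_rng(0)
features = rng.standard_normal((6, 50))
K = features @ features.T / features.shape[1]   # stand-in for a fixed kernel Gram matrix
y = rng.standard_normal(6)                      # training targets
f0 = rng.standard_normal(6)                     # network outputs at initialization

for t in (0.0, 1.0, 5.0, 50.0):
    f_t = closed_form_outputs(K, f0, y, t)
    print(t, np.linalg.norm(f_t - y))           # training residual at time t
```

Because K never changes, each eigen-direction of the residual decays independently at a rate set by its eigenvalue. This is the linear behaviour that gives the regime its name, and it is also why no feature learning occurs in it.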

A short history of the neural tangent kernel: deep learning fits a parameterized network via gradient-based optimization. This has been phenomenally successful in practice but leads to a number of theoretical challenges. The first is that neural networks have a highly nonlinear parameterization, which leads to ...

This is my attempt at distilling the ideas behind the neural tangent kernel, which is making waves in recent theoretical deep learning research. Neural tangent kernels (NTKs) bridge deep learning and kernel methods, allowing us to study neural networks through the lens of kernel methods. You might be wondering why this connection even matters.

Neural Tangents (NT) is a set of tools for constructing and training infinitely wide neural networks (a.k.a. NTK, NNGP).
