Neural Networks Chapter 1 Machine Learning Intro Gradient Descent

Chapter 1 Introduction To Neural Network And Machine Learning Pdf

This is the first lecture in our neural networks series, designed for beginners who want to build a strong foundation in AI and ML. It covers the role of gradient descent in optimizing ML models. We can view neural networks from several different perspectives. View 1: an application of stochastic gradient descent for classification and regression with a potentially very rich hypothesis class.

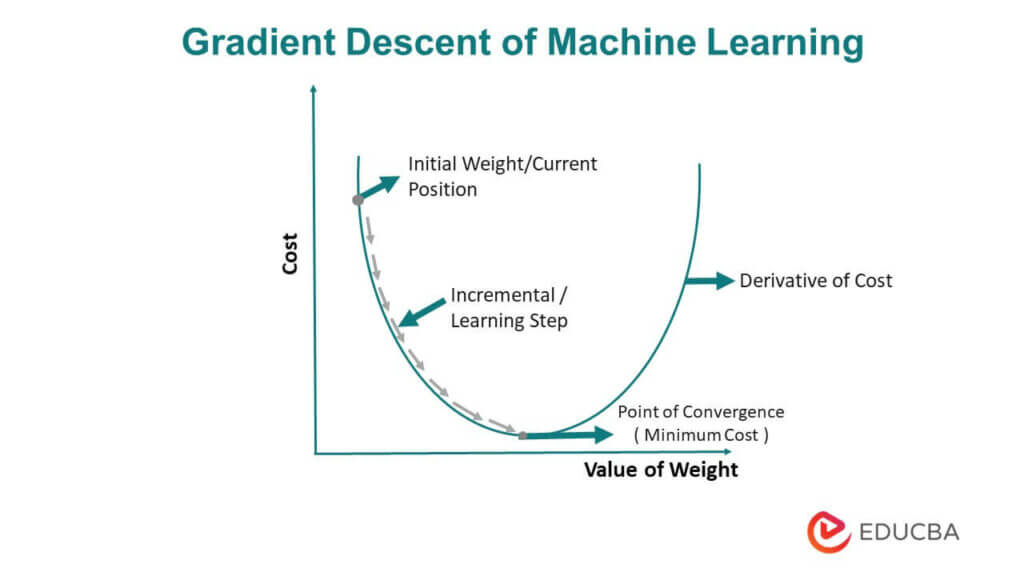

Machine Learning Course 5 Partial Derivative Gradient Descent

In the gradient descent algorithm, we update both coefficients, w and b, simultaneously. Each update must be computed from the old values of both coefficients; the new values are assigned only after both have been calculated.

In practice, stochastic gradient descent is a commonly used and powerful technique for learning in neural networks, and it's the basis for most of the learning techniques we'll develop in this book.

Gradient descent is an optimisation algorithm used to reduce the error of a machine learning model. It works by repeatedly adjusting the model's parameters in the direction in which the error decreases the most, which helps the model learn better and make more accurate predictions.

Gradient descent is the optimization method that trains neural networks by updating all model coefficients simultaneously. The core idea rests on smooth, differentiable functions: neural network operations (like addition and dot products) are smooth, which means a small change in input leads to a small, predictable change in the output.
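To make the simultaneous-update rule concrete, here is a minimal Python sketch. The loss `(w + 2*b - 4)**2`, the learning rate, and the function names are assumptions chosen for illustration and do not come from the course itself. The sequential variant is included only to show how the result changes when the old value of w is overwritten too early.

```python
def gradients(w, b):
    """Partial derivatives of the illustrative loss (w + 2*b - 4)**2."""
    residual = w + 2 * b - 4
    return 2 * residual, 4 * residual  # dL/dw, dL/db


def simultaneous_step(w, b, lr):
    # Both gradients are evaluated at the OLD (w, b) before either is changed.
    grad_w, grad_b = gradients(w, b)
    return w - lr * grad_w, b - lr * grad_b


def sequential_step(w, b, lr):
    # Shown only for contrast: b's gradient is evaluated after w has already moved.
    grad_w, _ = gradients(w, b)
    w = w - lr * grad_w
    _, grad_b = gradients(w, b)
    return w, b - lr * grad_b


w_sim, b_sim = simultaneous_step(0.0, 0.0, lr=0.1)
w_seq, b_seq = sequential_step(0.0, 0.0, lr=0.1)
print("simultaneous:", w_sim, b_sim)
print("sequential:  ", w_seq, b_seq)
```

Starting from the same point, the two variants agree on w but disagree on b, which is why the rule insists on computing every update from the old values first.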

Gradient Descent In Machine Learning Optimized Algorithm

Gradient descent, how neural networks learn: an overview of gradient descent in the context of neural networks. This is a method used widely throughout machine learning for optimizing how a computer performs on certain tasks. Let's go through a simple example to demonstrate how gradient descent works, particularly for minimizing the mean squared error (MSE) in a linear regression problem.
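The source does not include the linear regression example itself, so the following is one possible sketch of it in plain Python. The toy data (points on the line y = 2x + 1), the learning rate, the step count, and all function names are assumptions made for illustration.

```python
def mse(w, b, xs, ys):
    """Mean squared error of the line y = w*x + b on the data."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def mse_gradients(w, b, xs, ys):
    """Partial derivatives of the MSE with respect to w and b."""
    n = len(xs)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    return grad_w, grad_b


def fit(xs, ys, lr=0.05, steps=2000):
    """Batch gradient descent starting from w = b = 0."""
    w, b = 0.0, 0.0
    for _ in range(steps):
        grad_w, grad_b = mse_gradients(w, b, xs, ys)
        # Tuple assignment keeps the update simultaneous for w and b.
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b


# Hypothetical toy data generated from y = 2x + 1 (no noise).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

w, b = fit(xs, ys)
print("w =", w, "b =", b, "mse =", mse(w, b, xs, ys))
```

With this data and step size, the fitted values approach the slope 2 and intercept 1 used to generate the points. A larger learning rate can make the same loop diverge, so the value here should be treated as a tuning choice rather than a recommendation.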

What Is Gradient Descent In Machine Learning Aitude

Recall the goal: train a neural network to minimize an objective function, that is, learn the model parameters (e.g., weights and biases) that minimize an objective loss function using gradient descent. You'll implement forward propagation in neural networks through two simple predict functions. The goal here is to bridge the gap between regression models and neural networks, and to fully understand how forward propagation works.
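The two predict functions are not shown in the source, so the sketch below is only a guess at their shape: one for a linear regression model and one for a network with a single hidden layer that reuses the first. The ReLU activation, the layer sizes, the weights, and every name are hypothetical.

```python
def relu(z):
    """Rectified linear unit, used here as the hidden-layer activation."""
    return max(0.0, z)


def predict_linear(x, weights, bias):
    """Regression-style prediction: dot product of inputs and weights, plus bias."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def predict_network(x, hidden_weights, hidden_biases, out_weights, out_bias):
    """Forward propagation through one hidden layer, then a linear output unit."""
    # Each hidden unit is a linear predictor followed by a nonlinearity.
    hidden = [
        relu(predict_linear(x, w, b))
        for w, b in zip(hidden_weights, hidden_biases)
    ]
    # The output unit is again a linear predictor, applied to the hidden values.
    return predict_linear(hidden, out_weights, out_bias)


# Hypothetical parameters: 2 inputs, 2 hidden units, 1 output.
x = [1.0, 2.0]
hidden_weights = [[1.0, -1.0], [0.5, 0.5]]
hidden_biases = [0.0, 1.0]
out_weights = [2.0, 1.0]
out_bias = -0.5

linear_out = predict_linear(x, hidden_weights[0], hidden_biases[0])
network_out = predict_network(x, hidden_weights, hidden_biases, out_weights, out_bias)
print(linear_out, network_out)
```

Writing the network in terms of `predict_linear` is a design choice meant to show the bridge between the two models: the network is a stack of regression-style units with an activation in between.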
