Whitespace Tokenizer for Natural Language Processing (NLP) in Python

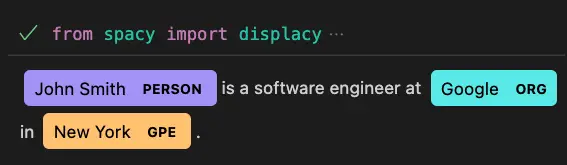

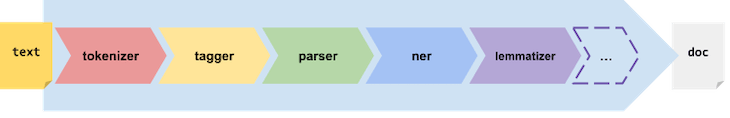

Tokenization is a fundamental preprocessing step in nearly every NLP architecture. From classical machine learning to deep learning algorithms, models tokenize text by breaking it into words, characters, or word sequences (n-grams). spaCy is a Python library used to process and analyze text efficiently for natural language processing tasks. It provides ready-to-use models and tools for working with linguistic data.
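The three granularities mentioned above (words, characters, and n-grams) can be sketched with the standard library alone. This is a minimal illustration, not how spaCy or any particular model tokenizes; the helper names `word_tokens`, `char_tokens`, and `ngrams` are hypothetical and chosen only for this example.

```python
def word_tokens(text):
    """Split text into word tokens on runs of whitespace."""
    return text.split()


def char_tokens(text):
    """Split text into single-character tokens, skipping whitespace."""
    return [ch for ch in text if not ch.isspace()]


def ngrams(tokens, n=2):
    """Return all contiguous n-token sequences as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


sentence = "tokenization is a preprocessing step"
words = word_tokens(sentence)

print(words)             # word-level tokens
print(char_tokens("n l p"))  # character-level tokens
print(ngrams(words, 2))  # bigrams (pairs of adjacent words)
```

With `n=2` the last helper yields word pairs (bigrams); larger values of `n` give longer sequences, and an input shorter than `n` tokens yields an empty list.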

While pure Python is sufficient for many tasks, natural language processing (NLP) libraries allow us to work computationally with text as language. There are several tokenization techniques. Whitespace tokenization is one of the simplest: it uses the whitespace within a string as the delimiter between words, so the text is split wherever whitespace occurs.

Several libraries usable from Python provide tokenization functionality, including the Natural Language Toolkit (NLTK), spaCy, and Stanford CoreNLP. These libraries offer customizable tokenization options to fit specific use cases. This notebook provides an introduction to text tokenization and preprocessing in NLP using NLTK and spaCy. It is designed for learners who want to understand both the theory and the practical implementation of preparing text for analysis or machine learning.
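Whitespace tokenization as described above can be sketched with Python's built-in `str.split()`, without any NLP library. The sample sentence is made up for illustration. Called with no argument, `split()` treats any run of spaces, tabs, or newlines as one delimiter, whereas `split(" ")` splits on each single space and can leave empty strings behind.

```python
text = "Whitespace  tokenization is simple,\tbut it keeps punctuation attached."

# No argument: split on any run of whitespace (spaces, tabs, newlines).
tokens = text.split()
print(tokens)

# Explicit single-space delimiter: the double space produces an empty token,
# and the tab is not treated as a delimiter at all.
naive = text.split(" ")
print(naive)
```

Note that punctuation stays attached to the neighbouring word (`simple,` and `attached.`), which is the main limitation of this technique and one reason library tokenizers are often preferred.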

Learn natural language processing with Python and NLTK, covering text processing, tokenization, and sentiment analysis for beginners. The most common tokenization process is whitespace unigram tokenization, in which the entire text is split into words at whitespace. The first step in a machine learning project is cleaning the data; in this article, you'll find 20 code snippets to clean and tokenize text data using Python. While it may seem simple on the surface, effective tokenization is crucial for building accurate NLP models. In this comprehensive guide, we'll cover everything you need to know about tokenization in NLP.
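Cleaning followed by whitespace unigram tokenization might look like the sketch below. It assumes a very simple cleaning policy (lowercasing and deleting ASCII punctuation), which is only one of many possible choices; the function name `clean_and_tokenize` is hypothetical.

```python
import string
from collections import Counter

# Translation table that deletes every ASCII punctuation character.
_PUNCT_TABLE = str.maketrans("", "", string.punctuation)


def clean_and_tokenize(text):
    """Lowercase the text, drop ASCII punctuation, and split on whitespace."""
    cleaned = text.lower().translate(_PUNCT_TABLE)
    return cleaned.split()


sample = "Effective tokenization is crucial, isn't it? Tokenization matters."
unigrams = clean_and_tokenize(sample)
print(unigrams)

# Unigram frequencies, a common next step after tokenization.
counts = Counter(unigrams)
print(counts.most_common(1))
```

Deleting punctuation outright turns `isn't` into `isnt`, so this policy suits quick word counts better than tasks where contractions or sentence boundaries matter.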

