N-Grams In Natural Language Processing Byteiota

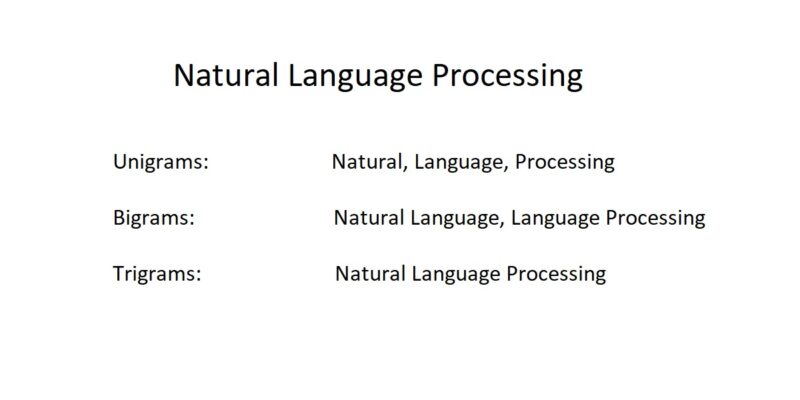

An n-gram is a sequence of n words: a single word ("natural") is a unigram, two words ("natural language") a bigram, three words ("natural language processing") a trigram, and so on. The value of n determines the order of the n-gram. An n-gram language model is a statistical model built on these sequences. N-grams are a fundamental concept used in various NLP tasks such as language modeling, text classification, and machine translation.
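
To make the unigram/bigram/trigram distinction concrete, here is a minimal sketch that slides a window of n words over a phrase. The function name `word_ngrams` and the whitespace tokenization are assumptions made for illustration, not something the sources above prescribe.

```python
from typing import List, Tuple


def word_ngrams(text: str, n: int) -> List[Tuple[str, ...]]:
    """Return all contiguous n-word sequences in `text`, in order."""
    if n < 1:
        raise ValueError("n must be at least 1")
    # Naive tokenization (an assumption): lowercase and split on whitespace.
    # Real pipelines usually handle punctuation as well.
    tokens = text.lower().split()
    # Slide a window of width n across the token list.
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


phrase = "natural language processing"
print(word_ngrams(phrase, 1))  # unigrams
print(word_ngrams(phrase, 2))  # bigrams
print(word_ngrams(phrase, 3))  # trigrams
```

A phrase shorter than n words simply yields an empty list, since no full window fits.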

N-Grams Natural Language Processing

In natural language processing, short word sequences are called n-grams, where n is the number of words you're looking at: 1-grams are one word, 2-grams are two words, 3-grams are three.

NLP N-Gram Language Generator 🤖

Hey! So, I've been geeking out on statistical natural language processing lately, and this project is the result. It's all about teaching a machine how to "talk" by analyzing how words hang out together in a corpus. Basically, the engine looks at a mountain of text and tries to guess: "Hey, if I just said 'blockchain is', what's the next word most likely to...

N-grams are the building blocks of language models, helping predict word sequences. They capture local context by analyzing contiguous word or character groups, with higher-order n-grams providing more context but requiring more data.

Course outline (excerpt):

- N-grams and probabilities: reading, 10 mins
- Sequence probabilities: video, 5 mins
- Sequence probabilities: reading, 6 mins
- Starting and ending sentences: video, 8 mins
- Starting and ending sentences: reading, 6 mins
- Lecture notebook, corpus preprocessing for n-grams: code example, 1 hour
- The n-gram language model: video, 6 mins
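
The next-word guessing described in the generator snippet above can be sketched with plain bigram counts. This is a simplified illustration, not that project's actual code: the helper names `train_bigram_counts` and `predict_next` and the toy corpus are invented here, and the sketch conditions only on the single previous word rather than on a two-word context such as 'blockchain is'.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigram_counts(corpus: str) -> Dict[str, Counter]:
    """Count, for each word, which words follow it in the corpus."""
    tokens = corpus.lower().split()
    followers: Dict[str, Counter] = defaultdict(Counter)
    # Pair every token with its successor and tally the pair.
    for prev, nxt in zip(tokens, tokens[1:]):
        followers[prev][nxt] += 1
    return followers


def predict_next(followers: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the most frequent follower of `word`, or None if unseen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Toy corpus, invented for illustration only.
toy_corpus = "blockchain is secure . blockchain is decentralized . blockchain is secure"
model = train_bigram_counts(toy_corpus)
print(predict_next(model, "is"))       # most frequent word after "is"
print(predict_next(model, "unknown"))  # None: the word never appeared
```

Conditioning on more previous words gives more context, but most longer contexts will never have been seen in a small corpus, which is the data requirement mentioned above.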

Natural Language Processing With Ruby N-Grams Sitepoint

The n-gram language model. An n-gram is a sequence of n words: a 2-gram (which we'll call a bigram) is a two-word sequence of words like "the water" or "water of", and a 3-gram (a trigram) is a three-word sequence of words like th...

The n-gram language model corrects noise by using knowledge of word-sequence probabilities. Likewise, this model is used in machine translation to produce more natural statements in the target language.

In this blog post, we'll delve into the world of n-grams, exploring their significance, applications, and how they contribute to enhancing language processing tasks.

An n-gram is a sequence of n adjacent symbols in a particular order. [1] The symbols may be n adjacent letters (including punctuation marks and blanks), syllables, or rarely whole words found in a language dataset; or adjacent phonemes extracted from a speech recording dataset, or adjacent base pairs extracted from a genome.
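
The probabilities an n-gram language model relies on are commonly estimated from corpus counts. As a sketch for the bigram case, with $C(\cdot)$ denoting how often a word or word pair occurs in the training corpus and $w_0$ standing for a sentence-start marker (this notation is an assumption, not taken from the sources quoted above):

$$
P(w_1, \dots, w_m) \approx \prod_{i=1}^{m} P(w_i \mid w_{i-1}),
\qquad
P(w_i \mid w_{i-1}) = \frac{C(w_{i-1}\, w_i)}{C(w_{i-1})}
$$

A higher-order model conditions on the previous $n-1$ words instead of one, so the counts in the numerator become sparser as $n$ grows.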

Understanding Word N-Grams And N-Gram Probability In Natural Language