Mutual Information Clearly Explained

In this article, I'll guide you through the concept of mutual information: its definition, properties, and usage, as well as how it compares with other dependency measures. What's cool about mutual information is that it works for both continuous and discrete variables. So, we'll walk you through how to calculate mutual information step by step.
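To make the step-by-step calculation concrete, here is a minimal Python sketch for the discrete case. It assumes the joint distribution is already known as a table of probabilities. The function name `mutual_information` and the example tables are hypothetical illustrations. The sketch does not cover the continuous case, where the sum becomes an integral or the value is estimated from samples.

```python
import math

def mutual_information(joint):
    """Mutual information (in bits) of two discrete variables.

    `joint` is a 2-D list with joint[i][j] = P(X = x_i, Y = y_j);
    entries are assumed non-negative and summing to 1.
    """
    # Step 1: marginal distributions, by summing rows (for X) and columns (for Y).
    p_x = [sum(row) for row in joint]
    p_y = [sum(col) for col in zip(*joint)]

    # Step 2: add up p(x, y) * log2(p(x, y) / (p(x) p(y))) over every cell.
    mi = 0.0
    for i, row in enumerate(joint):
        for j, p_xy in enumerate(row):
            if p_xy > 0:  # zero-probability cells contribute nothing (0 log 0 = 0)
                mi += p_xy * math.log2(p_xy / (p_x[i] * p_y[j]))
    return mi

# Hypothetical example: two binary variables that agree 80% of the time.
agree_80 = [[0.4, 0.1],
            [0.1, 0.4]]
print(round(mutual_information(agree_80), 4))
```

Because of `log2` the result is in bits (the natural log would give nats). The 80% agreement table gives about 0.278 bits. A perfectly matched table `[[0.5, 0.0], [0.0, 0.5]]` gives 1 bit, and the independent uniform table `[[0.25, 0.25], [0.25, 0.25]]` gives 0.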

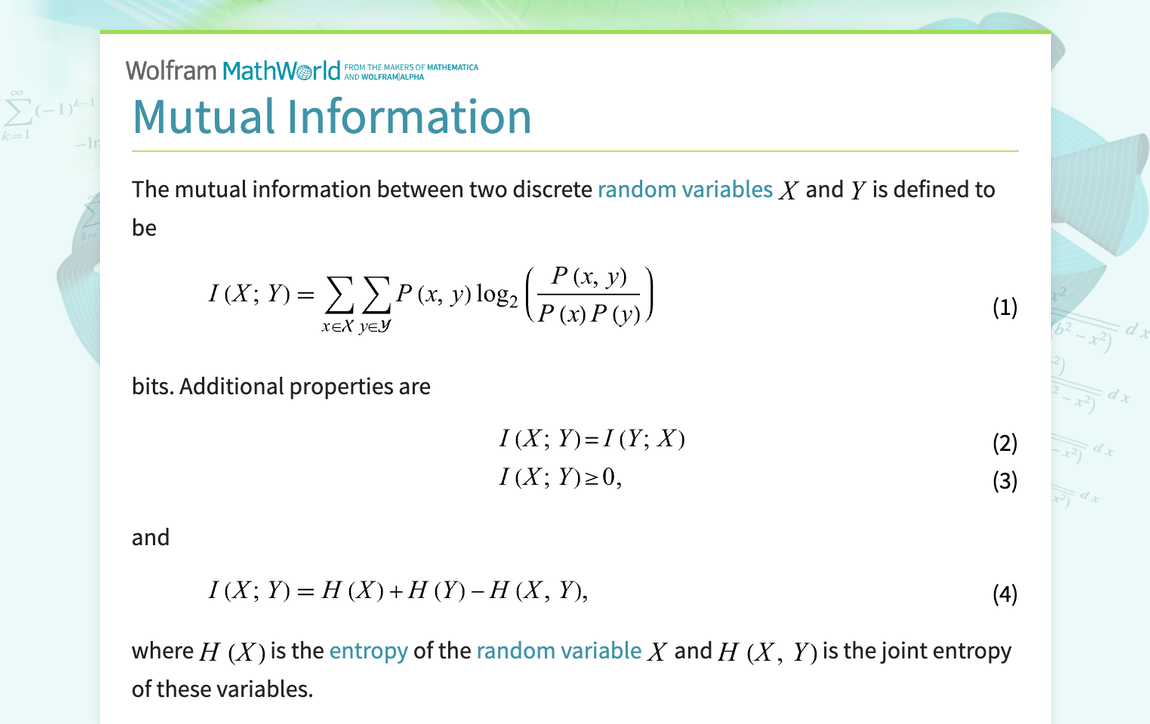

Mutual information measures how much knowing one variable reduces uncertainty about another. Correlation (in the usual Pearson sense) captures only linear relationships; mutual information captures any kind of statistical dependence. In probability theory and information theory, the mutual information (MI) of two random variables is a measure of the mutual dependence between them. It uses concepts from information theory to quantify how much information one random variable contains about another. I find it surprising and unintuitive that the average amount of information Y encodes about X is exactly the same as the average amount of information X encodes about Y. This follows directly from the definition, however, because the definition treats X and Y symmetrically.
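A small Python sketch illustrates both claims. It uses the empirical distribution of three equally likely points as a stand-in for a true distribution. The data and the helper names `entropy` and `pearson` are hypothetical illustrations. Here Y = X² is completely determined by X, yet their Pearson correlation is zero because the relationship is not linear. Mutual information still detects the dependence. The sketch also computes MI from both directions to show the symmetry.

```python
import math
from collections import Counter

def entropy(counts):
    """Shannon entropy in bits of a distribution given as a Counter of counts."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)

# X takes -1, 0, 1 with equal probability; Y = X**2 depends on X deterministically.
xs = [-1, 0, 1]
ys = [x ** 2 for x in xs]

h_x, h_y = entropy(Counter(xs)), entropy(Counter(ys))
h_xy = entropy(Counter(zip(xs, ys)))  # joint entropy H(X, Y)

# Conditional entropies via the chain rule: H(X|Y) = H(X,Y) - H(Y), and vice versa.
mi_x_given_y = h_x - (h_xy - h_y)  # H(X) - H(X|Y)
mi_y_given_x = h_y - (h_xy - h_x)  # H(Y) - H(Y|X)

print(f"Pearson r     = {pearson(xs, ys):.4f}")
print(f"H(X) - H(X|Y) = {mi_x_given_y:.4f} bits")
print(f"H(Y) - H(Y|X) = {mi_y_given_x:.4f} bits")
```

The correlation comes out as 0.0000. Both directions give the same mutual information, about 0.9183 bits.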

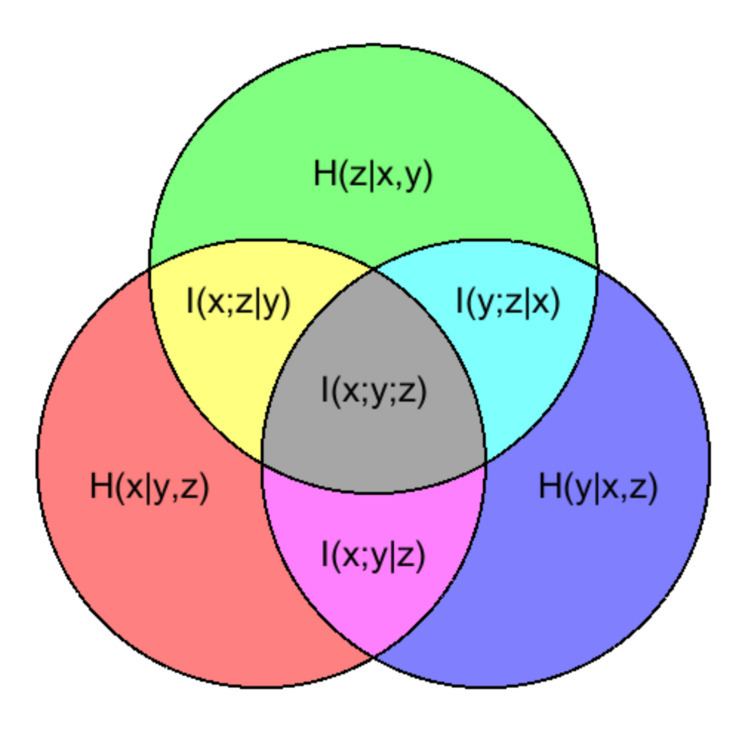

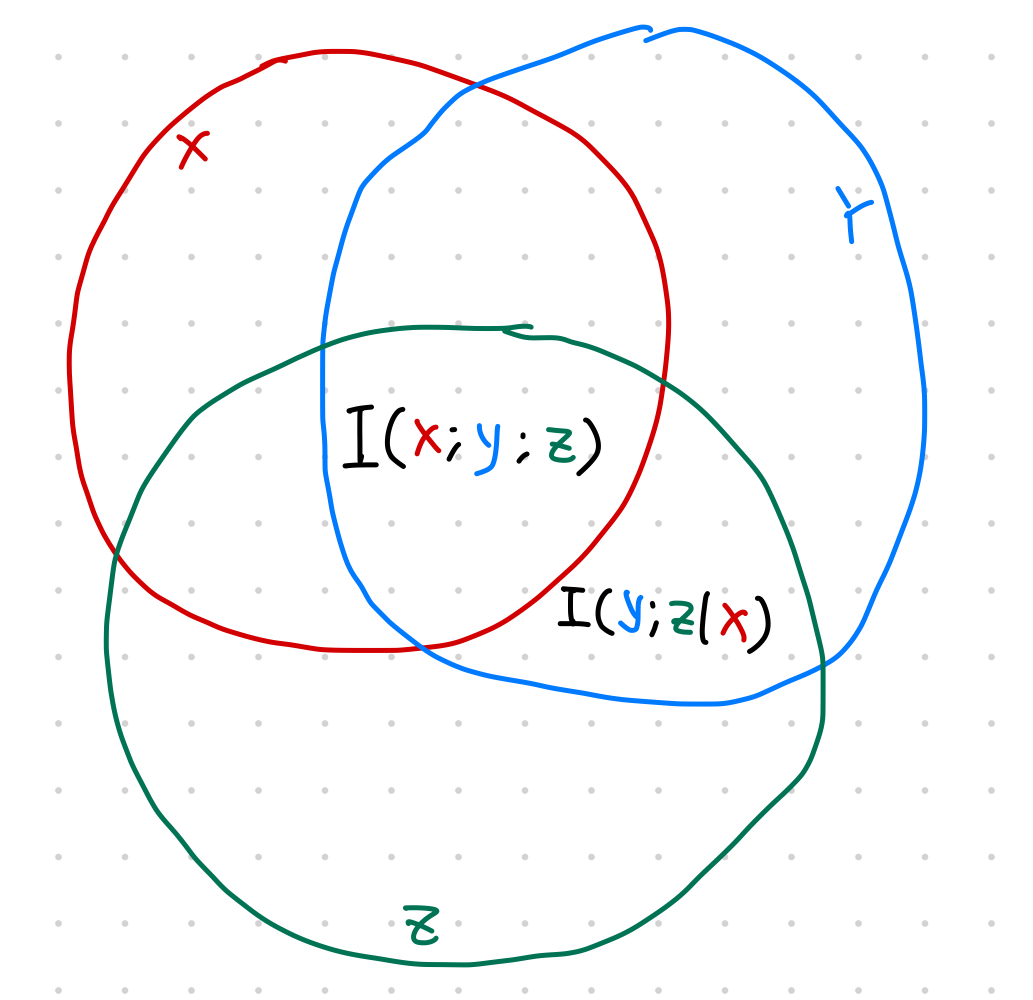

Scouring the web to understand further, I realized that while there were excellent mathematical and statistical explanations, there weren't many intuitive insights into how and why mutual information works. At its core, mutual information is a fundamental concept in information theory: it quantifies the amount of information obtained about one random variable by observing another.
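In symbols, the standard discrete definition and its entropy identities are shown below. The form H(X) − H(X | Y) reads directly as "the uncertainty about X that is removed by observing Y". Because the first line is symmetric in X and Y, the first two forms must agree.

```latex
\begin{aligned}
I(X;Y) &= \sum_{x}\sum_{y} p(x,y)\,\log\frac{p(x,y)}{p(x)\,p(y)} \\
       &= H(X) - H(X \mid Y) = H(Y) - H(Y \mid X) \\
       &= H(X) + H(Y) - H(X,Y) \;\ge\; 0
\end{aligned}
```

For continuous variables, the double sum is replaced by a double integral over the joint density p(x, y). Equality with zero holds exactly when X and Y are independent.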


