Move Hypernetworks And Model Selection Underneath Generate

You can also use the same hypernetworks on current SD 1.4 models, with significant transformation of the results. The VAE can also be applied to SD 1.4 and results in the same color shift observed earlier. This document explains hypernetworks in Stable Diffusion on Windows, including their architecture, how they modify model behavior during generation, and how to select and use them.

UHN instead fixes the generator parameterization θ and moves target specificity into deterministic conditioning inputs, enabling a single UHN to support single-model, multi-model, multi-task, and recursive settings without modifying the generator itself.

Hypernetworks are a fine-tuning technique that enhances the results of your Stable Diffusion generations. You use hypernetwork files in addition to checkpoint models to push your results towards a theme or aesthetic. Learn how to use hypernetworks in the Stable Diffusion WebUI with our step-by-step guide; installation, training, troubleshooting, and a LoRA comparison are included.

Underneath the Generate button, there is a red button with a white circle over a black square. Press it, and you will see your LoRAs, embeddings, and hypernetworks.
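Selecting a hypernetwork from that panel typically adds a tag to the prompt. The sketch below builds such a tag by hand. It assumes the `<hypernet:name:strength>` prompt syntax used by the AUTOMATIC1111 WebUI, so check it against your installed version. The helper name `hypernet_tag` and the file name `anime_style` are hypothetical and only serve the illustration.

```python
def hypernet_tag(name: str, strength: float = 1.0) -> str:
    """Build a prompt tag that activates a hypernetwork.

    Assumption: the WebUI accepts tags of the form <hypernet:name:strength>,
    where `name` is the hypernetwork file name without its extension.
    """
    if not name or ":" in name or ">" in name:
        raise ValueError("name must be non-empty and must not contain ':' or '>'")
    # The 'g' format drops trailing zeros, so 1.0 becomes "1" and 0.8 stays "0.8".
    return f"<hypernet:{name}:{strength:g}>"


def with_hypernetwork(prompt: str, name: str, strength: float = 1.0) -> str:
    """Append the hypernetwork tag to an existing text prompt."""
    return f"{prompt.strip()} {hypernet_tag(name, strength)}"


# Hypothetical example: 'anime_style' stands in for whatever file you trained or downloaded.
example_prompt = with_hypernetwork("a castle on a hill, sunset", "anime_style", 0.8)
```

Building the tag in code is mainly useful when scripting batches of prompts; for interactive use, clicking the card in the panel achieves the same thing.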

The loader takes a specified hypernetwork and applies it to the model, altering its behavior according to the strength parameter. This allows dynamic adjustments to the model's architecture or parameters, enabling more flexible and adaptive AI systems. Now, simply click the "Generate" button after entering your desired image generation settings, and voila! You've used a hypernetwork in the Stable Diffusion WebUI for the very first time.

Hypernetworks are a novel concept for fine-tuning models without touching any of the base model's weights. The technique is widely used for mimicking drawing styles, and it generalizes better than textual inversion. This page is mainly a guide for doing it in practice. The technique was first demonstrated using latent diffusion models, but it has since been extended to other model variants such as Stable Diffusion. The learned concepts improve the model's ability to create visuals from words.
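The role of the strength parameter can be sketched as a scaled residual: the hypernetwork computes a correction to a vector, and the strength decides how much of that correction is added. This is a minimal illustration, assuming a single linear layer and plain Python lists. The names `apply_hypernetwork`, `context`, and `weights` are hypothetical, and real implementations operate on the attention inputs of the diffusion model with larger learned layers.

```python
from typing import List

Vector = List[float]
Matrix = List[Vector]


def matvec(w: Matrix, x: Vector) -> Vector:
    """Multiply matrix w by vector x."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def apply_hypernetwork(x: Vector, w: Matrix, strength: float) -> Vector:
    """Return x plus the hypernetwork's correction, scaled by strength.

    Assumption: the correction is one linear layer (w @ x). The base vector x
    is never modified, which mirrors the idea of leaving base weights untouched.
    """
    delta = matvec(w, x)
    return [xi + strength * di for xi, di in zip(x, delta)]


context = [1.0, 2.0]                  # stand-in for a conditioning vector
weights = [[0.5, 0.0], [0.0, -0.5]]   # stand-in for learned hypernetwork weights

unchanged = apply_hypernetwork(context, weights, 0.0)  # strength 0: no effect
half = apply_hypernetwork(context, weights, 0.5)       # partial effect
full = apply_hypernetwork(context, weights, 1.0)       # full effect
```

With strength 0 the input passes through unchanged, which is why lowering the strength is a common first step when a hypernetwork overpowers the prompt.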

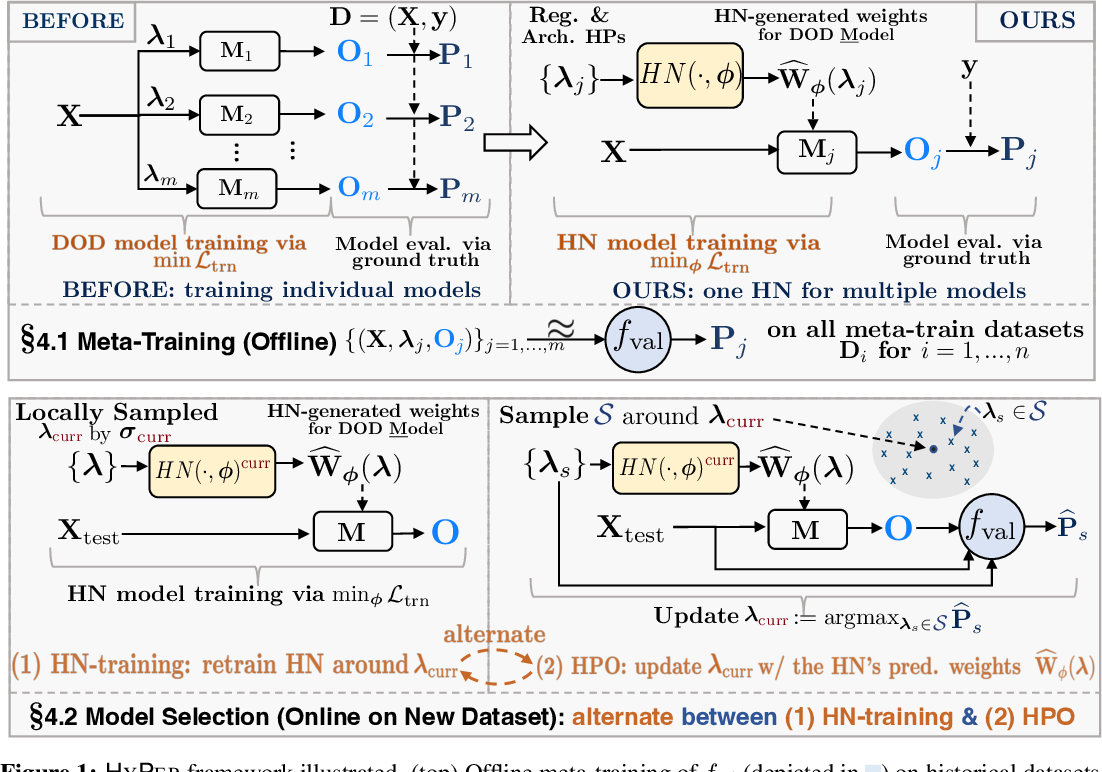

