Most GPU Programs Are Memory Limited: Intro to Parallel Programming

Introduction to GPGPU and Parallel Computing: GPU Architecture and CUDA

Distributed-memory machines use a process-based parallel programming model. The processes are independent execution units, each with its own memory address space. They are created when the parallel program starts and are terminated only when it ends. This section provides complete coverage of specific CUDA features such as CUDA Graphs, dynamic parallelism, interoperability with graphics APIs, and unified memory.
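To make the process model concrete, here is a minimal Python sketch. It is an illustration, not MPI. Several operating-system processes each update a module-level variable. The sketch assumes a POSIX system, because it requests the `fork` start method from the standard-library `multiprocessing` module. The names `worker`, `run`, and `counter` are hypothetical. Each process writes only to its own copy of memory, so results have to be communicated explicitly, here through a queue.

```python
import multiprocessing as mp

# Module-level state. After fork, each child process holds its own private copy.
counter = [0]


def worker(rank: int, queue) -> None:
    # This update changes only the child's copy of `counter`, never the parent's.
    counter[0] += 100 + rank
    # Results leave the process only through explicit communication.
    queue.put((rank, counter[0]))


def run(num_procs: int = 4):
    # Assumption: a POSIX system where the "fork" start method is available.
    ctx = mp.get_context("fork")
    queue = ctx.Queue()
    procs = [ctx.Process(target=worker, args=(r, queue)) for r in range(num_procs)]
    for p in procs:
        p.start()  # every process is created once, at the start of the run
    # Drain the queue before joining so no child blocks on a full pipe.
    results = dict(queue.get() for _ in range(num_procs))
    for p in procs:
        p.join()  # ...and terminated only at the end
    return results, counter[0]


if __name__ == "__main__":
    results, parent_value = run()
    print(sorted(results.items()))  # [(0, 100), (1, 101), (2, 102), (3, 103)]
    print(parent_value)             # 0
```

The children never touch the parent's `counter`, so it stays at 0. In an MPI program, explicit send and receive calls would take the place of the queue.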

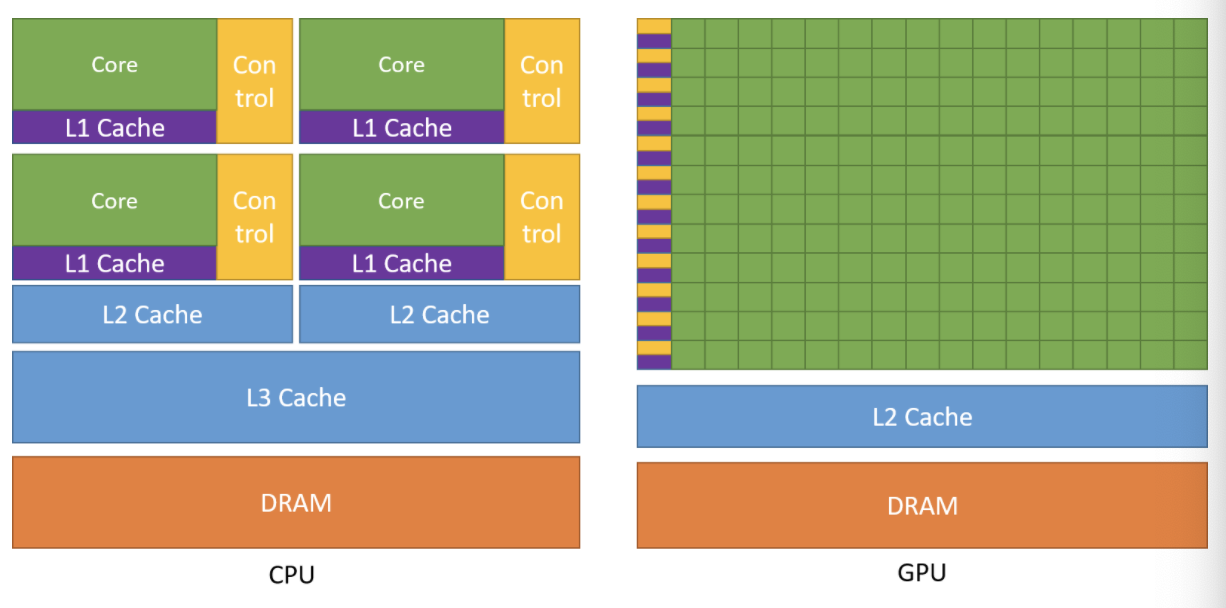

The CPU is optimized to complete a single operation as quickly as possible (low latency). The GPU is optimized to complete a large number of individually slower operations in parallel (high throughput). GPUs are composed of multiple streaming multiprocessors (SMs), an on-chip L2 cache, and high-bandwidth DRAM.

In this article, we discuss GPU parallelization with CUDA. We first introduce the concepts and uses of the architecture. We then present an algorithm for summing the elements of an array and optimize it with CUDA using several different approaches.

The material is aimed at early-career ML engineers and data scientists. It moves from parallel computing principles to programming for CPU and GPU architectures, covering memory fundamentals, parallel execution, and how code is written for each. A GPU is essentially a massively parallel processor with its own dedicated memory. It is much slower and simpler than a CPU for single-threaded work, but it can have tens or even hundreds of thousands of individual threads.
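The array-sum problem described above is commonly parallelized as a tree reduction. The Python sketch below only simulates that idea sequentially and does not run on a GPU. Each block copies its slice into a stand-in for shared memory, and the number of active "threads" halves at every step. The per-block partial sums are then added together. The function names, the default `block_dim` of 8, and the zero-padding of the last block are assumptions for illustration. This is one common variant (sequential addressing), not necessarily the approach the article uses.

```python
from typing import List, Tuple


def block_reduce(shared: List[float]) -> Tuple[float, int]:
    """Tree reduction over one block's shared-memory array (length: power of two).

    Each pass of the while-loop models one synchronized step: every
    'thread' tid < stride adds in the element `stride` positions away.
    Returns the block sum and the number of steps taken.
    """
    n = len(shared)
    assert n > 0 and n & (n - 1) == 0, "block size must be a power of two"
    s = list(shared)  # private copy, like one block's shared memory
    stride, steps = n // 2, 0
    while stride > 0:
        # On a GPU these additions run concurrently, followed by __syncthreads().
        for tid in range(stride):
            s[tid] += s[tid + stride]
        stride //= 2
        steps += 1
    return s[0], steps


def parallel_sum(data: List[float], block_dim: int = 8) -> float:
    """Two-level sum: per-block tree reductions, then a sum of the partials."""
    partials = []
    num_blocks = (len(data) + block_dim - 1) // block_dim  # ceiling division
    for block_idx in range(num_blocks):
        # Global index = blockIdx.x * blockDim.x + threadIdx.x.
        base = block_idx * block_dim
        # Out-of-range 'threads' load 0.0 so they do not change the sum.
        shared = [data[base + tid] if base + tid < len(data) else 0.0
                  for tid in range(block_dim)]
        total, _ = block_reduce(shared)
        partials.append(total)
    # A real implementation might launch a second kernel or use atomicAdd here.
    return sum(partials)


if __name__ == "__main__":
    print(block_reduce([1, 2, 3, 4, 5, 6, 7, 8]))  # (36, 3)
    print(parallel_sum(list(range(1, 21))))        # 210.0
```

For example, `parallel_sum(list(range(1, 21)))` uses three blocks and returns 210.0. A block of 8 values needs only log2(8) = 3 synchronized steps instead of 7 sequential additions.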

Intro to Parallel Programming

An introductory tutorial on parallel programming for GPUs with CUDA is available in the omidasudeh GPU programming tutorial repository on GitHub. There are several standards and many programming languages for building GPU-accelerated programs. We have chosen CUDA and Python to illustrate our example. CUDA and OpenCL are frameworks that enable parallel programming on GPUs to accelerate computations. Understanding the GPU memory hierarchy (global, shared, and local memory) is essential for optimizing performance.

This course will help prepare students to develop code that processes large amounts of data in parallel on graphics processing units (GPUs). It teaches how to implement software that solves complex problems on leading consumer- to enterprise-grade GPUs using NVIDIA CUDA.
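The title's claim that most GPU programs are memory limited can be checked with a back-of-the-envelope roofline estimate. The sketch below computes a kernel's arithmetic intensity, defined as FLOPs per byte of global-memory traffic. It then takes attainable throughput as the minimum of peak compute and intensity times bandwidth. The `Device` figures (20 TFLOP/s, 1,000 GB/s) are hypothetical round numbers, not any specific GPU. The byte count assumes every access goes to DRAM with no cache reuse.

```python
from dataclasses import dataclass


@dataclass
class Device:
    # Hypothetical figures for illustration only; substitute your GPU's datasheet values.
    peak_gflops: float    # peak arithmetic throughput, GFLOP/s
    bandwidth_gbs: float  # global-memory (DRAM) bandwidth, GB/s


def arithmetic_intensity(flops: float, bytes_moved: float) -> float:
    """FLOPs performed per byte moved to or from global memory."""
    return flops / bytes_moved


def attainable_gflops(dev: Device, intensity: float) -> float:
    """Roofline model: performance is capped by compute or by memory traffic."""
    return min(dev.peak_gflops, intensity * dev.bandwidth_gbs)


def is_memory_bound(dev: Device, intensity: float) -> bool:
    # The ridge point is the intensity at which both limits are equal.
    ridge = dev.peak_gflops / dev.bandwidth_gbs
    return intensity < ridge


# SAXPY (y = a*x + y) in float32: 2 FLOPs per element and
# 3 accesses of 4 bytes each (read x, read y, write y) = 12 bytes.
saxpy_ai = arithmetic_intensity(flops=2, bytes_moved=12)
gpu = Device(peak_gflops=20_000, bandwidth_gbs=1_000)  # hypothetical GPU

if __name__ == "__main__":
    print(f"{attainable_gflops(gpu, saxpy_ai):.1f} GFLOP/s")  # 166.7 GFLOP/s
    print(is_memory_bound(gpu, saxpy_ai))                      # True
```

With these assumed numbers, SAXPY reaches about 166.7 GFLOP/s, under 1% of peak. Its intensity of 1/6 FLOP/byte is far below the ridge point of 20 FLOP/byte. Keeping reused data in shared memory or registers cuts global-memory traffic and so raises intensity. That is why the memory hierarchy matters so much for performance.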
