Mobile Robot Navigation Via Model Based Safe Reinforcement Learning

Deep Reinforcement Learning Based Mobile Robot Navigation: A Review

This paper presents experimental results of mobile robot navigation using two predictive controllers: conventional model predictive control (MPC) and Q-learning. In this paper, we propose a new technique for controlling mobile robots (MRs) using reinforcement learning (RL). Our approach first generates a mathematical model and then trains a neural network (NN).
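To make the Q-learning controller mentioned above concrete, here is a minimal tabular Q-learning sketch for grid navigation. The 4x4 grid, obstacle cells, rewards, and hyperparameters are hypothetical choices for illustration only, not the setup used in the cited experiments; the robot learns Q(s, a) from trial and error and then follows the greedy action from the start cell.

```python
import random

# Hypothetical 4x4 grid world: start top-left, goal bottom-right, two obstacle cells.
ROWS, COLS = 4, 4
START, GOAL = (0, 0), (3, 3)
OBSTACLES = {(1, 1), (2, 2)}
ACTIONS = [(-1, 0), (1, 0), (0, -1), (0, 1)]  # up, down, left, right


def step(state, action):
    """Apply an action; hitting a wall or obstacle keeps the robot in place with a penalty."""
    r, c = state[0] + action[0], state[1] + action[1]
    if not (0 <= r < ROWS and 0 <= c < COLS) or (r, c) in OBSTACLES:
        return state, -5.0, False  # collision penalty, robot stays put
    if (r, c) == GOAL:
        return (r, c), 10.0, True
    return (r, c), -1.0, False  # per-step cost favours short paths


def train(episodes=2000, alpha=0.1, gamma=0.95, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = {}  # (state, action_index) -> estimated value, default 0.0
    for _ in range(episodes):
        state, done, steps = START, False, 0
        while not done and steps < 100:
            # Epsilon-greedy exploration
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))
            else:
                a = max(range(len(ACTIONS)), key=lambda i: q.get((state, i), 0.0))
            nxt, reward, done = step(state, ACTIONS[a])
            best_next = 0.0 if done else max(q.get((nxt, i), 0.0) for i in range(len(ACTIONS)))
            # Q-learning update: move Q(s, a) toward r + gamma * max_a' Q(s', a')
            old = q.get((state, a), 0.0)
            q[(state, a)] = old + alpha * (reward + gamma * best_next - old)
            state, steps = nxt, steps + 1
    return q


def greedy_path(q, max_steps=20):
    """Roll out the learned greedy policy from START."""
    state, path = START, [START]
    for _ in range(max_steps):
        a = max(range(len(ACTIONS)), key=lambda i: q.get((state, i), 0.0))
        state, _, done = step(state, ACTIONS[a])
        path.append(state)
        if done:
            break
    return path


if __name__ == "__main__":
    print(greedy_path(train()))
```

Unlike MPC, this controller needs no explicit motion model at decision time; the model is implicit in the learned table, which is why tabular Q-learning only scales to small, discrete state spaces.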

Towards Continuous Control For Mobile Robot Navigation A

Unlike conventional approaches, this paper proposes an end-to-end approach that uses deep reinforcement learning for autonomous mobile robot navigation in an unknown environment. A practical safe deep reinforcement learning algorithm for autonomous robot navigation, named conflict-averse safe reinforcement learning (CASRL), is proposed; it outperforms the vanilla algorithm by 8.2% in success rate, demonstrating the effectiveness of its two proposed innovations. Deep reinforcement learning has also been applied to mobile robot navigation in the ROS Gazebo simulator: using a twin delayed deep deterministic policy gradient (TD3) neural network, a robot learns to navigate to a random goal point in a simulated environment while avoiding obstacles. This paper presents an improved deep reinforcement learning (DRL) framework based on the TD3 algorithm for adaptive mapless navigation.
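TD3 differs from plain DDPG mainly in how it builds the critic's training target: it smooths the target action with clipped noise and takes the minimum of two target critics to curb overestimation. The NumPy sketch below shows only that target computation, assuming the target actor and both target critics are plain callables; the function name `td3_target` and its signature are hypothetical, and the default noise values follow the original TD3 paper. The delayed actor update (actor and target networks refreshed only every few critic steps) is not shown.

```python
import numpy as np


def td3_target(reward, next_state, done, actor_target, critic1_target, critic2_target,
               gamma=0.99, policy_noise=0.2, noise_clip=0.5, max_action=1.0, rng=None):
    """Compute the TD3 critic target for a batch of transitions (hypothetical helper)."""
    rng = np.random.default_rng() if rng is None else rng
    next_action = actor_target(next_state)
    # Target policy smoothing: add clipped Gaussian noise, then respect the action bounds.
    noise = np.clip(rng.normal(0.0, policy_noise, size=next_action.shape), -noise_clip, noise_clip)
    next_action = np.clip(next_action + noise, -max_action, max_action)
    # Clipped double-Q learning: use the smaller of the two target critic estimates.
    q_next = np.minimum(critic1_target(next_state, next_action),
                        critic2_target(next_state, next_action))
    # Terminal transitions (done = 1) do not bootstrap.
    return reward + gamma * (1.0 - done) * q_next
```

Both online critics are then regressed toward this single target, for example with a mean-squared-error loss.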

Safe Reinforcement Learning On Autonomous Vehicles

This paper proposes RADAR-BPO (Risk-Aware, Dynamic, Adaptive Regulation Barrier Policy Optimization), a novel safe reinforcement learning framework that integrates proximal policy optimization (PPO) with control barrier function (CBF) based safety filters to mitigate collision risks in mobile robot navigation. The SCF approach enables mobile robots to navigate unpredictable, dynamic environments, ensuring optimal planning and control while remaining safe and robust; experiments in the Gazebo simulation environment and in the real world confirm the effectiveness of the proposed method. This study investigates the application of deep reinforcement learning to train a mobile robot for autonomous navigation in a complex environment. The robot uses LiDAR sensor data and a deep neural network to generate control signals that guide it toward a specified target while avoiding obstacles.
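A CBF-based safety filter takes the action proposed by the learned policy and changes it as little as possible so that a barrier condition stays satisfied. The sketch below is a generic textbook filter, not RADAR-BPO itself: it assumes a hypothetical single-integrator robot (position derivative equals the commanded velocity), one circular obstacle, and a fixed gain `alpha`. With a single constraint, the underlying quadratic program has a closed-form solution, a projection onto a half-space. The names `cbf_filter`, `obstacle`, `radius`, and `alpha` are illustrative.

```python
import numpy as np


def cbf_filter(x, u_ref, obstacle, radius, alpha=1.0):
    """Minimally modify u_ref so the single-integrator robot keeps h(x) >= 0 (hypothetical helper)."""
    x, u_ref, obstacle = (np.asarray(v, dtype=float) for v in (x, u_ref, obstacle))
    diff = x - obstacle
    h = diff @ diff - radius ** 2   # barrier: positive outside the obstacle disc
    a = 2.0 * diff                  # gradient of h, so dh/dt = a @ u
    b = -alpha * h                  # CBF condition: a @ u >= -alpha * h
    slack = a @ u_ref - b
    if slack >= 0.0:
        return u_ref                # policy action already satisfies the constraint
    norm_sq = a @ a
    if norm_sq == 0.0:
        raise ValueError("robot is at the obstacle centre; barrier gradient vanishes")
    # Closed-form solution of: min ||u - u_ref||^2  subject to  a @ u >= b
    return u_ref + (-slack / norm_sq) * a


if __name__ == "__main__":
    # Robot at (2, 0) commanded straight at an obstacle of radius 1 centred at the origin.
    print(cbf_filter([2.0, 0.0], [-10.0, 0.0], [0.0, 0.0], 1.0))
```

In a PPO-plus-filter setup, each action sampled from the policy would pass through such a filter before execution; how RADAR-BPO adapts the filter to risk is not described in this section.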

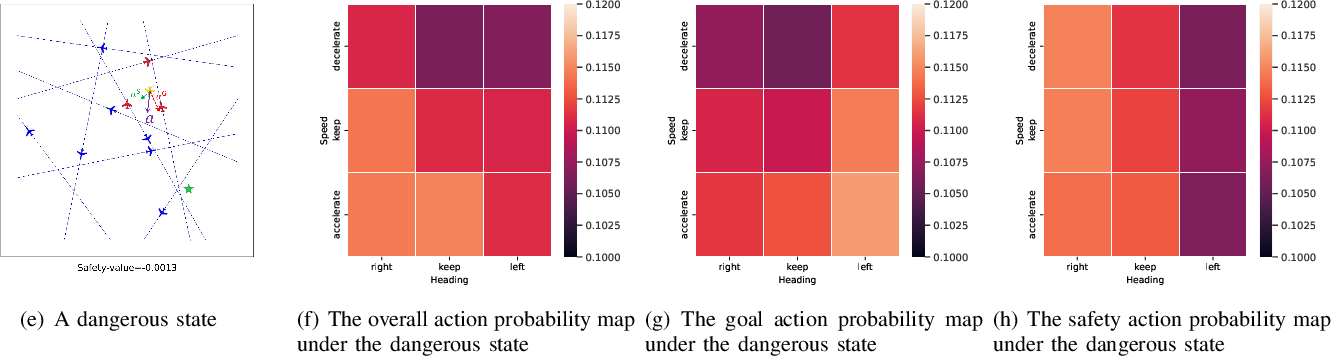
