MLT __init__ Session 17: LLM.int8()

MLT on LinkedIn: MLT __init__ Session 17, LLM.int8(). Saturday, September 17, 2022, 11:00. Talk: "8-bit Methods for Efficient Deep Learning" by Tim Dettmers (University of Washington).

We develop a procedure for Int8 matrix multiplication for feed-forward and attention projection layers in transformers, which cuts the memory needed for inference by half while retaining full-precision performance.
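The "memory cut by half" claim follows from storage size alone: an FP16 weight takes two bytes and an Int8 weight takes one. The sketch below works through that arithmetic. The 175-billion-parameter count is a hypothetical figure chosen for illustration, and the calculation covers weights only (it ignores activations and any values kept in higher precision).

```python
# Bytes needed to store one parameter in each format.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: int, dtype: str) -> float:
    """Return the memory needed for the weights alone, in GB (10**9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


# Hypothetical model size, used only to make the arithmetic concrete.
num_params = 175_000_000_000

fp16_gb = weight_memory_gb(num_params, "fp16")
int8_gb = weight_memory_gb(num_params, "int8")

print(f"fp16: {fp16_gb:.0f} GB, int8: {int8_gb:.0f} GB")
# prints "fp16: 350 GB, int8: 175 GB"
```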

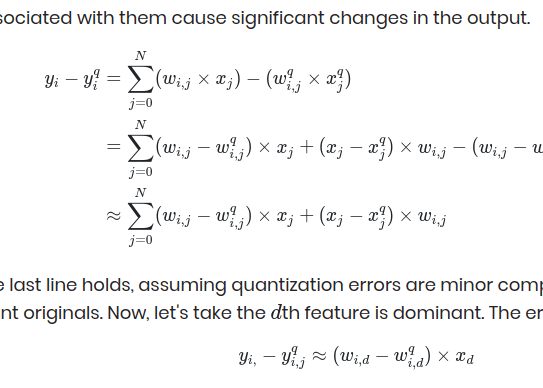

In this paper, we present the first multi-billion-scale Int8 quantization procedure for transformers that does not incur any performance degradation.

MLT __init__ is a monthly event led by Jayson Cunanan and J. Miguel Valverde where, similarly to a traditional journal club, a paper is first presented by a volunteer and then discussed among all attendees.

Inference workflow covered in the talk:

• Load the weights from FP16/FP32 checkpoints.
• Quantize them to Int8 and send them to the GPUs.
• When an FP16 matmul is needed, dequantize the weight matrix to FP16.

Evaluation question: how well does LLM.int8() perform as the model size scales?
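The load, quantize, and dequantize steps listed above can be sketched in plain Python. This is a simplified illustration, not the paper's implementation: it assumes per-row absmax scaling, uses ordinary Python floats in place of FP16, and leaves out the separate handling of outlier features. The names `quantize_row`, `dequantize_row`, and `matvec` are hypothetical helpers.

```python
def quantize_row(row):
    """Absmax-quantize one row of floats to integers in [-127, 127] plus a scale."""
    absmax = max(abs(x) for x in row)
    scale = absmax / 127 if absmax > 0 else 1.0
    return [round(x / scale) for x in row], scale


def dequantize_row(q_row, scale):
    """Map the stored integers back to approximate float weights."""
    return [v * scale for v in q_row]


def matvec(rows, x):
    """Plain matrix-vector product, standing in for the higher-precision matmul."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in rows]


# Step 1: weights as they would come from a checkpoint (toy values).
weights = [[0.5, -1.0, 0.25], [2.0, 0.1, -0.4]]

# Step 2: quantize each row; only the integers and one scale per row are kept.
quantized = [quantize_row(row) for row in weights]

# Step 3: dequantize on demand, right before the matmul.
restored = [dequantize_row(q, s) for q, s in quantized]

x = [1.0, 2.0, 3.0]
exact = matvec(weights, x)
approx = matvec(restored, x)
max_err = max(abs(a - b) for a, b in zip(exact, approx))
print("max abs error:", max_err)
```

Each weight is off by at most half a quantization step (half of its row's scale), so the error in the product stays small for well-behaved rows. A single large outlier in a row inflates the scale for every other value in that row, which is one reason a scheme like this needs extra care at scale.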

Wanna learn how to make huge transformers even more accessible? 🤔🤯 🚨 New MLT __init__ session 🚨 🗓️ Sept 17th at 11am (JST) / Sept 16th at 7pm (PDT). Tim Dettmers will present LLM.int8().

With this blog post, we offer LLM.int8() integration for all Hugging Face models, which we explain in more detail below. If you want to read more about our research, you can read our paper, LLM.int8(): 8-bit Matrix Multiplication for Transformers at Scale.
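As a rough illustration of what the Hugging Face integration looks like in practice, the sketch below loads a causal language model with 8-bit weights. It assumes the `transformers`, `accelerate`, and `bitsandbytes` packages are installed and a CUDA GPU is available. The wrapper `load_int8_model` is a hypothetical helper, and the exact arguments may differ between library versions (older releases accepted `load_in_8bit=True` directly in `from_pretrained`).

```python
def load_int8_model(model_name: str):
    """Load a causal LM with Int8 weights via transformers and bitsandbytes.

    Hypothetical helper for illustration; requires a CUDA GPU at call time.
    """
    # Imported lazily so the function can be defined without the libraries.
    from transformers import AutoModelForCausalLM, BitsAndBytesConfig

    quant_config = BitsAndBytesConfig(load_in_8bit=True)
    return AutoModelForCausalLM.from_pretrained(
        model_name,
        device_map="auto",  # let accelerate place layers on available devices
        quantization_config=quant_config,
    )
```

A call would look like `model = load_int8_model("bigscience/bloom-1b7")`, where the model ID is only an example of a checkpoint hosted on the Hugging Face Hub.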

