Mixed Precision Towards Data Science

The idea of mixed precision training was first proposed in the 2018 ICLR paper "Mixed Precision Training", which trains deep learning models in half-precision floating point without losing model accuracy or modifying hyperparameters. Broadly, we find that mixed precision methods can have a large impact on computational science in terms of time to solution and energy consumption. This is true not only for a few arithmetic-dominated applications but also, to a more moderate extent, for the many memory-bandwidth-bound applications.
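One technique described in that 2018 paper for keeping accuracy intact is loss scaling: small gradient values that would underflow to zero in half precision are multiplied by a constant before being stored, then divided by the same constant in higher precision. The snippet below is only a minimal sketch of that effect using Python's standard `struct` module. The gradient value and the scale factor of 1024 are illustrative assumptions, not values from the paper, and Python's built-in float (float64) stands in for the higher-precision side.

```python
import struct


def to_float16(x: float) -> float:
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


# Hypothetical gradient, chosen to lie below float16's smallest
# representable positive value (2**-24).
grad = 2.0 ** -27

# Illustrative scale factor (an assumption); a power of two keeps the
# scaling itself exact.
loss_scale = 1024.0

# Stored directly in float16, the gradient underflows to zero.
naive = to_float16(grad)

# Scaled before the float16 conversion, it survives. The unscaling is
# done in higher precision (here, Python's float64).
scaled = to_float16(grad * loss_scale)
recovered = scaled / loss_scale

print(naive)              # 0.0
print(recovered == grad)  # True
```

In a real training loop the scale is applied to the loss before backpropagation rather than to a single number, but the arithmetic reason it helps is the same.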

Mixed Precision Training Pdf Deep Learning Speech Recognition

Mixed precision quantization can be broadly classified into two main approaches: automatic bit-width search methods and sensitivity-based bit-width allocation approaches. As the name suggests, the idea of mixed precision training is to employ lower-precision float16 wherever feasible (for example, in convolutions and matrix multiplications) alongside float32, hence the name "mixed precision". Some published models were trained using mixed precision. In our work, we propose a simple practice to improve the energy efficiency of neural networks: training them with mixed precision and deploying them on the Edge TPU. This article will show (with code examples) the sort of gains that can actually be attained, whilst also going over the requirements for using mixed precision training in your own models.
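To see why float16 is paired with float32 rather than used on its own, consider a dot product, the core operation of a matrix multiplication. The sketch below uses only Python's standard `struct` module to emulate rounding to each format. The helper names (`to_float16`, `to_float32`, `dot_float16`, `dot_mixed`) and the input vectors are hypothetical, chosen for illustration. It keeps the inputs and products in float16 in both cases and only changes the precision of the running sum.

```python
import struct


def to_float16(x: float) -> float:
    """Round to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


def to_float32(x: float) -> float:
    """Round to the nearest IEEE 754 single-precision value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def dot_float16(a, b):
    """Dot product with float16 products and a float16 accumulator."""
    total = 0.0
    for x, y in zip(a, b):
        product = to_float16(to_float16(x) * to_float16(y))
        total = to_float16(total + product)
    return total


def dot_mixed(a, b):
    """Dot product with float16 products and a float32 accumulator."""
    total = 0.0
    for x, y in zip(a, b):
        product = to_float16(to_float16(x) * to_float16(y))
        total = to_float32(total + product)
    return total


# Illustrative inputs: two vectors of 4096 ones, so the exact answer is 4096.
a = [1.0] * 4096
b = [1.0] * 4096

half_result = dot_float16(a, b)
mixed_result = dot_mixed(a, b)

print(half_result)   # 2048.0
print(mixed_result)  # 4096.0
```

The float16 accumulator stalls at 2048 because the spacing between adjacent float16 values there is 2, so adding 1 no longer changes the sum. Keeping the accumulator in float32 avoids this while the inputs stay in the cheaper format.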

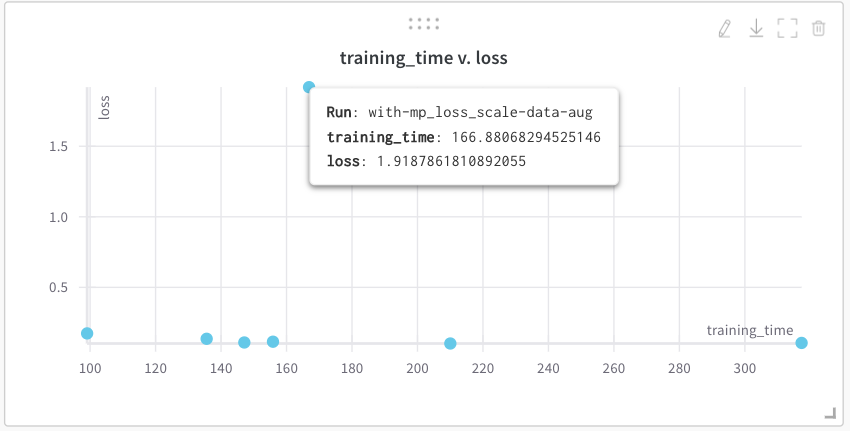

Mixed Precision Training For Tf Keras Models By Sayak Paul Towards

Mixed precision tuning techniques aim to choose the smallest data type that still provides sufficient accuracy, in order to save valuable resources such as time, memory, or energy. In this article, I'll introduce the automatic mixed precision technique. In this paper, we propose a novel data-quality-aware mixed precision quantization framework, dubbed DQMQ, to dynamically adapt quantization bit widths to different data qualities. In this post, I will show you how you can speed up your training on a suitable GPU or TPU using mixed precision bit representation. First, I will briefly introduce different floating point formats.
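The idea of choosing the smallest data type that still provides sufficient accuracy can be illustrated with a toy check over the three IEEE 754 formats that Python's `struct` module can pack. This is only a sketch under simple assumptions: the function name `smallest_sufficient_format`, the relative-error criterion, and the sample values are all hypothetical, and real tuning tools analyse whole programs rather than lists of numbers.

```python
import struct

# struct format codes for IEEE 754 binary16 and binary32, smallest first.
# binary64 is Python's native float, so it is the fallback.
NARROW_FORMATS = [("float16", "<e"), ("float32", "<f")]


def round_trip(x: float, code: str) -> float:
    """Round x to the given format and convert it back to a Python float."""
    return struct.unpack(code, struct.pack(code, x))[0]


def smallest_sufficient_format(values, rel_tol: float) -> str:
    """Return the narrowest format whose rounding error stays within
    rel_tol (relative to each value) for every value in the list."""
    for name, code in NARROW_FORMATS:
        try:
            ok = all(
                abs(round_trip(v, code) - v) <= rel_tol * abs(v)
                for v in values
            )
        except (OverflowError, struct.error):
            # The value is too large for this format.
            ok = False
        if ok:
            return name
    return "float64"


print(smallest_sufficient_format([0.1], rel_tol=1e-3))      # float16
print(smallest_sufficient_format([0.1], rel_tol=1e-6))      # float32
print(smallest_sufficient_format([70000.0], rel_tol=1e-3))  # float32
print(smallest_sufficient_format([0.1], rel_tol=1e-12))     # float64
```

The third call shows that range matters as well as precision: 70000 exceeds float16's largest finite value of 65504, so float16 is rejected even under a loose tolerance.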
