Mitigating Data Set Bias In Natural Language Models

Mitigate Position Bias In Large Language Models Via Scaling A Single

Large language models (LLMs) have revolutionized natural language processing, but their susceptibility to biases poses significant challenges. This comprehensive review examines the landscape of bias in LLMs, from its origins to current mitigation strategies. Our algorithm and metrics can be used to quantify and mitigate large language model bias in various industries, ranging from education to agriculture.

MBIAS: Mitigating Bias In Large Language Models While Retaining Context

We present a new debiasing framework called FairFlow that mitigates dataset biases by learning to be undecided in its predictions for data samples or representations associated with known or unknown biases. This report from the Brookings Institution's Artificial Intelligence and Emerging Technology (AIET) Initiative is part of "AI and Bias," a series that explores ways to mitigate possible. To this end, we train a biased model without prior human knowledge of dataset bias by constraining the training environment, and then use it with a reweighting-based debiasing method that decreases the weights assigned to losses from biased examples. To address the bias issue, this study proposes a systematic methodology for bias detection and mitigation in LLMs, particularly in classification settings. We assess multiple LLM variants, including Llama, Phi, Mixtral, and DeepSeek, using the Super-NaturalInstructions benchmark.
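The reweighting-based debiasing mentioned above can be illustrated with a minimal sketch. The source does not give the weighting formula, so the scheme here is an assumption: each example's loss is scaled by one minus the probability the biased model assigns to the correct label, so examples the biased model already solves confidently contribute less. The function names `example_weights` and `reweighted_loss` are hypothetical.

```python
import math


def example_weights(biased_probs, labels):
    """Weight each example by how poorly the biased model predicts its gold label.

    Assumed scheme (not taken from the source): weight = 1 - p_biased(gold label).
    """
    return [1.0 - probs[y] for probs, y in zip(biased_probs, labels)]


def reweighted_loss(main_probs, biased_probs, labels, eps=1e-12):
    """Weighted mean cross-entropy of the main model.

    main_probs / biased_probs: per-example lists of class probabilities.
    labels: gold class index for each example.
    """
    weights = example_weights(biased_probs, labels)
    # Per-example cross-entropy of the main model on the gold label.
    losses = [-math.log(max(probs[y], eps)) for probs, y in zip(main_probs, labels)]
    total_weight = sum(weights)
    if total_weight <= eps:
        # Every example was fully explained by the biased model.
        return 0.0
    return sum(w * l for w, l in zip(weights, losses)) / total_weight
```

In this sketch the biased model is treated as a fixed scorer; in practice its probabilities would come from a separately trained model, and the weighted loss would be computed inside the training loop of whatever framework is in use.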

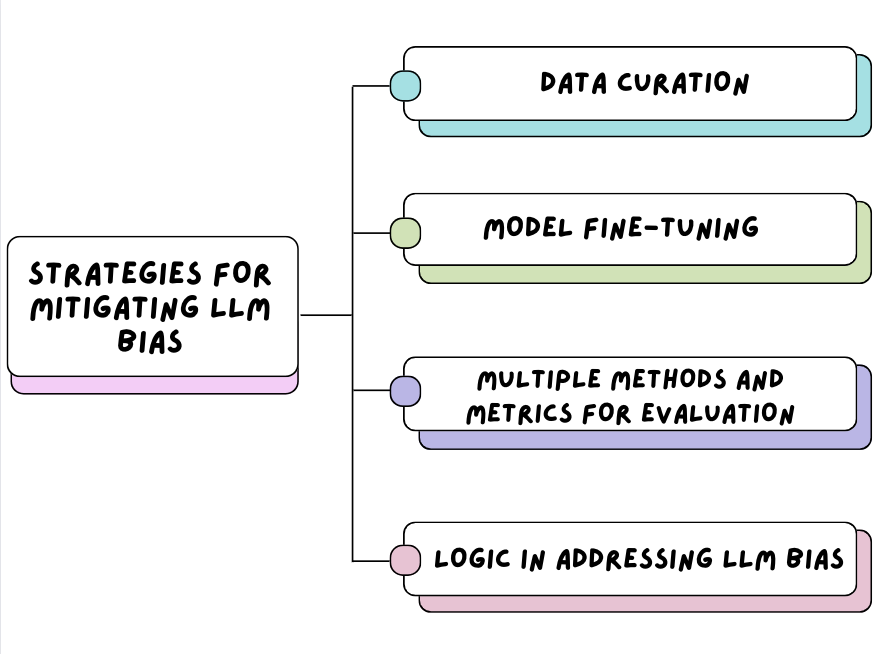

Understanding And Mitigating Bias In Large Language Models (LLMs)

Pre-processing mitigation techniques aim to remove bias and unfairness early on, in the dataset or model inputs, whereas in-training mitigation techniques focus on reducing bias and unfairness during model training. In this paper, we introduce and discuss the pervasive issue of bias in the large language models that are currently at the core of mainstream approaches to natural language processing. Here, the authors introduce a diagnostic paradigm, shortcut hull learning, that identifies shortcuts from datasets, enabling unbiased evaluation of model capabilities. We build a new Chinese dataset, CHBias, for evaluating and mitigating biases in Chinese conversational models, which includes biases that are underexplored in existing works, such as age and appearance.
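As one illustration of a pre-processing technique of the kind described above, the sketch below applies counterfactual data augmentation: each training sentence that contains a term from a swap list gets a twin with the term replaced by its counterpart, keeping the same label. This technique is swapped in for illustration and is not attributed to any of the works quoted here. The word list `SWAP_PAIRS` and the helper names are hypothetical, and the list is deliberately tiny.

```python
import re

# Hypothetical, deliberately small swap list; a usable one would be much
# larger and would need care with ambiguous words such as "her".
SWAP_PAIRS = [("he", "she"), ("man", "woman"), ("father", "mother")]

# Build a lookup that works in both directions.
SWAPS = {}
for first, second in SWAP_PAIRS:
    SWAPS[first] = second
    SWAPS[second] = first


def counterfactual(text):
    """Return the text with every listed term replaced by its counterpart."""

    def replace(match):
        word = match.group(0)
        swapped = SWAPS.get(word.lower())
        if swapped is None:
            return word
        # Preserve a leading capital letter.
        return swapped.capitalize() if word[0].isupper() else swapped

    # Match whole alphabetic words so "woman" is not treated as "man".
    return re.sub(r"[A-Za-z]+", replace, text)


def augment(dataset):
    """Add a counterfactual twin, with the same label, for each changed example."""
    augmented = []
    for text, label in dataset:
        augmented.append((text, label))
        twin = counterfactual(text)
        if twin != text:
            augmented.append((twin, label))
    return augmented
```

For example, `counterfactual("He is a father.")` yields `"She is a mother."`. Because the change happens before training, the model and training loop stay untouched, which is what distinguishes this family from in-training methods.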

Detecting And Mitigating Bias In Natural Language Processing Brookings

Understand And Mitigate Bias In LLMs Datacamp
