Mitigating Bias In Large Language Models

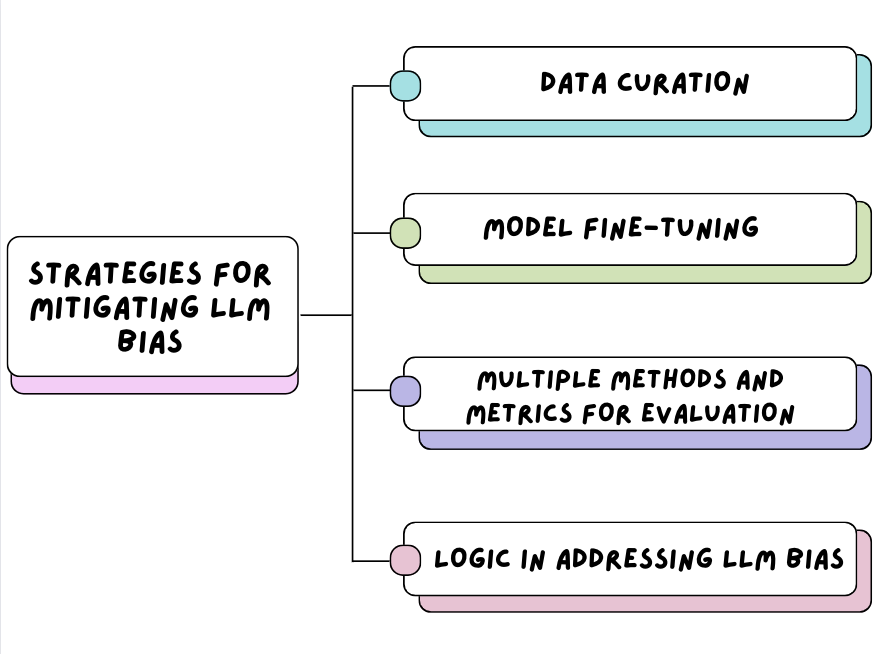

Mitigate Position Bias In Large Language Models Via Scaling A Single

Large language models (LLMs) have revolutionized natural language processing, but their susceptibility to bias poses significant challenges. This comprehensive review examines the landscape of bias in LLMs, from its origins to current mitigation strategies. Growing recognition of the biases embedded in LLMs has produced an abundance of work proposing techniques to measure or remove social bias, organized primarily into (1) metrics for bias evaluation, (2) datasets for bias evaluation, and (3) techniques for bias mitigation.

MBIAS: Mitigating Bias In Large Language Models While Retaining Context

Mitigating bias in large language models (LLMs) is essential, as biased outputs can perpetuate harmful stereotypes and negatively influence decision making [1]. To address these challenges, we introduce MBIAS, an LLM framework instruction fine-tuned on a custom dataset designed specifically for safety interventions. MBIAS is designed to significantly reduce bias and toxic elements in LLM outputs while preserving the main information.

Understanding And Mitigating Bias In Large Language Models (LLMs)

One paper proposes a multi-objective approach within a multi-agent framework (MOMA) to mitigate social bias in LLMs without significantly compromising their performance.

Another introduces a two-stage bias mitigation approach that uses the LLM's empathy ability, reinforcement learning, and human-in-the-loop mechanisms to identify and correct age-related biases without altering model parameters. The strategy has two modes.

A further study proposes a systematic methodology for bias detection and mitigation in LLMs, particularly in classification settings. It assesses multiple LLM variants, including Llama, Phi, Mixtral, and DeepSeek, using the Super-NaturalInstructions benchmark.

Related work reports an algorithm and metrics that can be used to quantify and mitigate LLM bias in various industries, ranging from education to agriculture.
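The snippets above mention metrics for quantifying bias in classification settings but do not spell any of them out. As a rough illustration only, the sketch below computes a demographic parity gap: the difference between the highest and lowest rate at which a classifier assigns a given label across groups. This is a standard fairness metric swapped in for illustration, not the metric of any study summarized here; the function names, labels, and toy data are all hypothetical.

```python
from collections import defaultdict


def positive_rate_by_group(predictions, groups, positive_label):
    """Return, for each group, the share of examples predicted as positive_label."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        if pred == positive_label:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(predictions, groups, positive_label):
    """Largest difference in positive-label rate between any two groups."""
    rates = positive_rate_by_group(predictions, groups, positive_label)
    return max(rates.values()) - min(rates.values())


# Hypothetical toy data: labels an LLM classifier might return for prompts
# that differ only in a group attribute ("a" vs. "b").
preds = ["hire", "reject", "hire", "hire", "reject", "reject", "reject", "hire"]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = positive_rate_by_group(preds, groups, "hire")
gap = demographic_parity_gap(preds, groups, "hire")
print(rates, gap)
```

A gap of zero means every group receives the label at the same rate; larger values indicate a bigger disparity. A measure like this only detects one kind of bias and says nothing about how to mitigate it.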

