Mistral 7B Instruct on Raspberry Pi 5 with llama.cpp (cmd, 2x speed)

Running an LLM with llama.cpp Natively on Raspberry Pi

Raspberry Pi 5 llama.cpp setup with corrected build commands, GGUF model selection, performance tuning, server mode, and honest token speed benchmarks. This is a step-by-step guide to running a large language model (Mistral 7B) on a Raspberry Pi 5. It might work on a Pi 4 too, but that has not been tested yet.

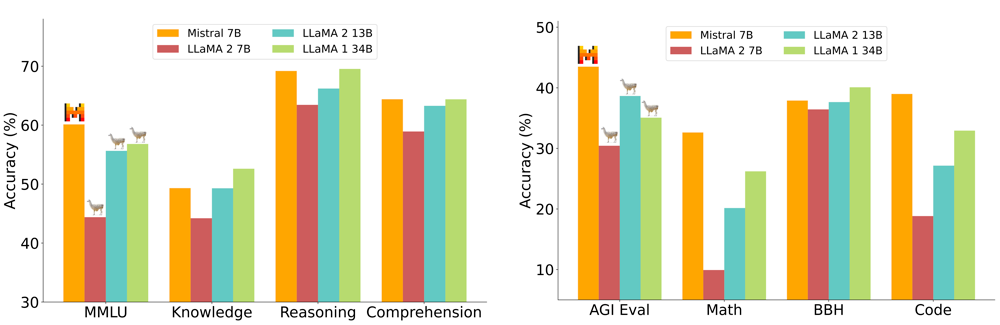

In 2025, running an LLM natively on a Raspberry Pi is realistic. Thanks to llama.cpp, quantized GGUF models, and the performance boost of the Raspberry Pi 5, it is now practical to deploy 1B–7B models locally. This guide shows how to set it up, which models to choose, and what performance to expect on both the Pi 5 and older Pi 4 or 4 GB RAM boards.

The example here runs `TheBloke/Mistral-7B-Instruct-v0.1-GGUF` (Q4_K_M) on a Raspberry Pi 5 using llama.cpp from the command line, shown at 2x speed. The guide walks through the steps of running the Mistral model on your Pi 5, turning it into a compact and efficient AI machine.
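To make the "1B–7B models on 4 GB or 8 GB boards" claim concrete, here is a minimal sketch that estimates how much RAM a quantized model needs. The bits-per-weight figures are approximate averages, the parameter count for Mistral 7B is rounded, and the 1.5 GiB overhead for the OS, KV cache, and buffers is an assumption chosen for illustration, not something llama.cpp reports.

```python
# Rough RAM estimate for a quantized GGUF model.
# A back-of-the-envelope sketch, not llama.cpp's own memory accounting.

GIB = 1024 ** 3

# Approximate average bits per weight for a few GGUF types (illustrative values).
BITS_PER_WEIGHT = {
    "F16": 16.0,
    "Q8_0": 8.5,
    "Q4_K_M": 4.85,
}


def model_size_gib(n_params: float, quant: str) -> float:
    """Approximate size of the model weights in GiB for a quantization type."""
    return n_params * BITS_PER_WEIGHT[quant] / 8 / GIB


def fits_in_ram(n_params: float, quant: str, ram_gib: float,
                overhead_gib: float = 1.5) -> bool:
    """True if weights plus an assumed overhead fit in the given RAM."""
    return model_size_gib(n_params, quant) + overhead_gib <= ram_gib


if __name__ == "__main__":
    mistral_7b = 7.24e9  # approximate parameter count
    for quant in BITS_PER_WEIGHT:
        size = model_size_gib(mistral_7b, quant)
        print(f"{quant:7s} ~{size:5.2f} GiB | "
              f"8 GiB board: {fits_in_ram(mistral_7b, quant, 8)} | "
              f"4 GiB board: {fits_in_ram(mistral_7b, quant, 4)}")
```

Under these assumptions, a 7B model at Q4_K_M lands at roughly 4 GiB of weights, which is why it is comfortable on an 8 GB Pi 5 but not on a 4 GB board, where smaller models are the safer choice.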

Inferencing Mistral 7B Instruct GGUF with llama.cpp (by Data Equity)

We need to start on a Linux-based PC, because converting the raw model to GGML/GGUF format requires more memory than the Raspberry Pi has. If we actually try to do it on the Pi, it will ... The commands involved download and build the llama.cpp project, then download and quantize a model that can run on the Raspberry Pi.

The result is a private, energy-efficient LLM home server on a Raspberry Pi 5 using quantized Mistral models: no cloud, no subscriptions, full local control. In this tutorial, I will demonstrate how to run a large language model on a brand new Raspberry Pi 5, a low-cost and portable computer that can fit in your pocket.
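The workflow described above (build llama.cpp, convert and quantize on the PC, run on the Pi) can be sketched as a dry-run script that only prints the commands. The tool names (`convert_hf_to_gguf.py`, `llama-quantize`, `llama-cli`) match recent llama.cpp releases as far as I know, but older checkouts use different names, so treat them as assumptions and check your own tree. The GGUF file names and the local model directory are hypothetical.

```python
import shlex

# Repository URL assumed for current llama.cpp; verify before cloning.
LLAMA_REPO = "https://github.com/ggml-org/llama.cpp"


def pipeline(hf_model_dir: str, quant: str = "Q4_K_M", threads: int = 4):
    """Return (machine, argv) pairs describing the workflow.

    Nothing is executed here; the list is only meant to be printed and
    reviewed. File names are hypothetical placeholders.
    """
    f16 = "mistral-7b-instruct-f16.gguf"
    out = f"mistral-7b-instruct-{quant.lower()}.gguf"
    return [
        # Clone and build on both machines: the PC needs the conversion
        # tools, the Pi needs its own native binaries.
        ("pc+pi", ["git", "clone", LLAMA_REPO]),
        ("pc+pi", ["cmake", "-S", "llama.cpp", "-B", "llama.cpp/build"]),
        ("pc+pi", ["cmake", "--build", "llama.cpp/build",
                   "--config", "Release", "-j", str(threads)]),
        # Memory-hungry steps stay on the PC.
        ("pc", ["python3", "llama.cpp/convert_hf_to_gguf.py", hf_model_dir,
                "--outfile", f16, "--outtype", "f16"]),
        ("pc", ["llama.cpp/build/bin/llama-quantize", f16, out, quant]),
        # After copying the quantized file to the Pi, run inference there.
        ("pi", ["llama.cpp/build/bin/llama-cli", "-m", out,
                "-p", "[INST] Hello [/INST]", "-n", "64", "-t", str(threads)]),
    ]


def render(steps) -> str:
    """Format the steps as shell-quoted lines prefixed with the machine."""
    return "\n".join(f"[{where}] {shlex.join(argv)}" for where, argv in steps)


if __name__ == "__main__":
    print(render(pipeline("Mistral-7B-Instruct-v0.1")))
```

Downloading a pre-quantized GGUF file skips the conversion and quantization steps entirely, which is the simpler route when a suitable quantization is already published.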

Project Llama Cpp The Ruby Toolbox

Llama C Server A Quick Start Guide
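Once llama.cpp is running in server mode on the Pi, other machines on the network can query it over HTTP. The sketch below assumes the server exposes an OpenAI-style `/v1/chat/completions` endpoint on port 8080 and returns a `usage.completion_tokens` field; the helper names (`chat_request`, `ask`, `tokens_per_second`) are hypothetical and exist only for this example. It uses the Python standard library alone.

```python
import json
import time
import urllib.request


def chat_request(prompt: str, base_url: str = "http://localhost:8080",
                 max_tokens: int = 64) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    body = {
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        base_url + "/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def tokens_per_second(n_tokens: int, seconds: float) -> float:
    """Throughput as generated tokens divided by wall-clock seconds."""
    if seconds <= 0:
        raise ValueError("seconds must be positive")
    return n_tokens / seconds


def ask(prompt: str, **kwargs):
    """Send the request and return (reply_text, end_to_end_tokens_per_second).

    The timing includes prompt processing and network latency, so it is
    lower than the pure generation speed the server itself would report.
    """
    req = chat_request(prompt, **kwargs)
    start = time.perf_counter()
    with urllib.request.urlopen(req) as resp:
        reply = json.load(resp)
    elapsed = time.perf_counter() - start
    text = reply["choices"][0]["message"]["content"]
    n_tokens = reply.get("usage", {}).get("completion_tokens", 0)
    return text, tokens_per_second(n_tokens, elapsed)
```

With a server running, calling `ask("Why run an LLM on a Pi?", base_url="http://<pi-address>:8080")` returns the reply and a rough end-to-end rate. For honest benchmarks, average several runs and keep prompt length and `max_tokens` fixed.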

Llama Cpp Python Quick Guide To Efficient Usage
