Memory Level Parallelism Semantic Scholar

Memory-level parallelism (MLP) is a term in computer architecture referring to the ability to have multiple memory operations pending at the same time, in particular cache misses or translation lookaside buffer (TLB) misses. We now examine the hardware mechanisms for handling cache misses and memory-level parallelism in DRAM and CPUs. The achievable MLP is further limited by the size of the buffers connecting the layers of the memory hierarchy.
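The buffer limit mentioned above can be made concrete with Little's law: sustained bandwidth is at most (outstanding misses × bytes per miss) / latency. The following is a minimal sketch of that bound, not a model of any specific CPU. The function names and the example figures (10 miss buffers, 64-byte lines, 80 ns latency) are illustrative assumptions and do not come from the text.

```python
import math


def max_bandwidth_gb_per_s(outstanding_misses, line_bytes, latency_ns):
    """Little's law upper bound on bandwidth for one requester.

    Bytes per nanosecond is numerically equal to GB/s (10^9 bytes per second).
    """
    if latency_ns <= 0:
        raise ValueError("latency_ns must be positive")
    return outstanding_misses * line_bytes / latency_ns


def misses_needed(target_gb_per_s, line_bytes, latency_ns):
    """Smallest number of concurrently outstanding misses that can
    sustain the target bandwidth at the given latency."""
    if line_bytes <= 0:
        raise ValueError("line_bytes must be positive")
    return math.ceil(target_gb_per_s * latency_ns / line_bytes)


if __name__ == "__main__":
    # Hypothetical core: 10 miss buffers, 64-byte lines, 80 ns miss latency.
    print(max_bandwidth_gb_per_s(10, 64, 80))
    # Misses that must be in flight to reach a hypothetical 20 GB/s.
    print(misses_needed(20, 64, 80))
```

Under these assumed numbers, a single requester with 10 buffers cannot exceed 8 GB/s regardless of how fast the DRAM is, which is why buffer size caps the achievable MLP.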

Abstract: Obtaining high instruction throughput on modern CPUs requires generating a high degree of memory-level parallelism (MLP). MLP is typically reported as a quantitative metric at the DRAM level.

More specifically, the approach tries to maximize both cache-level parallelism (CLP) and memory-level parallelism (MLP). This paper presents different incarnations of our approach and evaluates them using a set of 12 multithreaded applications.

Abstract: Recently proposed processor microarchitectures that generate high memory-level parallelism (MLP) promise substantial performance gains. The performance of memory-bound commercial applications such as databases is limited by increasing memory latencies.
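To illustrate what reporting MLP as a quantitative metric can look like, the sketch below uses one common definition: the average number of misses outstanding, taken over only those cycles in which at least one miss is outstanding. The text does not say which definition the cited papers use, so treat this choice, the function name `average_mlp`, and the `(issue_cycle, complete_cycle)` trace format as assumptions.

```python
def average_mlp(misses):
    """Average number of outstanding misses over cycles with >= 1 outstanding.

    misses: iterable of (issue_cycle, complete_cycle) pairs, treated as
    half-open intervals [issue, complete). Returns 0.0 for an empty trace.
    """
    events = []
    for issue, complete in misses:
        if complete <= issue:
            raise ValueError("each miss must complete after it issues")
        events.append((issue, 1))      # miss becomes outstanding
        events.append((complete, -1))  # miss is no longer outstanding
    events.sort()

    outstanding = 0   # misses in flight right now
    busy_cycles = 0   # cycles with at least one miss in flight
    miss_cycles = 0   # sum over cycles of the number of misses in flight
    prev_cycle = None
    for cycle, delta in events:
        if outstanding > 0:
            span = cycle - prev_cycle
            busy_cycles += span
            miss_cycles += outstanding * span
        outstanding += delta
        prev_cycle = cycle

    return miss_cycles / busy_cycles if busy_cycles else 0.0


if __name__ == "__main__":
    print(average_mlp([(0, 100), (100, 200)]))  # back-to-back misses
    print(average_mlp([(0, 100), (0, 100)]))    # fully overlapped misses
```

Two back-to-back misses give an MLP of 1, while two fully overlapped misses give an MLP of 2; partially overlapped misses fall in between.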

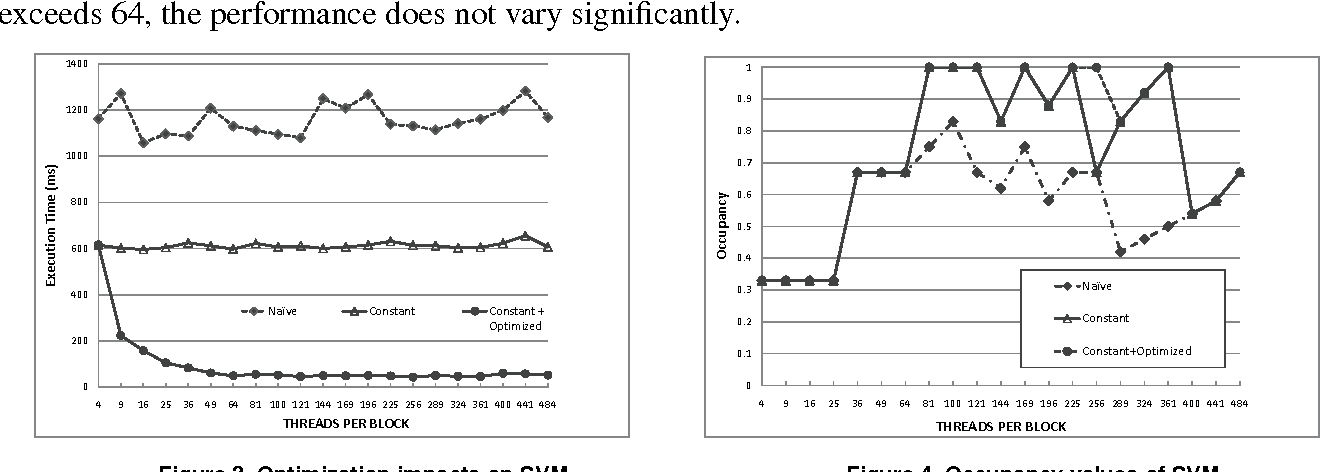

Memory-level parallelism (MLP) can significantly improve performance. Today's out-of-order processors are therefore equipped with complex hardware that allows them to look into the future and to select independent loads that can be overlapped.

I am writing a script to extract the values for up to 12 sequential memory accesses (as opposed to parallel in this case) and feed the data to an SMT solver, which can solve the above equation and give us more precise results.

To provide insights into the performance bottlenecks of parallel applications on GPU architectures, we propose a simple analytical model that estimates the execution time of massively parallel programs.
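The difference between sequential (dependent) accesses and independent loads that can be overlapped can be shown with a toy stall-time estimate. This is a deliberately simplified sketch and is not the analytical model or the equation referred to in the snippets above: it ignores bandwidth limits and compute time, and the function name, the 200-cycle latency, and the overlap limit of 4 are all hypothetical.

```python
def miss_stall_cycles(num_loads, miss_latency, max_overlap, dependent):
    """Toy estimate of cycles spent waiting on cache misses.

    dependent=True  : each load needs the previous load's result
                      (pointer chasing), so misses are fully serialized.
    dependent=False : loads are independent, so up to max_overlap misses
                      are in flight at once and are served in batches.
    """
    if num_loads < 0 or miss_latency < 0 or max_overlap < 1:
        raise ValueError("invalid parameters")
    if dependent:
        return num_loads * miss_latency
    batches = -(-num_loads // max_overlap)  # integer ceiling division
    return batches * miss_latency


if __name__ == "__main__":
    # 12 misses at an assumed 200-cycle latency, at most 4 overlapped.
    print(miss_stall_cycles(12, 200, 4, dependent=True))
    print(miss_stall_cycles(12, 200, 4, dependent=False))
```

With these assumed values, the dependent chain waits 2400 cycles and the independent loads wait 600, which is the gap that out-of-order hardware tries to close by finding independent loads.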


Figure 4 From Memory Level And Thread Level Parallelism Aware Gpu
