Memory Efficient Adaptive Optimization Pdf

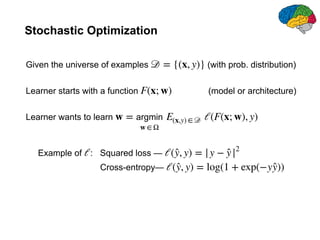

Motivated by these challenges, we describe an adaptive optimization method that retains the benefits of standard per-parameter adaptivity while significantly reducing memory overhead. Our construction is general, flexible, and very simple to implement. The paper, titled "Memory Efficient Adaptive Optimization," is by Rohan Anil and three other authors.
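This page does not spell out the update rule. The sketch below assumes the SM3-II variant described by Anil et al. and uses the rows and columns of an m x n weight matrix as its "cover sets." Each entry estimates its AdaGrad accumulator from the smaller of its row and column statistics. Each row and column then keeps the maximum over its entries. The optimizer state is therefore O(m + n) rather than O(m * n). The function name `sm3_step` and the learning rate are illustrative choices, not taken from the source.

```python
import math


def sm3_step(w, g, row_acc, col_acc, lr=0.1):
    """One SM3-II-style step on an m x n matrix w (updated in place).

    Assumption: the cover sets are the rows and columns of w, so the optimizer
    state is row_acc (length m) plus col_acc (length n) instead of a full
    m x n accumulator. Returns the new (row_acc, col_acc).
    """
    m, n = len(w), len(w[0])
    new_row = [0.0] * m
    new_col = [0.0] * n
    for i in range(m):
        for j in range(n):
            # Estimate this entry's accumulator: min over its covers (row i,
            # column j) of the previous statistics, plus the new squared gradient.
            nu = min(row_acc[i], col_acc[j]) + g[i][j] ** 2
            # AdaGrad-style per-entry step; nu == 0 only when g[i][j] == 0.
            if nu > 0:
                w[i][j] -= lr * g[i][j] / math.sqrt(nu)
            # Each cover keeps the maximum estimate among its members.
            new_row[i] = max(new_row[i], nu)
            new_col[j] = max(new_col[j], nu)
    return new_row, new_col
```

For example, starting from zero statistics, `row, col = sm3_step(w, g, [0.0] * m, [0.0] * n)` performs one step. The returned lists are passed back in on the next step. Only these two vectors persist between steps, which is where the memory saving comes from.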

In very large applications, this memory overhead restricts both the size of the model and the number of examples in a mini-batch. We describe a novel, simple, and flexible adaptive optimization method with a sublinear memory cost. It retains the benefits of per-parameter adaptivity while allowing for larger models and mini-batches. The CAME paper first studies a confidence-guided strategy to reduce the instability of existing memory-efficient optimizers. Based on this strategy, it proposes CAME to achieve two goals simultaneously: fast convergence, as in traditional adaptive methods, and low memory usage, as in memory-efficient methods. Through extensive research, design, and evaluation, the system demonstrates its ability to optimize memory utilization, enhance application responsiveness, and reduce unnecessary disk activity, especially on low-memory devices.
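The confidence-guided idea can be sketched under stated assumptions. The paragraph does not give CAME's equations, so everything below reflects our reading and is not the paper's verified implementation: the factored Adafactor-style statistics, the "instability" term (the squared gap between the raw update and its momentum), the extra decay rate `b3`, and all default values. The sketch shrinks the step wherever the update disagrees with its running average, meaning confidence is low. The instability statistic is stored only as row and column vectors.

```python
import math


def init_came_state(m, n):
    """Zero-initialised state for an m x n parameter matrix (assumed layout)."""
    return {
        "r": [0.0] * m, "c": [0.0] * n,      # factored second moment of g^2
        "R": [0.0] * m, "C": [0.0] * n,      # factored instability statistic
        "m": [[0.0] * n for _ in range(m)],  # first moment (momentum)
    }


def factored_ema(prev_r, prev_c, x, beta, eps):
    """EMA of row sums and column sums of x, plus the rank-one estimate r c^T / sum(r)."""
    m, n = len(x), len(x[0])
    r = [beta * prev_r[i] + (1 - beta) * sum(x[i][j] + eps for j in range(n)) for i in range(m)]
    c = [beta * prev_c[j] + (1 - beta) * sum(x[i][j] + eps for i in range(m)) for j in range(n)]
    total = sum(r)
    est = [[r[i] * c[j] / total for j in range(n)] for i in range(m)]
    return r, c, est


def came_step(w, g, state, lr=1e-3, b1=0.9, b2=0.999, b3=0.9999,
              eps1=1e-30, eps2=1e-16, d=1.0):
    """One confidence-guided step on matrix w (updated in place); defaults are placeholders."""
    m, n = len(w), len(w[0])
    g2 = [[x * x for x in row] for row in g]
    state["r"], state["c"], v = factored_ema(state["r"], state["c"], g2, b2, eps1)
    # Raw factored update, clipped so that its RMS does not exceed d.
    u = [[g[i][j] / math.sqrt(v[i][j]) for j in range(n)] for i in range(m)]
    rms = math.sqrt(sum(x * x for row in u for x in row) / (m * n))
    scale = max(1.0, rms / d)
    u = [[x / scale for x in row] for row in u]
    mom = [[b1 * state["m"][i][j] + (1 - b1) * u[i][j] for j in range(n)] for i in range(m)]
    state["m"] = mom
    # Instability: squared deviation of the raw update from the momentum.
    inst = [[(u[i][j] - mom[i][j]) ** 2 for j in range(n)] for i in range(m)]
    state["R"], state["C"], s = factored_ema(state["R"], state["C"], inst, b3, eps2)
    # Larger instability -> larger s -> smaller step (lower confidence).
    for i in range(m):
        for j in range(n):
            w[i][j] -= lr * mom[i][j] / math.sqrt(s[i][j])
```

Note that the momentum here is still a full m x n matrix. Only the two second-order statistics are factored into row and column vectors.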

The document discusses SM3, a memory-efficient adaptive optimization algorithm for machine learning that enhances stochastic gradient descent (SGD) with adaptive preconditioning. Despite the success of the CAME optimizer in training large language models memory-efficiently, some limitations remain to be addressed in future work. Another paper generalizes existing high-probability convergence analyses for AdaGrad and AdaGrad-Norm. Popular algorithms like Adam consume substantial memory. One further work proposes Alada, an adaptive momentum method for stochastic optimization over large-scale matrices. Alada employs a rank-one factorization to estimate the second moment of gradients, with the factors updated alternately to minimize the estimation error.
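A rank-one second-moment estimate with alternating factor updates can be sketched as follows. This is a hypothetical illustration, not Alada's published algorithm. The target construction, the choice to update `u` on even steps and `v` on odd steps, the initial factor value, and every name below are our assumptions. Each factor update is the exact least-squares minimizer of the Frobenius estimation error with the other factor held fixed. Positive factors stay positive, so the square root is always defined. Between steps, only `u` (length m) and `v` (length n) persist for the second moment.

```python
import math


def init_alada_like_state(m, n, init=1e-4):
    """Hypothetical state: rank-one factors u (m), v (n) and an m x n momentum."""
    return {"u": [init] * m, "v": [init] * n, "m": [[0.0] * n for _ in range(m)]}


def alada_like_step(w, g, state, t, lr=1e-3, b1=0.9, b2=0.999, eps=1e-8):
    """Update w in place; the second moment is approximated as u v^T."""
    m, n = len(w), len(w[0])
    u, v = state["u"], state["v"]
    # Per-step target (transient, same size as g): EMA of the current
    # rank-one estimate and the new squared gradient.
    target = [[b2 * u[i] * v[j] + (1 - b2) * g[i][j] ** 2 for j in range(n)]
              for i in range(m)]
    if t % 2 == 0:
        # Least-squares fit of u with v fixed: u_i = sum_j T_ij v_j / ||v||^2
        vv = sum(x * x for x in v)
        u = [sum(target[i][j] * v[j] for j in range(n)) / vv for i in range(m)]
    else:
        # Least-squares fit of v with u fixed: v_j = sum_i T_ij u_i / ||u||^2
        uu = sum(x * x for x in u)
        v = [sum(target[i][j] * u[i] for i in range(m)) / uu for j in range(n)]
    state["u"], state["v"] = u, v
    # Momentum on the raw gradient, then an Adam-like preconditioned step.
    state["m"] = [[b1 * state["m"][i][j] + (1 - b1) * g[i][j] for j in range(n)]
                  for i in range(m)]
    for i in range(m):
        for j in range(n):
            w[i][j] -= lr * state["m"][i][j] / (math.sqrt(u[i] * v[j]) + eps)
```

Calling `alada_like_step(w, g, state, t)` for t = 0, 1, 2, ... alternates between refitting `u` and refitting `v`. Each step therefore reduces the estimation error along one factor at a time.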
