Maximum Likelihood Pdf Normal Distribution Estimation Theory

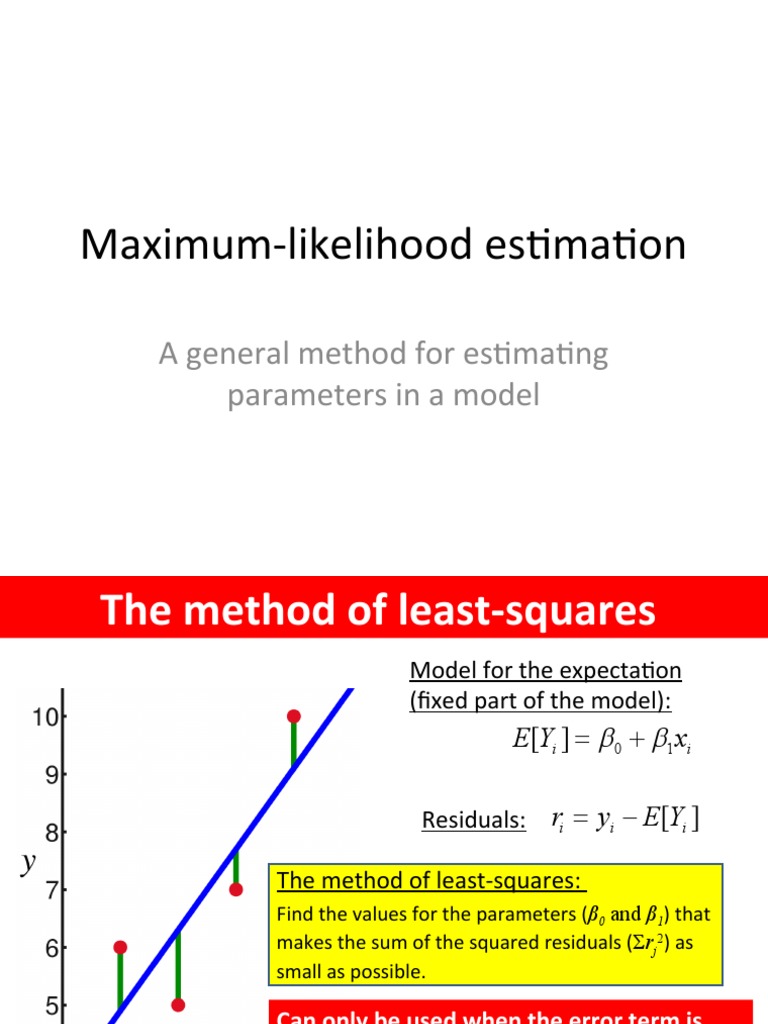

Maximum Likelihood Estimation (PDF): Errors and Residuals, Least Squares

To use a maximum likelihood estimator, first write the log-likelihood of the data given your parameters. Then choose the parameter values that maximize that log-likelihood function. The article begins by defining the likelihood function and its transformation into the log-likelihood, which simplifies the algebra. It then discusses the properties of the MLE, including consistency, efficiency, and …
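The two-step recipe above (write the log-likelihood, then maximize it) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the data are assumed to be i.i.d. exponential, a model chosen here only because its MLE has a known closed form, $\hat\lambda = 1/\bar{x}$, against which the numerical answer can be checked. The helper names, the sample values, and the use of golden-section search as the maximizer are all illustrative choices, not anything the source prescribes.

```python
import math


def exponential_log_likelihood(rate, data):
    """Log-likelihood of i.i.d. exponential data: n*log(rate) - rate*sum(x)."""
    if rate <= 0:
        return -math.inf  # outside the parameter space
    return len(data) * math.log(rate) - rate * sum(data)


def golden_section_maximize(f, lo, hi, tol=1e-9):
    """Maximize a unimodal function f on [lo, hi] by golden-section search."""
    inv_phi = (math.sqrt(5) - 1) / 2  # 1/phi, about 0.618
    a, b = lo, hi
    c = b - inv_phi * (b - a)
    d = a + inv_phi * (b - a)
    fc, fd = f(c), f(d)
    while b - a > tol:
        if fc > fd:
            # The maximum lies in [a, d]; the old c becomes the new d.
            b, d, fd = d, c, fc
            c = b - inv_phi * (b - a)
            fc = f(c)
        else:
            # The maximum lies in [c, b]; the old d becomes the new c.
            a, c, fc = c, d, fd
            d = a + inv_phi * (b - a)
            fd = f(d)
    return (a + b) / 2


# Hypothetical sample, assumed to be i.i.d. exponential.
data = [0.5, 1.2, 0.3, 2.0, 0.8]


# Step 1: the log-likelihood as a function of the parameter alone.
def log_lik(rate):
    return exponential_log_likelihood(rate, data)


# Step 2: choose the parameter value that maximizes it.
rate_hat = golden_section_maximize(log_lik, 1e-6, 100.0)

# Closed-form MLE for comparison: n / sum(x) = 1 / mean(x).
rate_closed_form = len(data) / sum(data)
```

Working on the log scale turns the product of densities into a sum, which is easier to differentiate and avoids numerical underflow for large samples. In practice one would usually minimize the negative log-likelihood with a general-purpose optimizer rather than a hand-written one-dimensional search.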

Maximum Likelihood Estimation Explained with Coin Toss and Normal

In this white paper we use maximum likelihood estimation to estimate the mean and variance of a normal distribution, working through a hypothetical problem. To combine the underlying logic and the practice of ML estimation, I provide a general modeling framework built on the tools of maximum likelihood methods.

Maximum likelihood estimation (MLE) seeks the parameters that best explain a specific dataset $D$. Specifically, we want the parameters $\hat{\theta}_{\mathrm{MLE}} = \arg\max_{\theta} p(D \mid \theta)$ that maximize the likelihood of $D$. Maximum likelihood theory tells us that, asymptotically, the variance-covariance matrix of the estimated parameters equals the inverse of the information matrix, which is the negative of the expected Hessian of the log-likelihood $\ell(\theta)$:

$$
\operatorname{Var}(\hat{\theta}) \approx \mathcal{I}(\theta)^{-1} = \left( -\mathbb{E}\left[ \frac{\partial^{2} \ell(\theta)}{\partial \theta \, \partial \theta^{\top}} \right] \right)^{-1}
$$
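For the normal case described above, the MLEs have a closed form, and the asymptotic covariance statement can be checked numerically. The sketch below is a minimal illustration, assuming i.i.d. normal data (the sample values are made up) and parameterizing by $(\mu, \sigma^2)$. It approximates the Hessian of the log-likelihood by central finite differences at the MLE and inverts its negative (the observed information) to get approximate variances. All function names are hypothetical helpers, not a library API.

```python
import math


def normal_log_likelihood(mu, sigma2, data):
    """Log-likelihood of i.i.d. N(mu, sigma2) data."""
    n = len(data)
    ss = sum((x - mu) ** 2 for x in data)
    return -0.5 * n * math.log(2 * math.pi * sigma2) - ss / (2 * sigma2)


def normal_mle(data):
    """Closed-form MLEs: the sample mean and the 1/n (not 1/(n-1)) variance."""
    n = len(data)
    mu_hat = sum(data) / n
    sigma2_hat = sum((x - mu_hat) ** 2 for x in data) / n
    return mu_hat, sigma2_hat


def numerical_hessian(f, theta, h=1e-4):
    """Central finite-difference Hessian of f at theta (a list of floats)."""
    k = len(theta)

    def f_shifted(i, di, j, dj):
        # Evaluate f with coordinate i shifted by di and coordinate j by dj.
        t = list(theta)
        t[i] += di
        t[j] += dj
        return f(t)

    hess = [[0.0] * k for _ in range(k)]
    for i in range(k):
        for j in range(k):
            hess[i][j] = (
                f_shifted(i, h, j, h) - f_shifted(i, h, j, -h)
                - f_shifted(i, -h, j, h) + f_shifted(i, -h, j, -h)
            ) / (4 * h * h)
    return hess


def invert_2x2(m):
    """Inverse of a 2x2 matrix given as nested lists."""
    (a, b), (c, d) = m
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


# Hypothetical sample, assumed to be i.i.d. normal.
data = [4.2, 5.1, 3.8, 6.0, 5.5, 4.9, 5.3, 4.4]
mu_hat, sigma2_hat = normal_mle(data)

# Observed information: the negative Hessian of the log-likelihood at the MLE.
hess = numerical_hessian(lambda t: normal_log_likelihood(t[0], t[1], data),
                         [mu_hat, sigma2_hat])
observed_info = [[-hess[0][0], -hess[0][1]], [-hess[1][0], -hess[1][1]]]

# Approximate covariance matrix of (mu_hat, sigma2_hat).
cov = invert_2x2(observed_info)
```

For this model the diagonal of `cov` should agree closely with the analytic values $\hat\sigma^2/n$ and $2\hat\sigma^4/n$, and the off-diagonal entry should be near zero. Note that the MLE of the variance divides by $n$ rather than $n-1$, so it is biased in finite samples even though it is consistent.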

Lecture 03: Maximum Likelihood Estimation (PDF), Estimation Theory

Maximum likelihood estimation (Fisher 1922, 1925) is a classic method that finds the value of the estimator “most likely to have generated the observed data, assuming the model specification is correct.”

Maximum likelihood estimation can be applied to a vector-valued parameter. For a simple random sample of $n$ normal random variables, the properties of the exponential function simplify the likelihood: the product of the individual densities collapses into a single exponential of a sum.

Recall that maximum likelihood estimators are a special case of M-estimators. For a maximum likelihood estimator to be consistent, certain regularity conditions must hold and the MLE objective function must identify the population parameters.

Maximum likelihood is by far the most popular general method of estimation. Its widespread acceptance is seen on the one hand in the very large body of research on its theoretical properties, and on the other in the almost unlimited list of applications.
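For the normal sample mentioned above, the simplification can be written out explicitly. The following is a standard textbook derivation, included as a sketch: it treats $\theta = (\mu, \sigma^2)$ as the vector-valued parameter and writes $\ell$ for the log-likelihood. Multiplying the $n$ densities combines the exponentials into one:

$$
L(\mu, \sigma^2) = \prod_{i=1}^{n} \frac{1}{\sqrt{2\pi\sigma^2}} \exp\!\left( -\frac{(x_i - \mu)^2}{2\sigma^2} \right) = (2\pi\sigma^2)^{-n/2} \exp\!\left( -\frac{1}{2\sigma^2} \sum_{i=1}^{n} (x_i - \mu)^2 \right)
$$

$$
\ell(\mu, \sigma^2) = \log L(\mu, \sigma^2) = -\frac{n}{2} \log(2\pi\sigma^2) - \frac{1}{2\sigma^2} \sum_{i=1}^{n} (x_i - \mu)^2
$$

Setting each component of the score to zero gives the estimators:

$$
\frac{\partial \ell}{\partial \mu} = \frac{1}{\sigma^2} \sum_{i=1}^{n} (x_i - \mu) = 0 \quad\Longrightarrow\quad \hat{\mu} = \bar{x} = \frac{1}{n} \sum_{i=1}^{n} x_i
$$

$$
\frac{\partial \ell}{\partial \sigma^2} = -\frac{n}{2\sigma^2} + \frac{1}{2\sigma^4} \sum_{i=1}^{n} (x_i - \mu)^2 = 0 \quad\Longrightarrow\quad \hat{\sigma}^2 = \frac{1}{n} \sum_{i=1}^{n} (x_i - \bar{x})^2
$$

The corresponding information matrix is diagonal:

$$
\mathcal{I}(\mu, \sigma^2) = -\mathbb{E}\left[ \frac{\partial^{2} \ell}{\partial \theta \, \partial \theta^{\top}} \right] = \begin{pmatrix} n/\sigma^2 & 0 \\ 0 & n/(2\sigma^4) \end{pmatrix}
$$

Inverting it gives approximate variances $\sigma^2/n$ for $\hat{\mu}$ and $2\sigma^4/n$ for $\hat{\sigma}^2$.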
