Maximum Likelihood Estimation in Statistical Theory

This article explains the role of maximum likelihood estimation (MLE) in modern statistical practice and its application across diverse scientific disciplines. The parameter estimation story so far: if you are given a model and all the necessary probabilities, you can make predictions. But how do we infer the probabilities for a given model?

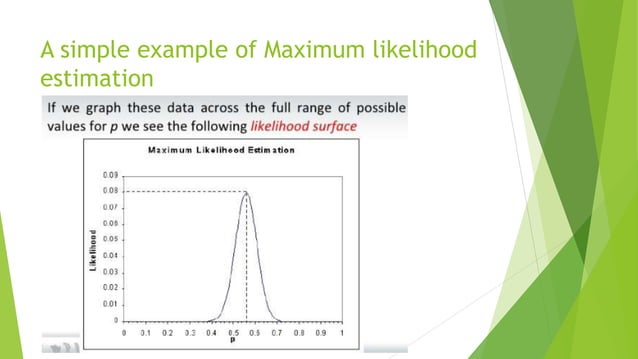

Maximum likelihood estimation (Fisher 1922, 1925) is a classic method that finds the parameter value “most likely to have generated the observed data, assuming the model specification is correct.” Given observed data x of a random variable X, the likelihood function is the density or probability function of the data viewed as a function of the parameter θ. We usually denote the likelihood function by L(θ) = L(θ; x) and its logarithm by ℓ(θ) = log L(θ). The maximum likelihood estimator (MLE) of θ is the value of θ that maximizes the likelihood function, i.e. θ̂ = argmax L(θ), which is the same value that maximizes ℓ(θ) because the logarithm is strictly increasing.

Maximum likelihood is by far the most popular general method of estimation. Its widespread acceptance is seen on the one hand in the very large body of research dealing with its theoretical properties, and on the other in the almost unlimited list of applications. Much of the attraction of maximum likelihood estimators is based on their properties for large sample sizes. We summarize some of the important properties below, saving a more technical discussion of these properties for later.
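As a small illustration of maximizing a log-likelihood, the sketch below assumes a Poisson model, which the text above does not prescribe. The data values, the search interval, and the helper names `poisson_log_likelihood` and `maximize_unimodal` are all hypothetical. It compares a numerical maximizer of the log-likelihood with the closed-form Poisson MLE, which is the sample mean.

```python
import math


def poisson_log_likelihood(lam, data):
    """Poisson log-likelihood: sum_i [x_i * log(lam) - lam - log(x_i!)]."""
    return sum(x * math.log(lam) - lam - math.lgamma(x + 1) for x in data)


def maximize_unimodal(f, lo, hi, tol=1e-8):
    """Golden-section search for the maximizer of a unimodal f on [lo, hi]."""
    inv_phi = (math.sqrt(5) - 1) / 2
    a, b = lo, hi
    while b - a > tol:
        c = b - inv_phi * (b - a)  # left interior point
        d = a + inv_phi * (b - a)  # right interior point
        if f(c) > f(d):
            b = d  # the maximizer lies in [a, d]
        else:
            a = c  # the maximizer lies in [c, b]
    return (a + b) / 2


# Hypothetical observed counts, assumed i.i.d. Poisson(lam).
data = [3, 7, 4, 6, 5, 2, 8, 5]

# Numerical MLE: maximize the log-likelihood over an assumed interval.
lam_numeric = maximize_unimodal(
    lambda lam: poisson_log_likelihood(lam, data), 0.01, 20.0
)

# Closed-form MLE for the Poisson model: the sample mean.
lam_closed = sum(data) / len(data)

print(f"numerical MLE:   {lam_numeric:.6f}")
print(f"closed-form MLE: {lam_closed:.6f}")
```

Working with the log-likelihood rather than the likelihood turns a product over observations into a sum, which is easier to differentiate and numerically more stable. Golden-section search is used here only because the Poisson log-likelihood has a single peak in the rate parameter. Other models may need a general-purpose optimizer.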

To clarify the situation, we present a few known facts that should be kept in mind as one proceeds through the various proofs of consistency, asymptotic normality, or asymptotic optimality of maximum likelihood estimates. Recall that maximum likelihood estimators are a special case of M-estimators. For maximum likelihood estimators to be consistent, certain regularity conditions must be met and the MLE objective function must identify the population parameters.

In maximum likelihood estimation, the models in question are models of the parameters governing a specified probability density function (pdf) or probability function (pf). As is conventional in most statistical texts, a parameter is simply defined as any constant in a pf or pdf that can take on some arbitrary value within a specified range. In summary, the likelihood function is a fundamental concept in statistical inference that quantifies the plausibility of different parameter values given a set of observed data.
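Consistency can be illustrated, though not proved, with a small simulation. The sketch below assumes an exponential model with a hypothetical true rate of 2.0, a model for which the usual regularity conditions hold. The function names, sample sizes, and seed are illustrative choices. It draws samples of increasing size and reports how far the MLE of the rate, n / Σxᵢ, lands from the true value.

```python
import random


def exponential_rate_mle(sample):
    """MLE of the rate of an exponential distribution: n / sum(x_i)."""
    return len(sample) / sum(sample)


def simulate(true_rate, sizes, seed=0):
    """Return {n: MLE of the rate from a fresh sample of size n}."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    results = {}
    for n in sizes:
        sample = [rng.expovariate(true_rate) for _ in range(n)]
        results[n] = exponential_rate_mle(sample)
    return results


true_rate = 2.0  # hypothetical "population" parameter
estimates = simulate(true_rate, sizes=[10, 1_000, 100_000])

for n, est in estimates.items():
    print(f"n={n:>6}  estimate={est:.4f}  abs error={abs(est - true_rate):.4f}")
```

For large n the estimate should typically sit close to the true rate, which is the large-sample behavior that makes maximum likelihood attractive. A single run with one seed is only suggestive. Any individual small-sample estimate can land close to the truth by chance.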

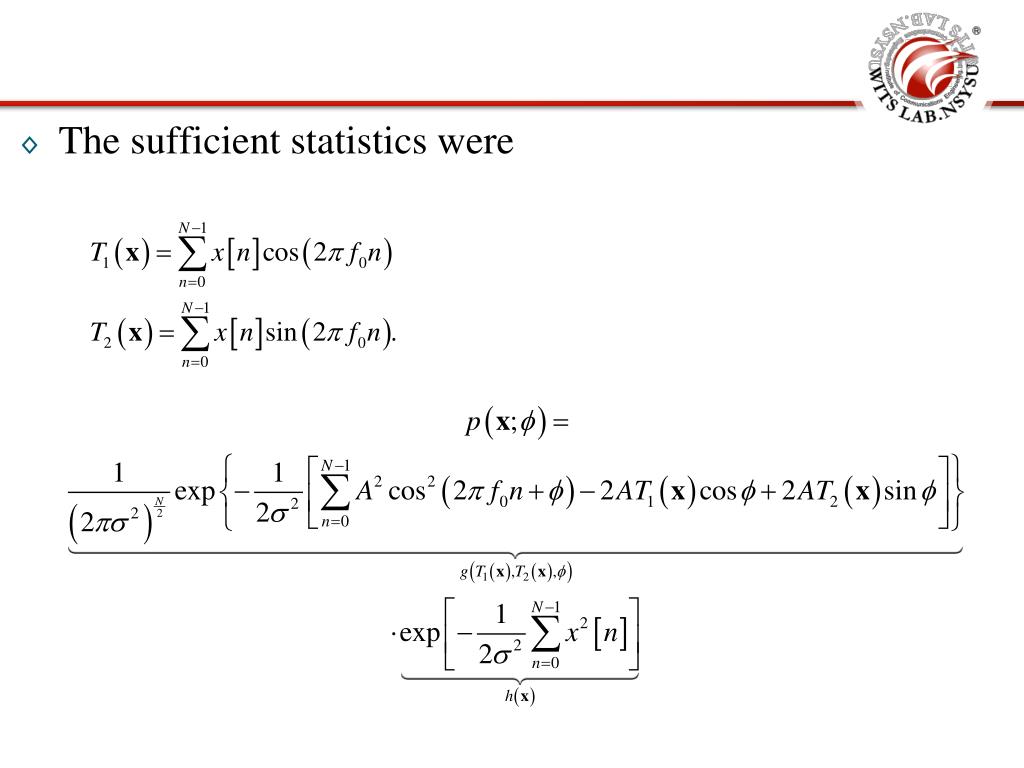

