Maximum Likelihood Estimation Explained With Coin Toss And Normal

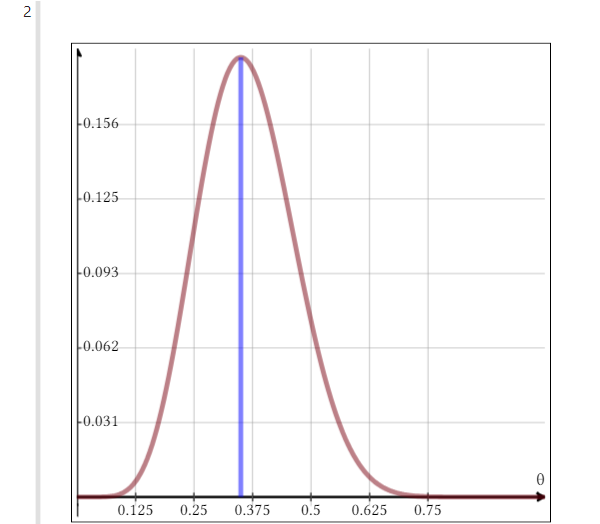

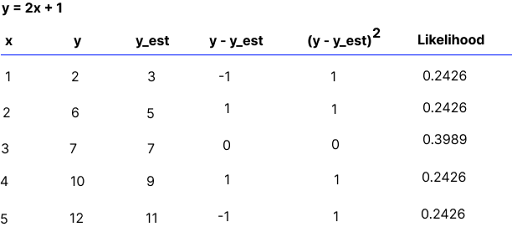

We model a set of observations as a random sample from an unknown joint probability distribution that is expressed in terms of a set of parameters. The goal of maximum likelihood estimation is to determine the parameters for which the observed data have the highest joint probability. The normal distribution has a well-behaved log-likelihood (concave in the mean for a fixed variance), so there is a single global maximum, but more complex models (mixture models, neural networks) may have multiple local maxima.
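To make the normal case concrete, here is a minimal sketch using only the Python standard library. The function names and the synthetic sample (true mean 5, standard deviation 2) are illustrative assumptions, not taken from the original article. It computes the closed-form estimates (the sample mean and the divide-by-n variance) and checks that nudging either parameter lowers the log-likelihood.

```python
import math
import random


def normal_log_likelihood(data, mu, sigma2):
    """Log-likelihood of i.i.d. normal observations with mean mu and variance sigma2."""
    n = len(data)
    squared_error = sum((x - mu) ** 2 for x in data)
    return -0.5 * n * math.log(2 * math.pi * sigma2) - squared_error / (2 * sigma2)


def normal_mle(data):
    """Closed-form MLE: the sample mean and the (divide-by-n) sample variance."""
    n = len(data)
    mu_hat = sum(data) / n
    sigma2_hat = sum((x - mu_hat) ** 2 for x in data) / n
    return mu_hat, sigma2_hat


# Synthetic data for illustration only (assumed true mean 5.0, std dev 2.0).
random.seed(0)
sample = [random.gauss(5.0, 2.0) for _ in range(1000)]

mu_hat, sigma2_hat = normal_mle(sample)
best = normal_log_likelihood(sample, mu_hat, sigma2_hat)

# Moving either parameter away from the MLE should lower the log-likelihood.
for d_mu, d_s2 in [(0.1, 0.0), (-0.1, 0.0), (0.0, 0.3), (0.0, -0.3)]:
    assert normal_log_likelihood(sample, mu_hat + d_mu, sigma2_hat + d_s2) < best

print(mu_hat, sigma2_hat)
```

For models without a closed form, the same log-likelihood function would typically be handed to a numerical optimizer, and that is where local maxima can become a concern.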

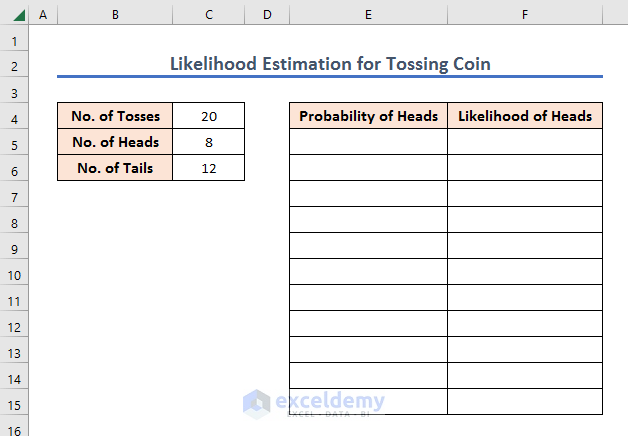

Maximum Likelihood Estimate Example Coin Flip

Suppose a coin is tossed 100 times. Given that there were 55 heads, find the maximum likelihood estimate for the probability of heads on a single toss. Before actually solving the problem, let's establish some notation and terms. We can think of counting the number of heads in 100 tosses as an experiment. We're going to use all of the principles from maximum likelihood estimation, but first we need to point out a subtle difference that can cause confusion both here and when we get to more complicated probabilistic models later.

Learn what maximum likelihood estimation (MLE) is, understand its mathematical foundations, see practical examples, and discover how to implement MLE in Python. In deriving the likelihood, we will typically treat x as given; i.e., we focus on inference about the relationship between x and y, rather than on the distribution of x.
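The coin-flip problem above (55 heads in 100 tosses) can be sketched in a few lines of Python. The function name and the grid resolution are illustrative choices, not part of the original text. The sketch evaluates the log-likelihood on a grid of candidate probabilities, picks the best one, and compares it with the simple ratio of heads to tosses.

```python
import math


def coin_log_likelihood(p, heads, tosses):
    """Log-likelihood of `heads` heads in `tosses` independent flips with P(heads) = p.

    The binomial coefficient is omitted because it does not depend on p
    and therefore does not change where the maximum is.
    """
    return heads * math.log(p) + (tosses - heads) * math.log(1 - p)


heads, tosses = 55, 100

# Candidate values 0.001, 0.002, ..., 0.999 (endpoints excluded to avoid log(0)).
grid = [i / 1000 for i in range(1, 1000)]
p_grid = max(grid, key=lambda p: coin_log_likelihood(p, heads, tosses))

# The ratio of heads to tosses, for comparison with the grid search.
p_closed = heads / tosses

print(p_grid, p_closed)
```

A grid search is used here only because it makes the "pick the parameter that maximizes the likelihood" idea visible; for a single coin the ratio is all that is needed in practice.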

Probability Concepts Explained Maximum Likelihood Estimation

We want to find the likelihood of obtaining the observed data given a candidate phylogenetic tree. The tree that makes the data most probable is the maximum likelihood estimate of the phylogeny. The EM algorithm is defined as an iterative estimation algorithm that can derive maximum likelihood (ML) estimates in the presence of missing or hidden data ("incomplete data").

"Maximum likelihood estimation (MLE) is a method of estimating the parameters of an assumed probability distribution, given some observed data." Formally, we are trying to estimate a parameter $\theta$ of the experiment (here, the probability of a coin flip being heads). We will choose $\hat{\theta} = \arg\max_{\theta} L(\theta; \text{data})$. Here argmax is the argument that produces the maximum, so $\hat{\theta}$ is the value of $\theta$ that causes $L(\theta; \text{data})$ to be maximized.
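As a worked illustration of the argmax definition, the coin example (55 heads in 100 tosses) can be solved by hand. The notation is an assumption made for this sketch: $\theta$ stands for the probability of heads, $L$ for the likelihood, and $\ell$ for its logarithm. The constant binomial coefficient is dropped because it does not affect the location of the maximum.

$$
L(\theta) = \theta^{55}(1-\theta)^{45},
\qquad
\ell(\theta) = \log L(\theta) = 55\log\theta + 45\log(1-\theta)
$$

$$
\frac{d\ell}{d\theta} = \frac{55}{\theta} - \frac{45}{1-\theta} = 0
\;\Longrightarrow\;
55(1-\theta) = 45\,\theta
\;\Longrightarrow\;
\hat{\theta} = \frac{55}{100} = 0.55
$$

$$
\frac{d^2\ell}{d\theta^2} = -\frac{55}{\theta^2} - \frac{45}{(1-\theta)^2} < 0
$$

The second derivative is negative for every $\theta$ in $(0, 1)$, so the stationary point is the unique maximum, and the estimate is simply the observed fraction of heads.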
