Matryoshka Representation Learning (MRL) for ML Tasks and Vector Compression

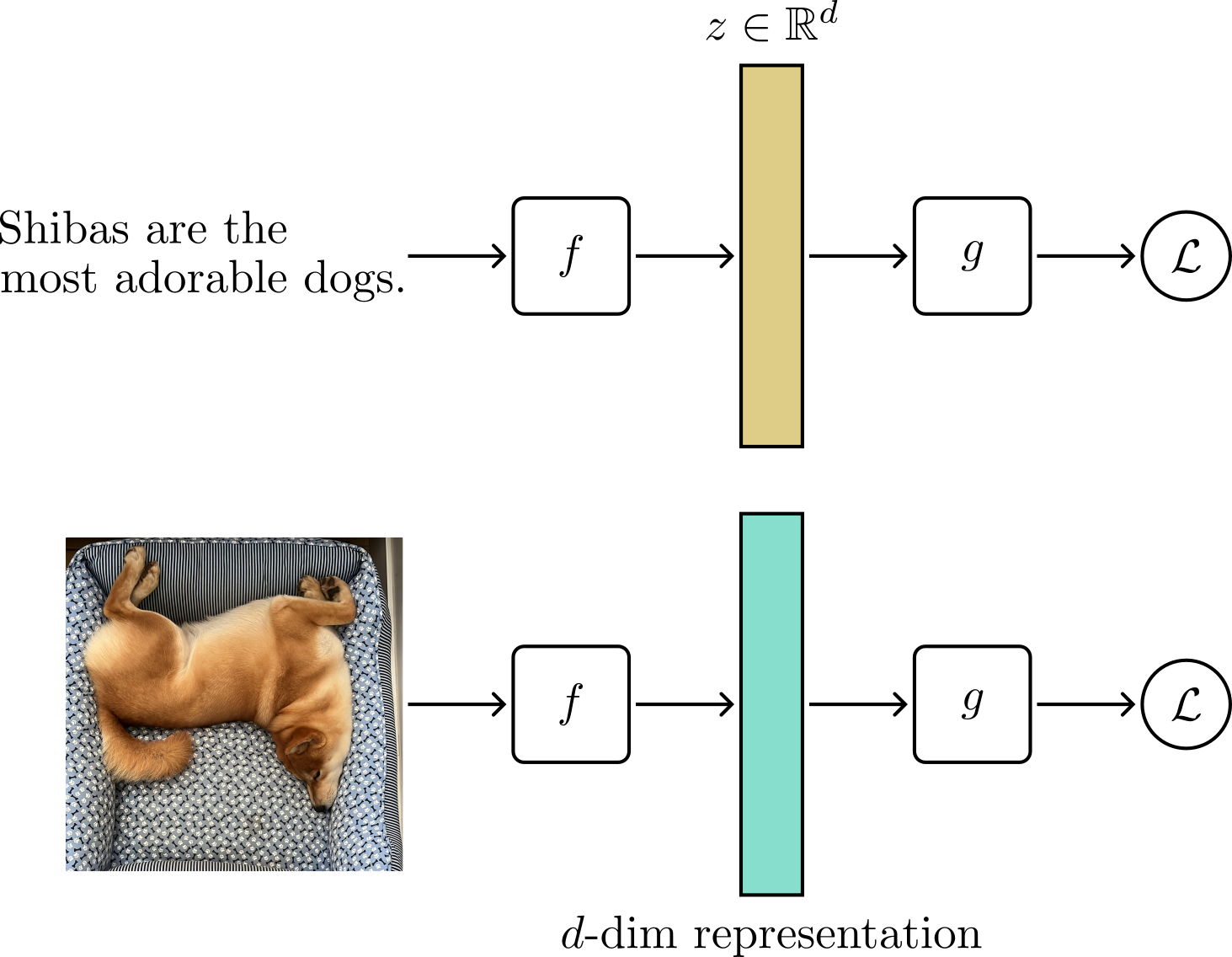

Matryoshka Representation Learning (MRL)

In the words of the MRL paper's authors (Kusupati et al.): "Our main contribution is Matryoshka Representation Learning (MRL) which encodes information at different granularities and allows a single embedding to adapt to the computational constraints of downstream tasks."
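Because an MRL embedding is trained so that its leading coordinates carry the coarsest information, fitting it to a tighter budget can be as simple as keeping a prefix. This is a minimal sketch, assuming an MRL-trained embedding that will be compared with cosine similarity, which is why each prefix is re-normalized to unit length. The function name `truncate_embedding`, the toy vector, and the nested sizes are hypothetical; the sizes are a scaled-down version of the powers-of-two scheme (8, 16, ..., 2048) used in the MRL paper.

```python
import math


def truncate_embedding(embedding: list[float], dim: int) -> list[float]:
    """Keep the first `dim` coordinates and rescale them to unit L2 norm.

    Assumes `embedding` comes from an MRL-trained model, so every prefix
    is itself a usable, coarser representation.
    """
    if not 0 < dim <= len(embedding):
        raise ValueError(f"dim must be between 1 and {len(embedding)}, got {dim}")
    prefix = embedding[:dim]
    norm = math.sqrt(sum(x * x for x in prefix))
    if norm == 0.0:
        return prefix  # an all-zero prefix cannot be normalized
    return [x / norm for x in prefix]


# Hypothetical nested sizes, scaled down from the paper's 8, 16, ..., 2048.
NESTED_DIMS = (2, 4, 8)

full = [0.6, 0.8, 0.0, 0.0, 0.3, -0.1, 0.2, 0.05]
views = {d: truncate_embedding(full, d) for d in NESTED_DIMS}
for d, v in views.items():
    print(d, [round(x, 3) for x in v])
```

The same stored vector serves every budget: a caller picks the largest prefix it can afford instead of running a different model per size.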

What Is Matryoshka Representation Learning (MRL)? | Luminary Blog

Matryoshka Representation Learning is a training technique that produces vector embeddings of varying sizes from the same model: each smaller embedding is a nested prefix of the full one. The authors' repository contains code to train, evaluate, and analyze Matryoshka representations with a ResNet-50 backbone, and its training pipeline uses efficient FFCV dataloaders modified for MRL. This article explores how MRL works, how it is implemented, and how it enables scalable, efficient machine learning models, starting with the motivation behind MRL.
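The nesting comes from the training objective. This is a minimal sketch, assuming a classification setup like the ResNet-50 one described here: for every nested size m, a separate linear head classifies using only the first m coordinates, and the per-size cross-entropy losses are summed with importance weights c_m. It computes only the loss value for a single example in pure Python; a real pipeline would compute it in a framework with automatic differentiation and average it over a batch. The names `matryoshka_loss`, `linear`, and the toy weights are hypothetical, not taken from the authors' code, and the uniform default for c_m is this sketch's choice.

```python
import math
from typing import Optional


def softmax_cross_entropy(logits: list[float], label: int) -> float:
    """Cross-entropy of one example, using the log-sum-exp trick for stability."""
    peak = max(logits)
    log_sum_exp = peak + math.log(sum(math.exp(v - peak) for v in logits))
    return log_sum_exp - logits[label]


def linear(weights: list[list[float]], x: list[float]) -> list[float]:
    """Multiply a (num_classes x len(x)) weight matrix by the vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def matryoshka_loss(
    z: list[float],
    label: int,
    heads: dict[int, list[list[float]]],
    importance: Optional[dict[int, float]] = None,
) -> float:
    """Sum of c_m * CE(W_m @ z[:m], label) over every nested size m.

    heads: maps prefix size m to that size's classifier weights (K x m).
    importance: optional c_m per size; defaults to 1.0 for every m.
    """
    total = 0.0
    for m, weights in heads.items():
        c_m = 1.0 if importance is None else importance[m]
        logits = linear(weights, z[:m])  # this head sees only the first m dims
        total += c_m * softmax_cross_entropy(logits, label)
    return total


# Toy example with hypothetical values: 4-dim embedding, 2 classes, sizes {2, 4}.
z = [1.0, 0.0, 0.5, -0.5]
heads = {
    2: [[1.0, 0.0], [0.0, 1.0]],
    4: [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0]],
}
loss = matryoshka_loss(z, label=0, heads=heads)
print(f"{loss:.4f}")
```

Because every head is penalized, gradient pressure pushes the most useful information into the leading coordinates, which is what makes the prefixes usable on their own.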

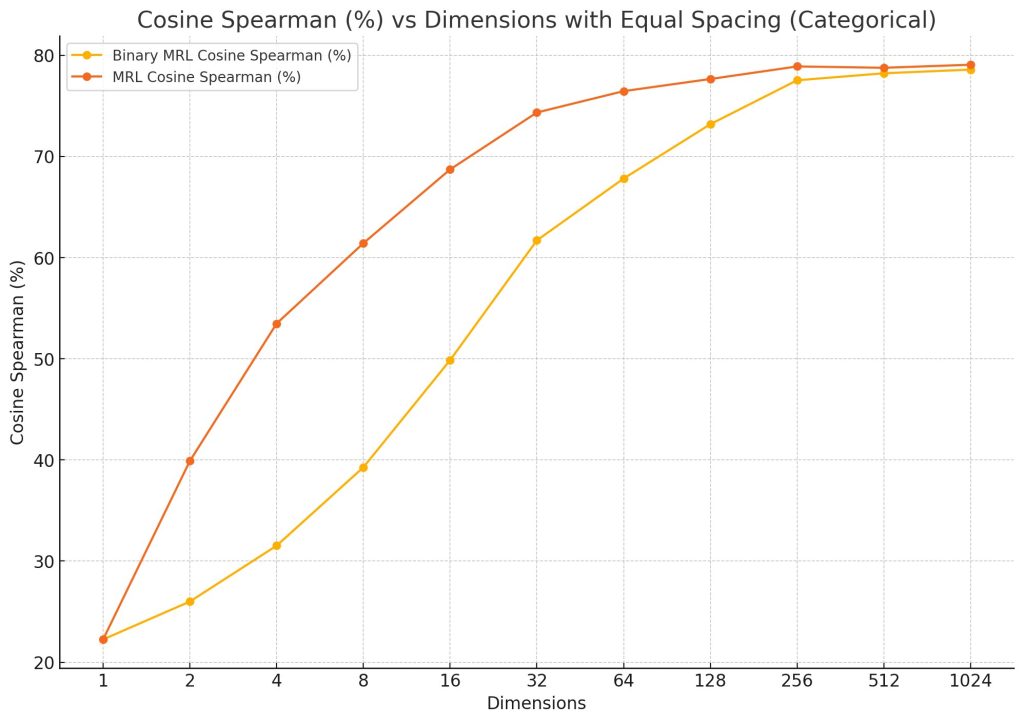

Matryoshka Representation Learning, introduced by Kusupati et al., starts from a paradigm shift: what if information could be encoded hierarchically within nested subspaces of a single embedding vector? MRL is a training technique that makes this possible, so an embedding can be sliced to a smaller prefix and still yield a useful representation. I recently implemented this for a semantic search system and cut our vector search latency by 80% while barely affecting accuracy. More generally, MRL adapts learned representations to varying computational constraints, offering smaller embedding sizes, faster retrieval, and comparable or better accuracy across diverse tasks and modalities.
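Latency savings like these typically come from using a short prefix for the expensive part of search. This is a minimal sketch, assuming MRL-trained embeddings stored at full size: score the whole corpus with a cheap prefix to build a shortlist, then re-rank only that shortlist with the full vectors. It mirrors the shortlist-then-re-rank idea the MRL paper evaluates as adaptive retrieval, but `two_stage_search`, the toy corpus, and the parameter values are hypothetical, and the brute-force scan stands in for the approximate nearest-neighbor index a production system would use.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def two_stage_search(
    query: list[float],
    corpus: dict[str, list[float]],
    shortlist_dim: int,
    shortlist_size: int,
    k: int,
) -> list[str]:
    """Shortlist with a low-dimensional prefix, then re-rank with full vectors."""
    q_prefix = query[:shortlist_dim]
    # Stage 1: cheap scan of the whole corpus using only the leading dims.
    shortlist = sorted(
        corpus,
        key=lambda doc_id: cosine(q_prefix, corpus[doc_id][:shortlist_dim]),
        reverse=True,
    )[:shortlist_size]
    # Stage 2: full-size scoring, but only for the shortlisted documents.
    reranked = sorted(
        shortlist, key=lambda doc_id: cosine(query, corpus[doc_id]), reverse=True
    )
    return reranked[:k]


# Hypothetical 4-dim MRL embeddings.
corpus = {
    "a": [1.0, 0.0, 0.0, 0.0],
    "b": [0.9, 0.1, 0.9, 0.0],
    "c": [0.0, 1.0, 0.0, 0.0],
    "d": [0.95, 0.05, -0.5, 0.0],
}
query = [1.0, 0.0, 1.0, 0.0]
print(two_stage_search(query, corpus, shortlist_dim=2, shortlist_size=3, k=2))
```

With `shortlist_dim=2`, the prefix scan ranks "a" and "d" ahead of "b", and the full-vector re-rank moves "b" to the top. The first stage only has to keep good candidates in the shortlist; the second stage fixes their order.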

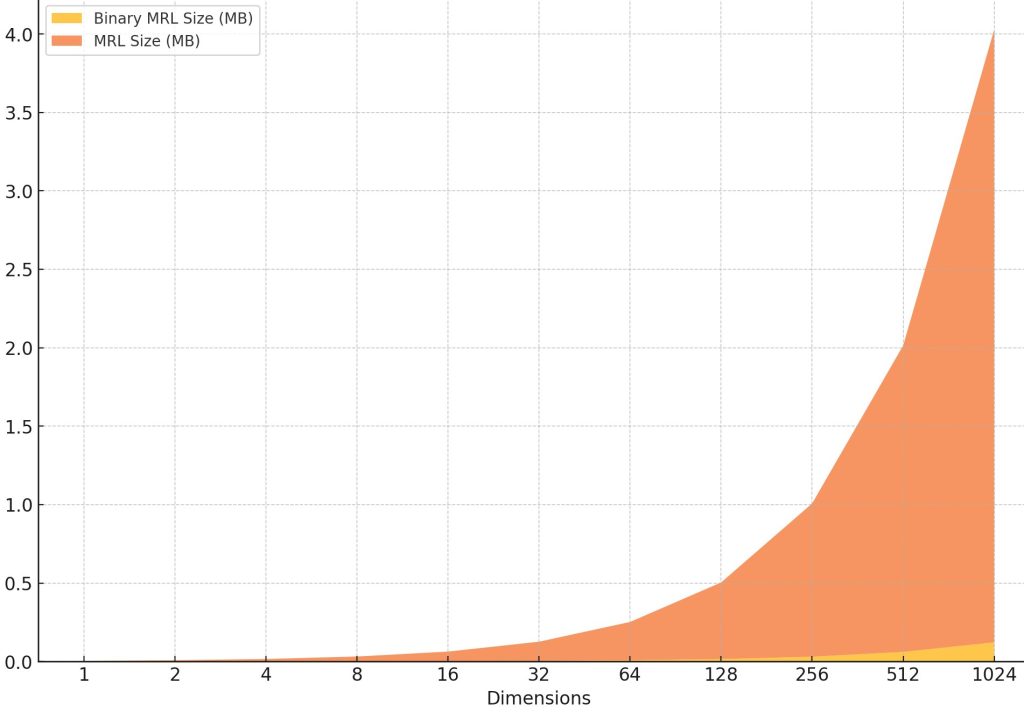

Resource-Efficient Binary Vector Embeddings with Matryoshka
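This heading pairs Matryoshka truncation with binary quantization, though the page gives no details of the method. One common way to combine them, assumed here rather than taken from the referenced article: keep a prefix of the embedding, replace each coordinate with its sign bit, and compare codes by Hamming distance. The helper names `binarize` and `hamming`, the zero threshold, and the toy vectors are hypothetical.

```python
def binarize(embedding: list[float], dim: int) -> int:
    """Truncate to the first `dim` coordinates and keep only their signs.

    Bit i of the result is 1 when coordinate i is positive (a threshold of 0
    is an assumption), so `dim` floats become a `dim`-bit integer code.
    """
    code = 0
    for i, x in enumerate(embedding[:dim]):
        if x > 0:
            code |= 1 << i
    return code


def hamming(a: int, b: int) -> int:
    """Count the differing bits; a smaller distance means more similar codes."""
    return bin(a ^ b).count("1")


# Hypothetical 6-dim MRL embeddings, truncated to 4 dims before binarizing.
doc = [0.3, -0.2, 0.8, -0.1, 0.5, 0.4]
query = [0.2, -0.4, 0.6, 0.3, -0.2, 0.1]
doc_code = binarize(doc, 4)      # 0b0101
query_code = binarize(query, 4)  # 0b1101
print(hamming(doc_code, query_code))
```

The storage arithmetic shows why the two techniques compound: a 1024-dimensional float32 vector takes 1024 × 4 = 4096 bytes, while a 256-bit code built from its first 256 dimensions takes 256 / 8 = 32 bytes, a 128× reduction. These dimensions are illustrative only.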
