Matryoshka Representation Learning And Adaptive Semantic Search

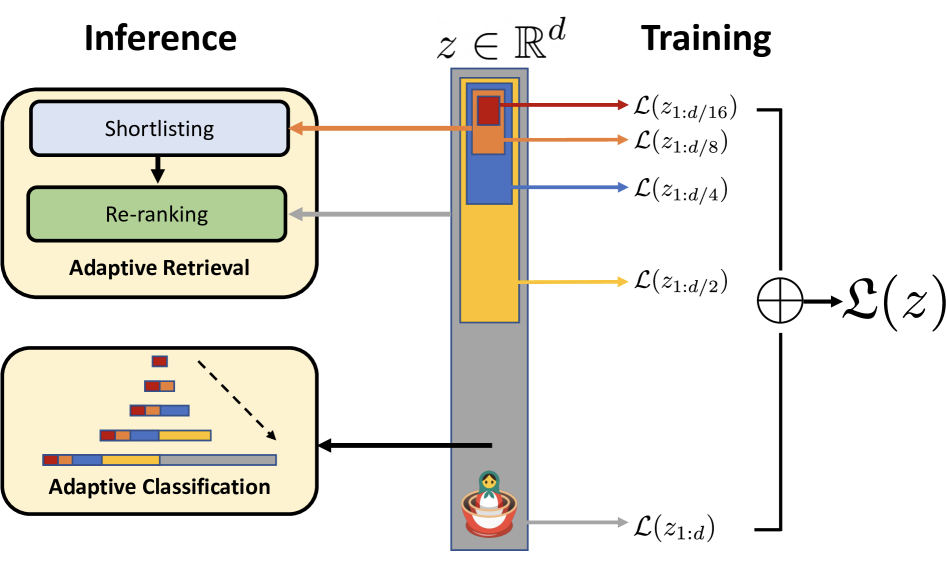

Our main contribution is Matryoshka Representation Learning (MRL), which encodes information at different granularities and allows a single embedding to adapt to the computational constraints of downstream tasks. MRL and AdANNS: Matryoshka Representation Learning for Web-Scale Adaptive Semantic Search.
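Because every nested prefix of an MRL embedding is trained to be usable on its own, adapting one embedding to a smaller compute budget amounts to slicing it. The minimal pure-Python sketch below assumes the model has already produced a full-width embedding. The vectors and the helper names `truncate_embedding` and `cosine` are hypothetical, and re-normalizing the prefix before comparing is a common convention for cosine similarity rather than something MRL itself prescribes.

```python
import math
from typing import List, Sequence


def truncate_embedding(vec: Sequence[float], dim: int) -> List[float]:
    """Keep the first `dim` coordinates (the coarse, nested prefix) and L2-normalize them."""
    if not 0 < dim <= len(vec):
        raise ValueError("dim must be between 1 and len(vec)")
    prefix = list(vec[:dim])
    norm = math.sqrt(sum(x * x for x in prefix))
    # Re-normalize so cosine similarity reduces to a plain dot product.
    return [x / norm for x in prefix] if norm > 0 else prefix


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Dot product of two L2-normalized vectors, i.e. their cosine similarity."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical 8-dimensional "full" embeddings; a real MRL model would emit far more dims.
query = [0.9, 0.4, 0.1, 0.05, 0.02, 0.01, 0.01, 0.0]
doc = [0.8, 0.5, 0.2, 0.10, 0.03, 0.02, 0.00, 0.01]

for d in (2, 4, 8):
    q, x = truncate_embedding(query, d), truncate_embedding(doc, d)
    print(d, round(cosine(q, x), 3))
```

The loop shows how the similarity estimate changes as more dimensions are kept. With a trained MRL model, the short prefixes are optimized to remain useful on their own, which is what makes this cheap slicing worthwhile.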

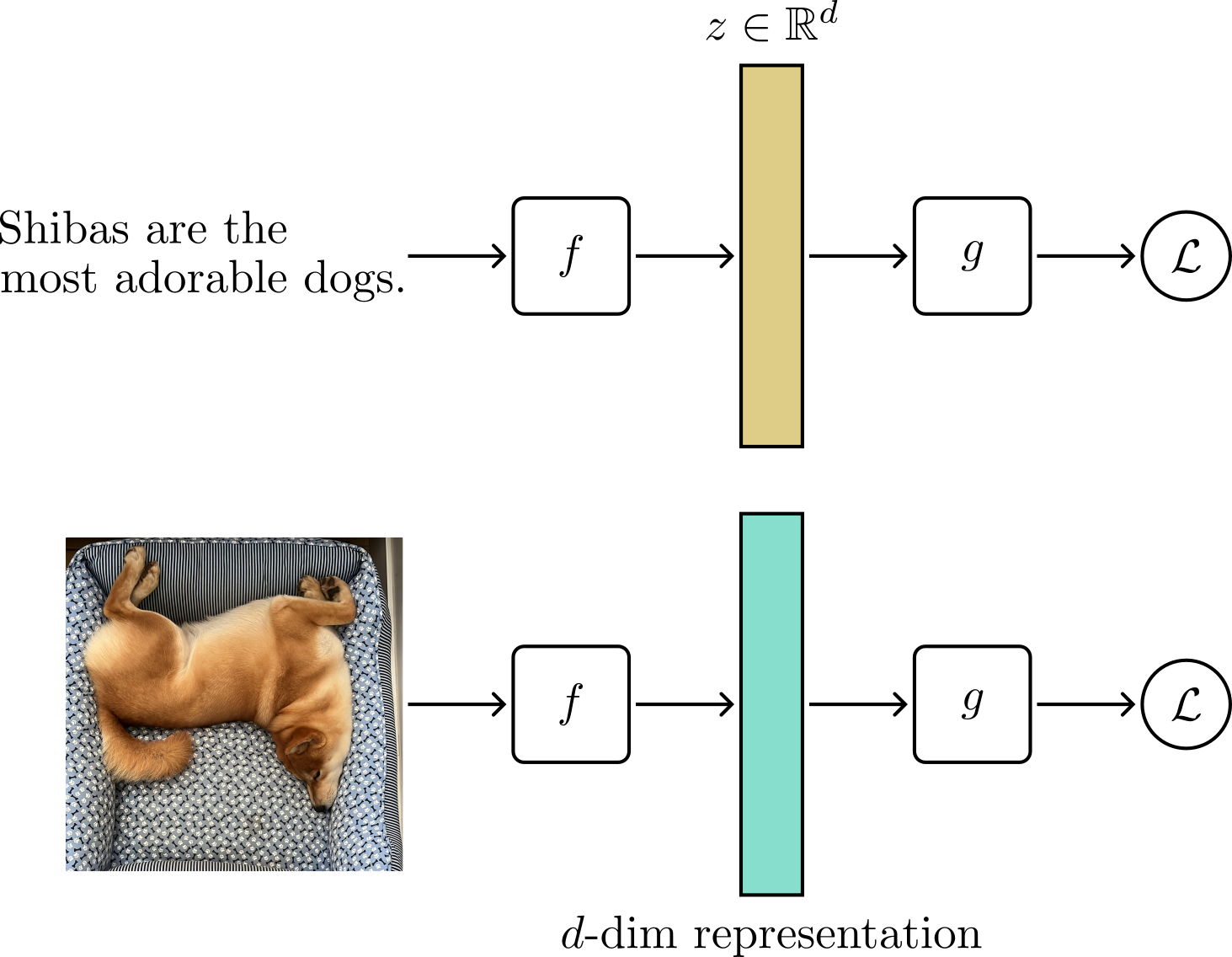

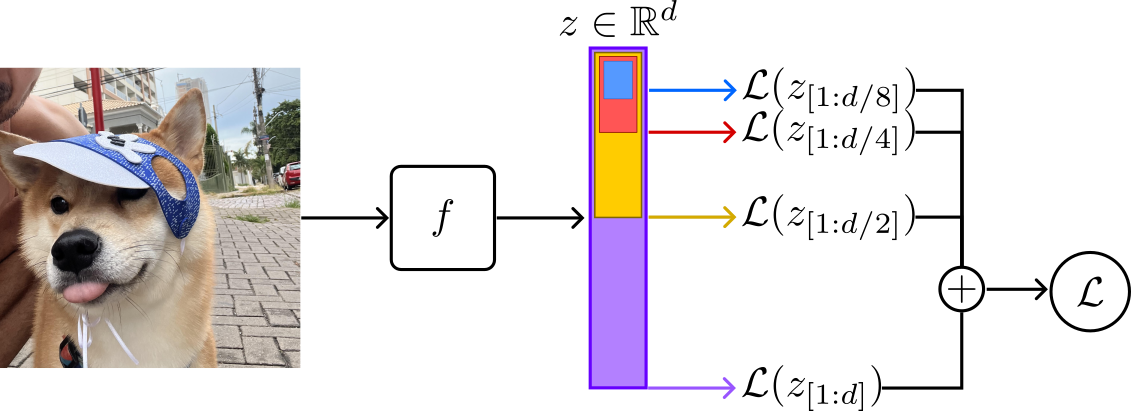

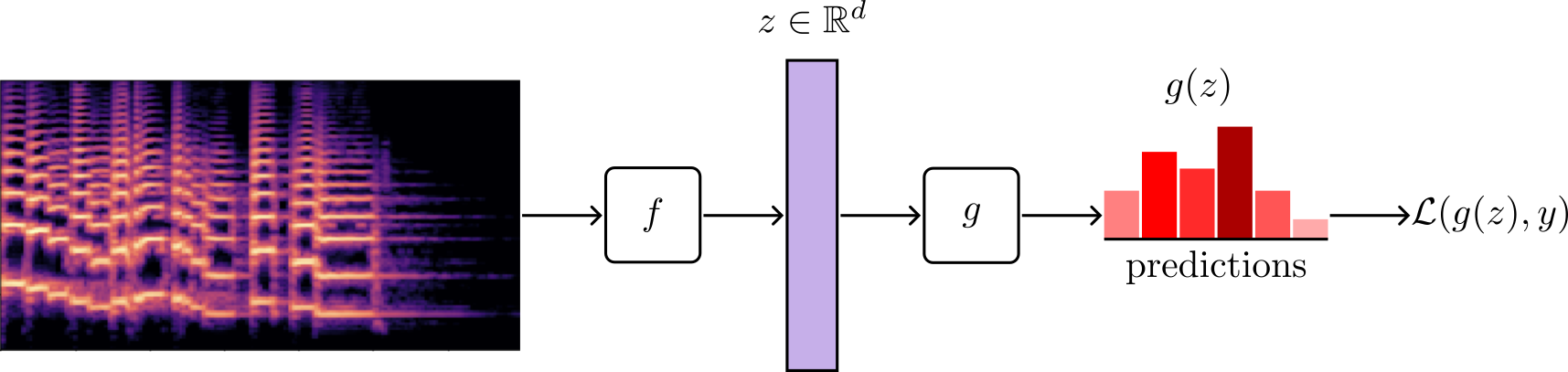

This repository contains code to train, evaluate, and analyze Matryoshka representations with a ResNet-50 backbone. The training pipeline uses efficient FFCV dataloaders modified for MRL.

In this thesis, we propose Matryoshka Representation Learning (MRL) [64], which learns coarse-to-fine representations with minimal overhead to existing representation learning frameworks, at no additional training or inference cost. In conclusion, we presented MRL, a flexible representation learning approach that encodes information at multiple granularities in a single embedding vector.
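The coarse-to-fine nesting can be written as a training objective: each prefix `z[:m]` of the embedding gets its own linear classifier, and the per-prefix losses are summed, so the shared backbone is pushed to make every prefix useful. The following is a minimal forward-pass sketch of such a Matryoshka loss in pure Python, assuming a classification setup with softmax cross-entropy. The toy nesting sizes, the per-size weights, and the names `matryoshka_loss`, `heads`, and `weights` are illustrative assumptions, not the repository's code.

```python
import math
from typing import Dict, List, Sequence


def softmax_cross_entropy(logits: Sequence[float], label: int) -> float:
    """Numerically stable softmax cross-entropy for a single example."""
    max_logit = max(logits)
    log_sum_exp = max_logit + math.log(sum(math.exp(l - max_logit) for l in logits))
    return log_sum_exp - logits[label]


def linear(weights: List[List[float]], x: Sequence[float]) -> List[float]:
    """Multiply a (num_classes x dim) weight matrix by a dim-length vector."""
    return [sum(w * v for w, v in zip(row, x)) for row in weights]


def matryoshka_loss(z: Sequence[float], label: int,
                    heads: Dict[int, List[List[float]]],
                    weights: Dict[int, float]) -> float:
    """Weighted sum of cross-entropy losses, one per nested prefix z[:m].

    heads[m] is a separate linear classifier that only sees the first m dims;
    weights[m] is its relative importance (defaults to 1.0 if missing).
    """
    total = 0.0
    for m, head in heads.items():
        if any(len(row) != m for row in head):
            raise ValueError(f"head for size {m} must have rows of length {m}")
        logits = linear(head, z[:m])
        total += weights.get(m, 1.0) * softmax_cross_entropy(logits, label)
    return total


z = [1.0, -0.5, 0.25, 2.0]  # hypothetical 4-dim embedding from a backbone
heads = {
    2: [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]],  # 3 classes, sees z[:2]
    4: [[1.0, 0.0, 0.0, 0.5], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0]],  # sees all 4 dims
}
print(matryoshka_loss(z, label=0, heads=heads, weights={2: 1.0, 4: 1.0}))
```

In an actual training loop this sum would be computed by an autodiff framework over a batch, so that gradients from every prefix flow into the shared backbone; the sketch only evaluates the loss for one example.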

MRL is particularly effective for tasks like semantic search, information retrieval, and multilingual processing, and for any application that needs nuanced representations of data across different granularities. Matryoshka Representation Learning trains embedding models whose embeddings remain useful after truncation to much smaller sizes, which allows considerably faster (bulk) processing. With MRL, we do not train separate models for each dimension. Instead, we produce a single full vector and structure it so that it can be sliced to different sizes: early dimensions carry the core semantics, and later dimensions add finer detail. These coarse-to-fine nested representations come at no training or inference overhead to existing frameworks.
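Truncation also enables a coarse-to-fine search pattern: score the whole corpus cheaply with a short prefix, then rerank only a small shortlist with the full vector. The sketch below is a brute-force illustration of that two-stage idea in pure Python, assuming the query and documents are MRL embeddings. The function name `adaptive_search` and its parameters `coarse_dim` and `shortlist_size` are hypothetical, and a production system would use an approximate nearest-neighbor index rather than a linear scan.

```python
import math
from typing import List, Sequence


def normalize(v: Sequence[float]) -> List[float]:
    """L2-normalize a vector (zero vectors are returned unchanged)."""
    n = math.sqrt(sum(x * x for x in v))
    return [x / n for x in v] if n > 0 else list(v)


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def adaptive_search(query: Sequence[float], corpus: List[Sequence[float]],
                    coarse_dim: int, shortlist_size: int, k: int) -> List[int]:
    """Return indices of the top-k documents using a two-stage, prefix-then-full search."""
    # Stage 1: cheap scoring of every document with only the first `coarse_dim` coordinates.
    q_coarse = normalize(query[:coarse_dim])
    coarse_scores = [(dot(q_coarse, normalize(doc[:coarse_dim])), i)
                     for i, doc in enumerate(corpus)]
    shortlist = [i for _, i in sorted(coarse_scores, reverse=True)[:shortlist_size]]
    # Stage 2: rerank only the shortlist with the full-width vectors.
    q_full = normalize(query)
    reranked = sorted(shortlist, key=lambda i: dot(q_full, normalize(corpus[i])), reverse=True)
    return reranked[:k]


corpus = [
    [1.0, 0.0, 0.0, 0.0],
    [0.9, 0.1, 0.5, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.1, 0.9, 0.0, 0.3],
]
query = [0.9, 0.1, 0.5, 0.0]
print(adaptive_search(query, corpus, coarse_dim=2, shortlist_size=2, k=1))  # → [1]
```

Here `coarse_dim` controls the cost of the first pass, while `shortlist_size` trades recall against reranking work; both would need tuning per workload.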
