Markov Decision Process

A Markov decision process (MDP) is a model for sequential decision making under uncertainty. This page covers the definition, elements, optimization objective, and value functions of MDPs, along with solution algorithms and examples for finite and infinite horizons.

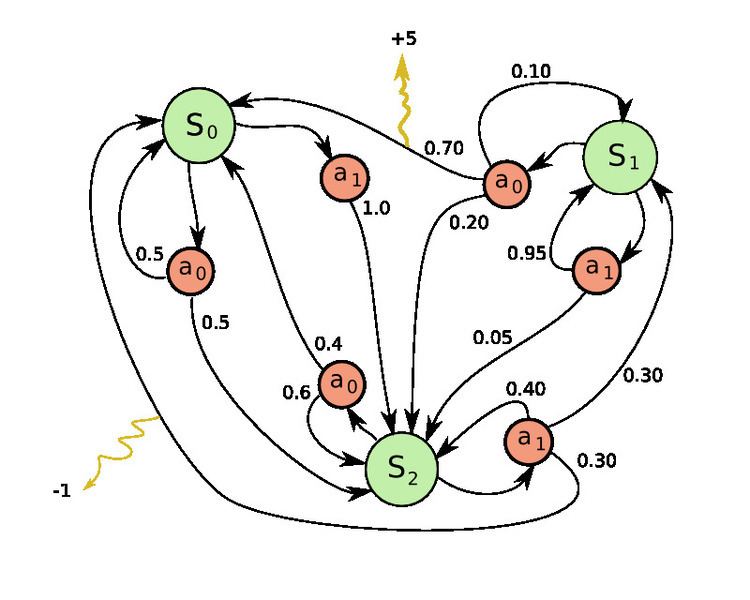

A Markov decision process (MDP) is a formal model for reinforcement learning: a probabilistic temporal model of an agent interacting with its environment. It consists of a set of states S, a set of actions A, a transition function T(s, a, s') giving the probability of reaching state s' after taking action a in state s, a reward function R(s), and a discount factor γ. An MDP is a stochastic mathematical framework built on the Markov property. It is used to model decision-making problems where outcomes are partly random and partly controllable, and to help make optimal decisions within a dynamic system. Closely related concepts are Markov reward processes (MRPs) and value functions.
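The five components listed above can be written down directly as a small data structure. The following is a minimal sketch, not a reference implementation: the `MDP` class, the `step` helper, and the two-state "cool"/"hot" toy problem are all hypothetical names and values chosen for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class MDP:
    """Container for the five MDP components (names are illustrative)."""
    states: tuple   # S: set of states
    actions: tuple  # A: set of actions
    T: dict         # T[(s, a)] -> {s_next: probability}, i.e. T(s, a, s')
    R: dict         # R[s] -> reward received in state s
    gamma: float    # discount factor


def step(mdp, s, a, rng):
    """Sample a next state from T(s, a, .) and return it with its reward."""
    dist = mdp.T[(s, a)]
    next_states = list(dist)
    weights = [dist[sn] for sn in next_states]
    s_next = rng.choices(next_states, weights=weights, k=1)[0]
    return s_next, mdp.R[s_next]


# Hypothetical toy problem: an engine that is either "cool" or "hot".
toy = MDP(
    states=("cool", "hot"),
    actions=("slow", "fast"),
    T={
        ("cool", "slow"): {"cool": 1.0},
        ("cool", "fast"): {"cool": 0.5, "hot": 0.5},
        ("hot", "slow"): {"cool": 0.5, "hot": 0.5},
        ("hot", "fast"): {"hot": 1.0},
    },
    R={"cool": 1.0, "hot": -1.0},
    gamma=0.9,
)

rng = random.Random(0)
next_state, reward = step(toy, "cool", "slow", rng)
```

Because the next state depends only on the current state and action, `step` needs no history argument, which is the Markov property expressed in code.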

Markov decision processes are "Markovian" in the sense that they satisfy the Markov property, or memoryless property, which states that the future and the past are conditionally independent given the present. Within this framework, an agent interacts with an environment through states, actions, and rewards to learn optimal behavior over time. Solving an MDP involves defining a policy, a value function, and a return, and several solution methods exist. This setting contrasts with supervised learning, where for each training input x(i) we are told which value y(i) should be the output.
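One common way to solve a small MDP is value iteration, which repeatedly applies the Bellman optimality update until the value function stops changing and then reads off a greedy policy. The sketch below assumes a state-based reward R(s) and a hypothetical two-state toy problem. The function name, the tolerance, and all numbers are illustrative assumptions, not taken from any particular source.

```python
def value_iteration(states, actions, T, R, gamma, tol=1e-8, max_iters=10_000):
    """Approximate the optimal value function V and a greedy policy.

    Update rule: V(s) = R(s) + gamma * max_a sum_{s'} T(s, a, s') * V(s')
    """
    def expected_value(V, s, a):
        # Expected value of the next state after taking action a in state s.
        return sum(p * V[sn] for sn, p in T[(s, a)].items())

    V = {s: 0.0 for s in states}
    for _ in range(max_iters):
        new_V = {
            s: R[s] + gamma * max(expected_value(V, s, a) for a in actions)
            for s in states
        }
        delta = max(abs(new_V[s] - V[s]) for s in states)
        V = new_V
        if delta < tol:
            break

    # Greedy policy: in each state, pick the action with the best expected value.
    policy = {
        s: max(actions, key=lambda a: expected_value(V, s, a))
        for s in states
    }
    return V, policy


# Hypothetical toy problem: an engine that is either "cool" or "hot".
states = ("cool", "hot")
actions = ("slow", "fast")
T = {
    ("cool", "slow"): {"cool": 1.0},
    ("cool", "fast"): {"cool": 0.5, "hot": 0.5},
    ("hot", "slow"): {"cool": 0.5, "hot": 0.5},
    ("hot", "fast"): {"hot": 1.0},
}
R = {"cool": 1.0, "hot": -1.0}

V, policy = value_iteration(states, actions, T, R, gamma=0.9)
```

In this toy problem the greedy policy chooses "slow" in both states, since staying in or returning to "cool" collects the positive reward. With a discount factor below 1 the update is a contraction, so the loop converges for any starting values.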
