Marginal Probability Mass Function

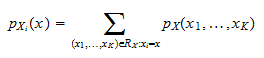

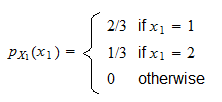

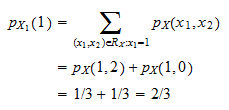

The marginal probability mass function of a discrete random variable is derived from the joint probability mass function of a random vector that contains it. Let $X$ and $Y$ be two discrete random variables with the joint probability mass function $P(X = x, Y = y)$. The marginal probability mass function of $X$ is obtained by summing over all possible values of $y$:

$$p_X(x) = P(X = x) = \sum_{y} P(X = x, Y = y).$$
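The summation over $y$ can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the joint table `joint` and the helper `marginal` are hypothetical names, and the probabilities are made up for the example.

```python
from collections import defaultdict

# Hypothetical joint pmf (illustrative numbers only):
# keys are (x, y) pairs, values are P(X = x, Y = y).
joint = {
    (0, 0): 0.10, (0, 1): 0.20,
    (1, 0): 0.30, (1, 1): 0.40,
}

def marginal(joint_pmf, axis):
    """Sum the joint probabilities over the other variable.

    axis=0 returns the marginal pmf of X, axis=1 that of Y.
    """
    out = defaultdict(float)
    for outcome, prob in joint_pmf.items():
        out[outcome[axis]] += prob
    return dict(out)

p_x = marginal(joint, 0)  # marginal pmf of X
p_y = marginal(joint, 1)  # marginal pmf of Y
print(p_x)
print(p_y)
```

Each marginal sums to 1 (up to floating-point rounding), because the joint probabilities themselves sum to 1.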

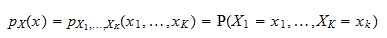

Given a known joint distribution of two discrete random variables, say $X$ and $Y$, the marginal distribution of either variable ($X$, for example) is the probability distribution of $X$ when the values of $Y$ are not taken into consideration. You can find the marginal probability mass function (pmf) of $X$ by summing the joint probabilities over every possible value of $Y$. For example, suppose you had an urn of ten balls with four blue, three black and three red balls.

Joint and marginal mass functions are defined for discrete random variables; joint and marginal density functions are their counterparts for continuous random variables. The joint probability mass function of two discrete random variables $X$ and $Y$ is the function $p_{X,Y} : \mathbb{R}^2 \to [0, 1]$ defined by $p_{X,Y}(a, b) = P[X = a, Y = b]$ for $-\infty < a, b < \infty$.

Given the joint pdf $f(x, y)$ of two continuous random variables $X$ and $Y$, the marginal probability density function (pdf), or simply the marginal density, of $X$ or $Y$ can be obtained by integrating the joint pdf over the other variable; for example, $f_X(x) = \int_{-\infty}^{\infty} f(x, y)\, dy$.

If we wish to obtain information about two or more (discrete) random variables simultaneously, we must introduce the concept of compound (or joint) probability mass functions. For instance, for two random variables $X$ and $Y$, their compound pmf is given by $p(x_i, y_j) = P(X = x_i, Y = y_j)$, and $p_X(x_i) = \sum_j p(x_i, y_j)$ is said to be the marginal pmf for $X$.

As an example with a random number of customers $N$, assume that each customer purchases a drink with probability $p$, independently from other customers, and independently from the value of $N$. Let $X$ be the number of customers who purchase drinks.

We can represent probability mass functions numerically with a table, graphically with a histogram, or analytically with a formula.
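The customer example can be worked through numerically as a marginalization over $N$. The text above does not say how $N$ is distributed, so the sketch below assumes, purely for illustration, that $N$ follows a Poisson distribution with mean `lam`; the values of `lam` and `p` and the truncation point `n_max` are likewise hypothetical. Given $N = n$, the number of purchasers $X$ is Binomial$(n, p)$, so $P(X = x) = \sum_n P(N = n)\, P(X = x \mid N = n)$.

```python
import math

def poisson_pmf(k, lam):
    """P(K = k) for a Poisson random variable with mean lam."""
    return math.exp(-lam) * lam**k / math.factorial(k)

def binomial_pmf(k, n, p):
    """P(K = k) for a Binomial(n, p) random variable."""
    return math.comb(n, k) * p**k * (1 - p)**(n - k)

def marginal_purchases(x, lam, p, n_max=100):
    """Marginal pmf of X, obtained by summing the joint pmf over n.

    ASSUMPTION: N ~ Poisson(lam). The infinite sum is truncated at
    n_max, which is ample for the small lam used below.
    """
    return sum(
        poisson_pmf(n, lam) * binomial_pmf(x, n, p)
        for n in range(x, n_max + 1)
    )

lam, p = 4.0, 0.3  # hypothetical parameter values
for x in range(5):
    # Second column: Poisson(lam * p) pmf, for comparison.
    print(x, marginal_purchases(x, lam, p), poisson_pmf(x, lam * p))
```

Under the Poisson assumption the two printed columns should agree closely, reflecting the standard result that thinning a Poisson count with probability $p$ gives a Poisson count with mean `lam * p`. With a different distribution for $N$ the same summation applies, but the closed form would differ.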
