MapReduce Made Simple

In the world of big data and distributed systems, MapReduce stands out as a fundamental model for processing massive datasets. It efficiently divides work into tasks that run across the machines of a cluster, enabling parallel processing. MapReduce is a framework for writing applications that process huge amounts of data in parallel on large clusters of commodity hardware in a reliable, fault-tolerant manner.
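
To make the idea of dividing work, processing the pieces in parallel, and combining the results concrete, here is a minimal single-machine sketch in Python. It rests on stated assumptions: the helper names (`split_into_chunks`, `map_chunk`, `reduce_partials`, `parallel_sum`) are hypothetical, and worker threads stand in for cluster nodes. Because of CPython's global interpreter lock, this shows the structure of the computation, not a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(data, chunk_size):
    """Divide the input into fixed-size pieces, one per task (hypothetical helper)."""
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]


def map_chunk(chunk):
    """Map task: compute a partial result for one chunk (here, a sum)."""
    return sum(chunk)


def reduce_partials(partials):
    """Reduce task: combine the partial results into the final answer."""
    return sum(partials)


def parallel_sum(data, chunk_size=4, workers=3):
    chunks = split_into_chunks(data, chunk_size)
    # Each worker thread stands in for a cluster node processing one chunk.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(map_chunk, chunks))
    return reduce_partials(partials)


if __name__ == "__main__":
    numbers = list(range(1, 11))
    print(parallel_sum(numbers))  # 55
```

With `chunk_size=4`, the ten numbers become three chunks whose partial sums (10, 26, 19) are combined into 55; on a real cluster each chunk would be processed on a different machine.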

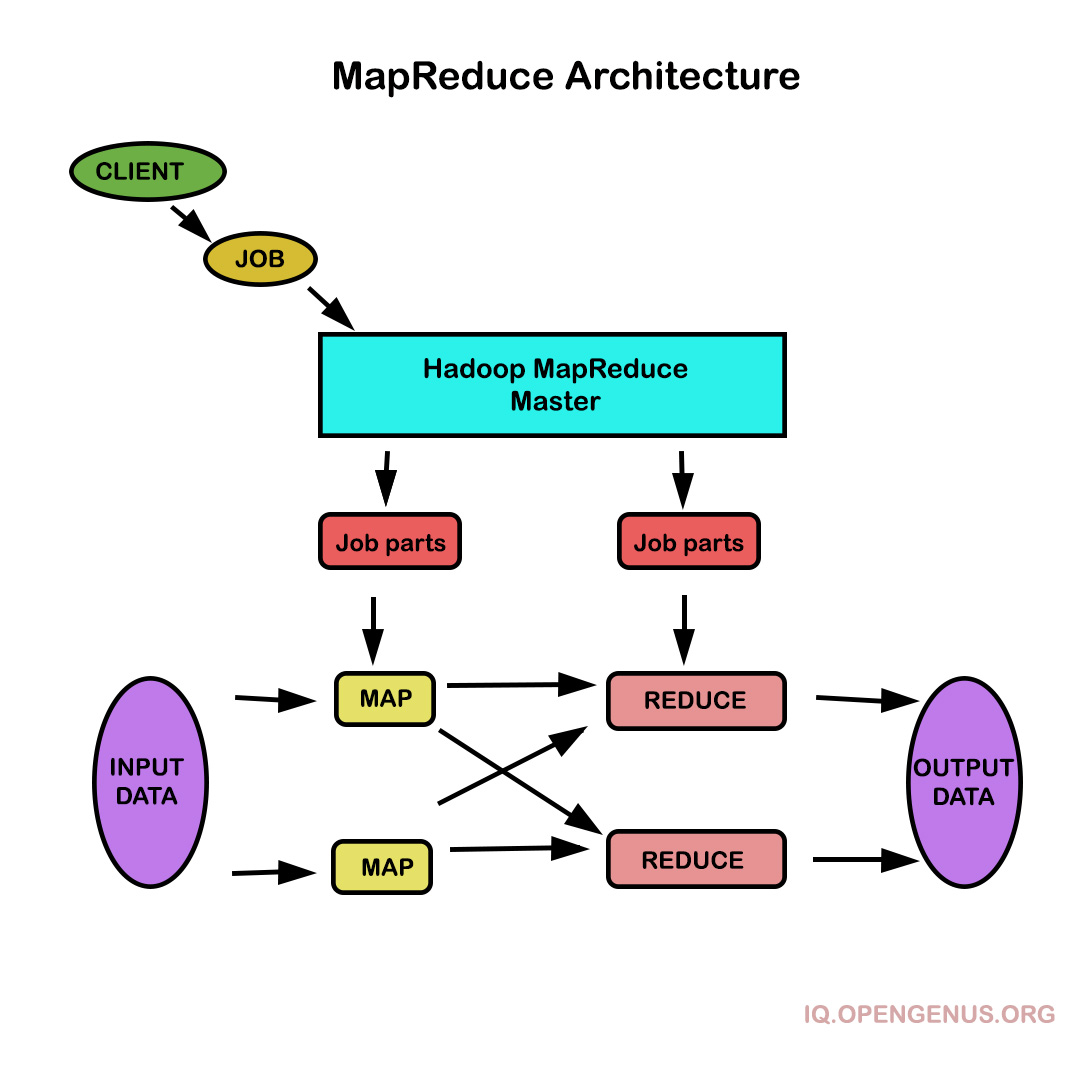

MapReduce Tutorial

MapReduce is composed of two main functions: map(k, v), which filters and transforms input records into intermediate key-value pairs, and reduce(k, v), which aggregates those pairs according to their keys (k). A MapReduce job is broken down into several steps: the input is divided into fixed-size splits, one per mapper, and a record reader turns each split into key-value records; the mappers process those records; the framework then shuffles and sorts the intermediate pairs so that all values for a given key reach the same reducer; and the reducers produce the final output. In this tutorial, we will focus on the MapReduce algorithm, how it works, an example (the word count problem), an implementation of word count in PySpark, MapReduce components, applications, and limitations. MapReduce is a programming model for writing applications that can process big data in parallel on multiple nodes, and it provides analytical capabilities for analyzing huge volumes of complex data. In this article, we'll take a step-by-step journey through Hadoop MapReduce, breaking down each phase of its execution with practical examples of work spread across multiple nodes.
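
The word count problem can be sketched in plain Python so that each step (record reading, map, shuffle and sort, reduce) is visible. This is a minimal single-process illustration, not Hadoop's or PySpark's API: `record_reader`, `mapper`, `shuffle_and_sort`, `reducer` and `word_count` are hypothetical names, the record key is a line number (Hadoop's text input uses a byte offset instead), and lowercasing plus splitting on whitespace is a simplifying assumption.

```python
from collections import defaultdict


def record_reader(text):
    """Turn raw input into (key, value) records: (line number, line text)."""
    return list(enumerate(text.splitlines()))


def mapper(_line_no, line):
    """Map: emit (word, 1) for every word in the line."""
    for word in line.lower().split():
        yield word, 1


def shuffle_and_sort(pairs):
    """Group intermediate values by key and return the groups sorted by key."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return sorted(groups.items())


def reducer(word, counts):
    """Reduce: sum the 1s emitted for a single word."""
    return word, sum(counts)


def word_count(text):
    # Map phase: apply the mapper to every record.
    intermediate = [
        pair
        for line_no, line in record_reader(text)
        for pair in mapper(line_no, line)
    ]
    # Shuffle/sort, then one reducer call per distinct word.
    return dict(reducer(word, counts) for word, counts in shuffle_and_sort(intermediate))


if __name__ == "__main__":
    sample = "the quick brown fox\nthe lazy dog\nthe end"
    print(word_count(sample))
    # {'brown': 1, 'dog': 1, 'end': 1, 'fox': 1, 'lazy': 1, 'quick': 1, 'the': 3}
```

On a real cluster, the map calls for different splits would run on different nodes, and the shuffle would move intermediate pairs over the network to the reducers; here everything runs in one process.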

MapReduce: A Simple Explanation

MapReduce is a programming paradigm and execution framework for processing massive datasets in parallel across thousands of machines without requiring developers to handle the complexity of distributed systems. While HDFS is responsible for storing massive amounts of data, MapReduce handles the actual computation and analysis: it provides a simple yet powerful programming model that lets developers process large datasets in a distributed, parallel manner. In this section, we will describe the MapReduce programming model and explore how to write programs capable of true big data computations.
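
The claim that developers need not handle the distributed machinery can be illustrated with a small, generic driver: the user writes only a mapper and a reducer, and the driver runs the map phase, groups values by key, and runs the reduce phase. This is a hypothetical sketch, not the Hadoop API; `run_mapreduce`, `max_temp_mapper`, `max_temp_reducer` and the sample readings are invented for illustration, and threads stand in for cluster nodes.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def run_mapreduce(records, mapper, reducer, workers=4):
    """Hypothetical mini-driver: the user supplies only mapper and reducer.

    mapper(record) -> iterable of (key, value) pairs
    reducer(key, values) -> result for that key
    """
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # Map phase: run the mapper on every record (threads stand in for nodes).
        mapped = pool.map(lambda record: list(mapper(record)), records)
        # Shuffle phase: group every emitted value under its key.
        groups = defaultdict(list)
        for pairs in mapped:
            for key, value in pairs:
                groups[key].append(value)
        # Reduce phase: one reducer call per key, keys in sorted order.
        keys = sorted(groups)
        results = pool.map(lambda k: reducer(k, groups[k]), keys)
        return dict(zip(keys, results))


def max_temp_mapper(line):
    """User code: parse 'year,temperature' and emit (year, temperature)."""
    year, temp = line.split(",")
    yield year, int(temp)


def max_temp_reducer(year, temps):
    """User code: keep the highest temperature seen for a year."""
    return max(temps)


if __name__ == "__main__":
    readings = ["1950,22", "1950,31", "1951,18", "1951,25", "1950,-3"]
    print(run_mapreduce(readings, max_temp_mapper, max_temp_reducer))
    # {'1950': 31, '1951': 25}
```

Swapping in a different mapper and reducer changes the job without touching the driver, which is the division of labor the programming model is built around.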
