Making Spark Accessible: My Databricks Summer Internship | Databricks Blog

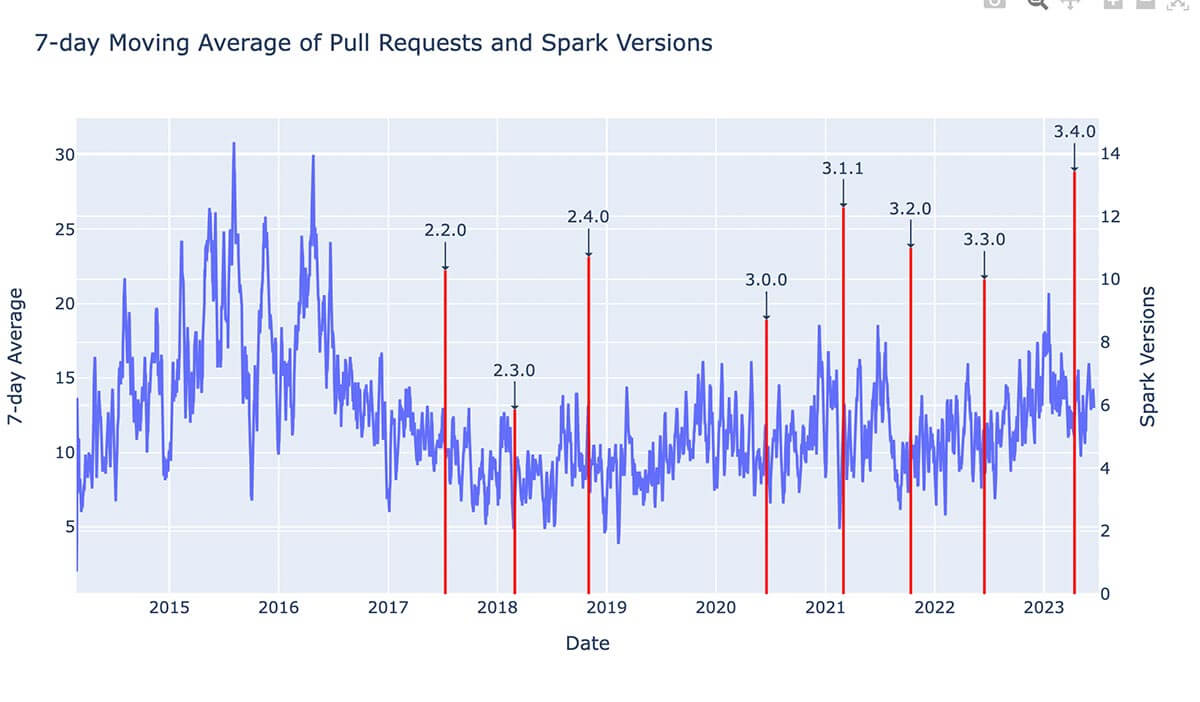

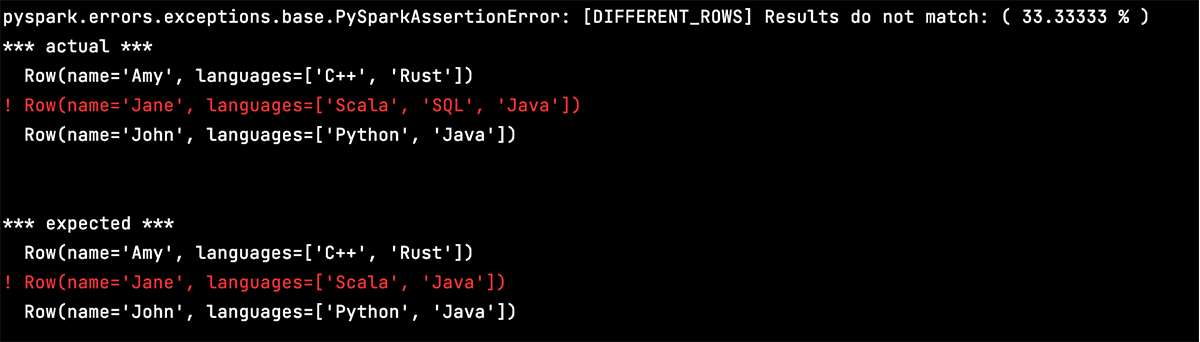

My summer internship on the PySpark team was a whirlwind of exciting events. The PySpark team develops the Python APIs of the open source Apache Spark library and Databricks Runtime. Over the course of the 12 weeks, I drove a project to implement a new built-in PySpark test framework. I had an amazing internship at Databricks working on OSS Apache Spark this summer!

Read all Databricks blog articles by Amanda Liu. "Making Spark Accessible: My Databricks Summer Internship" was posted on the Databricks Engineering Blog on September 26, 2023.

In this blog, I am going to share my experience with the CDC internship process. 2) How did you get into Databricks? What was the selection procedure? Getting into Databricks is similar to ...

Just scheduled my technical screens, hoping for the best. If anyone has taken the technicals in a past recruiting season, it would be massively helpful if you could share your experiences.

To understand how data can be accessed within Databricks, we need to examine Unity Catalog's governance model. Because the entire setup can be somewhat complex, we'll explain in detail what the implications are. The foundation of Unity Catalog is the metastore.

My time at Databricks has left an indelible mark on my professional journey, reinforcing my passion for product management and propelling me toward new horizons.

27 Databricks software engineer intern interview questions and 25 interview reviews. Free interview details posted anonymously by Databricks interview candidates.

In short, this starter kit will help you develop your Spark application locally, deploy it to Databricks Jobs with a single command, and schedule it to run periodically, using Databricks Connect, Databricks Unity Catalog, and Databricks Jobs (Python wheel task).
