Machine Learning System Design: Harmful Content Detection

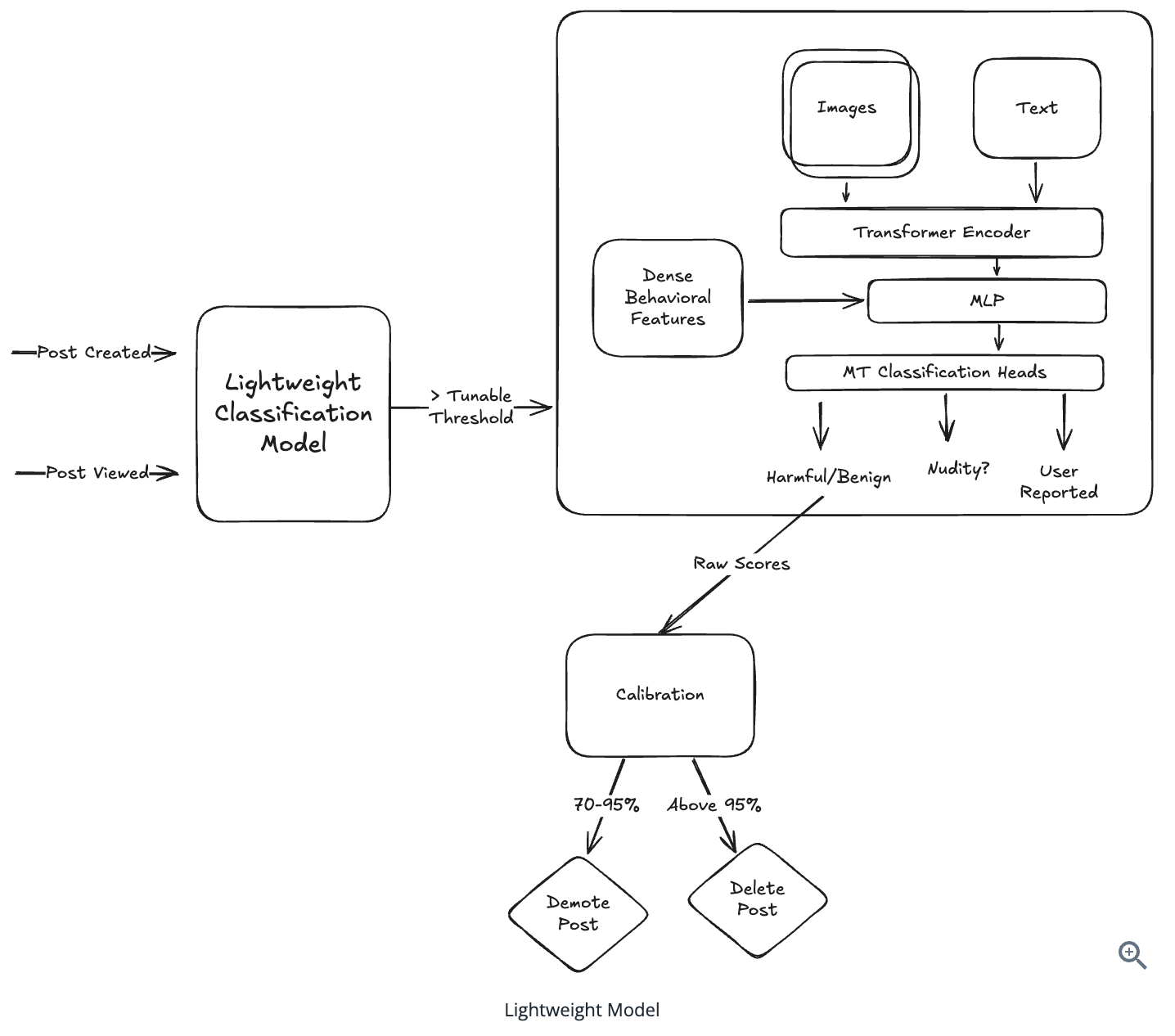

In this chapter, we focus on detecting posts that might contain harmful content. In particular, we design a system that proactively monitors new posts, detects harmful content, and removes or demotes posts that violate the platform's guidelines. Harmful content becomes easier to detect the more people are exposed to it: their reactions (blocking, unliking, commenting, and so on) make any particular piece of content easier to classify over time.
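The remove-or-demote step can be pictured as a simple thresholding policy over a model's predicted probability of harm. The following is a minimal sketch under stated assumptions, not the chapter's implementation: the names `Action` and `decide_action` and the two threshold values are hypothetical and would need tuning against the platform's own guidelines.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    DEMOTE = "demote"
    REMOVE = "remove"


def decide_action(
    harm_probability: float,
    demote_threshold: float = 0.5,  # assumed value, for illustration only
    remove_threshold: float = 0.9,  # assumed value, for illustration only
) -> Action:
    """Map a predicted probability of harm to a moderation action."""
    if not 0.0 <= harm_probability <= 1.0:
        raise ValueError("harm_probability must be in [0, 1]")
    if demote_threshold > remove_threshold:
        raise ValueError("demote_threshold must not exceed remove_threshold")

    # High-confidence predictions lead to removal.
    if harm_probability >= remove_threshold:
        return Action.REMOVE
    # Borderline predictions only reduce the post's reach.
    if harm_probability >= demote_threshold:
        return Action.DEMOTE
    return Action.ALLOW


if __name__ == "__main__":
    for p in (0.1, 0.6, 0.95):
        print(p, decide_action(p).value)
```

Using two thresholds rather than one keeps the costlier action (removal) for cases where the model is most confident, while uncertain cases are only demoted.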

What types of harmful content are we aiming to detect (e.g., hate speech, explicit images, cyberbullying)? What are the potential sources of harmful content (e.g., social media, user-generated content platforms)? Below is a sample "interview-style" answer that walks through the end-to-end design of a system for detecting bad or harmful Facebook posts, covering everything from data gathering. Design a machine learning system that detects and filters harmful content (hate speech, violence, adult content, spam) from user-generated posts and comments in real time. Machine learning (ML) models are widely adopted to detect such content; however, they remain highly vulnerable to adversarial attacks, in which malicious users subtly modify text to evade detection.
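One common mitigation for this kind of text obfuscation is to normalize input before classification. The sketch below is illustrative only: the function name `normalize_text`, the character substitution table, and the specific rules are assumptions, and the transformation is lossy (it also rewrites genuine numbers and hyphenated words), so in practice the normalized string would likely be used alongside the raw text rather than in place of it.

```python
import re
import unicodedata

# Hypothetical table of common character substitutions ("leetspeak").
LEET_MAP = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "7": "t", "@": "a", "$": "s"}
)


def normalize_text(text: str) -> str:
    """Undo a few simple obfuscation tricks before text is classified."""
    # Fold compatibility characters (e.g. full-width letters) and lowercase.
    text = unicodedata.normalize("NFKC", text).lower()
    # Map look-alike digits and symbols back to letters.
    text = text.translate(LEET_MAP)
    # Drop separators wedged between letters: "s.p.a.m" -> "spam".
    text = re.sub(r"(?<=[a-z])[.\-_*]+(?=[a-z])", "", text)
    # Collapse runs of three or more identical characters down to two.
    text = re.sub(r"(.)\1{2,}", r"\1\1", text)
    # Collapse whitespace.
    return " ".join(text.split())


if __name__ == "__main__":
    for sample in ("Fr33 M0N3Y", "s.p.a.m", "sooooo   good"):
        print(repr(sample), "->", repr(normalize_text(sample)))
```

Normalization of this kind only handles known tricks; it does not make a model robust to adversarial edits in general.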

In this video, we design a harmful content detection system from scratch using machine learning. Unlike ad prediction or recommendations, the goal isn't just to optimize for engagement or revenue; it's to ensure user safety by acting on harmful content in near real time. The system must handle a massive influx of multimodal data (text, images, and videos) and stop harmful content before it goes viral.

A large part of this note is a summary of Chapter 5 of the book Machine Learning System Design Interview by Ali Aminian. Several videos discussing the same chapter are also available.

Fairmod aims to improve the overall health and accessibility of the internet; its goal is to achieve 90% accuracy with faster detection. The Content Restriction System (CRS) uses machine learning to limit the spread of harmful content in digital spaces. The current systems are mostly built by private social media giants and there is a lack of a.
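To illustrate one way multimodal signals might be combined, the sketch below uses late fusion: each modality is scored by its own model, and the per-modality scores are merged afterwards. This is an assumption made for illustration, not a method the sources above prescribe; the function name `fuse_scores` and the choice of taking the maximum are hypothetical.

```python
from typing import Mapping, Optional


def fuse_scores(scores: Mapping[str, Optional[float]]) -> float:
    """Combine per-modality harm scores into a single post-level score.

    `scores` maps a modality name (e.g. "text", "image", "video") to that
    modality's predicted probability of harm, or None if the post has no
    content of that type.
    """
    available = [s for s in scores.values() if s is not None]
    if not available:
        # Nothing to score, so there is no evidence of harm.
        return 0.0
    # Taking the maximum means one harmful modality is enough to flag the post.
    return max(available)


if __name__ == "__main__":
    post_scores = {"text": 0.2, "image": 0.95, "video": None}
    print(fuse_scores(post_scores))
```

Taking the maximum is deliberately conservative. It cannot catch posts that are harmful only in combination (for example, a benign image paired with a benign caption that together form an attack), which is a known limitation of scoring modalities independently.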

