Machine Learning K Fold Cross Validation I2tutorials

K-Fold Cross Validation in Data Science and Machine Learning

K-fold cross validation is a statistical technique for measuring the performance of a machine learning model by dividing the dataset into k subsets (folds) of approximately equal size. For each of the k folds, build the model on the other k - 1 folds and validate it on the held-out kth fold. Record the score or error for each fold; the k results are usually averaged into a single performance estimate.
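The fold bookkeeping described above can be sketched in plain Python. This is only an illustration of how the indices are partitioned: the helper name `k_fold_indices`, the sample count, and the seed are assumptions made for the example, not part of any library.

```python
import random

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, val_idx) pairs, one per fold (hypothetical helper)."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Spread the remainder so that fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = indices[start:start + size]                  # the held-out fold
        train_idx = indices[:start] + indices[start + size:]   # the other k - 1 folds
        yield train_idx, val_idx
        start += size

folds = list(k_fold_indices(n_samples=10, k=3))
for i, (train_idx, val_idx) in enumerate(folds, start=1):
    print(f"fold {i}: train on {len(train_idx)} samples, validate on {len(val_idx)}")
```

Every sample lands in the validation set exactly once and in the training set k - 1 times, which is what distinguishes this procedure from repeated random splits.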

K-Fold Cross Validation Technique in Machine Learning

K-fold cross validation is a model evaluation technique that splits the dataset into k equal-sized folds. The model is trained on k - 1 folds and tested on the remaining fold, and the process is repeated k times, each time with a different fold as the test set. This repository contains a practice exercise for implementing k-fold cross validation in machine learning. The exercise uses the iris dataset from the sklearn library. When building machine learning models, one of the most critical questions we face is: "How well will my model perform on unseen data?" This is the question k-fold cross validation is meant to answer.
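As a rough sketch of what such an exercise on the iris dataset might look like, the loop below uses scikit-learn's `KFold` splitter. The choice of `LogisticRegression`, k = 5, and the random seed are assumptions made for illustration; the source does not say which model or settings the exercise uses.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold

X, y = load_iris(return_X_y=True)

# shuffle=True matters here: the iris rows are ordered by class,
# so unshuffled folds would contain very unbalanced class mixes.
kf = KFold(n_splits=5, shuffle=True, random_state=42)

fold_scores = []
for train_idx, test_idx in kf.split(X):
    model = LogisticRegression(max_iter=1000)  # fresh model for every fold
    model.fit(X[train_idx], y[train_idx])      # train on k - 1 folds
    fold_scores.append(model.score(X[test_idx], y[test_idx]))  # test on the held-out fold

mean_score = sum(fold_scores) / len(fold_scores)
print("per-fold accuracy:", [round(s, 3) for s in fold_scores])
print("mean accuracy:", round(mean_score, 3))
```

Creating a new model inside the loop keeps the folds independent, so no fold's test data leaks into a model that is later scored on it.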

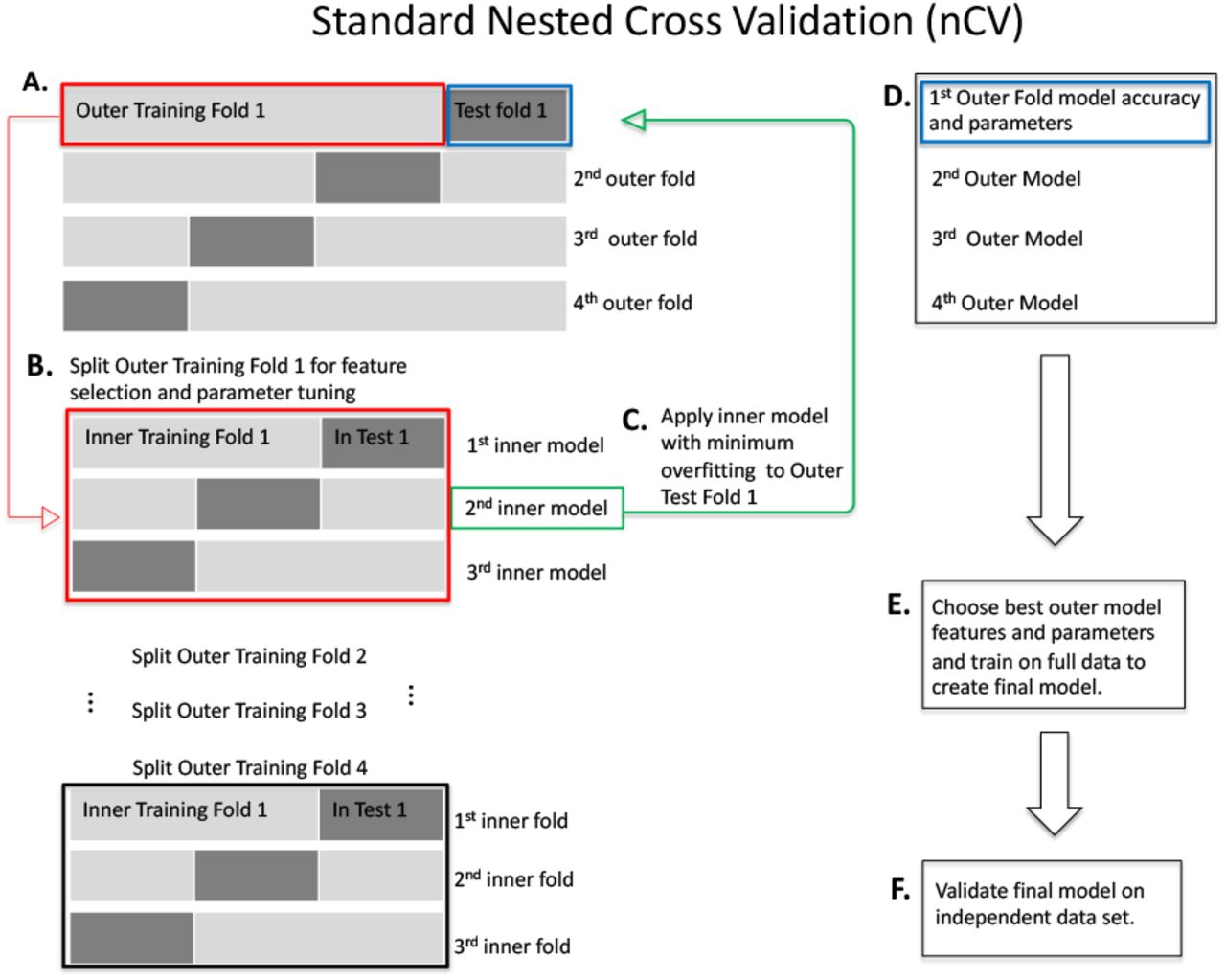

K-Fold Cross Validation in Machine Learning (GeeksforGeeks)

This tutorial is a gentle introduction to the k-fold cross validation procedure for estimating the skill of machine learning models. After completing it, you will know that k-fold cross validation is a procedure used to estimate the skill of a model on new data. It is a resampling technique: the dataset is split into k equal-sized folds, the model is trained on k - 1 folds and validated on the remaining fold, and the process is repeated k times. We will explore what k-fold cross validation is, how it works, and why it matters for detecting overfitting.

K-fold cross validation is a powerful tool for evaluating machine learning models. It gives a more reliable estimate than a simple train-test split because every part of the data is used for testing exactly once, which gives you more confidence that the model will work well on unseen data too.
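To make the comparison with a simple train-test split concrete, here is a small sketch that computes both estimates on the iris dataset. The decision tree classifier, the 80/20 split, and the seeds are assumptions chosen for the example rather than anything prescribed by the text.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)

# Single hold-out split: one number that depends on which rows land in the test set.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0, stratify=y
)
holdout_model = DecisionTreeClassifier(random_state=0).fit(X_train, y_train)
holdout_score = holdout_model.score(X_test, y_test)

# 5-fold cross validation: five scores, one per held-out fold.
# For a classifier and an integer cv, scikit-learn uses stratified folds.
cv_scores = cross_val_score(DecisionTreeClassifier(random_state=0), X, y, cv=5)

print("hold-out accuracy:", round(holdout_score, 3))
print("cv accuracies:", cv_scores.round(3))
print("cv mean and std:", round(cv_scores.mean(), 3), round(cv_scores.std(), 3))
```

The spread of the five fold scores gives a sense of how much a single hold-out estimate could vary with the choice of split, which a lone train-test split cannot show.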
