LVQ

Learning vector quantization (LVQ) is a type of artificial neural network inspired by how the brain processes information. In computer science terms, it is a prototype-based supervised classification algorithm: each class is represented by one or more prototype vectors, and a new input is assigned the class of its nearest prototype. LVQ is the supervised counterpart of vector quantization systems.
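To make the prototype-based idea concrete, here is a minimal sketch of how a trained LVQ model classifies inputs. It assumes squared Euclidean distance and two hand-picked prototypes; the `predict` helper and the prototype coordinates are illustrative, not taken from any library. Each sample simply receives the label of its nearest prototype.

```python
import numpy as np

def predict(X, prototypes, proto_labels):
    """Assign each sample the label of its nearest prototype (squared Euclidean distance)."""
    # distances has shape (n_samples, n_prototypes)
    distances = ((X[:, None, :] - prototypes[None, :, :]) ** 2).sum(axis=2)
    return proto_labels[np.argmin(distances, axis=1)]

# Two hand-picked prototypes, one per class (illustrative values only).
prototypes = np.array([[0.0, 0.0], [5.0, 5.0]])
proto_labels = np.array([0, 1])

X = np.array([[0.5, 1.0], [4.0, 6.0], [2.0, 2.0]])
print(predict(X, prototypes, proto_labels))  # [0 1 0]
```

With Euclidean distance, the boundary between any two prototypes is the perpendicular bisector between them, so adding more prototypes per class yields piecewise-linear decision boundaries.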

Throughout this article, we will unravel the core mechanics of LVQ step by step, exploring how LVQ iteratively refines its prototype vectors using an update rule.

For Python users, the sklearn-glvq library implements LVQ and several of its variants. LVQ constructs a sparse model of the data by representing each class with a small number of prototypes, also called codebook vectors, which are learned from the training data and then used to classify new inputs. Applying LVQ to a classification problem involves four concerns: how the model is represented, the learning procedure, its parameters, and how the data is prepared.

Learning vector quantization is a precursor of the well-known self-organizing maps (also called Kohonen feature maps) and, like them, can be seen as a special kind of artificial neural network. Both types of networks represent a set of reference vectors whose positions are optimized with respect to a given dataset.
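In its simplest form (Kohonen's LVQ1), the update rule works as follows: for a training sample x with label y, find the nearest prototype w; if w carries label y, move it toward the sample, w ← w + α(x − w); otherwise push it away, w ← w − α(x − w). The sketch below implements that rule in NumPy. The function names, the initialization from random training samples, the linearly decaying learning rate α and the synthetic two-blob data are all assumptions chosen for illustration; this is not the sklearn-glvq API.

```python
import numpy as np

def train_lvq1(X, y, n_per_class=1, lr=0.3, epochs=20, seed=0):
    """Minimal LVQ1: pull the winning prototype toward same-class samples,
    push it away from samples of other classes."""
    rng = np.random.default_rng(seed)
    classes = np.unique(y)
    # Initialize each class's prototypes with randomly chosen samples of that class.
    idx = np.concatenate([rng.choice(np.flatnonzero(y == c), n_per_class, replace=False)
                          for c in classes])
    W = X[idx].astype(float)
    W_labels = y[idx]
    for epoch in range(epochs):
        # Learning rate decays linearly from lr toward 0 over the epochs.
        alpha = lr * (1.0 - epoch / epochs)
        for i in rng.permutation(len(X)):
            x = X[i]
            winner = np.argmin(((W - x) ** 2).sum(axis=1))  # best-matching prototype
            if W_labels[winner] == y[i]:
                W[winner] += alpha * (x - W[winner])  # attract: same class
            else:
                W[winner] -= alpha * (x - W[winner])  # repel: different class
    return W, W_labels

def predict(X, W, W_labels):
    """Nearest-prototype classification."""
    d = ((X[:, None, :] - W[None, :, :]) ** 2).sum(axis=2)
    return W_labels[np.argmin(d, axis=1)]

# Toy data: two well-separated Gaussian blobs (synthetic, for illustration).
rng = np.random.default_rng(42)
X = np.vstack([rng.normal(0.0, 0.5, (50, 2)), rng.normal(3.0, 0.5, (50, 2))])
y = np.array([0] * 50 + [1] * 50)

W, W_labels = train_lvq1(X, y, n_per_class=2)
accuracy = (predict(X, W, W_labels) == y).mean()
print(f"training accuracy: {accuracy:.2f}")
```

Because the winner is chosen purely by distance, features on very different scales will dominate it, so standardizing the inputs beforehand is a sensible part of data preparation.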

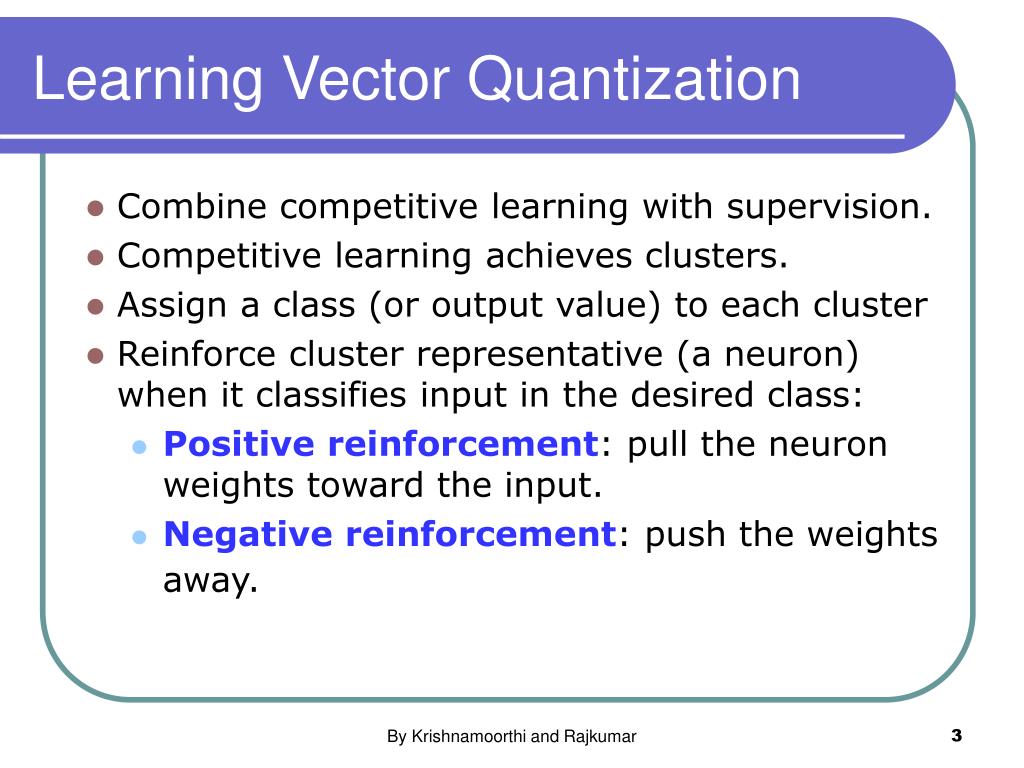

Since its introduction by Kohonen (1990), learning vector quantization has become an important family of supervised learning algorithms. In the training phase, the algorithms determine prototypes that represent the classes in the presented data. Unlike vector quantization (VQ) and Kohonen self-organizing maps (KSOM), LVQ is a competitive network that uses supervised learning. It can be described as a process of classifying patterns in which each output unit represents a class.

An LVQ network is made up of a competitive layer, which includes a competitive subnet, and a linear layer. In the first layer (not counting the input layer), each neuron is assigned to a class.

For more robust results, multiple passes of the LVQ training algorithm are suggested: the first pass uses a large learning rate to prepare the codebook vectors, and the second uses a low learning rate and runs for a long time (perhaps 10 times more iterations).
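The two-layer view and the two-pass training schedule can be sketched together. In this hypothetical NumPy version, the competitive layer returns a one-hot vector marking the nearest prototype, and the linear layer is a fixed 0/1 matrix `W2` that maps each competitive neuron to the class it was assigned to. Training uses the LVQ1 rule in two passes: a short one with a large learning rate (0.3 for 10 epochs) followed by a long one with a small rate (0.03 for 100 epochs, that is, 10 times more). These values, the helper names and the three-blob synthetic data are assumptions, not prescribed settings.

```python
import numpy as np

def competitive_layer(x, W1):
    """Winner-take-all: one-hot vector marking the prototype nearest to x."""
    a1 = np.zeros(len(W1))
    a1[np.argmin(((W1 - x) ** 2).sum(axis=1))] = 1.0
    return a1

def linear_layer(a1, W2):
    """Fixed 0/1 matrix mapping each competitive neuron (subclass) to its class."""
    return W2 @ a1

def lvq1_pass(X, y, W1, proto_class, lr, epochs, rng):
    """One LVQ1 training pass with a linearly decaying learning rate (updates W1 in place)."""
    for epoch in range(epochs):
        alpha = lr * (1.0 - epoch / epochs)
        for i in rng.permutation(len(X)):
            k = np.argmin(((W1 - X[i]) ** 2).sum(axis=1))
            sign = 1.0 if proto_class[k] == y[i] else -1.0  # attract or repel
            W1[k] += sign * alpha * (X[i] - W1[k])

rng = np.random.default_rng(0)
# Synthetic data: three Gaussian blobs, one per class.
centres = np.array([[0.0, 0.0], [3.0, 0.0], [0.0, 3.0]])
X = np.vstack([rng.normal(c, 0.5, (40, 2)) for c in centres])
y = np.repeat(np.arange(3), 40)

# Two competitive neurons (prototypes) per class, initialized from class samples.
proto_class = np.repeat(np.arange(3), 2)
W1 = np.vstack([X[rng.choice(np.flatnonzero(y == c), 2, replace=False)] for c in range(3)])

# W2[c, k] = 1 if prototype k is assigned to class c, else 0.
W2 = (proto_class[None, :] == np.arange(3)[:, None]).astype(float)

# Pass 1: large learning rate, few epochs. Pass 2: small rate, 10x the epochs.
lvq1_pass(X, y, W1, proto_class, lr=0.3, epochs=10, rng=rng)
lvq1_pass(X, y, W1, proto_class, lr=0.03, epochs=100, rng=rng)

preds = np.array([np.argmax(linear_layer(competitive_layer(x, W1), W2)) for x in X])
print("training accuracy:", (preds == y).mean())
```

Keeping `W2` fixed means only the competitive layer is trained; the linear layer just merges several subclass neurons into one output class.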
