Long Termism Vs Existential Risk Ea Forum

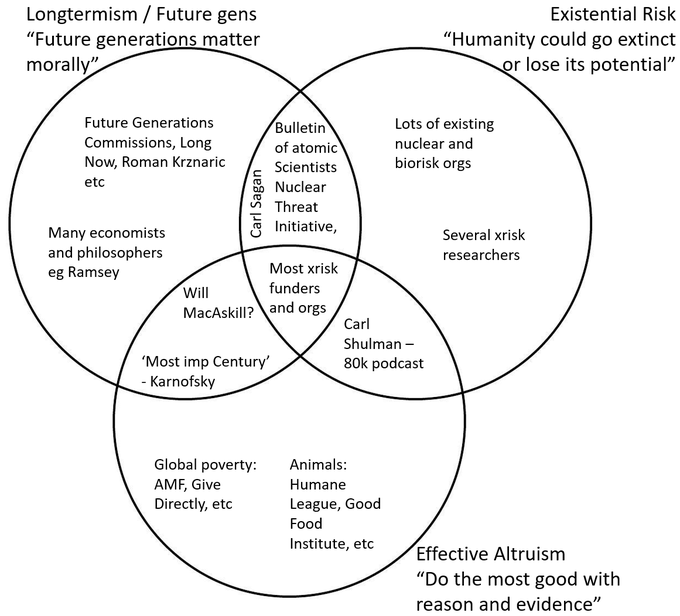

An existential risk is a risk that threatens the destruction of humanity's long-term potential. But s-risks are worrisome not only because of the potential they threaten to destroy, but also because of what they threaten to replace this potential with: astronomical amounts of suffering.

Message testing from Rethink Priorities suggests that "longtermism" and "existential risk" get similarly good reactions from the educated general public, while "AI risk" does not do as well.

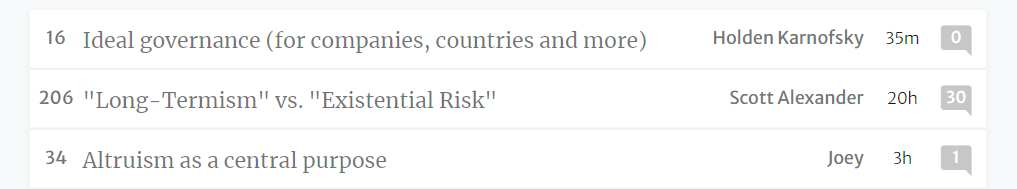

In my book, the sudden shift to "longtermism" in the EA community is a case of wealthy techies getting bored with buying mosquito nets and wanting to play with rockets, then looking for some justification to do just that.

A lot of EAs who prioritize existential risk reduction are making increasingly awkward and convoluted rhetorical maneuvers to use "EAs" or "longtermists" as the main label for people we see as aligned with our goals and priorities. I suspect this is suboptimal and, in the long term, infeasible.

Scott Alexander's "Long-Termism" vs. "Existential Risk" worries that "longtermism" may be a worse brand (though not necessarily a worse philosophy) than "existential risk". Targeted (or narrow) longtermism attempts to positively influence the long-term future by focusing on specific, identifiable scenarios, such as the risks of misaligned AI or an engineered pandemic.

Some people might come to your EA group specifically looking to learn more about longtermism; perhaps they read *The Precipice* and were inspired, or are particularly concerned about pandemic preparedness.

An existential risk is a risk that threatens the destruction of the long-term potential of life.[1] An existential risk could threaten the extinction of humans (and other sentient beings), or it could threaten some other unrecoverable collapse or permanent failure to achieve a potential good state.

While the word "longtermism" itself isn't new, it's a relatively new way of describing the school of thought in moral philosophy discussed here, if only because that school of thought was quite small until recently.

On September 10th, the Secretary-General of the United Nations released a report called "Our Common Agenda". This report seems highly relevant for those working on longtermism and existential risk, and appears to signal unexpectedly strong interest from the UN.